Why Banning ChatGPT Isn't Working -- and What Your AI Policy Should Say Instead

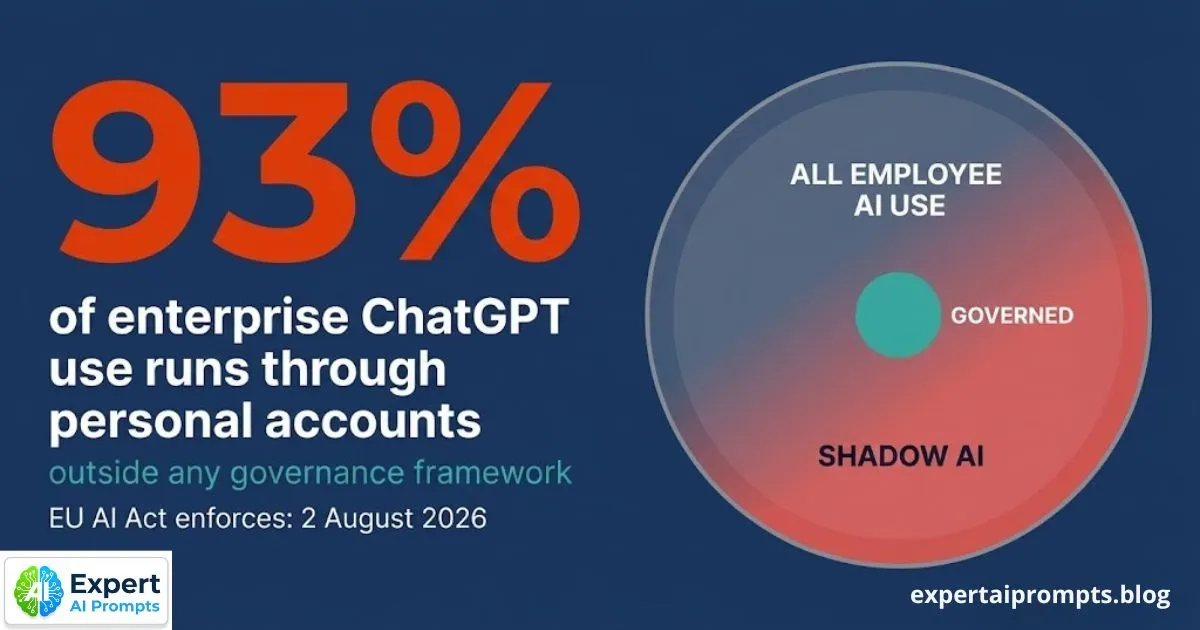

Your ChatGPT ban is not reducing AI use in your organisation. It is reducing reported AI use. The employees who were using personal ChatGPT accounts for work tasks before the ban are still using personal ChatGPT accounts for work tasks. They are simply no longer mentioning it. The data breach risk is the same. The EU AI Act compliance exposure is the same. The IP leakage is the same. The only thing that has changed is that your visibility into the problem has decreased.

This is the prohibition paradox -- and it plays out the same way across organisations of every size and sector that have implemented AI bans without simultaneously building a credible governed alternative. The ban does not eliminate the behaviour. It drives it underground, where it is harder to see, harder to govern, and harder to remediate.

The full statistical picture of Shadow AI -- including why 93% of enterprise ChatGPT use runs through personal accounts -- is in The Shadow AI Problem.

Section 1 - The Evidence: What Happens When Organisations Ban ChatGPT

Multiple organisations have implemented ChatGPT bans -- network-level blocks, updated acceptable use policies, disciplinary consequences for non-compliance. The pattern that follows is consistent.

In the first two to four weeks after a ban, reported AI tool usage in corporate systems drops. IT ticket volumes related to AI tools decrease. The governance dashboard looks cleaner. Leadership interprets the ban as working.

Within six to eight weeks, a different pattern emerges. Employees are accessing AI tools through personal devices on personal networks -- beyond the reach of corporate network monitoring. AI-generated content is appearing in work outputs without disclosure. The governance team begins receiving calls from managers who have discovered that team members are submitting AI-generated work without indicating it. The ban has not reduced AI use. It has changed where AI use is visible.

What 'No Reduction in AI Use' Actually Means

The research finding that prohibition does not reduce AI use has a specific implication: every measure of AI activity that the organisation can see -- network traffic, approved tool usage logs, self-reported usage surveys -- is measuring compliance with the reporting requirement, not compliance with the substance of the ban. The gap between what the governance dashboard shows and what is actually happening grows from the moment the ban is announced.

The practical consequence: an organisation with an active ChatGPT ban and no alternative has a governance problem that is worse than the one it had before the ban. The original problem -- Shadow AI -- was at least partially visible. The post-ban problem is invisible by design.

Enterprise AI Governance Framework

The governance architecture that addresses Shadow AI without prohibition is in the Enterprise AI Governance Framework.

Section 2 - The Three-Stage Failure of Prohibition

The prohibition response follows a predictable three-stage failure pattern. Understanding the stages helps explain why organisations that know the research on prohibition effectiveness continue to implement bans -- the early signals suggest success, and the failure does not become fully visible until the damage is done.

Stage 1: The Announcement Effect

The ban is announced. Compliance communication goes out. Network blocks are configured. Approved tool lists are updated. Visible AI activity in corporate systems decreases. Governance metrics improve. The leadership team receives positive feedback from the board: 'Good to see we are taking this seriously.'

The Announcement Effect lasts approximately two to four weeks. During this period, employees who were using AI tools casually and openly are made aware that this carries risk. They become more cautious -- not less active. They begin assessing how to continue their AI use in a way that does not create visibility.

Stage 2: The Workaround Effect

Employees who find AI genuinely useful for their work -- which is most of them, since 88% use AI tools daily -- develop workarounds that allow continued use outside corporate monitoring. Personal devices on personal networks. Browser extensions that cannot be monitored by corporate IT. Using AI tools before or after business hours, then importing outputs into work systems. Asking AI questions that are slightly removed from the specific work task, so the outputs can be represented as personal research.

The Workaround Effect produces a specific governance outcome: the most AI-proficient employees -- the ones who find AI most useful and are most capable of using it effectively -- become the best at hiding their AI use. They are the highest-risk Shadow AI operators from a data governance perspective, and they are the least visible to the organisation because their sophistication allows them to operate beyond monitoring reach.

Stage 3: The Disclosure Effect

Employees who are using AI tools and hiding it have an incentive to avoid disclosing AI involvement in their outputs. A contract drafted with ChatGPT is submitted as original work. An analysis synthesised with AI assistance is attributed to manual research. AI-generated code is committed without flagging. The prohibition creates a disclosure incentive structure in which honesty about AI use carries career risk, while dishonesty about AI use carries no immediate consequence.

The Disclosure Effect is the most damaging long-term consequence of prohibition. It creates an organisational culture in which AI use is simultaneously widespread and officially unacknowledged -- producing outputs that management cannot assess for AI-related quality risks, because the AI involvement is hidden. Quality assurance becomes impossible. Error patterns become invisible until they produce failures.

Section 3 - Why the Corporate AI Policy Fails Before It Is Published

Most corporate AI policies fail not because they are legally incorrect, but because they are designed by a legal team for the organisation's legal protection rather than by a governance team for employee behaviour change. The difference is consequential.

A policy designed for legal protection reads like a disclaimer. It lists prohibited activities, states consequences, and describes the organisation's rights. It is written in language that protects the organisation from liability. It says almost nothing about what employees should do, and it provides no practical guidance for the specific situations employees actually face.

The Four Structural Flaws in Most Corporate AI Policies

• Prohibition-first framing -- the policy leads with what employees cannot do. Employees read this and conclude that the policy's purpose is to catch and punish them, not to help them work more effectively. This framing activates defensive behaviour rather than collaborative compliance.

• No approved tool register -- the policy prohibits 'unapproved AI tools' without specifying which tools are approved. Employees are left in a position where any AI tool they use is either unapproved (because nothing has been approved) or requires a multi-week approval process that is slower than the work that needs to be done.

• No fast-track pathway -- the policy implies that using a new AI tool requires extensive review and approval, but provides no process for initiating that review. Employees who want to do the right thing cannot find a way to do it quickly. The path of least resistance is to use the tool informally and not mention it.

• Legal language throughout -- the policy is written at a reading level and in a tone that signals it was written by legal counsel to protect the organisation, not by governance practitioners to guide employees. Employees do not engage with content that reads like a terms-of-service agreement.

Section 4 - The Three Types of AI Policy

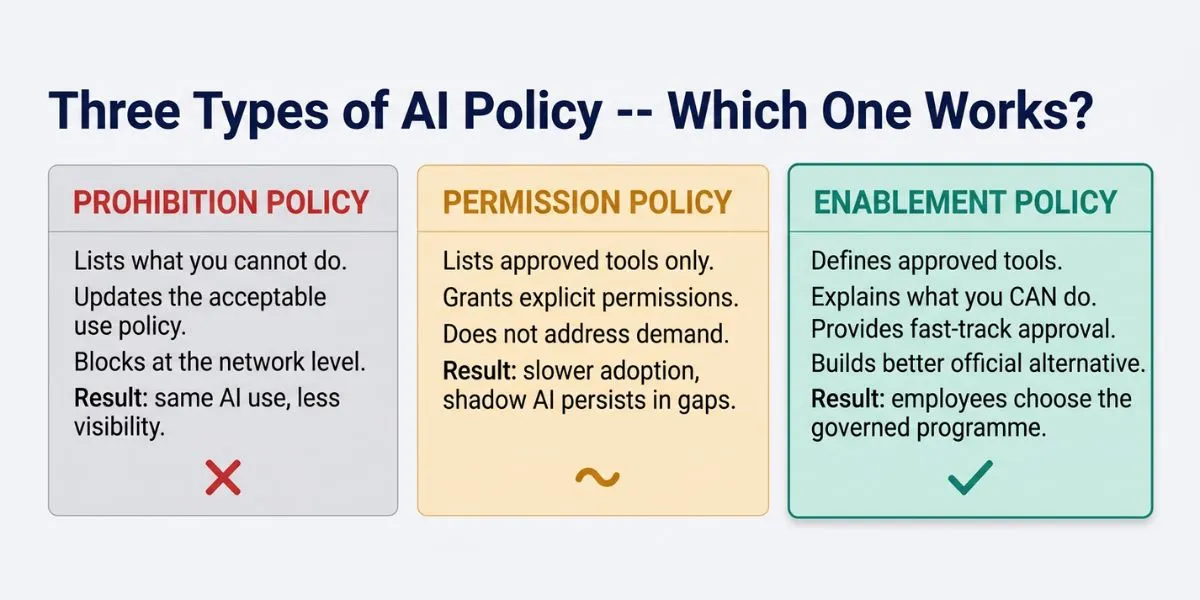

There are three distinct approaches to enterprise AI policy design. They produce measurably different outcomes in Shadow AI rates, employee compliance, and official programme adoption.

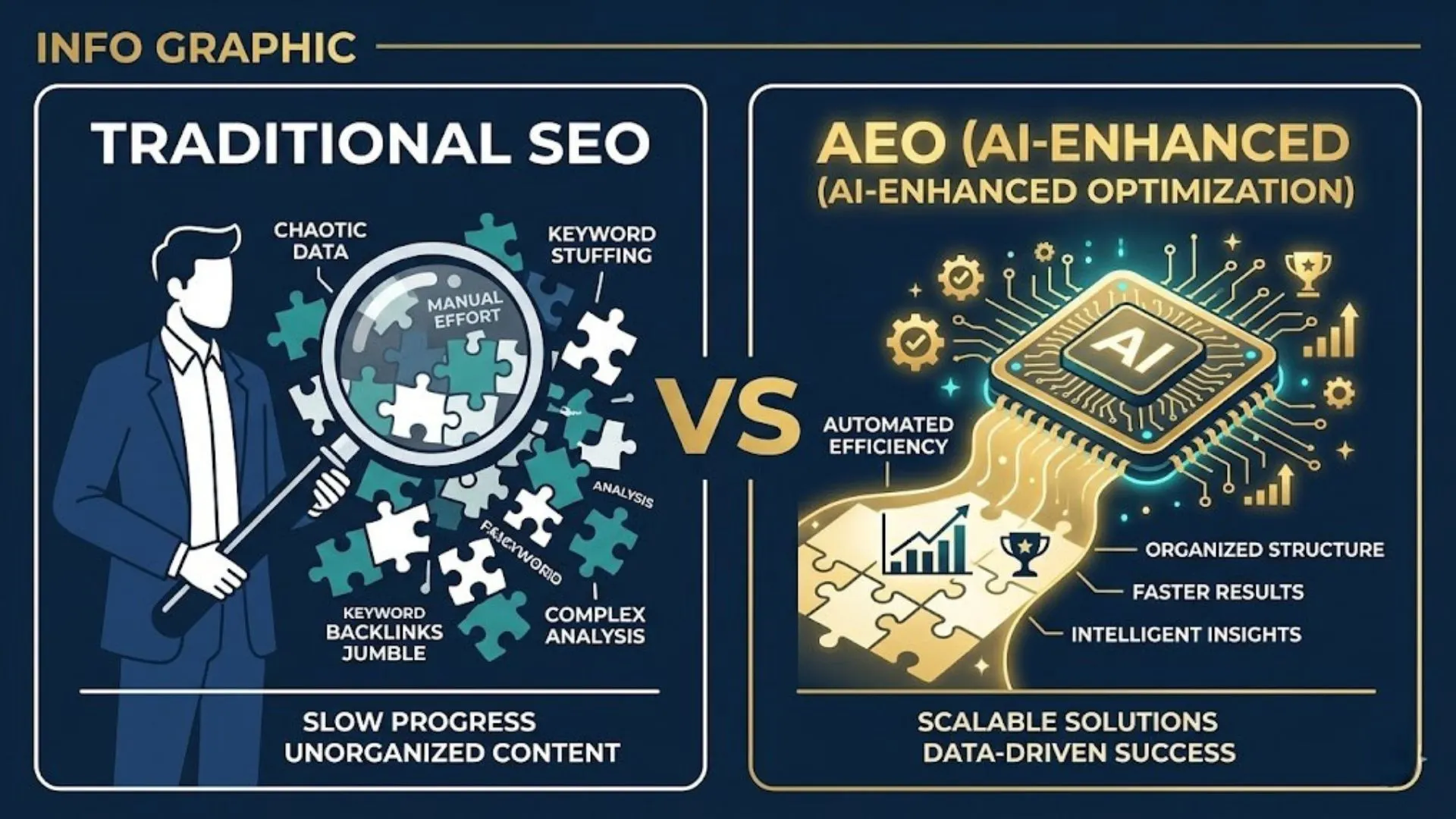

Type 1: Prohibition Policy

What it says: Lists what employees cannot do with AI. Updates the acceptable use policy with AI-specific prohibitions. Blocks access at the network level. States consequences for non-compliance.

What it produces: Three-stage failure (Announcement, Workaround, Disclosure). Same AI use, less visibility. Higher risk from the employees who are most capable of hiding their AI activity.

Type 2: Permission Policy

What it says: Lists which AI tools are approved and the conditions under which each can be used. Grants explicit permission for specific tools and use cases. What it leaves unaddressed is the underlying demand for AI utility in use cases the approved tools do not cover.

What it produces: Slower adoption, because the approved tool list is always behind demand. Shadow AI persists for use cases that the approved tools do not cover. Better than prohibition, but insufficient because the approved programme cannot keep pace with the rate of new AI tool emergence.

Type 3: Enablement Policy -- The One That Works

What it says: Defines what employees can do with AI. Lists approved tools and explains specific use cases for each. Provides a fast-track approval pathway for new tools that employees want to use. Acknowledges that employees are AI users and treats them as partners in building a governed AI programme, not as compliance risks to be monitored.

What it produces: Higher compliance, lower Shadow AI rates, and faster official programme adoption than either prohibition or permission approaches. Employees choose the official programme because it is more useful and because the pathway to expand it is clear and fast.

Section 5 - What Your AI Policy Should Actually Say

An effective AI Acceptable Use Policy does not read like a legal document. It reads like an onboarding guide. It assumes that employees are adults who want to do the right thing, and that the organisation's job is to make the right thing clear, accessible, and faster than the wrong thing.

The policy should answer five questions that employees actually ask, in language that employees can actually act on:

• Which AI tools can I use right now, for which tasks, with which data? (The approved tool register, with specific use cases and data classification guidance for each.)

• Which AI tools are explicitly not allowed, and why? (A short, specific list -- not a generic prohibition of 'unapproved AI tools.')

• If I want to use an AI tool that is not on the approved list, how do I get approval, and how long will it take? (The fast-track approval pathway -- with a named contact and a commitment to respond within a defined timeframe.)

• If I discover that I or a colleague has used AI in a way that might be a problem, what do I do? (The incident reporting process -- blame-free, with a named contact and a commitment to treat good-faith reporting as a governance contribution.)

• What are the rules around AI-generated outputs -- do I need to disclose AI involvement, and when? (Specific, practical guidance by output type and context, not a blanket 'always disclose' requirement that ignores the nuance of different use cases.)

The AI Centre of Excellence maintains the approved tool register and owns the fast-track approval process that the Enablement Policy depends on.

Section 6 - The Six Elements of an Effective AI Acceptable Use Policy

1. A published, maintained approved tool register -- every AI tool the organisation has evaluated, approved, and deployed, with specific guidance on what it can be used for and what data it can process. Updated quarterly. Owned by the AI Centre of Excellence. Accessible to all employees through the organisation's intranet.

2. A clear data classification matrix applied to AI use -- which categories of data can be used with which AI tools. Regulated personal data, confidential client information, proprietary research, and public information each have different permitted AI use cases. The matrix should be specific enough that an employee can look at a piece of data and know which AI tools they can use with it, without asking.

3. A fast-track approval pathway for new AI tools -- a named process for submitting new tool requests, a named contact for receiving them, and a commitment to respond within a defined period (target: 10 business days for straightforward requests). The pathway exists because new AI tools emerge faster than any approval committee can pre-approve them. The alternative to a fast-track pathway is Shadow AI adoption of every new tool the committee has not yet reviewed.

4. A blame-free incident reporting mechanism -- employees who discover they have used AI in a way that may have violated the policy, or who witness a colleague doing so, need a pathway to report that does not carry career risk. Good-faith reporting should be explicitly encouraged and explicitly protected from disciplinary action. Without this, incidents are hidden rather than reported.

5. Specific guidance by role and use case -- the policy should include worked examples: 'If you are in the Legal team and you want to draft a contract using AI, here is the approved tool, here is what data you can include, and here is the disclosure requirement for the final document.' Generic guidance produces generic compliance. Specific guidance produces specific behaviour change.

6. A quarterly review cycle -- the AI landscape changes faster than annual policy review cycles can accommodate. The policy should be reviewed quarterly against: new tools that have emerged, incidents that have occurred, regulatory developments (EU AI Act enforcement, AU Privacy Act activation), and employee feedback from the fast-track pathway. That feedback should include response times, because employees who submit tool requests and receive no response within the committed timeframe will make the rational decision to use the tool informally.
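Elements 1 and 2 can be pictured as a simple lookup: a register of approved tools, each tagged with the data classes it may process. The Python sketch below is a minimal illustration only. The four data classes come from the list above; the tool names and the permissions assigned to them are hypothetical placeholders, not recommendations.

```python
from enum import Enum


class DataClass(Enum):
    """Data categories named in the policy text."""
    PUBLIC = 1
    PROPRIETARY_RESEARCH = 2
    CONFIDENTIAL_CLIENT = 3
    REGULATED_PERSONAL = 4


# Hypothetical register: tool names and permitted data classes are
# illustrative assumptions, not a recommendation for any real product.
APPROVED_TOOLS = {
    "enterprise-chat-assistant": {
        DataClass.PUBLIC,
        DataClass.PROPRIETARY_RESEARCH,
    },
    "contract-drafting-assistant": {
        DataClass.PUBLIC,
        DataClass.PROPRIETARY_RESEARCH,
        DataClass.CONFIDENTIAL_CLIENT,
    },
    "public-web-summariser": {DataClass.PUBLIC},
}


def tools_for(data_class: DataClass) -> list[str]:
    """Return the approved tools cleared for this data class, sorted by name."""
    return sorted(
        name for name, allowed in APPROVED_TOOLS.items() if data_class in allowed
    )


def is_permitted(tool: str, data_class: DataClass) -> bool:
    """True only if the tool is on the register and cleared for this data class."""
    return data_class in APPROVED_TOOLS.get(tool, set())
```

An employee-facing intranet page could call tools_for() with the classification of the data in hand. In this sketch no tool is cleared for regulated personal data, so that lookup returns an empty list, and any tool missing from the register is treated as not permitted.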

AI Governance Framework Template

The AI Governance Framework Template contains a complete AI Acceptable Use Policy template in Section 7 -- editable DOCX, free download.

Section 7 - The Fast-Track Approval Pathway: Why Speed Is a Governance Mechanism

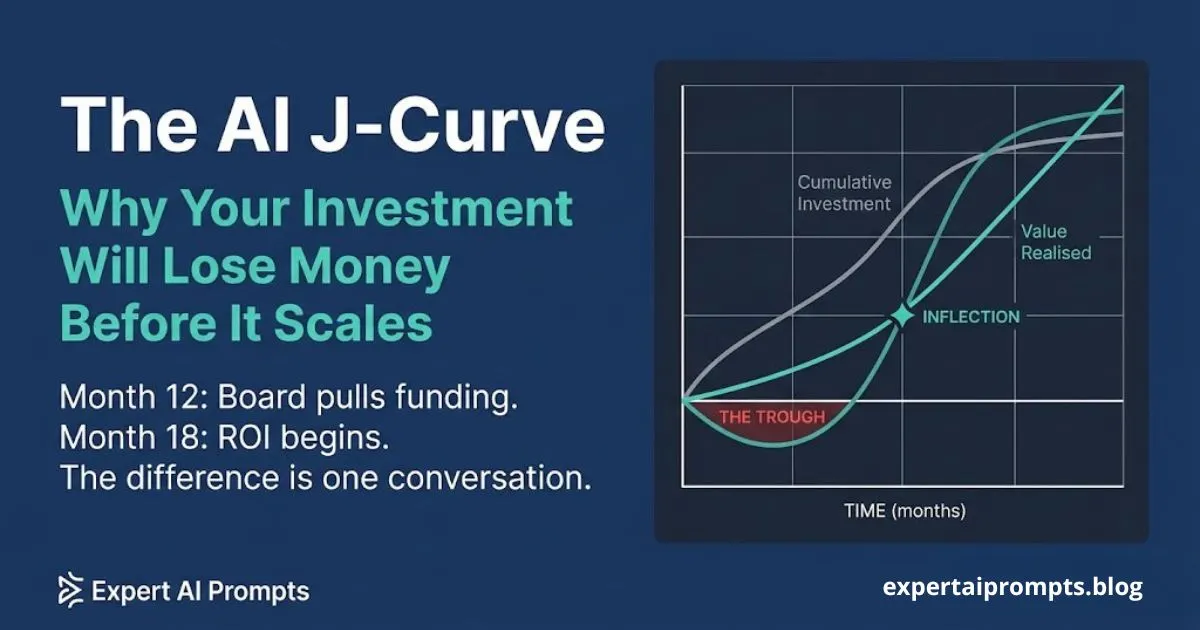

The single most important element of the Enablement Policy -- the one that most directly determines whether employees choose the official programme over Shadow AI adoption of new tools -- is the speed of the fast-track approval pathway. An approval pathway that takes four to six weeks is not a fast-track pathway. It is a permission structure with a name that implies responsiveness but delivers bureaucracy.

The test of the fast-track pathway is simple: if an employee identifies a new AI tool that they want to use for a specific work task, and they submit a formal approval request through the official process, does the organisation deliver a decision before the employee loses patience and tries the tool informally? If the answer is no, the pathway is itself producing Shadow AI adoption of every new tool.

A functional fast-track pathway has five characteristics:

• A named contact -- one person or team who receives tool approval requests. Not 'submit to IT' or 'contact your manager.' A named contact with a direct submission route.

• A committed response time -- ten business days for a standard evaluation. Five business days for tools with an existing vendor relationship. Published and visible in the policy.

• A standardised evaluation checklist -- so that approval decisions are consistent, documentable, and not dependent on which evaluator happens to receive the request.

• A provisional use mechanism -- for tools that are under evaluation and pose minimal risk, allow provisional use under defined constraints while the formal evaluation proceeds. This prevents the 'I need it now' pressure from becoming Shadow AI adoption.

• A feedback loop -- employees who submitted requests receive a decision with a rationale, regardless of outcome. If a request is declined, the rationale helps the employee understand what alternatives exist or what conditions would change the decision.
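The committed response time is easy to track mechanically. The following Python sketch computes the decision due date for a request, using the ten-day and five-day commitments stated above. The function names are hypothetical, and the calculation assumes a Monday-to-Friday working week with public holidays ignored.

```python
from datetime import date, timedelta

# Response commitments from the policy text: ten business days for a
# standard evaluation, five when a vendor relationship already exists.
STANDARD_DAYS = 10
EXISTING_VENDOR_DAYS = 5


def add_business_days(start: date, days: int) -> date:
    """Advance start by the given number of weekdays (Mon-Fri).

    Assumption: public holidays are not taken into account.
    """
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


def response_due(submitted: date, existing_vendor: bool) -> date:
    """Date by which the requester should have received a decision."""
    days = EXISTING_VENDOR_DAYS if existing_vendor else STANDARD_DAYS
    return add_business_days(submitted, days)


def is_overdue(submitted: date, existing_vendor: bool, today: date) -> bool:
    """True once the committed response date has passed without a decision."""
    return today > response_due(submitted, existing_vendor)
```

For example, a standard request submitted on Monday 2 March 2026 would be due on Monday 16 March 2026. Counting overdue requests at each quarterly review gives a direct measure of whether the pathway is keeping its commitment.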

AI Transformation Roadmap 2026

The fast-track approval pathway is a Phase 2 governance deliverable in the AI Transformation Roadmap 2026 -- established before the first production deployment.

Closing - From Prohibition to Enablement

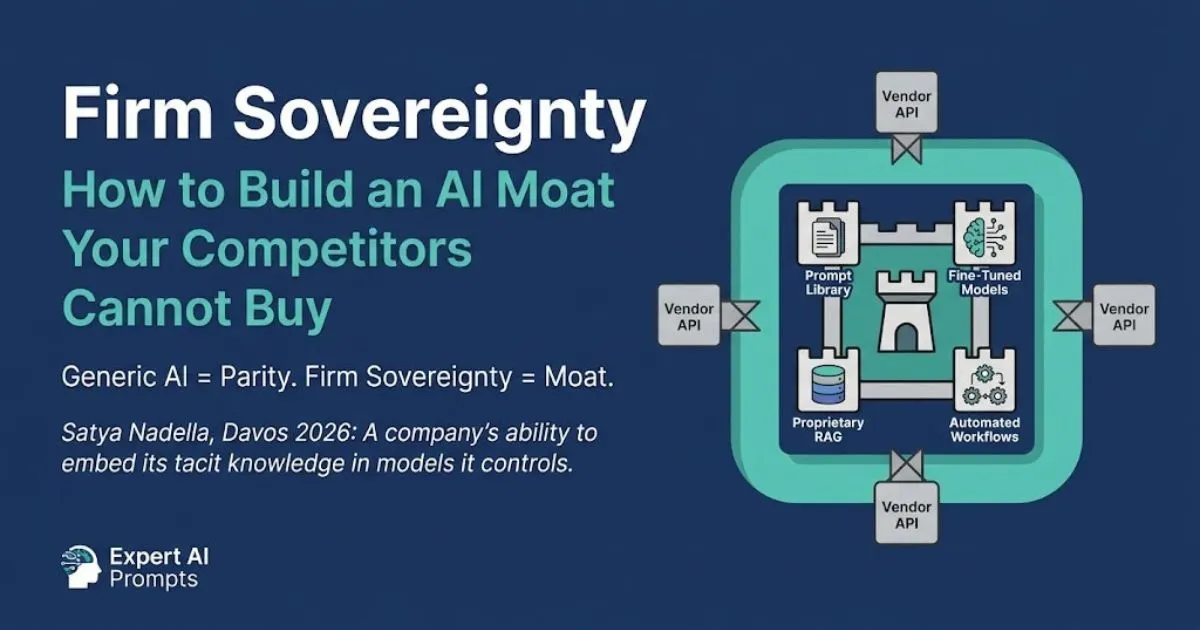

The organisations that successfully reduce Shadow AI do not do it through prohibition. They do it by building an official AI programme that is faster, more useful, and more domain-specific than the shadow alternatives -- and by creating a policy environment in which employees are treated as partners in that programme rather than compliance risks to be monitored.

The Enablement Policy is not a permissive policy. It still defines what employees cannot do. It still classifies data handling requirements. It still states consequences for deliberate policy violations. But it leads with what employees can do, it provides the tools to do it within a governed framework, and it makes the pathway to the official programme faster and more attractive than the shadow alternative.

The test of whether your AI policy is working is not whether reported AI tool violations have decreased. It is whether employees are actively choosing the official programme over the shadow alternatives. That test requires measurement of official tool adoption rates, not just violation reports.
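One way to make that measurement concrete is to track adoption alongside violation reports and flag the months where the two tell different stories. The Python sketch below is an illustrative heuristic, not an established metric. The field names, and the rule that falling violation reports without rising adoption deserves a closer look, are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlySnapshot:
    month: str                  # e.g. "2026-01"
    employees: int              # headcount covered by the policy
    official_active_users: int  # employees who used an approved tool this month
    violation_reports: int      # reported policy violations this month

    @property
    def adoption_rate(self) -> float:
        """Share of covered employees actively using the official programme."""
        if self.employees <= 0:
            raise ValueError("employees must be positive")
        return self.official_active_users / self.employees


def false_comfort(previous: MonthlySnapshot, current: MonthlySnapshot) -> bool:
    """Flag a month where violation reports fell but official adoption did not rise.

    Hypothetical heuristic: this is the pattern a ban produces, where
    visibility drops while actual AI use may be unchanged.
    """
    return (
        current.violation_reports < previous.violation_reports
        and current.adoption_rate <= previous.adoption_rate
    )
```

A dashboard built on this idea would treat a drop in violation reports as good news only when the adoption rate rises in the same period.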

Your next steps:

AI Governance Framework Template

Download the complete template including the AI Acceptable Use Policy in Section 7.

Enterprise AI Governance Framework

The full governance architecture including the Shadow AI containment playbook.

Why building the right official AI programme is the Shadow AI solution.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a technology and AI strategy executive with more than 20 years of experience. He is a former CTO, a former Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and was a University Lecturer at CQUniversity for more than eight years. The AI policy framework in this article is derived from the Enterprise AI Governance Framework -- the same governance architecture applied in the Expert AI Prompts live platform across 30 industries.