From Chaos to Control: Structuring Your First AI Centre of Excellence

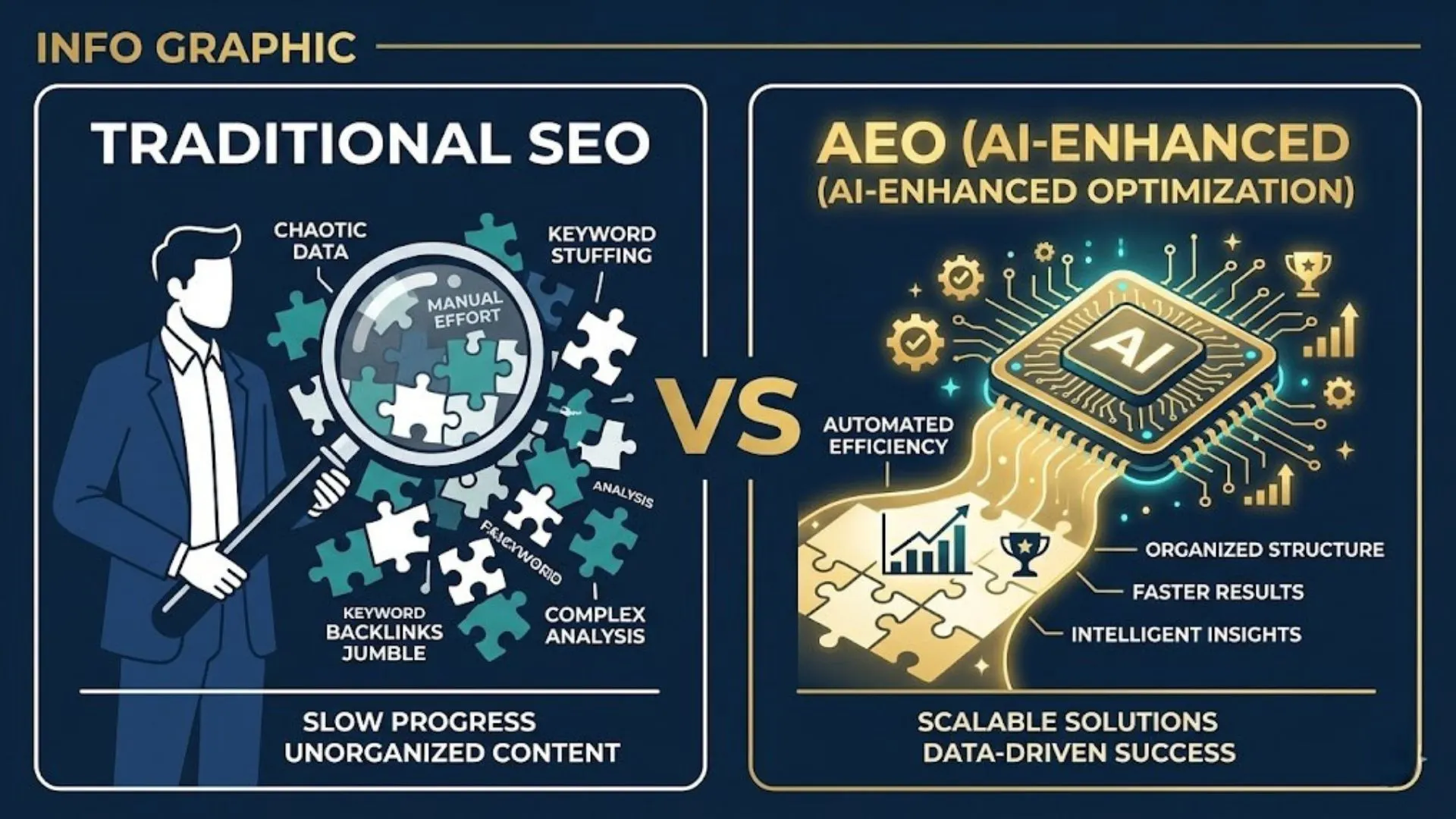

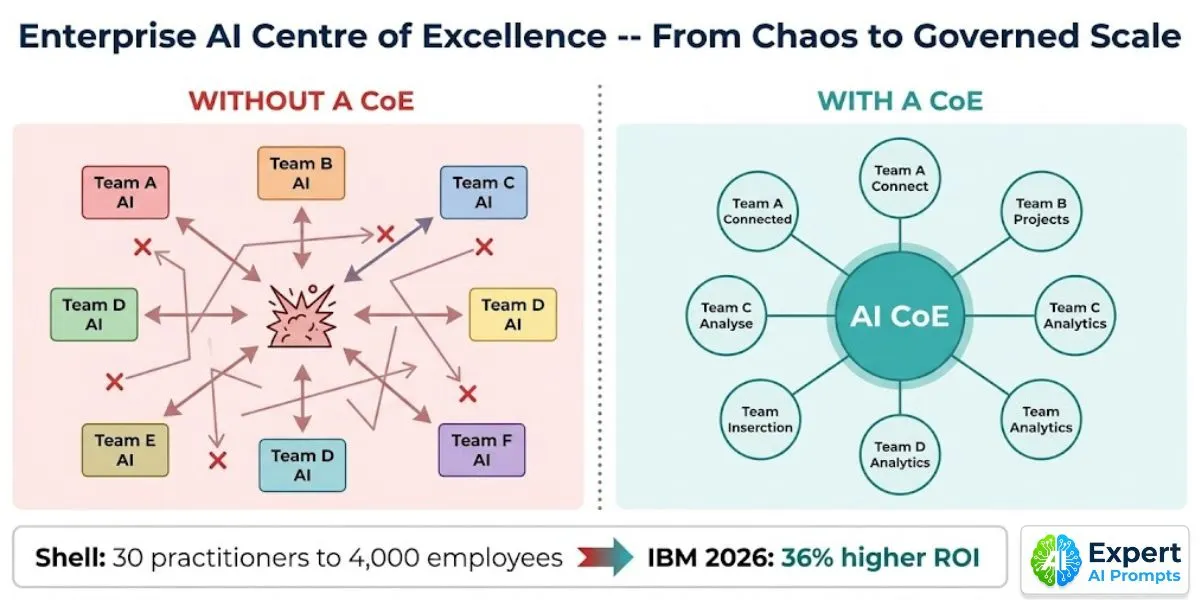

Without an AI Centre of Excellence, enterprise AI produces bespoke chaos. Eight to ten business lines, each making independent AI investments. Each rebuilding the same data pipeline infrastructure. Each managing its own vendor relationships with no negotiating leverage. Each generating AI outputs that nobody governs, audits, or connects to a shared quality standard. And when the EU AI Act asks which AI systems you are running and what their risk classification is -- the answer is: 'We are not entirely sure.'

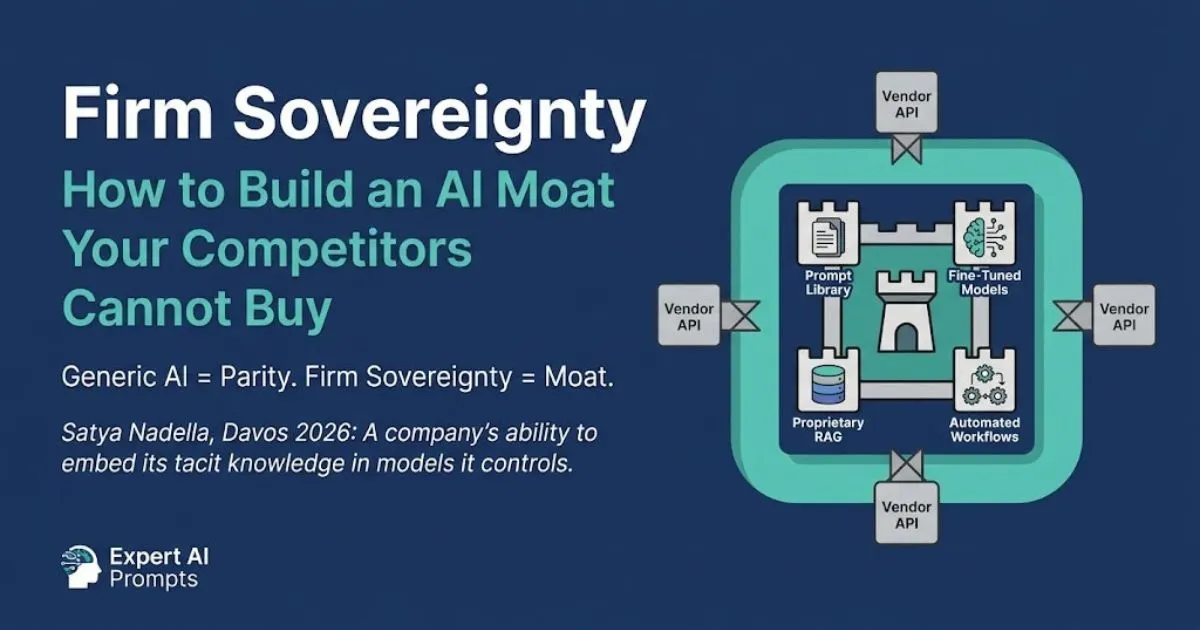

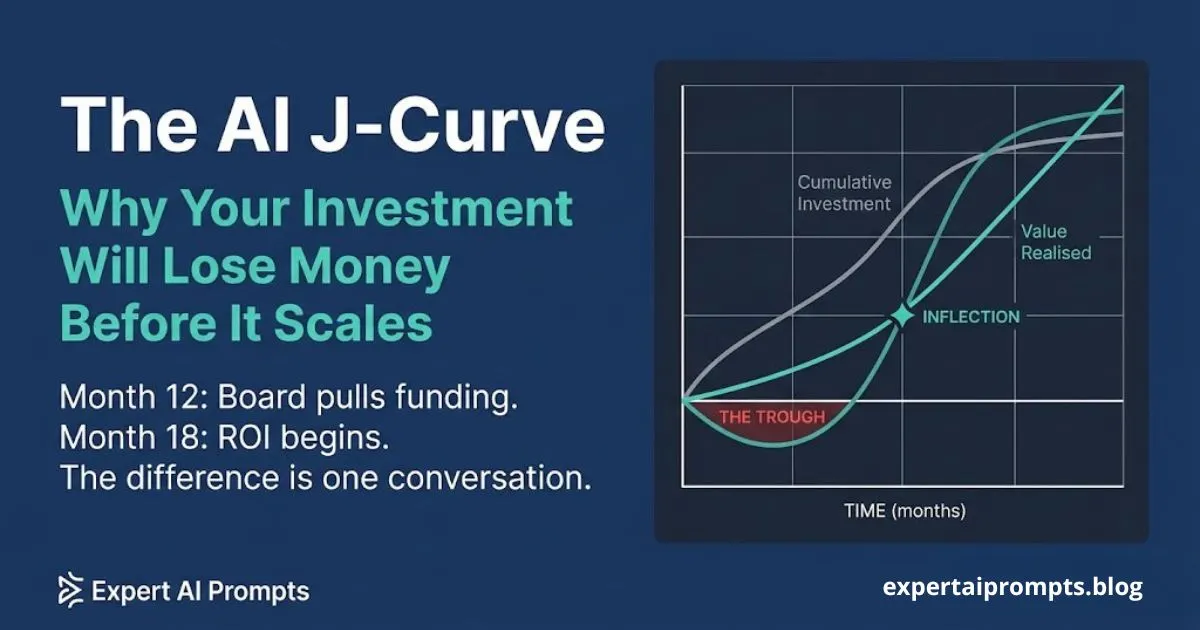

The AI Centre of Excellence is the organisational infrastructure that prevents bespoke chaos. Shell built one before scaling. Most organisations have not. IBM's 2026 research is specific about what that costs: centralised or hub-and-spoke AI operating models yield 36% higher ROI than decentralised approaches. The CoE is not a bureaucratic overhead. It is the compounding foundation that makes every subsequent AI investment cheaper, faster, and safer than the one before it.

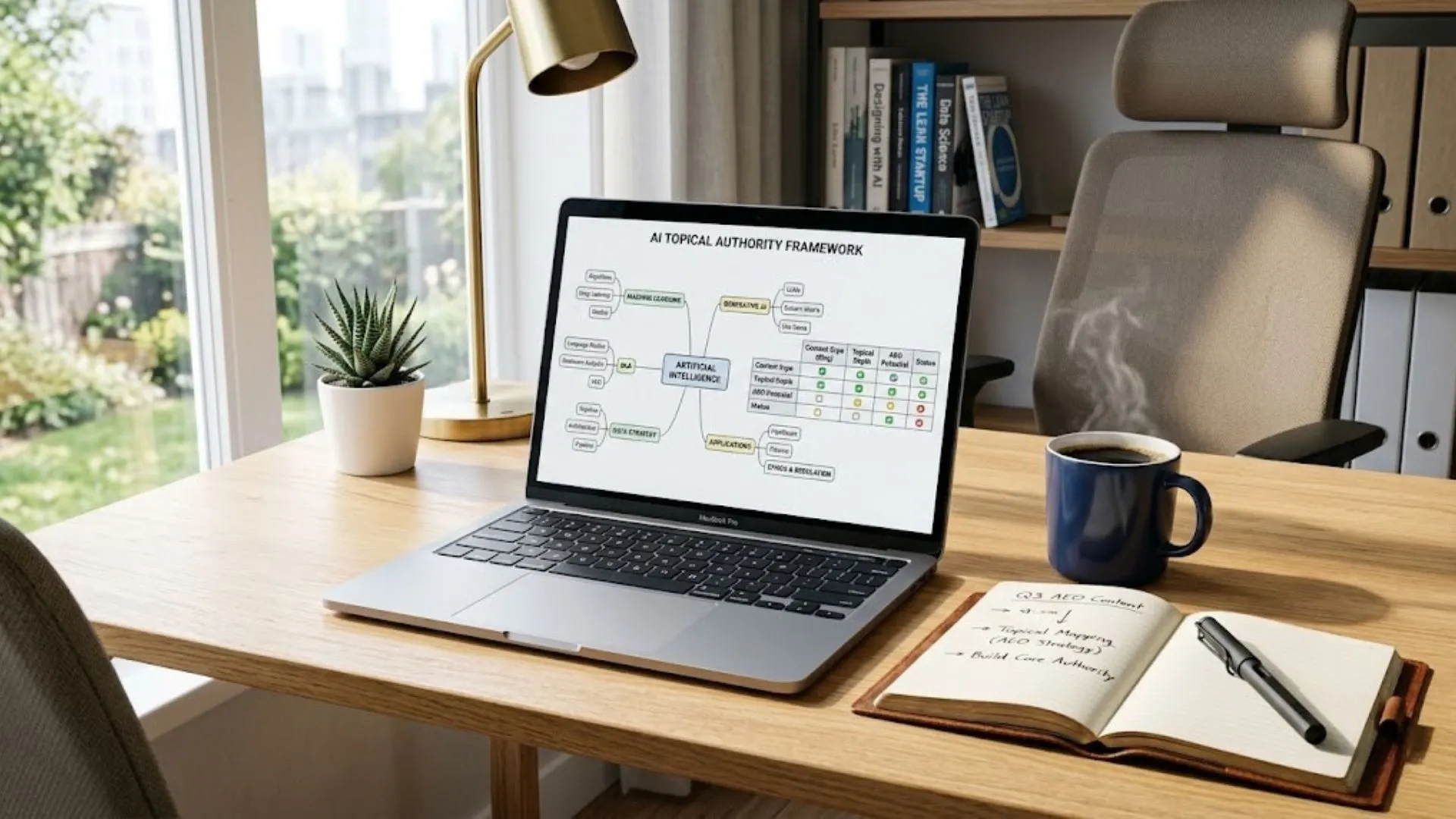

Enterprise AI Governance Framework

The full governance architecture for the CoE is in the Enterprise AI Governance Framework.

Section 1 - Why Enterprise AI Fails Without a CoE: The Bespoke Chaos Problem

The Shell AI CIO put it precisely: 'Without a centre of excellence, you have bespoke investments across eight to ten business lines, everyone pinging IT from different areas -- less efficient investment, more risk, no ability to scale what works.' Before Shell built its CoE, this was exactly the pattern. Before most organisations build a CoE, this is still exactly the pattern.

The bespoke chaos problem compounds over time. In Year 1, two business lines pilot AI independently. In Year 2, four more begin. By Year 3, eight functions are running independent AI programmes with no shared standards, no shared data infrastructure, no shared vendor register, and no governance framework that any of them applies consistently. Each new use case costs as much as the first. Each new vendor relationship is evaluated from scratch. Each governance question is answered independently -- often differently -- by each function.

What Bespoke Chaos Actually Costs

• Rebuilt infrastructure: every new AI use case rebuilds the same data pipeline, integration layer, and governance scaffolding. No compounding. No marginal cost reduction. The tenth use case costs the same as the first.

• No shared standards: each team interprets compliance differently. When the EU AI Act audit question arrives, five different teams produce five different answers about the same type of AI system.

• Vendor fragmentation: each function manages its own AI vendor relationships. No negotiating leverage, no visibility of total spend, no central evaluation of performance or redundancy.

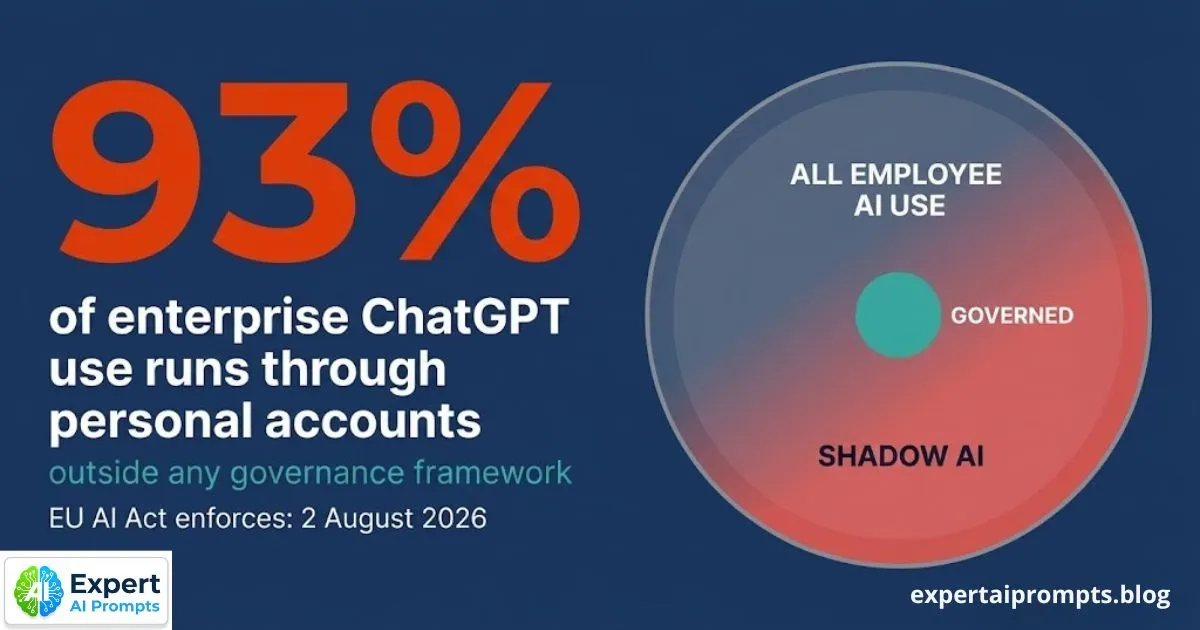

• Shadow AI growth: when the official programme is too slow or restrictive, employees use personal accounts. 93% of enterprise ChatGPT use runs through personal accounts today. Bespoke chaos makes this worse -- there is no official programme credible enough to compete.

The full analysis of shadow AI and how to contain it is in The Shadow AI Problem.

Section 2 - The Shell Case: From 30 Practitioners to 4,000 Employees Engaged

Shell's AI Centre of Excellence was founded as a coordination body -- its first purpose was to prevent the bespoke fragmentation that the CIO described. Before the CoE, each of Shell's ten business lines was making independent AI investments. The CoE established shared standards, shared infrastructure, and shared vendor relationships. It did not own all AI. It owned the foundations that allowed every function to build AI with confidence.

The compounding effect was significant. Thirty AI practitioners in the founding year. Four thousand employees actively engaged with AI today. The CoE evolved from a coordination body into a deep expertise centre -- setting policies, standards, and shared platforms that every function inherits. The fixed cost of governance and infrastructure was paid once. Every subsequent use case inherited it at zero marginal infrastructure cost.

What the Shell CIO Said -- and Why It Applies to Your Programme

Shell AI CIO: 'Without a centre of excellence, you have bespoke investments across eight to ten business lines, everyone pinging IT from different areas -- less efficient investment, more risk, no ability to scale what works.' The CoE solves all three simultaneously: shared infrastructure makes investment more efficient, shared governance reduces risk, and shared foundations enable scale.

IBM's 2026 research confirms this pattern is not specific to Shell. Centralised or hub-and-spoke AI operating models yield 36% higher ROI than decentralised approaches across all sectors studied. The CoE investment produces measurably better outcomes. The question is not whether to build one -- it is when to start, and what the minimum viable starting point looks like.

Section 3 - What a CoE Actually Does: Scope and Decision Rights

The most common CoE design failure is scope confusion. Either the CoE tries to own everything -- approving every prompt, reviewing every AI output, controlling every tool adoption -- and becomes the bottleneck that prevents the official programme from competing with shadow AI. Or it owns nothing and has no authority, producing governance documents that nobody is required to follow.

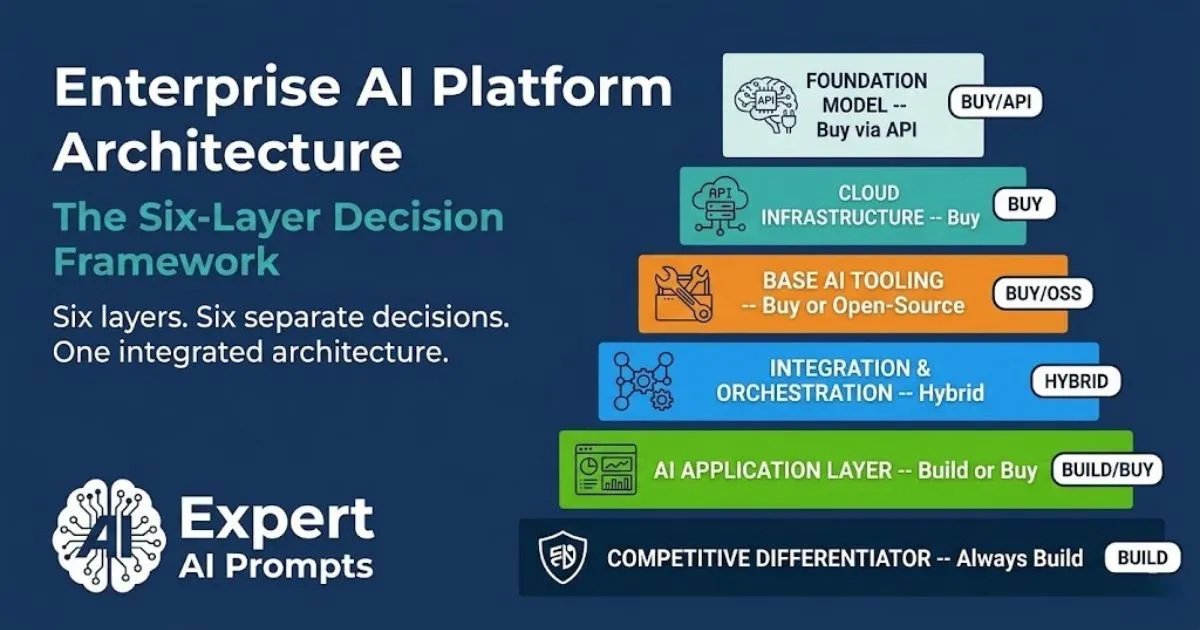

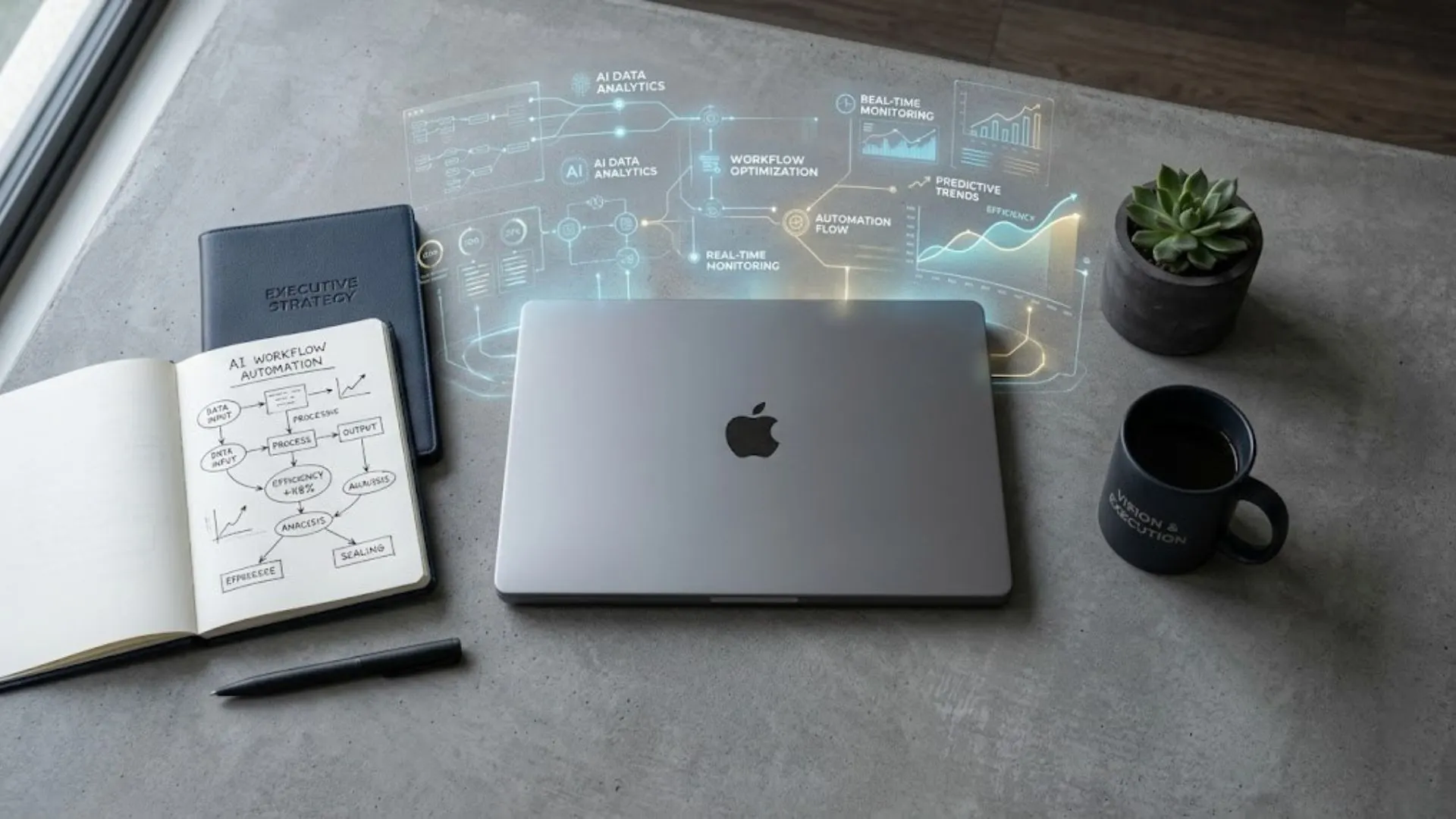

The CoE has authority over: new AI tool adoption (it maintains the published approved tool register); use case risk classification against the EU AI Act framework before any pilot begins; the enterprise AI governance standards including the acceptable use policy and data handling requirements; the enterprise AI vendor roster and central contract negotiations; and the AI skills certification programme. These are the levers that make the CoE effective. Each creates shared infrastructure that compounds.

What the CoE Does NOT Own

• Day-to-day operation of production AI systems -- business functions own their deployed systems once operational handover is complete

• All AI budgets -- business functions fund their own use cases within the CoE-approved framework and cost standards

• All AI talent -- Champions and skilled employees belong to their business functions, not the CoE

• All AI strategy -- the AI Governance Committee (not the CoE) sets the risk appetite and portfolio approval

• All AI output quality -- AI Product Managers own quality validation; business functions own operational accountability

Section 4 - The Six Core CoE Roles

In the first 90 days, the CoE Director and Governance Lead are the minimum viable team. Add roles as the programme scales -- AI Architects and Data Engineers from Phase 2, AI Product Managers from Phase 3, Change Management Lead from Phase 1 onwards on a contracted basis.

Role 1: CoE Director or CAIO

Primary accountability: Enterprise AI strategy, board reporting, governance posture, programme ownership, AI horizon scanning, CoE leadership. Active across all phases.

Key outputs: Annual AI Report to board, Risk Appetite Statement, quarterly Governance Committee briefings, portfolio prioritisation decisions.

Role 2: AI Architects

Primary accountability: End-to-end technical design -- model selection, production architecture, security design, cross-border data flow, integration standards. Phases 2-5. Two FTE.

Key outputs: Technology Stack Decision at Phase 2, Wave architecture design at Phases 3-4. Critical: hire AI Architects before you hire AI Product Managers.

Role 3: Data Engineers

Primary accountability: AI-ready data pipelines, ETL/ELT for AI workloads, data quality assurance, governance enforcement, EU AI Act data lineage documentation. Phases 2-5. Two FTE.

Key outputs: Data Readiness Assessment at Phase 2, shared pipeline platform at Phases 3-4. Gartner 2026: 60% of agentic AI projects fail due to lack of AI-ready data. This role prevents that.

Role 4: Governance and Compliance Lead

Primary accountability: EU AI Act risk classification, AU Privacy Act APP 1.7 compliance, SOC 2 controls, audit trail management, Kill/Scale Gate 4 ownership, regulatory horizon scanning. Phase 2 onwards. One FTE.

Key outputs: Compliance Map, Gate 4 decision authority for every production deployment, quarterly regulatory briefings. EU AI Act general application date: 2 August 2026, with some high-risk obligations phased in later.

The Governance Lead owns Gate 4 for every use case -- the compliance gate that prevents EU AI Act violations at enterprise deployment. Gate 4 is covered in 4 Gates to Production.

Role 5: AI Product Managers

Primary accountability: Use case ownership, Success Contract management, technical documentation including EU AI Act Annex IV, business unit liaison, post-deployment monitoring, handover to business unit operational ownership. Phase 3 onwards. One per Wave.

Key outputs: Board Value Report, signed Success Contract, validated ROI evidence for each production deployment. The AI Product Manager is the named accountable owner between pilot approval and production handover.

Role 6: Change Management Lead

Primary accountability: ADKAR implementation across all phases, Champion Network development and support, three-tier AI skills certification programme delivery, adoption rate metrics, resistance management. All phases -- contracted Phase 1-2, dedicated from Phase 3.

Key outputs: Active Champion Network, AI Adoption Rate metric, skills certification completions. The BCG 10-20-70 principle: 70% of AI transformation outcomes are determined by people, processes and culture. This role owns the 70%.

Section 5 - The Three CoE Operating Models

The CoE operating model must evolve as the transformation matures. A permanently centralised CoE becomes a bottleneck at Phase 4 scale. A prematurely federated CoE loses governance coherence. The evolution is deliberate and phase-gated.

CENTRALISED (Phases 1-2) -- Speed-to-Production: 6 months average.

All AI decision-making, investment, and delivery flows through the CoE. Business unit involvement is consultative. Required to establish standards, prevent shadow AI, and build shared infrastructure before business functions operate independently. Governance coherence is highest; deployment velocity is lowest. This is correct at programme inception.

HUB AND SPOKE (Phases 3-4) -- Speed-to-Production: under 60 days at Phase 3, under 30 days at Phase 4.

The CoE owns standards, shared infrastructure, and governance. Business functions own deployment and operation within those standards. AI Product Managers sit in the CoE but are assigned to specific business function portfolios. Champions are the spoke connections. IBM 2026: this model yields 36% higher ROI than decentralised approaches.

FEDERATED (Phase 5) -- Speed-to-Production: under 21 days.

Business functions operate AI capabilities autonomously within CoE-defined standards and shared infrastructure. The CoE shifts from delivery to enablement -- governance evolution, horizon scanning, and standards maintenance. This is the Phase 5 target state.

When to Transition Between Models

Transition from Centralised to Hub and Spoke when: 3+ use cases are in production, Wave 1 ROI is validated, shared infrastructure is operational, and at least one Champion is active in each major business function. Premature transition before these conditions is the governance fragmentation that the CoE was built to prevent.

Transition from Hub and Spoke to Federated when: 10+ use cases in production across 3+ functions, AI Adoption Rate is 40% or more of eligible workforce, Speed-to-Production is consistently under 30 days, and business function Champions are operating at Tier 3 AI mastery. The transition to federated should feel like a release of capability, not a surrender of control.
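The two transition checklists above can be expressed as a simple readiness check. The following is a minimal sketch, not a prescribed tool: the `ProgrammeStatus` fields and function names are hypothetical, and "consistently under 30 days" is interpreted here as every recent deployment finishing in under 30 days, which is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ProgrammeStatus:
    """Snapshot of programme state. All field names are illustrative assumptions."""
    use_cases_in_production: int
    functions_with_production_ai: int
    wave1_roi_validated: bool
    shared_infrastructure_operational: bool
    major_functions: int
    major_functions_with_active_champion: int
    adoption_rate: float                         # fraction of eligible workforce, 0.0 to 1.0
    recent_speed_to_production_days: list[int]   # most recent deployments
    champions_at_tier3: bool


def unmet_for_hub_and_spoke(s: ProgrammeStatus) -> list[str]:
    """Return the unmet Centralised -> Hub and Spoke conditions (empty list = ready)."""
    unmet = []
    if s.use_cases_in_production < 3:
        unmet.append("fewer than 3 use cases in production")
    if not s.wave1_roi_validated:
        unmet.append("Wave 1 ROI not validated")
    if not s.shared_infrastructure_operational:
        unmet.append("shared infrastructure not operational")
    if s.major_functions_with_active_champion < s.major_functions:
        unmet.append("a major business function has no active Champion")
    return unmet


def unmet_for_federated(s: ProgrammeStatus) -> list[str]:
    """Return the unmet Hub and Spoke -> Federated conditions (empty list = ready)."""
    unmet = []
    if s.use_cases_in_production < 10:
        unmet.append("fewer than 10 use cases in production")
    if s.functions_with_production_ai < 3:
        unmet.append("production AI in fewer than 3 functions")
    if s.adoption_rate < 0.40:
        unmet.append("AI Adoption Rate below 40%")
    speeds = s.recent_speed_to_production_days
    # Assumption: "consistently" means all recent deployments were under 30 days.
    if not speeds or any(days >= 30 for days in speeds):
        unmet.append("Speed-to-Production not consistently under 30 days")
    if not s.champions_at_tier3:
        unmet.append("Champions not yet at Tier 3 AI mastery")
    return unmet
```

Returning the list of unmet conditions rather than a bare yes/no keeps the check useful in a Governance Committee briefing: it shows exactly which condition is blocking the transition.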

Section 6 - Three Performance Metrics That Determine CoE Maturity

These three metrics tell you more about CoE health than any other measure. Track them from Phase 3 onwards. Report them to the board quarterly.

1. Speed-to-Production. Time from use case approval to production deployment with governance controls active. Phase 3 target: under 60 days. Phase 4 target: under 30 days. Phase 5 target: under 21 days. A falling Speed-to-Production figure is the primary signal of CoE maturity. Without shared infrastructure, a use case takes around 6 months to reach production. With it, under 21 days.

2. AI Adoption Rate. Percentage of eligible workforce actively using approved AI tools at least weekly. Phase 3 gate: 40% or more. Phase 4 target: 60% or more. Phase 5 target: 80% or more. A high production count with low adoption rate means Champions are building systems nobody uses. The Adoption Rate reveals whether the official programme is actually competing with shadow AI alternatives.

3. Production Count. Number of AI use cases running in production with validated ROI. Not pilots. Not demos. Production. Phase 3 gate: 3-5 active. Phase 4 target: 10 or more. Phase 5 standard: 20 or more. Production Count is the only metric that measures actual transformation rather than activity.
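The three metrics reduce to simple arithmetic, which makes them easy to compute from a deployment log and a usage report. The sketch below uses the phase targets stated above; the function names and the scorecard shape are assumptions made for illustration, and the Phase 3 Production Count gate of "3-5 active" is treated as a minimum of 3.

```python
from datetime import date

# Phase targets as stated in this section.
SPEED_TARGET_DAYS = {3: 60, 4: 30, 5: 21}       # must be under this many days
ADOPTION_TARGET = {3: 0.40, 4: 0.60, 5: 0.80}   # must be at least this fraction
PRODUCTION_TARGET = {3: 3, 4: 10, 5: 20}        # must be at least this count


def speed_to_production_days(approved: date, deployed: date) -> int:
    """Days from use case approval to production deployment."""
    return (deployed - approved).days


def adoption_rate(weekly_active_users: int, eligible_workforce: int) -> float:
    """Share of the eligible workforce using approved AI tools at least weekly."""
    if eligible_workforce <= 0:
        raise ValueError("eligible_workforce must be positive")
    return weekly_active_users / eligible_workforce


def phase_scorecard(phase: int, speed_days: int, adoption: float,
                    production_count: int) -> dict[str, bool]:
    """Compare the three metrics against the targets for the given phase (3, 4 or 5)."""
    return {
        "speed_to_production": speed_days < SPEED_TARGET_DAYS[phase],
        "adoption_rate": adoption >= ADOPTION_TARGET[phase],
        "production_count": production_count >= PRODUCTION_TARGET[phase],
    }
```

For example, a use case approved on 5 January and deployed on 19 February has a Speed-to-Production of 45 days, which meets the Phase 3 target but not the Phase 4 target.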

Section 7 - The Shadow AI Dividend: Making the Official Programme Win

93% of enterprise ChatGPT use runs through personal, non-corporate accounts. This is not a security failure. It is a market signal. Employees have determined that AI makes them more effective. The official AI programme is not meeting them where they are. The CoE's response to shadow AI is not restriction -- it is competition.

Make the approved AI programme faster, more useful, and better governed than the shadow alternatives. When the official programme can deploy a governed, domain-specific AI tool in under 30 days -- tool approved, data pipeline confirmed, compliance classification complete, prompt library built -- employees stop reaching for personal ChatGPT accounts. Not because they are forced to, but because the official programme is genuinely better.

The three-step shadow AI response for CoE leaders:

1. Conduct the quarterly Shadow AI audit to identify every tool in use across all functions, including personal accounts. Classify by risk tier. Identify what problems employees are solving with shadow tools that the official programme is not addressing.

2. For each major shadow AI use pattern, build or fast-track an official governed alternative that is measurably better -- more domain-specific, better integrated, faster to access.

3. Communicate the migration pathway to the workforce. Publish the approved tool register. Create a fast-track approval process for new tools identified by Champions.
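Step 1 of the response above produces a list of observed tool usage, and step 2 needs to know which problems employees are solving most often with shadow tools. A minimal sketch of that summary follows. The record fields (`tool`, `function`, `account_type`, `task`) and the `APPROVED_TOOLS` register contents are hypothetical, and risk-tier classification is deliberately left out because the tiers are not defined here.

```python
from collections import Counter

# Hypothetical approved tool register; in practice this is the published register.
APPROVED_TOOLS = frozenset({"approved-assistant"})


def rank_shadow_use_patterns(audit_rows, approved=APPROVED_TOOLS):
    """Rank the tasks employees perform with shadow AI, most frequent first.

    A row counts as shadow usage if the tool is not on the approved register
    or if it was accessed through a personal account.
    """
    shadow_rows = [
        row for row in audit_rows
        if row["tool"] not in approved or row["account_type"] == "personal"
    ]
    task_counts = Counter(row["task"] for row in shadow_rows)
    return task_counts.most_common()


audit = [
    {"tool": "chatgpt", "function": "Finance", "account_type": "personal", "task": "report drafting"},
    {"tool": "chatgpt", "function": "Legal", "account_type": "personal", "task": "contract summary"},
    {"tool": "approved-assistant", "function": "Finance", "account_type": "corporate", "task": "report drafting"},
    {"tool": "chatgpt", "function": "Sales", "account_type": "personal", "task": "report drafting"},
]
ranking = rank_shadow_use_patterns(audit)
```

The top entries in the ranking are the candidates for an official governed alternative in step 2.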

Why Banning ChatGPT Isn't Working

Why prohibition accelerates shadow AI rather than containing it is covered in Why Banning ChatGPT Isn't Working.

Section 8 - How to Start: The CoE Charter in Five Steps

The CoE Charter is the founding document of the Centre of Excellence. It can be produced in a single working session with the right stakeholders. With a functional Charter in place, the CoE can be operational within 60 days of programme inception -- the Phase 2 gate requires the Charter before any production AI deployment. Five elements must be present.

1. Purpose statement: a single sentence defining what the CoE exists to do in business outcome terms. Not technology terms. Business outcome terms. Example: 'This CoE exists to make every AI investment in this organisation cheaper, faster, safer, and more strategically aligned than the one before it.'

2. Scope and authority: a clear list of what the CoE has authority over (tool approval, risk classification, governance standards, vendor roster, skills certification) and what it does not own (daily operations, all budgets, all talent, all strategy). The boundary must be explicit -- ambiguity creates either bottleneck or irrelevance.

3. Membership: named individuals in each role with reporting relationships defined. The CoE Director should report to the CEO or CAIO -- not the CTO. CEO reporting signals genuine cross-functional strategic authority, which is what makes the CoE effective at resolving cross-functional conflicts.

4. Decision Rights Matrix: for each decision type (tool adoption, use case approval, risk classification, budget allocation, incident escalation) -- who decides, who is consulted, who is informed. This document prevents the CoE from becoming either a bottleneck (decides everything) or a governance fig leaf (decides nothing).

5. Operating Cadence: monthly CoE working meeting, quarterly AI Governance Committee meeting, annual board AI report. These are governance obligations, not aspirations. The board report is the accountability mechanism that keeps the CoE from becoming invisible.
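The Decision Rights Matrix in element 4 is easiest to keep honest when it is held as structured data that can be checked. The sketch below is one possible shape, not the template's format: the decision types come from element 4, but every role assignment in `EXAMPLE_MATRIX` is an illustrative assumption to be replaced with your own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRight:
    decides: str                       # the single accountable decider
    consulted: tuple[str, ...] = ()
    informed: tuple[str, ...] = ()


# Decision types listed in the Charter's Decision Rights Matrix element.
REQUIRED_DECISIONS = (
    "tool adoption",
    "use case approval",
    "risk classification",
    "budget allocation",
    "incident escalation",
)

# Illustrative assignments only (assumptions, not a recommendation).
EXAMPLE_MATRIX = {
    "tool adoption": DecisionRight("CoE Director", ("Governance Lead",), ("Business functions",)),
    "use case approval": DecisionRight("AI Governance Committee", ("CoE Director",), ("Business functions",)),
    "risk classification": DecisionRight("Governance Lead", ("AI Product Manager",), ("CoE Director",)),
    "budget allocation": DecisionRight("Business function head", ("CoE Director",), ("AI Governance Committee",)),
    "incident escalation": DecisionRight("Governance Lead", ("Business function head",), ("AI Governance Committee",)),
}


def validate_matrix(matrix: dict[str, DecisionRight]) -> list[str]:
    """Return a list of problems; an empty list means the matrix is complete."""
    problems = []
    for decision in REQUIRED_DECISIONS:
        right = matrix.get(decision)
        if right is None:
            problems.append(f"missing decision type: {decision}")
        elif not right.decides.strip():
            problems.append(f"no decider named for: {decision}")
    # Flag the bottleneck pattern: a single party deciding every decision type.
    deciders = {r.decides for r in matrix.values() if r.decides.strip()}
    if len(matrix) > 1 and len(deciders) == 1:
        problems.append("one party decides everything (bottleneck risk)")
    return problems
```

Running `validate_matrix` on a draft Charter surfaces both failure modes named in element 4: decision types with nobody deciding, and a single party deciding everything.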

AI Transformation Roadmap 2026

The CoE Charter is the Phase 2 governance deliverable in the 90-day AI Transformation Roadmap 2026 -- required before any production deployment.

AI Governance Framework Template

The AI Governance Framework Template contains the complete CoE Charter template and Decision Rights Matrix -- free download.

Closing - What Building the CoE Actually Means

Building the CoE is not a governance exercise. It is a compounding infrastructure investment. Every dollar invested in shared data pipelines, governance standards, pre-clearance decisions, and shared vendor relationships reduces the cost of every subsequent AI deployment. The first use case is the most expensive. The tenth use case -- on shared foundations -- costs a fraction of the first.
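The compounding argument can be made concrete with a simple cost model. This is a deliberately simplified sketch under stated assumptions: a one-off shared foundation cost paid with the first use case, a constant marginal cost per use case, and purely hypothetical cost units. Real programmes will have costs that vary by use case.

```python
def costs_on_shared_foundations(shared_fixed_cost: float, marginal_cost: float,
                                n_use_cases: int) -> list[float]:
    """Cost of each use case when the shared foundation is paid once, with the first."""
    return [
        shared_fixed_cost + marginal_cost if i == 0 else marginal_cost
        for i in range(n_use_cases)
    ]


def costs_bespoke(shared_fixed_cost: float, marginal_cost: float,
                  n_use_cases: int) -> list[float]:
    """Cost of each use case when every team rebuilds the foundation itself."""
    return [shared_fixed_cost + marginal_cost] * n_use_cases


# Hypothetical units: foundation = 100, per-use-case work = 10, ten use cases.
shared = costs_on_shared_foundations(100, 10, 10)
bespoke = costs_bespoke(100, 10, 10)
total_shared = sum(shared)    # 100 + 10 * 10 = 200
total_bespoke = sum(bespoke)  # (100 + 10) * 10 = 1100
```

Under these assumptions the tenth use case costs 10 units on shared foundations against 110 when built bespoke, which is the sense in which it "costs a fraction of the first".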

Shell's CoE started with 30 AI practitioners. Today, 4,000 employees are actively engaged with AI. That growth was not linear -- it was compounding. Each governance standard set once applied to every function. Each vendor relationship established centrally served every business line. Each Champion developed at one function became the template for the next. The CoE does not own the AI. It owns the conditions that make AI work at scale.

Your next steps:

Enterprise AI Governance Framework

The complete governance architecture including the four-layer model, shadow AI containment, and board reporting framework.

AI Governance Framework Template

Download the template including the CoE Charter, Decision Rights Matrix, and Acceptable Use Policy.

Enterprise AI Transformation Playbook

The full five-phase framework in which the CoE is built and evolves.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a technology and AI strategy executive with 20+ years of experience. He is a former CTO, a former Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and was a University Lecturer at CQUniversity for 8+ years. The CoE framework in this article is the governance architecture applied in the Expert AI Prompts live platform -- 30 industries, 1,500+ prompts, 15 AI workflow systems.