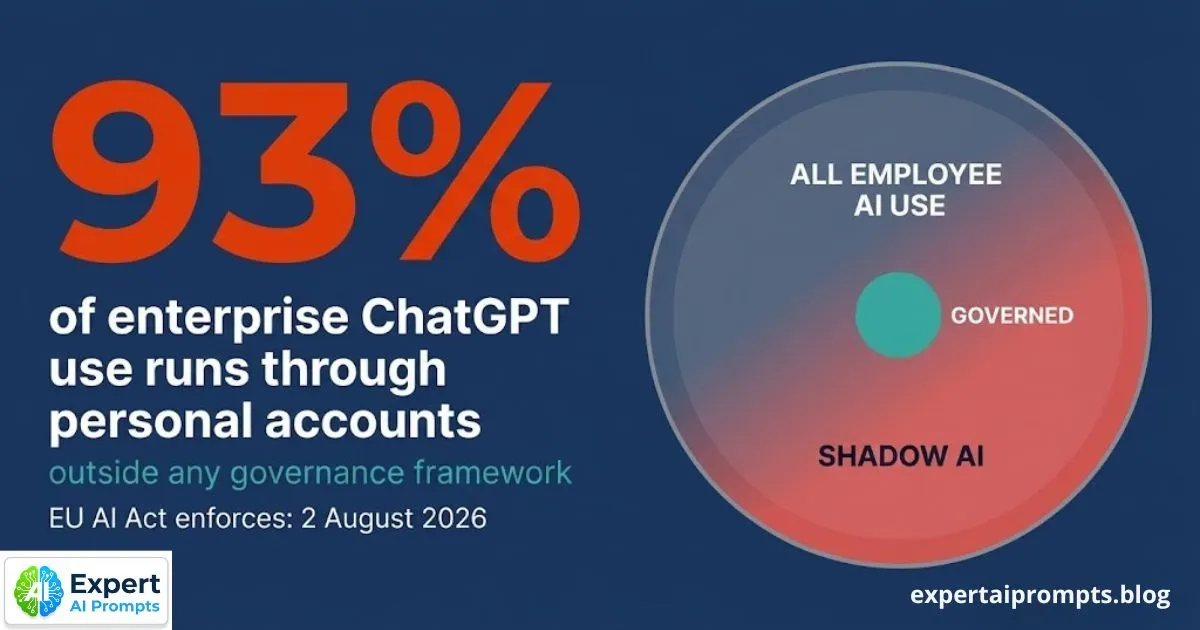

The Shadow AI Problem: 93% of Enterprise ChatGPT Use Is Through Personal Accounts

Before your organisation deploys its first officially sanctioned AI system, the AI deployment has already begun. Research consistently shows that 93% of enterprise ChatGPT use occurs through personal, non-corporate accounts -- employees accessing public AI tools with work data, work prompts, and sensitive business context, completely outside any organisational governance framework. The AI programme your organisation is planning is not the first AI programme running in your organisation.

This is the Shadow AI problem. And in 2026, with EU AI Act enforcement beginning on 2 August and the Australian Privacy Act's automated decision-making obligations taking effect in December, it is not just an IT governance problem. It is a regulatory compliance problem with specific, named penalties for organisations that cannot demonstrate governance of their AI use.

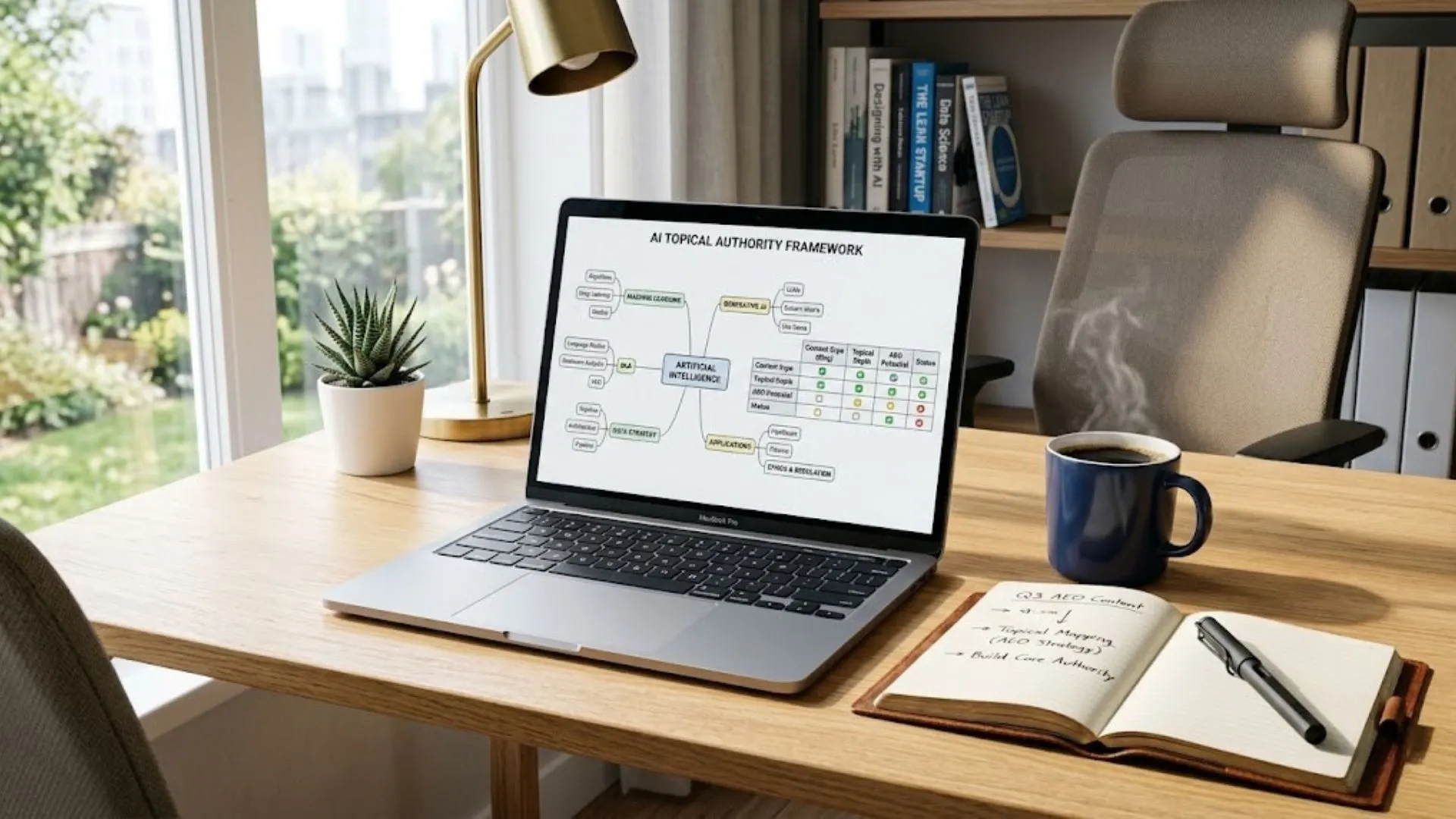

Enterprise AI Governance Framework

The full governance architecture that addresses Shadow AI is in the Enterprise AI Governance Framework.

Section 1 - What Shadow AI Actually Is

Shadow AI is the use of AI tools by employees outside the organisation's officially approved and governed AI programme. It includes personal ChatGPT accounts used for work tasks, consumer AI tools accessed without IT approval, AI-assisted features embedded in SaaS platforms that were not part of the vendor evaluation, and any AI tool that produces work outputs -- drafts, analyses, summaries, decisions -- without being subject to the organisation's data handling standards, access controls, or quality review processes.

The scale is not marginal. 88% of employees use AI tools daily. Only 5% use them at an advanced level. The 83-point gap between daily AI activity and advanced AI proficiency is not a training problem -- it is an access and relevance problem. Employees have determined that AI makes them more effective. The official programme is not meeting them where they are.

Shadow AI vs Shadow IT -- an Important Distinction

Shadow IT -- employees using unapproved software -- has been a governance challenge for two decades. Shadow AI is different in two important ways that make the governance stakes significantly higher.

First, AI tools process information -- they analyse, summarise, and generate content based on the inputs employees provide. When an employee uses an unapproved file-sharing tool, the organisation's data is stored in an ungoverned location. When an employee uses a personal ChatGPT account for contract analysis, the organisation's data is processed by a system that may be training future models, may have logging enabled, and is certainly not subject to the organisation's data classification standards.

Second, AI tools make decisions that can have regulatory implications. An AI system used to screen job applications, assess customer creditworthiness, or make healthcare recommendations is, under the EU AI Act, a HIGH RISK AI system -- requiring Annex IV technical documentation, human oversight design, and conformity assessment before deployment. When this happens through an employee's personal ChatGPT account, none of these requirements are met.

Section 2 - The 93% Statistic in Context

The 93% figure -- 93% of enterprise ChatGPT use through personal, non-corporate accounts -- is consistent across multiple 2026 research sources covering enterprise AI adoption. It is not a usage preference statistic. It is a governance failure statistic. It tells you that the official AI programme, in most enterprises, is failing to meet the demand that already exists.

Consider what this means in practical terms. An organisation with 500 employees, 88% of whom use AI daily, has approximately 440 people using AI every working day. If 93% of that activity is through personal accounts, and that share is applied directly to headcount, roughly 409 employees are using AI outside any governance framework every day -- creating potential IP leakage, data breach exposure, and EU AI Act compliance gaps at significant scale, every single day, including today.
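The arithmetic behind that estimate can be reproduced in a few lines. This is a minimal sketch: the function name and parameters are illustrative, and it assumes the 93% share of activity can be applied directly to the number of daily users, which is a simplification.

```python
def estimate_ungoverned_users(
    headcount: int, daily_use_pct: int, personal_account_pct: int
) -> tuple[int, int]:
    """Return (daily AI users, users assumed to be on personal accounts)."""
    daily_users = round(headcount * daily_use_pct / 100)
    # Simplifying assumption: the personal-account share of activity
    # is applied directly to the number of daily users.
    ungoverned_users = round(daily_users * personal_account_pct / 100)
    return daily_users, ungoverned_users


daily, ungoverned = estimate_ungoverned_users(500, 88, 93)
print(daily, ungoverned)  # 440 409
```

Substituting your own headcount gives a first-order sizing of the exposure before any audit data exists.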

The Gap Between Activity and Proficiency

The 93% ungoverned use statistic should be read alongside a second data point: only 5% of employees who use AI tools use them at an advanced level. The vast majority of employee AI use is at basic or intermediate proficiency -- generic prompts producing generic outputs. This represents both the problem and the solution.

The problem: employees are using AI at scale without domain-specific expertise, producing outputs that may be adequate for personal use but are not reliably good enough for professional production use -- yet are being used in professional contexts regardless.

The solution: an official AI programme that provides domain-specific prompt libraries, governed AI tools calibrated to the organisation's specific context, and a structured path from basic to advanced AI proficiency will be demonstrably more useful than a personal ChatGPT account. Employees will choose the better tool when it exists.

Section 3 - Why Shadow AI Is a Governance Design Failure, Not a Policy Failure

The most important reframe in Shadow AI governance is this: the 93% figure is not evidence that employees are ignoring policy. It is evidence that the official programme is not competitive with the shadow alternative.

Employees are not using personal ChatGPT accounts because they do not know about the organisation's AI policy. They are using them because the official AI programme is too slow to meet the need they have right now, or too restrictive to do what they need it to do, or too generic to produce outputs that are genuinely useful for their specific work. Shadow AI is not insubordination. It is market feedback.

A governance design failure has a governance design solution. The organisation needs a faster, more capable, more domain-specific official AI programme that employees choose -- not because they are mandated to, but because it is genuinely better than the personal alternative. Building that programme is the Shadow AI containment strategy. Policy alone is not.

Section 4 - Why Banning ChatGPT Makes the Problem Worse

The most common first response to the Shadow AI problem is prohibition: ban personal AI tool use, block access at the network level, update the acceptable use policy. This response is understandable. It is also structurally counterproductive.

The Prohibition Paradox

Employees do not stop using AI when it is banned. They become better at hiding it. Every banned tool creates an incentive for employees to find workarounds -- use personal devices on personal networks, avoid disclosing AI involvement in their work outputs, or access tools through methods that bypass network monitoring. The prohibition strategy produces the worst possible governance outcome: ungoverned AI that the organisation cannot see, cannot monitor, and cannot remediate.

The organisation that prohibits AI use without providing a credible governed alternative has not solved the Shadow AI problem. It has converted a visible governance gap into an invisible one. The data breach risk, the EU AI Act compliance exposure, and the IP leakage are all still present -- they are simply no longer measurable, because the activity is hidden.

The research is consistent: organisations that respond to Shadow AI with prohibition and no alternative see no reduction in AI use -- only a reduction in reported AI use. The activity continues; the visibility does not.

Why Banning ChatGPT Isn't Working

The full analysis of why prohibition fails and what to do instead is in Why Banning ChatGPT Isn't Working.

Section 5 - The Regulatory Exposure You May Not Have Mapped

The 93% ungoverned AI use figure has a specific 2026 regulatory dimension that most organisations have not fully mapped. Shadow AI is not merely a data governance problem -- it is a potential EU AI Act compliance incident at scale, operating every working day.

EU AI Act Exposure: Annex III High-Risk AI Without Documentation

The EU AI Act classifies AI systems that make or substantially contribute to decisions in specific categories as HIGH RISK (Annex III): employment and recruitment decisions, credit scoring, educational assessment, healthcare recommendations, law enforcement inputs, and critical infrastructure management.

When an employee uses a personal ChatGPT account to draft interview assessment notes, analyse a candidate's application, or summarise a credit application file, they may be -- under the EU AI Act's functional definition of AI deployment -- operating a HIGH RISK AI system. That system has no Annex IV technical documentation. It has no human oversight design. It has no named accountable operator. It has no EU AI database registration. It has none of the compliance requirements that the EU AI Act mandates for HIGH RISK AI deployment.

Full enforcement begins 2 August 2026. Penalties reach EUR 35 million or 7% of global annual turnover, whichever is higher, for prohibited practices; EUR 15 million or 3% for high-risk violations. The compliance gap created by ungoverned Shadow AI is not theoretical. It is active, today, in most organisations.

AI Governance Framework Template

The complete Annex IV technical documentation template and compliance mapping are in the AI Governance Framework Template -- free download.

Australian Privacy Act Exposure: APP 1.7 Automated Decision-Making

For organisations handling personal information of Australian individuals, Shadow AI creates a second compliance exposure: the Australian Privacy Act APP 1.7 obligations that activate in December 2026. APP 1.7 requires disclosure in privacy policies of automated decision-making that uses personal information and materially affects individuals' rights or interests.

When employees use personal AI accounts to process customer data, HR information, or any personal information of Australian individuals, the automated decision-making that results is invisible to the privacy compliance programme. The organisation cannot disclose what it cannot see. A Shadow AI instance involving Australian personal information is potentially a privacy compliance gap with penalties reaching AUD 50 million or 30% of adjusted Australian turnover.

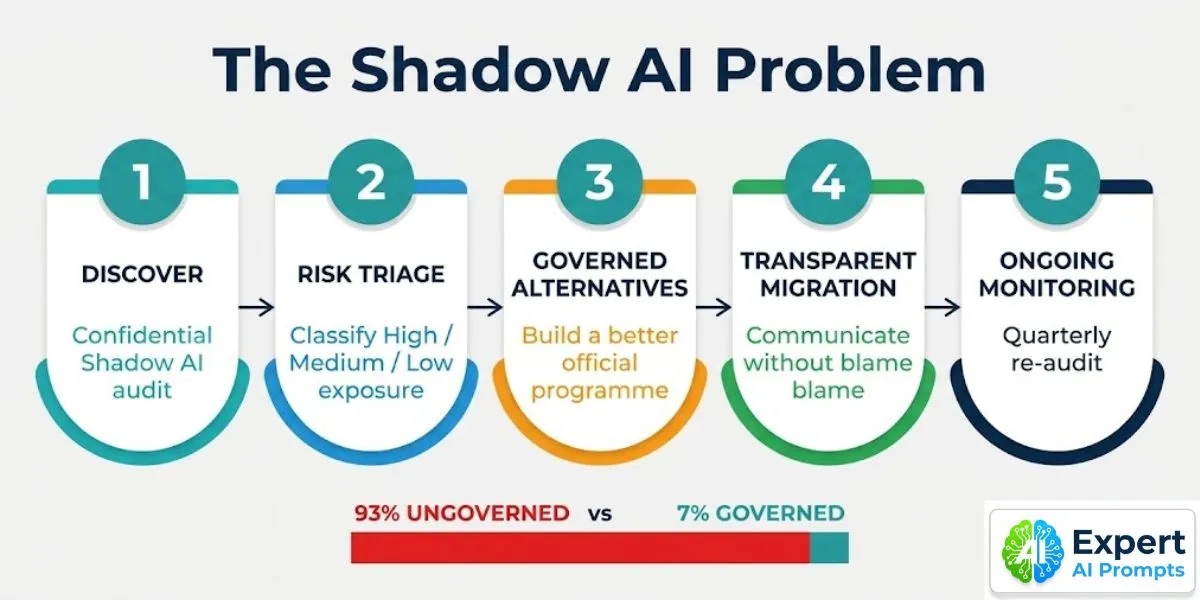

Section 6 - The Five-Step Shadow AI Containment Playbook

Shadow AI containment is not a technology problem -- it is a governance response problem. The most effective containment strategy does not start with prohibition. It starts with discovery. Organisations cannot govern what they cannot see, and they cannot design a competitive official programme without understanding what the shadow alternatives are actually being used for.

Step 1: Discovery -- Conduct the Shadow AI Audit

Conduct a confidential Shadow AI audit across all major business functions. The audit has three components: a structured employee survey (confidential, framed as understanding AI adoption rather than auditing compliance); an IT traffic analysis reviewing AI domain access logs, browser extension installs, and network requests to known AI services; and a function-by-function review of AI tool use patterns identified through both channels.

The output of Step 1 is a Shadow AI Inventory: every tool identified, every use pattern documented, and every instance classified into one of three risk tiers. Step 1 takes 2-4 weeks. It should be completed before any governance response is designed.
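The IT traffic analysis component of the audit can be sketched as a simple log scan. Everything specific here is an assumption for illustration: the domain list is a small hypothetical sample, and the log format (`timestamp department url`, space-separated) is invented; real proxy logs will differ. Counting by department rather than by individual is one way to keep the audit in line with its confidential, non-punitive framing.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical sample list; a real audit would maintain a fuller, current one.
KNOWN_AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai"}


def is_ai_host(host: str) -> bool:
    """True if host is a known AI domain or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in KNOWN_AI_DOMAINS)


def count_ai_requests(log_lines: list[str]) -> Counter:
    """Count requests to known AI domains per (department, host).

    Assumes each line is 'timestamp department url'; malformed lines are skipped.
    """
    counts: Counter = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue
        department, url = parts[1], parts[2]
        host = urlparse(url).hostname or ""
        if is_ai_host(host):
            counts[(department, host)] += 1
    return counts


sample = [
    "2026-01-05T09:00:00Z legal https://chatgpt.com/c/1",
    "2026-01-05T09:01:00Z legal https://chatgpt.com/c/2",
    "2026-01-05T09:02:00Z hr https://intranet.example.com/home",
]
print(count_ai_requests(sample))
```

The resulting counts are one input to the Shadow AI Inventory; they show where AI services are being reached, not what data was sent, so the survey and function-by-function review remain necessary.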

AI Transformation Roadmap 2026

The Phase 1 90-day onboarding framework in the AI Transformation Roadmap 2026 includes a Shadow AI audit as a Day 1 to 30 governance deliverable.

Enterprise AI Readiness Checklist

The governance dimension of the Enterprise AI Readiness Checklist includes Shadow AI assessment questions -- use it as the audit framework.

Step 2: Risk Triage -- Classify and Prioritise the Exposure

For each Shadow AI instance identified in Step 1, apply the three-tier risk classification:

• HIGH RISK: Shadow AI instances involving regulated personal information, health data, financial data, employment decisions, or EU market data. These may constitute EU AI Act Annex III violations or Australian Privacy Act APP 1.7 trigger events. Remediation required within 30 days of identification. Assess with Governance and Compliance Lead within 48 hours.

• MEDIUM RISK: Shadow AI instances involving work data (proprietary information, internal documents, client data) in personal AI accounts -- without the specific regulated data categories that trigger HIGH RISK. IP leakage and data classification exposure, but lower immediate regulatory liability. Build a governed alternative and migrate within 60 days.

• LOW RISK: Shadow AI instances involving personal productivity tools with no work data -- employees using AI for personal tasks or general research without organisational data exposure. Monitor; not an immediate governance priority.

Address all HIGH RISK instances first. Do not attempt to address all Shadow AI simultaneously. The organisation does not have the capacity, and the effort required to address LOW RISK instances would be better deployed building governed alternatives for HIGH RISK use patterns.
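The three-tier classification can be expressed as a small decision rule. This is a sketch under stated assumptions: the `ShadowAIInstance` fields and the two boolean flags are hypothetical simplifications of the criteria above, and in practice the HIGH RISK determination is a judgment for the Governance and Compliance Lead rather than a flag.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    HIGH = "HIGH RISK"
    MEDIUM = "MEDIUM RISK"
    LOW = "LOW RISK"


@dataclass(frozen=True)
class ShadowAIInstance:
    tool: str
    business_function: str
    # Regulated personal, health, or financial data, employment decisions,
    # or EU market data (hypothetical flag set during the audit).
    involves_regulated_data: bool
    # Proprietary information, internal documents, or client data.
    involves_work_data: bool


def triage(instance: ShadowAIInstance) -> RiskTier:
    """Apply the tiers in order: regulated data first, then work data."""
    if instance.involves_regulated_data:
        return RiskTier.HIGH
    if instance.involves_work_data:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def prioritise(instances: list[ShadowAIInstance]) -> list[ShadowAIInstance]:
    """Order the inventory so HIGH RISK instances are addressed first."""
    order = [RiskTier.HIGH, RiskTier.MEDIUM, RiskTier.LOW]
    return sorted(instances, key=lambda i: order.index(triage(i)))
```

Checking regulated data before work data matters: an instance that involves both is HIGH RISK, not MEDIUM.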

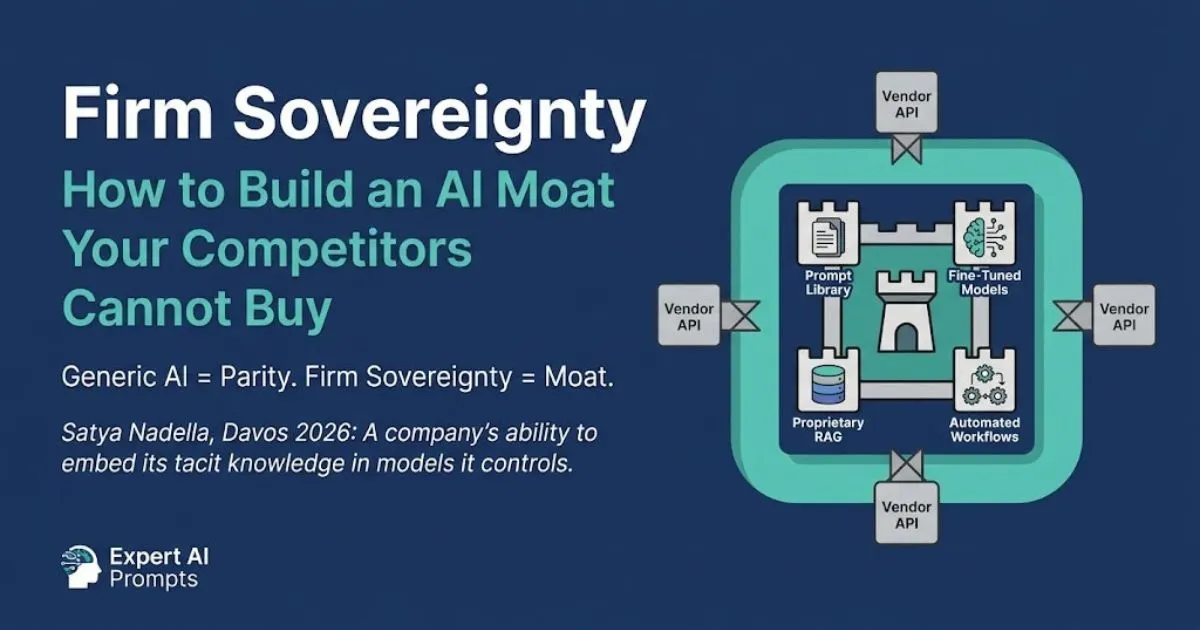

Step 3: Governed Alternatives -- Build a Better Official Programme

For each major Shadow AI use pattern identified in Step 1, develop or fast-track an official governed alternative that is measurably better than the shadow version: more domain-specific, better integrated with existing workflows, faster to access, and calibrated to the organisation's specific documents, language, and quality standards.

If employees are using personal ChatGPT accounts to draft legal contracts, build a governed contract drafting tool within the CoE infrastructure -- with a domain-specific prompt library built on the organisation's actual contract precedents, access controls that ensure appropriate data handling, and output quality that a generic ChatGPT prompt cannot match. The official version wins when employees choose it because it is more useful, not because the alternative is banned.

The AI Centre of Excellence provides the governed infrastructure within which official alternatives to shadow tools are built and maintained.

Step 4: Transparent Migration -- Communicate Without Blame

Communicate the Shadow AI audit findings to the workforce without blame attribution. Frame the migration to official tools as an improvement, not a restriction. The communication acknowledges that employees have been using AI tools to do their work better -- and that the organisation is now providing a governed alternative that is better than what they have been using.

Publish the AI Acceptable Use Policy as a clear, practical guide -- not a legal document designed to minimise organisational liability. Give employees a specific timeline for migrating each shadow tool. Provide a fast-track approval pathway for new tools that employees want to use -- so that legitimate demand can be met through official channels rather than driving new Shadow AI adoption as new tools emerge.

Step 5: Ongoing Monitoring -- The Quarterly Re-Audit

Shadow AI is not a one-time problem. It recurs as new AI tools emerge, as employee AI sophistication grows, and as the gap between the official programme and the cutting edge of available tools widens. The quarterly Shadow AI re-audit is a standing CoE activity.

The re-audit has three functions: identifying new Shadow AI tools that have entered use since the last audit; tracking whether previously identified HIGH and MEDIUM RISK instances have been successfully migrated to governed alternatives; and maintaining the approved tool register as a living document that reflects current employee AI needs. The register is updated after each re-audit. New tools can be submitted for approval through a defined fast-track process managed by the CoE Governance and Compliance Lead.
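The comparison between one audit and the next can be sketched with set operations. The names below are illustrative, and the sketch assumes each audit yields a set of tool names. Note the limitation: a tool that is no longer observed may have been migrated, or its use may simply have become hidden, so that bucket needs follow-up rather than being counted as success.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReauditResult:
    new_tools: frozenset[str]           # unapproved tools first seen this cycle
    no_longer_observed: frozenset[str]  # seen last cycle, not seen now
    still_in_use: frozenset[str]        # unapproved tools seen in both cycles


def compare_audits(
    previous: set[str], current: set[str], approved: set[str]
) -> ReauditResult:
    """Compare two audit cycles against the approved tool register."""
    unapproved_now = current - approved
    return ReauditResult(
        new_tools=frozenset(unapproved_now - previous),
        no_longer_observed=frozenset(previous - current),
        still_in_use=frozenset(unapproved_now & previous),
    )


result = compare_audits(
    previous={"tool-a", "tool-b"},
    current={"tool-b", "tool-c", "tool-d"},
    approved={"tool-d"},
)
print(sorted(result.new_tools))  # ['tool-c']
```

Tools in `new_tools` are candidates for the fast-track approval process; tools in `still_in_use` indicate a governed alternative that is not yet winning.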

Section 7 - The Risk Triage Framework: Three Tiers of Shadow AI Exposure

TIER 1 -- HIGH RISK (remediate within 30 days):

Any Shadow AI instance where work data includes: regulated personal information (names, contact details, financial data, health records, HR records); employment or recruitment decisions; credit or financial assessments; healthcare recommendations; or any data subject to the EU AI Act's Annex III high-risk categories or the Australian Privacy Act APP 1.7 trigger condition. Requires immediate assessment by Governance and Compliance Lead.

TIER 2 -- MEDIUM RISK (remediate within 60 days):

Shadow AI instances involving proprietary work data, client information, internal documents, or sensitive business information -- without the specific regulated data categories that trigger TIER 1. IP leakage, confidentiality breach, and data classification exposure. Build governed alternative and migrate within 60 days.

TIER 3 -- LOW RISK (monitor; address in next re-audit cycle):

Shadow AI instances involving no work data -- employees using AI tools for personal productivity tasks or general research without organisational data input. No immediate regulatory or IP exposure. Include in the approved tool evaluation queue for the next audit cycle.
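The remediation windows above translate directly into due dates. A minimal sketch, assuming the windows are counted in calendar days from the date of identification (the framework does not specify calendar versus working days) and using hypothetical names:

```python
from datetime import date, timedelta
from typing import Optional

# Windows taken from the tiers above; TIER 3 has no fixed deadline.
REMEDIATION_WINDOW_DAYS = {"TIER 1": 30, "TIER 2": 60, "TIER 3": None}


def remediation_due(tier: str, identified_on: date) -> Optional[date]:
    """Return the remediation due date, or None for monitor-only tiers."""
    window = REMEDIATION_WINDOW_DAYS[tier]
    if window is None:
        return None  # handled in the next re-audit cycle
    return identified_on + timedelta(days=window)


print(remediation_due("TIER 1", date(2026, 3, 1)))  # 2026-03-31
print(remediation_due("TIER 2", date(2026, 3, 1)))  # 2026-04-30
```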

Section 8 - The Champion Network as the Ongoing Solution

The five-step containment playbook addresses the existing Shadow AI inventory. The Champion Network is the ongoing solution that prevents new Shadow AI from emerging at the same rate in the future.

AI Champions -- one per major business function, developed from the existing workforce, operating at Tier 3 AI skills proficiency (a skills level, not to be confused with the TIER 3 risk classification above) -- serve as the human infrastructure that connects the official programme to the business functions where Shadow AI occurs. Champions identify new tool use patterns in their function and escalate through the CoE fast-track approval pathway. They demonstrate the official programme's capability to their colleagues -- peer demonstration is more effective at changing tool adoption behaviour than any corporate policy communication. And they serve as the feedback channel between business function needs and the CoE's governed alternative development pipeline.

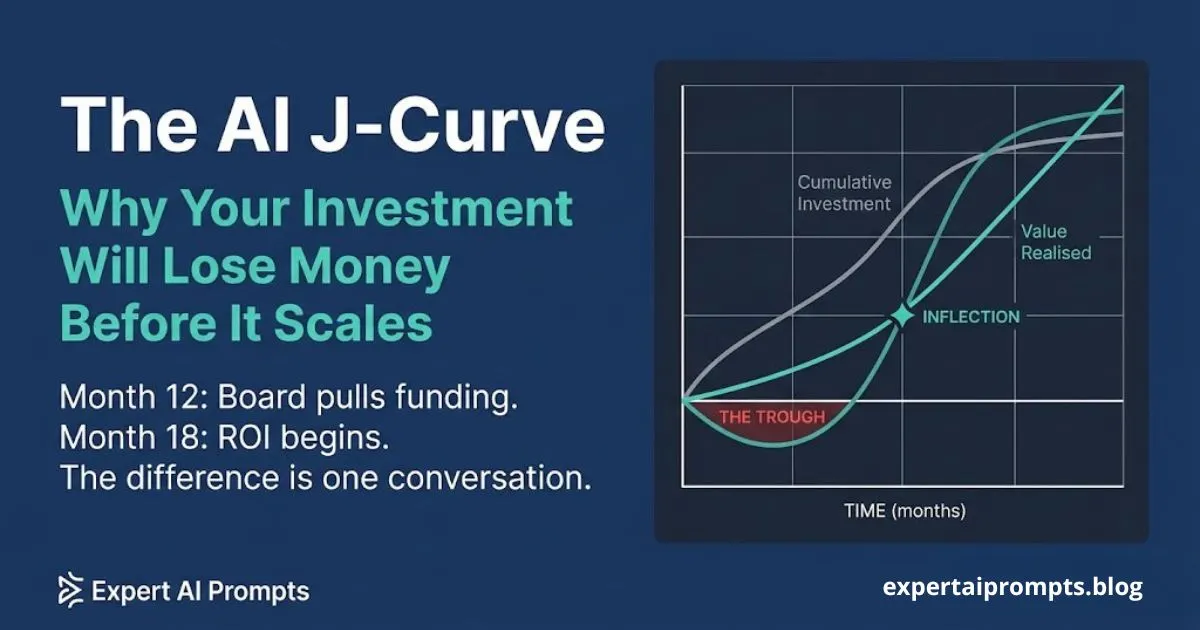

The organisations that successfully reduce Shadow AI over a 12-24 month programme share a consistent pattern: a fast and useful official AI programme, a transparent and blame-free migration process, and an active Champion Network that maintains the governance culture at the function level. The combination means that when new AI tools emerge -- and they will -- the organisation has the infrastructure to evaluate, approve, and integrate them faster than employees are driven to adopt them personally.

Closing - From Shadow AI to Governed Programme

The 93% figure is not a shameful statistic. It is a starting point. Every organisation that has successfully built a governed, high-adoption AI programme started from a position where the vast majority of employee AI activity was ungoverned. The figure tells you where to start, not where you are destined to remain.

The path from 93% ungoverned to a governed, compliant, and competitive AI programme runs through the five steps in this article. It does not run through prohibition. It runs through building something better, migrating to it transparently, and maintaining the governance infrastructure that keeps it current as the AI landscape evolves around it.

Your next steps:

Enterprise AI Governance Framework

-- The full governance architecture including Shadow AI containment, the four-layer model, and the EU AI Act compliance framework.

AI Governance Framework Template

-- Download the complete governance template including the AI Acceptable Use Policy and the Shadow AI risk triage framework.

AI Centre of Excellence

-- The CoE structure that builds and maintains the governed alternatives to shadow tools.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer at CQUniversity. The Shadow AI governance framework in this article is derived from the Enterprise AI Governance Framework -- the same governance architecture applied in the Expert AI Prompts live platform and taught across 30 industries.