Escaping Pilot Purgatory: Why 70% of Enterprise AI Pilots Never Scale

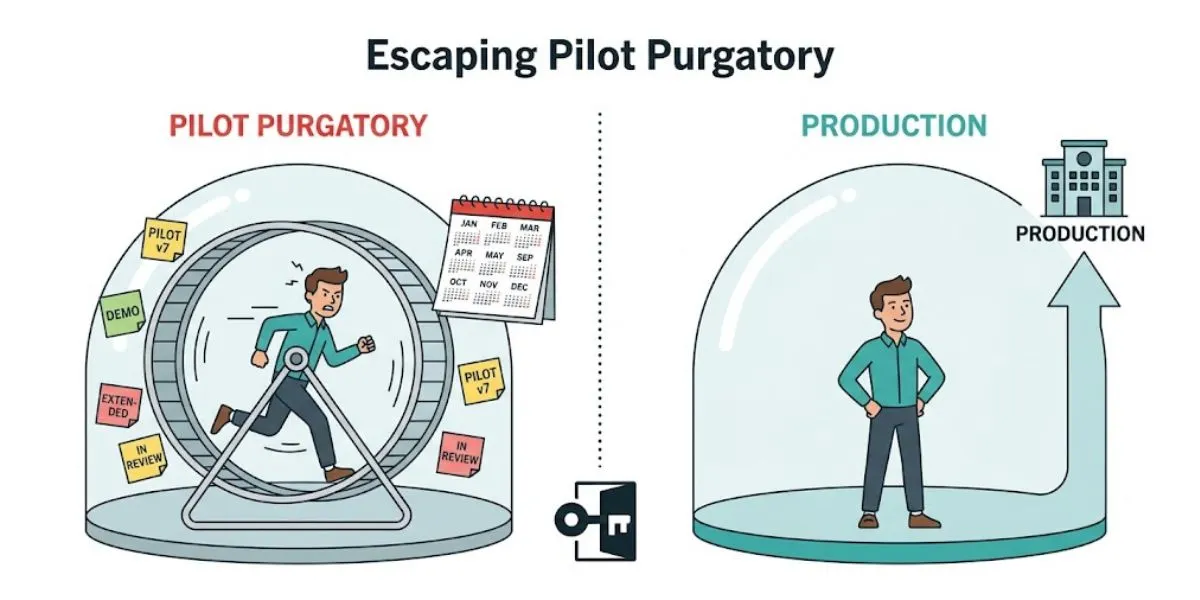

78% of enterprises have AI pilots running. Only 14% have successfully scaled to production. That 64-point gap -- between experimentation and operational deployment -- is the most expensive condition in enterprise AI. Not because the pilots cost money. Because they consume organisational attention, engineering capacity, and executive credibility without producing any of the value they promised.

This is Pilot Purgatory. And if you are reading this, you have almost certainly lived inside it.

Section 1 - What Pilot Purgatory Actually Is

Pilot purgatory is the space between 'it works in a sandbox' and 'it is running in production, delivering measurable value, and someone is accountable for its outcomes.'

The signs are consistent across organisations and industries. The same AI initiative has been in pilot for six months or more. Success criteria were never defined before the pilot launched. No kill criteria exist. The project owner changes every quarter. The board hears quarterly updates about 'AI pilots underway' -- but never hears about production deployments delivering measured value.

Your organisation is probably in pilot purgatory if any of the following are true:

• The same AI initiative has been described as 'in pilot' for more than 6 months

• You have never killed an AI pilot -- every one has either succeeded or been extended

• Success criteria for your current pilots were defined after they launched, not before

• No named individual has production deployment as a personal performance metric

• Your shared drive contains files titled something like 'Q2_AI_PILOT_v7_FINAL_USE_THIS'

• The board hears about AI activity, but not about AI value delivered

The critical insight is this: purgatory is not a technology problem. The demo worked. The model performed. The accuracy metrics were impressive. The failure is always organisational -- wrong use case selected, no named owner, no kill criteria, no production readiness definition, and no governance infrastructure capable of moving from sandbox to production.

The Enterprise AI Transformation Playbook

The 5-phase framework for moving from pilot to production is covered in full in The Enterprise AI Transformation Playbook.

Section 2 - The Numbers That Frame the Problem

These numbers come from multiple 2026 research sources. They are not industry-specific -- they appear consistently across sectors.

• 78% of enterprises have AI agent pilots running. Only 14% have successfully scaled to production. (March 2026 survey, 650 enterprise technology leaders)

• 88% of AI pilots never reach production. This is not a technology failure rate -- it is an organisational and governance failure rate. (Gartner, multiple 2026 sources)

• 56% of CEOs report no financial impact from AI investment despite broad adoption. (PwC 2026 Global CEO Survey)

• Deloitte's State of AI 2026 documents 'pilot fatigue' -- leadership losing confidence in AI not because of one failure, but because of repeated pilots that never ship. The credibility cost compounds with every stranded initiative.

• The global AI market is on track to hit $1.8 trillion by 2026. Forrester estimates 25% of planned AI spend will be deferred into 2027 unless organisations can demonstrate ROI -- which requires production deployments, not pilots.

Why These Numbers Matter More Than Model Performance

The most common mistake in enterprise AI investment is optimising for the wrong variable. Organisations spend enormous energy on model selection, vendor evaluation, and technology architecture -- the components that determine 30% of AI transformation outcomes. They underinvest in the organisational components that determine the other 70%: use case selection, governance, ownership accountability, and change management.

BCG's 10-20-70 principle states it directly: 10% of AI success is determined by algorithms. 20% by data and technology. 70% by people, processes, and cultural transformation. Leaders who focus on the technical 30% are structurally under-investing in the layer that determines whether a demo ever becomes a product.

Enterprise AI Readiness Checklist

Before reading further, you may want to benchmark your organisation's current readiness using the Enterprise AI Readiness Checklist -- 25 questions across 5 dimensions, free download.

Section 3 - The Five Root Causes of Scaling Failure

A March 2026 survey of 650 enterprise technology leaders identified five root causes that account for 89% of AI scaling failures. They are interrelated -- fixing one without addressing the others produces partial improvement at best.

Root Cause 1: Integration complexity with legacy systems

The pilot uses clean, curated data. Production requires integration with legacy systems that were not part of the pilot design -- and nobody scoped them. Integration requirements are treated as a post-pilot problem rather than a pilot design constraint. By the time production integration is attempted, the use case is already over budget and behind schedule.

Root Cause 2: Inconsistent output quality at volume

High accuracy in the sandbox. Degraded accuracy at production volume with real-world edge cases, incomplete inputs, and adversarial data. Pilot success criteria measure accuracy in controlled conditions, not resilience under production conditions. The model that performed at 94% accuracy on curated test data may perform at 71% accuracy on the messy, incomplete, real-world data that production users actually generate.
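A simple way to surface this gap before go-live is to score the same model on the curated pilot set and on a sample of real production inputs, then treat the size of the drop as a pass/fail signal in its own right. The sketch below is illustrative only: the `max_gap` tolerance of 5 percentage points is an assumed value, not an industry standard, and the 94% and 71% figures are the hypothetical ones from the paragraph above.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], labels: Sequence[str]) -> float:
    """Fraction of predictions that exactly match the ground-truth labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and of equal length")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def production_gap(curated_acc: float, production_acc: float,
                   max_gap: float = 0.05) -> tuple[float, bool]:
    """Accuracy drop from curated to production data, and whether it is within tolerance.

    max_gap is an assumed tolerance (5 percentage points), chosen for illustration.
    """
    gap = curated_acc - production_acc
    return gap, gap <= max_gap


# Hypothetical figures from the text: 94% on curated data, 71% on production data.
gap, acceptable = production_gap(0.94, 0.71)
print(f"gap={gap:.2f}, acceptable={acceptable}")  # gap=0.23, acceptable=False
```

In practice `accuracy` would be run twice, once per data set, and the two results fed into `production_gap`; the point is that the pilot's success criteria include the gap, not just the sandbox number.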

Root Cause 3: Absence of monitoring tooling

No drift detection. No accuracy monitoring. No alerting. AI behaviour degrades progressively and nobody knows until a business failure surfaces it. Monitoring is assumed to be someone else's responsibility -- and is usually discovered to belong to nobody. An AI system without monitoring is not a production system; it is a sandbox that happens to have real users.
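As a minimal illustration of what "drift detection" can mean in practice, the sketch below computes the Population Stability Index (PSI), one common way to compare the distribution of a model input or score in production against the distribution seen during the pilot. The bin edges, the 0.2 alert threshold, and the `send_alert` stub are assumptions for illustration; a real deployment would route alerts into its own incident response process.

```python
import math
from bisect import bisect_right
from typing import Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Share of values in each bin; `edges` are sorted interior bin boundaries."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Floor each share so an empty bin does not cause log(0) or division by zero.
    return [max(c / len(values), 1e-6) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float], edges: Sequence[float]) -> float:
    """Population Stability Index of `current` relative to `baseline`."""
    b = bin_fractions(baseline, edges)
    c = bin_fractions(current, edges)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def send_alert(message: str) -> None:
    """Hypothetical alert hook; in practice this would page an owner or open an incident."""
    print("ALERT:", message)


def check_drift(baseline: Sequence[float], current: Sequence[float],
                edges: Sequence[float], threshold: float = 0.2) -> float:
    """Compute PSI and raise an alert when it exceeds the (assumed) threshold."""
    score = psi(baseline, current, edges)
    if score > threshold:
        send_alert(f"input drift detected: PSI={score:.2f} exceeds {threshold}")
    return score


edges = [0.25, 0.5, 0.75]
pilot_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]          # distribution seen in the pilot
production_scores = [0.6, 0.7, 0.8, 0.85, 0.9, 0.9, 0.95, 0.99]  # skewed production traffic
check_drift(pilot_scores, production_scores, edges)
```

A PSI near zero means the two distributions match; 0.2 is a commonly cited rule of thumb for a meaningful shift, and teams typically tune the threshold per input.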

Root Cause 4: Unclear organisational ownership

Five executive sponsors. Zero accountable owners. No named person whose performance metric includes the production outcome. Enthusiasm is shared; accountability is not -- and nobody wants to own a system they did not build. Without a named owner who has both the authority and the performance incentive to drive the use case to production, deployment stalls at every cross-functional friction point.

Root Cause 5: Insufficient domain training data

The model performs adequately on generic data but cannot handle domain-specific edge cases at production volume. Domain data requirements are discovered during production deployment, not during pilot scoping. The right question is not 'does this model work?' -- it is 'does this model work on our data, in our domain, at our production volume?'

Section 4 - The Pilot Trap Cycle: Why Smart Organisations Keep Making the Same Mistake

Pilot purgatory persists not because organisations are naive -- most leadership teams have read the research and know the statistics. It persists because the structural incentives consistently produce pilot-optimised behaviour rather than production-optimised behaviour.

The pilot trap cycle looks like this:

1. A new AI use case is proposed. The business case is compelling. The demo is impressive. Executive enthusiasm is high.

2. A pilot is approved and funded. Success is measured by demo quality, model accuracy, and stakeholder excitement -- not by production readiness criteria.

3. The pilot runs. Results are promising. But production integration has not been scoped. There is no named deployment owner. The success criteria are vague.

4. The pilot is extended. Then extended again. The team is not failing -- they are succeeding at piloting, which is a different activity from deploying.

5. Before the pilot reaches production, a new AI use case is proposed. The business case is compelling. The cycle begins again.

6. The organisation accumulates a portfolio of 'promising pilots' and zero production deployments. The board is told AI is 'progressing'. Executive credibility begins to erode.

The trap is reinforced by how success is measured. Pilots that run cleanly and produce impressive demos are celebrated. Production deployments that require hard governance decisions, difficult integration work, and messy real-world data are less telegenic -- even when they deliver more actual value. The organisation unconsciously learns to optimise for pilot quality rather than production outcomes.

Section 5 - The Best First Use Case Is Not the Most Impressive One

The most consistent finding from enterprise AI transformation research is that the best first AI use case is not the most strategically significant one -- it is the most deployable one at meaningful impact. These are almost never the same use case.

Consider a hypothetical pharmaceutical company that launched four AI pilots simultaneously: drug discovery acceleration, clinical trial optimisation, predictive maintenance, and talent matching. All four were strategically legitimate. All four had genuine business cases. None was the right first use case.

The right first use case was claims processing automation. Moderate impact -- not revolutionary. Data available and clean. Proven approach with documented implementations elsewhere. Deployable in 4 months. Not a showpiece. But deployable.

The Claims Processing Principle

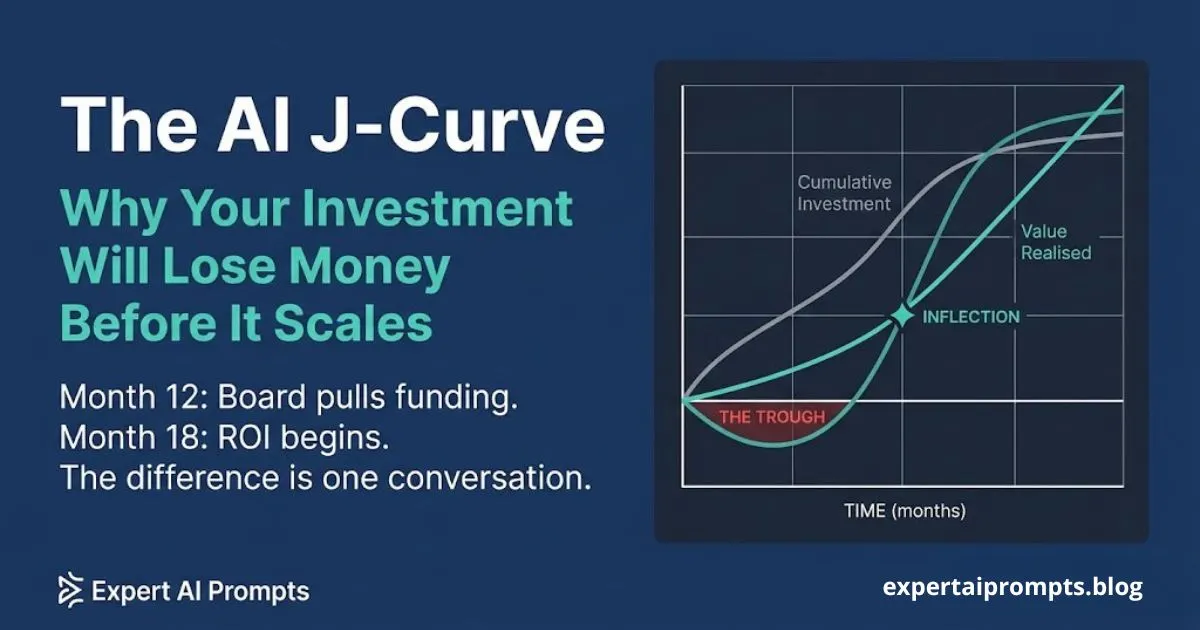

A use case projected to deliver $50 million of value over three years produces zero actual ROI, and significant credibility damage, if it stalls in a failed 18-month pilot. A use case that delivers $500,000 in four months builds the organisational infrastructure -- shared data pipelines, governance standards, monitoring tooling, change management capability -- that makes the $50 million initiative achievable in Year 2.

The sequencing is the strategy. The first use case is not chosen for its own impact. It is chosen for what it proves about the organisation's ability to deploy -- and what shared infrastructure it creates for every use case that follows.

The six dimensions that determine whether a use case should be first in the portfolio: business impact potential, data readiness, technical feasibility, organisational readiness, strategic alignment, and speed to value. A use case that scores highly on all six dimensions is exceptional. A use case that scores 3 or lower on data readiness or technical feasibility is not a first use case regardless of its impact potential -- because it will not deploy.
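The selection rule above can be expressed as a hard gate followed by a ranking. The sketch assumes each dimension is scored on a 1-5 scale and that use cases passing the gate are ranked by their unweighted average; both are assumptions for illustration, not the weighting used in any particular prioritisation matrix. The use case names echo the hypothetical pharmaceutical example, and all scores are invented.

```python
from dataclasses import dataclass

DIMENSIONS = (
    "business_impact",
    "data_readiness",
    "technical_feasibility",
    "organisational_readiness",
    "strategic_alignment",
    "speed_to_value",
)
# A score of 3 or lower on either of these disqualifies a use case from going first.
GATED = ("data_readiness", "technical_feasibility")


@dataclass
class UseCase:
    name: str
    scores: dict[str, int]  # assumed 1-5 scale per dimension

    def eligible_as_first(self) -> bool:
        return all(self.scores[d] > 3 for d in GATED)

    def average(self) -> float:
        return sum(self.scores[d] for d in DIMENSIONS) / len(DIMENSIONS)


def rank_first_candidates(candidates: list[UseCase]) -> list[UseCase]:
    """Drop gated-out use cases, then rank the rest by average score, highest first."""
    eligible = [uc for uc in candidates if uc.eligible_as_first()]
    return sorted(eligible, key=lambda uc: uc.average(), reverse=True)


portfolio = [  # hypothetical scores
    UseCase("drug_discovery", dict(business_impact=5, data_readiness=2, technical_feasibility=2,
                                   organisational_readiness=3, strategic_alignment=5, speed_to_value=1)),
    UseCase("claims_processing", dict(business_impact=3, data_readiness=5, technical_feasibility=5,
                                      organisational_readiness=4, strategic_alignment=3, speed_to_value=5)),
    UseCase("talent_matching", dict(business_impact=2, data_readiness=4, technical_feasibility=4,
                                    organisational_readiness=3, strategic_alignment=2, speed_to_value=4)),
]
print([uc.name for uc in rank_first_candidates(portfolio)])  # ['claims_processing', 'talent_matching']
```

The gate runs before the ranking on purpose: a high-impact use case cannot average its way past low data readiness.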

Section 6 - The Four Conditions for Production Readiness

Before any AI use case is approved to move from pilot to production, four conditions must be verified. Not aspirational. Not in progress. Verified.

1. Named owner with production accountability. A specific individual -- not a team, not a function, not a committee -- whose performance metric includes the production outcome. The Success Contract (see Section 7) is signed by this person before the engineering sprint begins.

2. Defined success threshold, measured from a pre-AI baseline. Not 'improved accuracy' or 'faster processing time', but a specific, auditable metric: this use case will reduce processing time from X to Y for Z workflows per month. The pre-AI baseline measurement is taken before the pilot begins, not after. Without the baseline, the improvement cannot be validated.

3. Integration with production systems confirmed. Not designed. Not planned. Confirmed -- with the integration architecture agreed and the legacy system owner committed to the timeline. Integration complexity with legacy systems is the first of the five root causes in Section 3; it should be surfaced during pilot design, not discovered in production.

4. Monitoring tooling operational before deployment. Drift detection, accuracy monitoring, alerting, and an incident response process. Not configured. Operational. The governance framework determines the monitoring standards -- the use case cannot deploy without meeting them.
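Because all four conditions must be verified rather than "in progress", the gate can be modelled as an all-or-nothing check that also reports which conditions are still open. The field names and the three-state status below are hypothetical; the point of the sketch is that anything short of `VERIFIED` blocks deployment.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PLANNED = "planned"
    IN_PROGRESS = "in progress"
    VERIFIED = "verified"


@dataclass
class ReadinessReview:
    named_owner: Status
    success_threshold_with_baseline: Status
    production_integration: Status
    monitoring_operational: Status

    def blockers(self) -> list[str]:
        """Names of conditions that are not yet verified, in declaration order."""
        return [name for name, status in vars(self).items() if status is not Status.VERIFIED]

    def approved_for_production(self) -> bool:
        return not self.blockers()


review = ReadinessReview(
    named_owner=Status.VERIFIED,
    success_threshold_with_baseline=Status.VERIFIED,
    production_integration=Status.IN_PROGRESS,  # designed and planned, but not confirmed
    monitoring_operational=Status.VERIFIED,
)
print(review.approved_for_production(), review.blockers())  # False ['production_integration']
```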

Enterprise AI Governance Framework

The Enterprise AI Governance Framework covers the complete compliance and governance requirements for production deployment, including EU AI Act risk classification.

Section 7 - The Success Contract: Making Exit Criteria Non-Negotiable

The Success Contract is the single document that most reliably separates organisations that escape pilot purgatory from those that stay in it. It is signed before the engineering sprint begins. It defines five elements that must be documented, agreed, and signed off before any pilot advances to production:

1. The specific business metric the use case will improve

2. The pre-AI baseline measurement for that metric (taken before any AI is introduced)

3. The improvement threshold required to pass to production -- the exact number, not a range

4. The measurement timeline -- how many production user-days of data must accumulate before the gate decision is made

5. The named decision authority -- the individual who makes the pass/fail decision against the Success Contract criteria, with the authority to kill the use case if the threshold is not met

The gate decision is binary. Either the use case meets the threshold within the defined measurement timeline, or it is killed. There is no third option of 'extended pending further review.' Extension is how pilot purgatory perpetuates itself. The Success Contract exists precisely to remove the extension option from the decision table.
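The five elements and the binary gate can be captured in a small record whose decision function has exactly two possible outcomes. The sketch assumes a metric where higher is better (such as throughput) and expresses the threshold as a ratio to the pre-AI baseline; the field names, the metric, the role, and all figures are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PASS = "pass to production"
    KILL = "kill"
    # Deliberately no EXTEND member: the contract removes that option from the decision table.


@dataclass(frozen=True)
class SuccessContract:
    metric: str              # 1. the business metric the use case will improve
    baseline: float          # 2. pre-AI baseline, measured before any AI is introduced
    required_ratio: float    # 3. exact improvement threshold (2.0 = twice the baseline)
    required_user_days: int  # 4. production user-days needed before the gate decision
    decision_authority: str  # 5. the named individual who makes the call


def gate_decision(contract: SuccessContract, observed: float, user_days: int) -> Decision:
    """Binary gate: pass only if the observed improvement meets the contracted ratio."""
    if user_days < contract.required_user_days:
        raise ValueError("measurement window not complete; the gate decision is not due yet")
    improvement = observed / contract.baseline
    return Decision.PASS if improvement >= contract.required_ratio else Decision.KILL


contract = SuccessContract(
    metric="claims processed per analyst per day",  # hypothetical metric
    baseline=40.0,
    required_ratio=2.0,
    required_user_days=10,
    decision_authority="Head of Claims Operations",  # hypothetical role
)
print(gate_decision(contract, observed=92.0, user_days=10))  # Decision.PASS
print(gate_decision(contract, observed=60.0, user_days=10))  # Decision.KILL
```

An observed value of 92 against a baseline of 40 is a 2.3x improvement, so it clears the 2.0x threshold; 60 is 1.5x, so the use case is killed rather than extended.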

The Success Contract is not a bureaucratic formality. It is the governance infrastructure that makes deployment decisions credible. A board briefing that cites 'actual productivity improvement of 2.3x versus the pre-AI baseline, measured over 10 production user-days' earns trust for the next investment proposal. A briefing that says 'the pilot is showing promising results' does not.

Download the AI Governance Framework Template

The AI Governance Framework Template includes a ready-to-use Success Contract template in Section 5.

Section 8 - The Kill Decision: Why Ending a Pilot Is a Governance Win

The most counterintuitive principle in escaping pilot purgatory: killing a failing pilot with evidence and discipline demonstrates more AI governance maturity than extending it indefinitely.

A board that sees a Chief AI Officer (CAIO) kill a failing pilot because it missed the Success Contract threshold -- and immediately redirect the freed engineering capacity to the next use case in the prioritised pipeline -- trusts the next investment proposal more than a board that watches every pilot survive through infinite extension.

The kill decision requires three things that most organisations have not built:

• Pre-defined kill criteria (the Success Contract threshold) -- without these, there is no principled basis for a kill decision

• A named decision authority with the political capital to enforce the kill -- without this, the decision defers to whoever argues most loudly for extension

• A funded pipeline of prioritised next use cases -- without this, killing one pilot creates a vacuum that the organisation has no immediate way to fill, making extension feel like the responsible choice

The organisations that consistently escape pilot purgatory have all three. They have a defined process for killing use cases that do not meet production thresholds. They have a named individual with the authority and the incentive to enforce it. And they have a pipeline of scored, sequenced, ready-to-fund use cases that makes every kill decision feel like an acceleration rather than a failure.
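The third requirement, a funded pipeline of prioritised next use cases, is what turns a kill into a reallocation. A minimal sketch, assuming a priority queue of already-scored use cases and freed capacity measured in engineer-weeks; the class, function, use case names, scores, and capacity units are all hypothetical.

```python
import heapq
from typing import Optional


class Pipeline:
    """Prioritised, funded queue of next use cases (hypothetical structure)."""

    def __init__(self) -> None:
        self._heap: list[tuple[float, str]] = []

    def add(self, name: str, priority_score: float) -> None:
        # heapq is a min-heap, so store the negated score to pop the highest priority first.
        heapq.heappush(self._heap, (-priority_score, name))

    def next_use_case(self) -> Optional[str]:
        return heapq.heappop(self._heap)[1] if self._heap else None


def kill_and_redirect(pilot: str, freed_capacity_weeks: int, pipeline: Pipeline) -> str:
    """Record the kill and hand the freed engineering capacity to the next prioritised use case."""
    successor = pipeline.next_use_case()
    if successor is None:
        # With no pipeline, the kill leaves a vacuum, which is what makes extension feel safer.
        return f"{pilot} killed; {freed_capacity_weeks} engineer-weeks unallocated"
    return f"{pilot} killed; {freed_capacity_weeks} engineer-weeks redirected to {successor}"


pipeline = Pipeline()
pipeline.add("invoice_matching", 4.2)
pipeline.add("contract_summarisation", 3.6)
print(kill_and_redirect("talent_matching", 12, pipeline))
# talent_matching killed; 12 engineer-weeks redirected to invoice_matching
```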

The kill is not the end of the story. It is the governance infrastructure working as designed.

Closing - What Comes Next

Escaping pilot purgatory is not a technology challenge. It is a governance and sequencing challenge. The organisations that make it to production consistently are not the ones with the best models -- they are the ones that selected the right first use case, defined success criteria before they built anything, named a single accountable owner, and made the kill decision when the evidence required it.

If you are building or scaling an enterprise AI programme in 2026, the following resources are your next steps:

• Enterprise AI Transformation Playbook -- the 5-phase framework, covering Phase 3 (Pilot and Prove) in full detail.

• AI Transformation Roadmap 2026 -- the 90-day onboarding framework, including the Day 60 Success Contract gate.

• AI Centre of Excellence -- the CoE structure that builds the shared infrastructure making every subsequent use case cheaper to deploy.

And if you want to know where your organisation stands before committing to the next phase, take the AI Maturity Assessment: 5 questions, instant result, no email required. It will tell you exactly which dimension of readiness your organisation needs to address before your next pilot is approved.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer in IT and engineering at CQUniversity. The framework in this article is derived from the AI Use Case Prioritisation Matrix -- the same methodology applied in the Expert AI Prompts live platform: 30 industries, 1,500+ domain-specific prompts, 15 AI workflow systems, near-zero daily operations.