Enterprise AI Platform Architecture: The Six-Layer Decision Framework Every CAIO Needs in 2026

The enterprise AI platform architecture decision is not made once. It is made six times -- once at each layer of the AI stack. The organisation that treats it as a single choice produces an architecture that is either too rigid to adapt or too fragmented to govern. The organisation that makes it six times, correctly, with a consistent design principle threading through each layer, produces an architecture that compounds rather than accumulates debt.

The six layers are not equal in strategic importance, not equal in how the buy vs build decision lands, and not equal in how much damage the wrong decision causes at each level. Understanding where the enterprise consensus has settled by 2026 -- at each layer, not as a generalisation across all of them -- is the starting point for any AI architecture decision.

This article maps each layer, identifies the 2026 enterprise consensus position and its rationale, explains the model-agnostic design principle that must thread through layers 4, 5, and 6, and covers MCP -- the open standard that changed the orchestration layer decision in 2025 and whose implications are still working through most enterprise AI architectures.

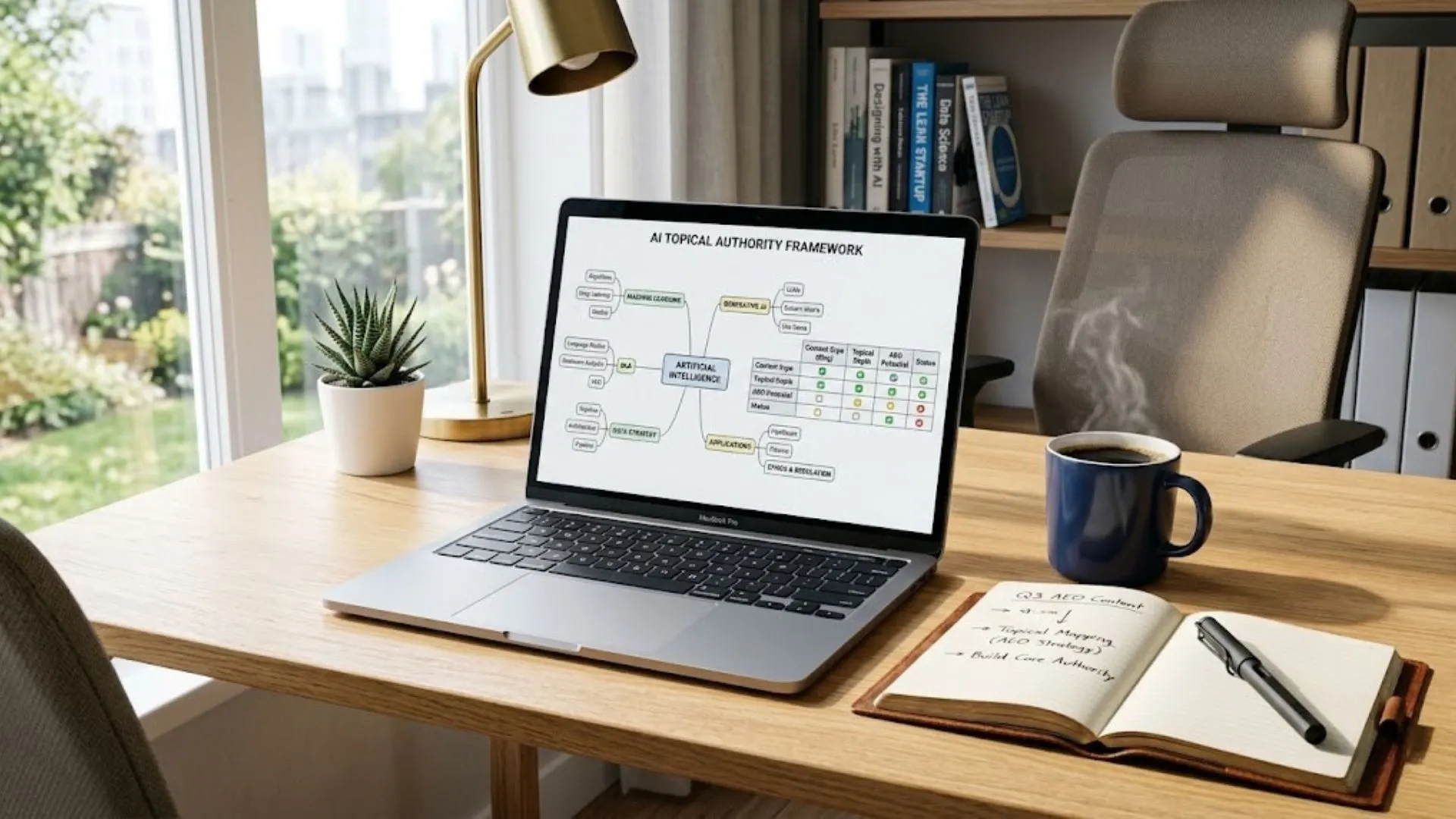

AI Buy vs Build Decision Framework

The architecture decision follows from the investment decision. The AI Buy vs Build Decision Framework is the structured process for making the investment decision at each layer before architecture design begins.

Section 1 - The Six-Layer Enterprise AI Stack: Why Each Layer Requires a Separate Decision

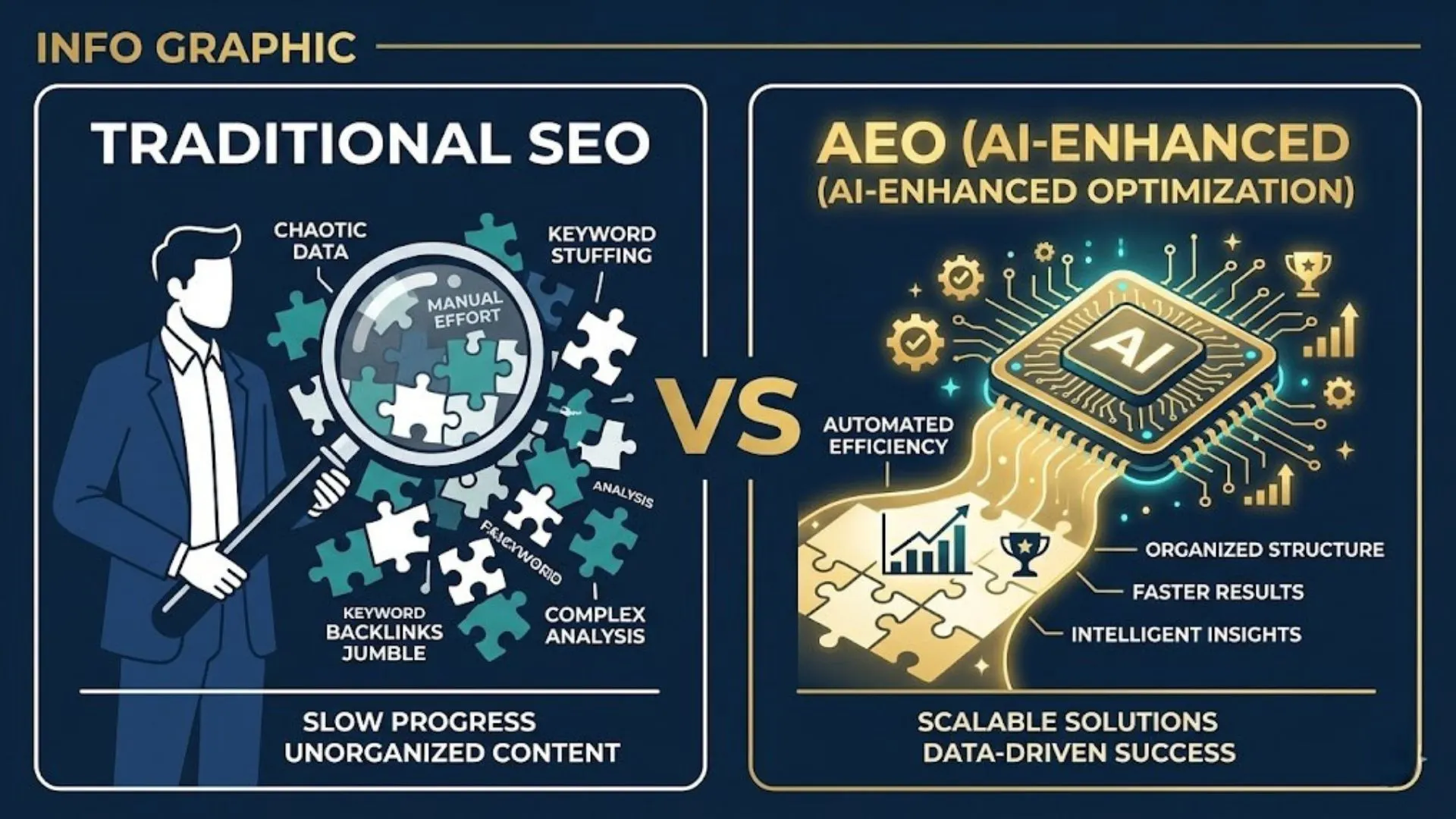

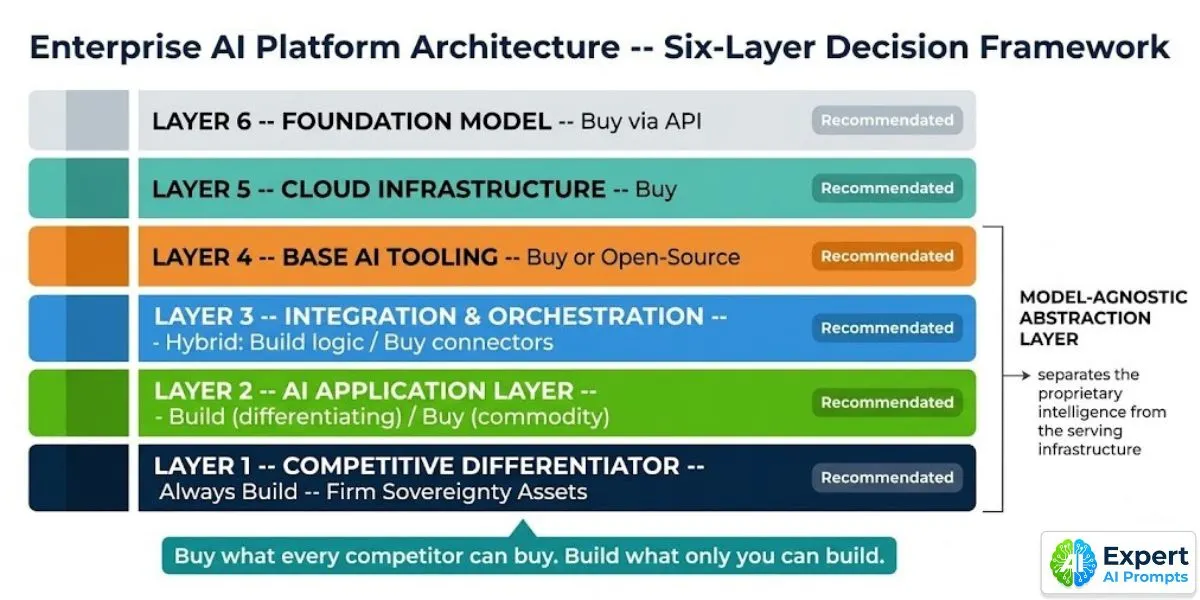

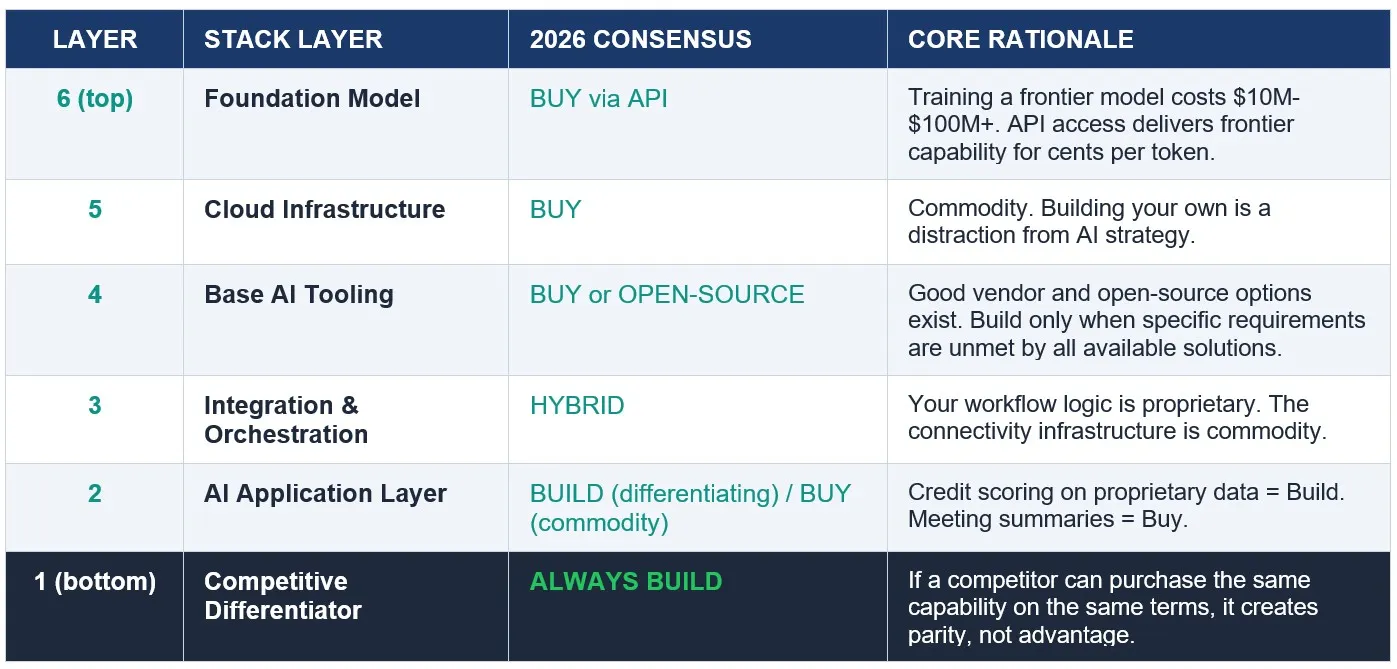

The most common AI architecture error is applying a single buy-or-build logic to the entire stack. The logic that correctly governs the foundation model layer (always buy) produces the wrong answer at the competitive differentiator layer (always build). The logic that correctly governs the cloud infrastructure layer (commodity, always buy) produces the wrong answer at the orchestration layer (hybrid -- the business logic is yours, the connectors are commodity).

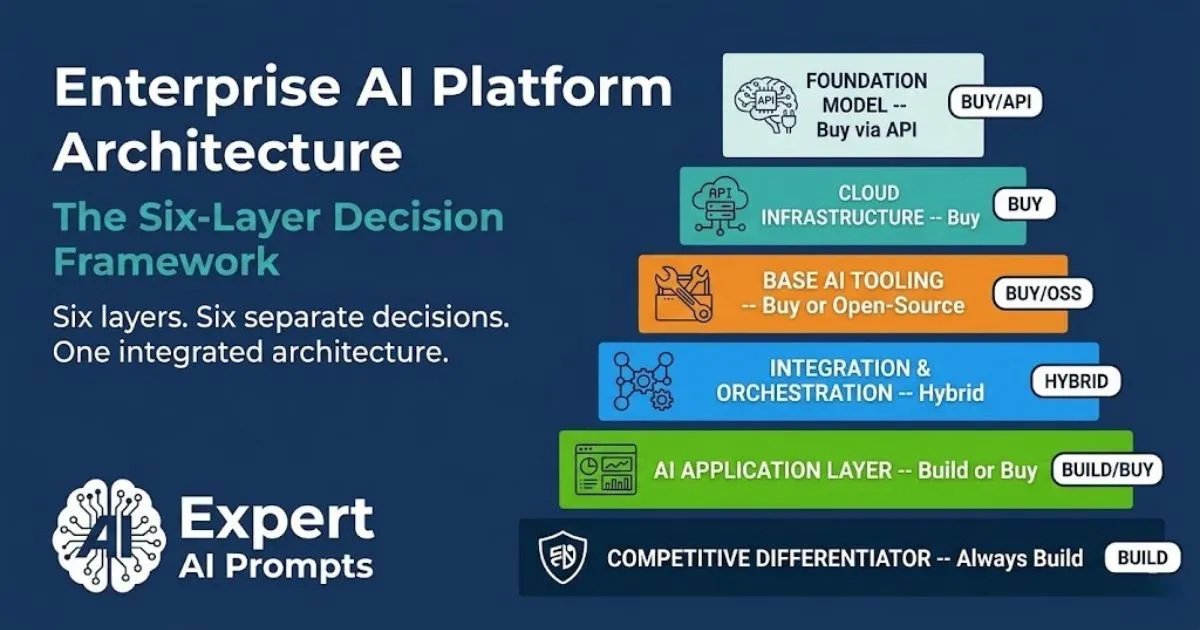

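The six layers and their consensus positions in 2026:

• Layer 1 - Foundation Model: buy via API.

• Layer 2 - Cloud Infrastructure: buy (commodity).

• Layer 3 - Base AI Tooling: buy or adopt open source first.

• Layer 4 - Integration and Orchestration: hybrid (build the workflow logic, buy the connectors).

• Layer 5 - AI Application Layer: buy commodity use cases, build differentiating ones.

• Layer 6 - Competitive Differentiator: always build.

The stack reads top to bottom: commodity at the top, proprietary at the bottom. The architecture principle that holds across all six layers: buy what every competitor can buy. Build what only you can build.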

Section 2 - Layer 1: Foundation Model -- Buy via API. No Exceptions for Most Enterprises.

The foundation model layer is the clearest decision in the enterprise AI stack. Training a frontier model from scratch costs $10M-$100M+ and requires dedicated research infrastructure and talent that essentially no enterprise outside the hyperscalers will ever have. The correct enterprise position is to access frontier model capability via API, apply governance controls to that access, and focus differentiation investment on the layers below.

The decision that remains at the foundation model layer is not build-or-buy. It is which provider to access and on what commercial terms. The organisation that has made a model-agnostic architecture investment (covered in Section 8) has preserved the ability to switch providers when the market moves -- which it does, reliably, every 12-18 months.

The Model Selection Decision: Criteria That Actually Matter

• Performance on your specific task distribution, not on benchmark scores. Evaluate candidate models against a dataset that represents your actual production workload -- not the vendor's published benchmarks.

• Data residency and processing location. For EU or AU regulated workloads, confirm that inference processing can be contractually restricted to specific geographic zones.

• API stability and version pinning. Production systems break when model behaviour changes silently on update. Require contractual model version pinning, or a deprecation notice period of at least 6 months.

• Pricing at production volume. Evaluate pricing at 5x, 10x, and 50x current usage volume, not at pilot volume. The model that is cost-efficient at pilot often is not at scale.

• Commercial terms for fine-tuning. Who owns the fine-tuned weights produced by training on your proprietary data? This must be contractually specified before fine-tuning begins.
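The "pricing at production volume" criterion above can be sketched as a small projection. This is a minimal illustration, not a pricing tool: the two models, their prices, the fixed monthly commitment, and the pilot token volumes are all invented figures, and real vendor pricing usually has more tiers than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Hypothetical price card for one candidate model (all figures assumed)."""
    name: str
    monthly_commitment: float  # fixed USD fee per month, 0 if pay-as-you-go
    input_per_mtok: float      # USD per 1M input tokens
    output_per_mtok: float     # USD per 1M output tokens


def monthly_cost(p: ModelPricing, input_tokens: int, output_tokens: int) -> float:
    """Monthly spend in USD for the given token volumes."""
    variable = (input_tokens / 1_000_000) * p.input_per_mtok
    variable += (output_tokens / 1_000_000) * p.output_per_mtok
    return p.monthly_commitment + variable


def cheapest_by_multiple(models, pilot_in, pilot_out, multiples=(1, 5, 10, 50)):
    """For each volume multiple, return the name of the cheapest model."""
    result = {}
    for m in multiples:
        costs = {p.name: monthly_cost(p, pilot_in * m, pilot_out * m) for p in models}
        result[m] = min(costs, key=costs.get)
    return result


# Illustrative candidates: pay-as-you-go vs. committed-spend pricing.
model_a = ModelPricing("model-a", 0.0, 3.0, 15.0)
model_b = ModelPricing("model-b", 2000.0, 0.5, 2.0)

# Assumed pilot volume: 20M input tokens and 5M output tokens per month.
ranking = cheapest_by_multiple([model_a, model_b], 20_000_000, 5_000_000)
print(ranking)
```

With these assumed figures the pay-as-you-go model is cheapest at pilot volume and the committed-spend model is cheapest at 50x, which is the pattern the criterion warns about.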

Section 3 - Layer 2: Cloud Infrastructure -- Commodity by Design

Cloud infrastructure for AI workloads -- GPU compute instances, vector databases, blob storage for model artefacts, serving infrastructure, networking -- is commodity in 2026. AWS, Azure, and Google Cloud all provide it. The correct enterprise position is to purchase it from the provider that already serves the majority of the organisation's non-AI workloads, to simplify data residency management, security controls, and commercial negotiation.

The only exception to this consensus is organisations whose inference volumes are large enough that running dedicated GPU clusters is commercially viable. GPU inference cost continues to decrease at approximately 50% per year, so the economics of dedicated infrastructure can look favourable at the point of purchase and then deteriorate as cloud pricing adjusts. Most enterprise AI programmes never approach the inflection point and do not need to make this calculation.
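The arithmetic behind that claim can be sketched as follows. This is a deliberately simplified comparison under stated assumptions: cloud cost for a fixed workload falls by the article's approximate 50% each year, the dedicated cluster has a one-off capital cost plus a flat annual operating cost, and every dollar figure is invented for illustration.

```python
def cumulative_cloud_cost(first_year_cost: float, years: int,
                          annual_price_decline: float = 0.5) -> float:
    """Total cloud spend over `years` for a fixed workload whose unit price
    falls by `annual_price_decline` each year (assumed constant rate)."""
    total = 0.0
    yearly = first_year_cost
    for _ in range(years):
        total += yearly
        yearly *= 1 - annual_price_decline
    return total


def cumulative_dedicated_cost(capex: float, annual_opex: float, years: int) -> float:
    """Total cost of owning a cluster: one-off purchase plus flat running cost."""
    return capex + annual_opex * years


# Hypothetical figures: $1M first-year cloud bill vs. a $1.5M cluster
# that costs $300k per year to power, house, and staff.
cloud = cumulative_cloud_cost(1_000_000, years=3)
dedicated = cumulative_dedicated_cost(1_500_000, 300_000, years=3)
print(f"cloud: ${cloud:,.0f}  dedicated: ${dedicated:,.0f}")
```

Under a constant 50% annual decline, total cloud spend for a fixed workload never exceeds twice the first-year bill, so in this simplified model a dedicated cluster only wins if its lifetime cost is below that ceiling or if volume grows faster than prices fall.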

Cloud infrastructure is not the competitive advantage. The intelligence layer is. Invest in the layer that creates the advantage, not the layer that hosts it.

Section 4 - Layer 3: Base AI Tooling -- Buy or Open-Source First

Base AI tooling -- agent frameworks, embedding infrastructure, RAG pipeline components, observability and monitoring tooling, evaluation frameworks, model registry -- has a well-populated vendor and open-source ecosystem in 2026. The correct enterprise position is to purchase or adopt open-source solutions for all base tooling, and to build only when no available solution serves the specific requirement.

The build-versus-buy evaluation for base tooling differs from the application layer evaluation in one important respect: the build trigger is specific, unmet requirements -- not competitive differentiation. Building a custom RAG pipeline because the available open-source options require more configuration than the team prefers is not a valid build trigger. Building a custom evaluation framework because the specific evaluation criteria for your use case are not supported by any available tool may be.

When to Build Base Tooling Instead

Build base tooling only when all of the following are true: the specific requirement is not met by any available vendor or open-source solution after a structured evaluation; the requirement is unlikely to be met within the next 12 months as the ecosystem evolves; the build cost is demonstrably lower than the workaround cost over a 3-year horizon; and the built tooling will not become a maintenance burden that distracts engineering from application-layer differentiation.
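Because the rule is a strict conjunction, it can be written down as a checklist that fails as soon as one condition is false. The sketch below is illustrative only; the field names are hypothetical, and the cost figures would come from your own three-year estimates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BaseToolingCase:
    """One candidate build decision (field names are illustrative)."""
    unmet_after_structured_evaluation: bool   # no vendor or open-source fit found
    unlikely_to_be_met_within_12_months: bool
    build_cost_3yr: float                     # estimated USD over three years
    workaround_cost_3yr: float                # estimated USD over three years
    maintenance_burden_acceptable: bool       # will not crowd out application work


def should_build(case: BaseToolingCase) -> bool:
    """Return True only when all four build conditions hold."""
    return (
        case.unmet_after_structured_evaluation
        and case.unlikely_to_be_met_within_12_months
        and case.build_cost_3yr < case.workaround_cost_3yr
        and case.maintenance_burden_acceptable
    )


# A custom RAG pipeline built out of preference fails the first condition.
rag_pipeline = BaseToolingCase(False, False, 400_000, 150_000, False)

# A custom evaluation framework for unsupported criteria may pass all four.
eval_framework = BaseToolingCase(True, True, 250_000, 600_000, True)

print(should_build(rag_pipeline), should_build(eval_framework))
```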

The standard at which base tooling build decisions should be evaluated in 2026 is higher than it was in 2023. The tooling ecosystem has matured significantly. Most requirements that justified custom base tooling builds in 2023-24 are now served by available solutions. Re-evaluate every existing custom base tooling build against the current ecosystem before committing to its ongoing maintenance.

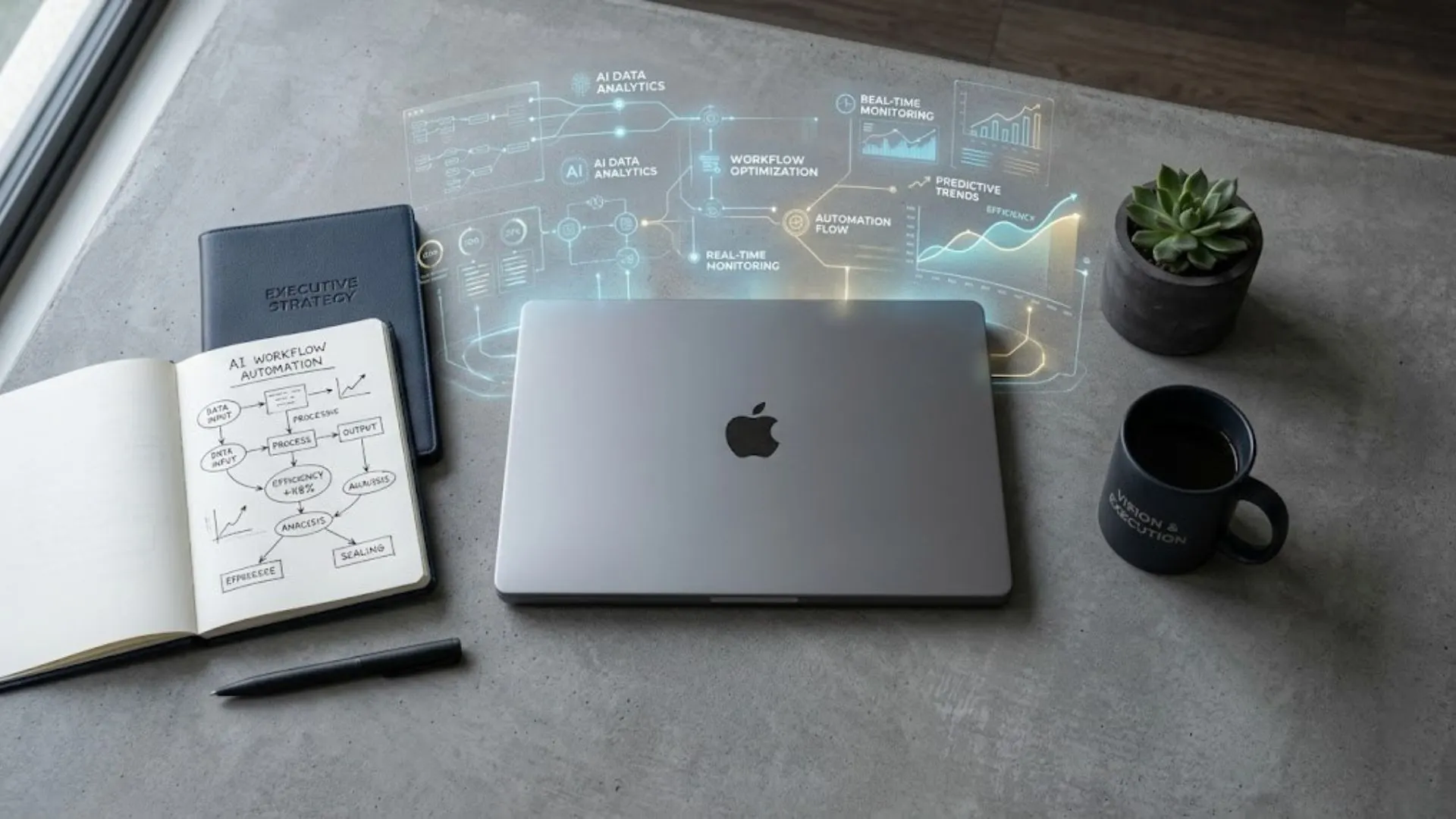

Section 5 - Layer 4: Integration and Orchestration -- The Hybrid Default

The integration and orchestration layer is where the enterprise AI architecture decision is most nuanced -- and where the most expensive mistakes are most common. The layer covers connecting AI systems to enterprise data sources, multi-agent workflow coordination, business process automation, tool access management, and context management across complex AI pipelines.

The correct enterprise position is Hybrid: build the workflow logic and business process encoding that is uniquely yours; purchase or adopt open-standard connectors for the infrastructure that connects AI to enterprise systems. The workflow logic is the encoded intelligence of your organisation's processes. The connectivity infrastructure -- the connectors, APIs, and tool interfaces -- is commodity that any competitor can purchase on the same terms.

What Belongs in the Build Layer vs the Buy Layer of Orchestration

Build (proprietary workflow logic): Business rules and decision logic encoded in AI workflows. Process-specific routing, escalation, and exception handling. Domain-specific context management. Organisation-specific agent behaviours. These encode how your organisation works -- they create competitive advantage when built correctly, and vendor dependency when outsourced.

Buy (commodity connectors): Standard enterprise system connectors (CRM, ERP, HRIS). MCP-compatible tool interfaces. Authentication and authorisation middleware. API gateway infrastructure. These are available from multiple vendors. Buying them is not creating a competitive moat -- it is acquiring the plumbing that allows the moat to function.
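One way to keep the two halves apart in code is to write the workflow logic against a narrow interface and let any purchased connector satisfy it. The sketch below assumes a hypothetical `CrmConnector` interface, hypothetical routing rules, and an in-memory stand-in for a vendor connector; none of these names come from a real product.

```python
from typing import Protocol


class CrmConnector(Protocol):
    """Buy layer: any vendor connector that can return an account record."""
    def fetch_account(self, account_id: str) -> dict: ...


def route_support_ticket(ticket: dict, crm: CrmConnector) -> str:
    """Build layer: organisation-specific routing and escalation rules.

    The rules below are invented examples of proprietary workflow logic.
    """
    account = crm.fetch_account(ticket["account_id"])
    if account.get("tier") == "enterprise" and ticket["severity"] >= 3:
        return "escalate_to_account_team"
    if ticket["severity"] >= 4:
        return "escalate_to_on_call"
    return "standard_queue"


class InMemoryCrm:
    """Stand-in for a purchased connector, used here only for illustration."""
    def __init__(self, accounts: dict):
        self._accounts = accounts

    def fetch_account(self, account_id: str) -> dict:
        return self._accounts[account_id]


crm = InMemoryCrm({"a1": {"tier": "enterprise"}, "a2": {"tier": "standard"}})
print(route_support_ticket({"account_id": "a1", "severity": 3}, crm))
print(route_support_ticket({"account_id": "a2", "severity": 2}, crm))
```

Swapping the connector vendor means replacing `InMemoryCrm` with another class that has the same method; the routing function does not change.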

Enterprise AI Governance Framework

The governance framework for the orchestration layer -- the approved tool register, the workflow documentation standard, and the change management process -- is covered in the Enterprise AI Governance Framework.

Section 6 - Layer 5: AI Application Layer -- The Decision That Varies Most

The AI application layer -- the specific AI product built for a defined use case -- is where the buy vs build decision produces the most varied outcomes across different organisations and use cases. Meeting summaries, email drafting assistants, customer support triage, document search, HR screening: these are commodity use cases where purchased solutions from multiple vendors will outperform anything a mid-size enterprise could build in the same time frame, at lower total cost of ownership.

Credit scoring models trained on 10 years of proprietary transaction data. Client recommendation engines that encode 20 years of relationship management knowledge. Document analysis tools trained on proprietary contract libraries. These are differentiating use cases where a purchased solution cannot produce the required behaviour -- because the required behaviour depends on data and institutional knowledge that only the organisation possesses.

The Commodity-or-Differentiator Test

Apply the commodity-or-differentiator test to every AI use case before committing to a build or buy path: Can a competitor purchase the same capability from the same vendor on the same terms within the next 12 months? If yes, it is a commodity use case -- buy, deploy quickly, and invest the engineering capacity you saved into the differentiating use cases below.

If no -- if the capability depends on your organisation's unique data, institutional knowledge, or process intelligence that cannot be replicated from vendor tooling -- it is a differentiating use case. The investment is in building a system that encodes something no one else can purchase. That investment is justified by the moat it creates. The commodity use case investment is justified only by operational efficiency, not by competitive advantage.

The AI Commodity Trap is the systematic error of investing Build-level resources in use cases that should be commodity Buy decisions -- and expecting strategic advantage from the result.
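The test and the trap can be expressed as two small functions applied to a portfolio of use cases. This is a sketch under stated assumptions: the single yes/no question is taken from the test above, while the class names, field names, and example use cases are illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    BUY = "buy"
    BUILD = "build"


@dataclass(frozen=True)
class UseCase:
    name: str
    # Can a competitor buy the same capability on the same terms within 12 months?
    competitor_can_buy_within_12_months: bool
    planned_path: Path


def recommended_path(uc: UseCase) -> Path:
    """Commodity use cases are bought; differentiating ones are built."""
    return Path.BUY if uc.competitor_can_buy_within_12_months else Path.BUILD


def is_commodity_trap(uc: UseCase) -> bool:
    """Build-level investment planned for a use case that should be bought."""
    return recommended_path(uc) is Path.BUY and uc.planned_path is Path.BUILD


portfolio = [
    UseCase("meeting summaries", True, Path.BUILD),
    UseCase("credit scoring on proprietary data", False, Path.BUILD),
    UseCase("email drafting assistant", True, Path.BUY),
]

traps = [uc.name for uc in portfolio if is_commodity_trap(uc)]
print(traps)
```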

Section 7 - Layer 6: Competitive Differentiator -- Always Build. No Exception.

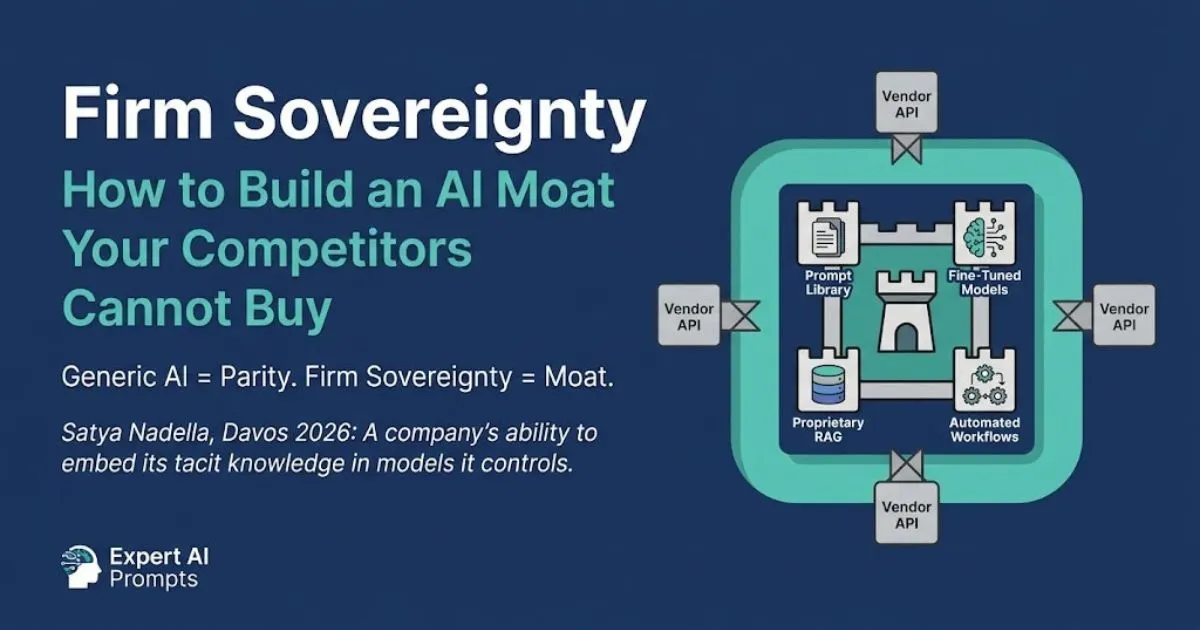

The competitive differentiator layer is the bottom of the stack -- the foundation of your AI architecture, not the commodity scaffolding above it. It contains the assets that encode your organisation's unique intelligence in a form that AI systems can access, apply, and compound over time: the governed proprietary prompt library, fine-tuned models trained on unique organisational data, RAG systems built on proprietary document estates, and automated workflows that encode institutional knowledge.

These assets cannot be purchased because they do not exist outside your organisation. A competitor can purchase the same foundation model API on the same terms tomorrow. They cannot purchase your 20 years of domain knowledge encoded in a governed prompt library. The competitive differentiator layer is what Satya Nadella described at Davos 2026 as Firm Sovereignty: a company's ability to embed its tacit knowledge in models it controls. You cannot buy Firm Sovereignty. You build it, one asset at a time, and it compounds.

The governed prompt library is the first Firm Sovereignty asset most organisations can build without significant engineering investment. It is version-controlled, domain-specific, access-governed, and CoE-owned. Each refinement improves every AI application that uses it. Start building it before the sector reset occurs.
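As a rough illustration of what "version-controlled, domain-specific, access-governed, and CoE-owned" could mean for a single library entry, consider the sketch below. The schema, role names, and template are hypothetical; a production library would sit in a repository or database with review workflows around it.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptEntry:
    """One governed prompt library entry (illustrative schema)."""
    prompt_id: str
    version: str                 # bumped on every reviewed change
    domain: str                  # e.g. "legal", "credit-risk"
    owner: str                   # the accountable team, e.g. the AI CoE
    allowed_roles: frozenset     # roles permitted to use this entry
    template: str                # default, model-agnostic wording
    provider_variants: dict = field(default_factory=dict)  # provider -> template


def resolve(entry: PromptEntry, role: str, provider: str, **values: str) -> str:
    """Enforce access, pick a provider-specific variant if one exists, and fill it in."""
    if role not in entry.allowed_roles:
        raise PermissionError(f"role {role!r} may not use {entry.prompt_id}")
    template = entry.provider_variants.get(provider, entry.template)
    return template.format(**values)


clause_review = PromptEntry(
    prompt_id="legal.clause-review",
    version="1.2.0",
    domain="legal",
    owner="ai-coe",
    allowed_roles=frozenset({"legal-analyst"}),
    template="Review this clause for liability risk: {clause}",
)

print(resolve(clause_review, "legal-analyst", "provider-a", clause="Section 4.2"))
```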

Firm Sovereignty: Building an AI Moat

The full architecture of Firm Sovereignty assets -- prompt library, fine-tuned models, RAG on proprietary data -- is in Firm Sovereignty: Building an AI Moat.

The process of building a governed, version-controlled, production-grade prompt library -- the first Firm Sovereignty asset -- is covered in Industrialising Prompts at Enterprise Scale.

Section 8 - Model-Agnostic Architecture: The Single Most Important Design Principle

Model-agnostic architecture is not a feature of a specific platform or a vendor capability. It is a design principle: the proprietary intelligence layer (governed prompt library, fine-tuned models, RAG on proprietary data, workflow logic) is separated from the model serving infrastructure by an abstraction layer. The abstraction layer handles model-specific API calls, output format normalisation, and failover routing -- so the intelligence layer can be served by multiple model providers without rebuilding it from scratch.

The competitive case for model-agnostic architecture is primarily about leverage, not flexibility. An organisation whose proprietary intelligence layer is tightly coupled to a single model provider's API has given that provider the right to reprice the relationship at renewal, because the switching cost is high and the vendor knows it. An organisation whose intelligence layer is model-agnostic retains the ability to move, which changes the pricing negotiation completely.

What Model-Agnostic Architecture Actually Requires

1. A stable internal interface for AI capabilities -- the calling system specifies what it needs (summarise, classify, generate, extract) and the abstraction layer routes to the appropriate model provider. The calling system is not aware of which model is serving the request.

2. Output format normalisation in the abstraction layer. Different models return different response formats. The normalisation layer translates all of them to the internal format that downstream systems expect, so a model change does not break downstream consumers.

3. Prompt library entries written to be model-agnostic where possible, with provider-specific variants maintained for capabilities that differ significantly between providers. Not all prompts can be made fully model-agnostic -- but the library should document which entries have provider dependencies.

4. Failover routing logic. When a provider API is unavailable, rate-limited, or behaviourally changed by an update, the abstraction layer routes to an alternative provider. This is both an availability benefit and a model update risk mitigation.

5. Governance tracking at the abstraction layer. Every AI request, its routing decision, its provider, and its output is logged for audit and drift detection, regardless of which provider served it.
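The requirements above can be sketched as a small gateway class: a stable `run(capability, prompt)` interface, one adapter per provider that normalises its own payload, ordered failover, and an audit log. Everything here is an assumption made for illustration. The provider names, payload shapes, and exception type are invented and do not correspond to any real vendor API.

```python
from dataclasses import dataclass
from typing import Callable


class ProviderUnavailable(Exception):
    """Raised by an adapter when its provider cannot serve the request."""


@dataclass(frozen=True)
class AIResponse:
    """Internal response format that downstream systems depend on."""
    text: str
    provider: str


@dataclass(frozen=True)
class ProviderAdapter:
    name: str
    call: Callable[[str, str], object]   # (capability, prompt) -> raw payload
    normalise: Callable[[object], str]   # raw payload -> plain text


class ModelGateway:
    """Routes capability requests to providers in priority order."""

    def __init__(self, adapters):
        self._adapters = list(adapters)
        self.audit_log = []  # one record per attempt, for audit and drift checks

    def run(self, capability: str, prompt: str) -> AIResponse:
        for adapter in self._adapters:
            record = {"capability": capability, "provider": adapter.name}
            try:
                raw = adapter.call(capability, prompt)
            except ProviderUnavailable as exc:
                self.audit_log.append({**record, "status": "failed", "error": str(exc)})
                continue  # fail over to the next provider
            text = adapter.normalise(raw)
            self.audit_log.append({**record, "status": "ok", "output": text})
            return AIResponse(text=text, provider=adapter.name)
        raise ProviderUnavailable("all configured providers failed")


def _primary(capability: str, prompt: str) -> object:
    raise ProviderUnavailable("rate limited")  # simulate an outage


def _secondary(capability: str, prompt: str) -> object:
    return {"choices": [{"text": f"[{capability}] {prompt}"}]}  # made-up payload


gateway = ModelGateway([
    ProviderAdapter("provider-a", _primary, lambda raw: raw["output"]),
    ProviderAdapter("provider-b", _secondary, lambda raw: raw["choices"][0]["text"]),
])
result = gateway.run("summarise", "Quarterly revenue rose 4%.")
print(result.provider, "->", result.text)
```

The calling code only ever sees `AIResponse`, so adding or removing a provider is a change to the adapter list rather than to downstream consumers.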

Model-agnostic architecture is the primary technical mitigation for model lock-in -- Layer 1 of the five AI vendor lock-in layers covered in Own vs Orchestrate: The 2026 Enterprise Guide to Avoiding AI Vendor Lock-In.

Section 9 - MCP and the Orchestration Standard Shift

Model Context Protocol (MCP) is the open standard for how AI agents connect to tools, data sources, and external systems. In early 2025 it was an Anthropic specification. By late 2025 it was under Linux Foundation stewardship through the Agentic AI Foundation. By mid-2026 it is the reference standard that enterprise AI architects use when evaluating orchestration platform choices.

The importance of MCP for enterprise AI architecture is specific: it changes the orchestration lock-in calculation. Before MCP, an enterprise AI programme built on a vendor's proprietary orchestration framework had workflow automation, tool integrations, and agent architecture that were fully coupled to that vendor. Migration was not just an API switch -- it was a complete rebuild of every tool connector and workflow definition. After MCP, an AI programme built on MCP-standard connectors can migrate workflow logic to a different execution platform without rebuilding every tool integration from scratch.

What MCP Changes -- and What It Does Not Change

What MCP changes: The tool-calling interface between AI agents and external systems. MCP-standard tool definitions are portable between MCP-compatible orchestration platforms. An AI agent's tool integrations -- the connections to databases, APIs, enterprise systems, and external services -- can be migrated to a different execution framework without rebuilding each connector individually.

What MCP does not change: The workflow logic encoded in your agent programmes. If the business rules and decision logic of your agents are tightly coupled to the execution model of a specific orchestration platform, MCP does not help you migrate that logic. The portability MCP provides is at the tool interface layer, not the workflow logic layer. Workflow logic portability requires that your business rules are documented in a standard, exportable format in version control you own -- which is a documentation and architecture discipline, not a protocol.

The practical architecture implication: adopt MCP-standard tool connectors everywhere possible. For orchestration platforms that are not yet MCP-compatible, assess their roadmap before committing. For workflow logic: document it in your own version control, in a format that is independent of the orchestration platform's proprietary syntax, regardless of what standard the platform uses.
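The two halves of that implication can be illustrated side by side. In the sketch below, the tool definition follows the general shape MCP uses for tool listings (a `name`, a `description`, and a JSON Schema under `inputSchema`). The workflow format is entirely hypothetical: it stands in for whatever platform-independent format you choose to keep in your own version control. The tool names and threshold are invented.

```python
import json

# Portable half: a tool definition in the shape MCP uses for tool listings.
lookup_contract_tool = {
    "name": "lookup_contract",
    "description": "Fetch a contract record by its identifier.",
    "inputSchema": {
        "type": "object",
        "properties": {"contract_id": {"type": "string"}},
        "required": ["contract_id"],
    },
}

# Non-portable half unless you make it so: workflow logic kept as plain data
# in a format you own (this schema is an illustrative assumption).
workflow = {
    "name": "contract_review",
    "version": "1.0.0",
    "steps": [
        {"tool": "lookup_contract", "when": "always"},
        {"tool": "notify_legal", "when": "value_over", "threshold": 100000},
    ],
}


def planned_tools(wf: dict, contract_value: float) -> list:
    """Interpret the workflow document: which tools run for this contract?"""
    tools = []
    for step in wf["steps"]:
        if step["when"] == "always":
            tools.append(step["tool"])
        elif step["when"] == "value_over" and contract_value > step["threshold"]:
            tools.append(step["tool"])
    return tools


# The workflow survives a round trip through JSON, so it can live in any
# repository and be re-interpreted on a different orchestration platform.
exported = json.dumps(workflow, sort_keys=True, indent=2)
restored = json.loads(exported)
print(planned_tools(restored, 250000))
```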

Section 10 - The Five Most Expensive AI Architecture Mistakes

1. BUILDING THE FOUNDATION MODEL LAYER. Training a frontier model from scratch is not a competitive strategy for most enterprises -- it is a distraction that consumes capital that should be building the competitive differentiator layer. The foundation model layer is commodity. Invest the capital where it creates durable advantage.

2. MODEL-COUPLED PROMPT LIBRARIES. Prompt libraries that are calibrated entirely to a single model's specific behaviour accumulate switching cost with every entry added. Each entry becomes harder to migrate as the library grows. Build model-agnostic prompt libraries from the start. The switching cost you avoid at Year 3 is far larger than the additional rigour required at Year 1.

3. PROPRIETARY ORCHESTRATION WITHOUT EXIT PLANNING. Enterprise AI programmes built on a single vendor's proprietary orchestration platform without MCP compatibility and without documented workflow logic in exportable format have committed to that vendor for the life of the programme. The Builder.ai collapse in 2025 demonstrated what happens when the commitment cannot be honoured from the vendor's side. Document workflow logic independently of the execution platform.

4. BUYING AI APPLICATION LAYER SOLUTIONS FOR DIFFERENTIATING USE CASES. Purchasing a vendor's credit scoring model, client recommendation engine, or document analysis tool when your organisation has the proprietary data to build a genuinely superior version of each is the AI Commodity Trap. The purchased solution creates parity. The built solution creates advantage. The use cases that justify Build investment are exactly the use cases where the proprietary data advantage is greatest.

5. TREATING THE COMPETITIVE DIFFERENTIATOR LAYER AS A FUTURE PRIORITY. The governed prompt library, the fine-tuned models, the RAG systems on proprietary document estates: these take time to build, and they compound only from the moment they start accumulating. Every week spent deploying commodity AI tools before starting to build the proprietary intelligence layer is a week of compounding foregone. Start building the competitive differentiator layer before the sector reset occurs.

Closing - Architecture as Strategy

The enterprise AI platform architecture decision is not a technology decision with strategic consequences. It is a strategy decision that is expressed through technology choices. The six layers of the AI stack each represent a specific strategic choice about where to compete, where to commoditise, and where to invest in compounding advantage. The organisations that treat it as a technology procurement decision consistently produce architectures that are expensive to maintain, difficult to govern, and impossible to differentiate.

The organisations that treat it as a strategy decision -- buying what every competitor can buy, and building what only they can build -- produce architectures that get more valuable over time. The foundation model and cloud infrastructure layers are commodity. The competitive differentiator layer is the strategic asset. Everything in between is the architecture that connects them.

Your next steps:

AI Buy vs Build Decision Framework

The seven-factor decision matrix that applies the buy vs build decision correctly at each stack layer.

Firm Sovereignty: Building an AI Moat

The full architecture of the competitive differentiator layer: what Firm Sovereignty is, what it contains, and how to build it.

Own vs Orchestrate: The 2026 Enterprise Guide to Avoiding AI Vendor Lock-In

The contractual protections and architecture decisions that prevent lock-in at each of the five stack layers where it accumulates.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer at CQUniversity. The six-layer architecture framework in this article reflects the stack design used in the Expert AI Prompts live platform -- 30 industries, 1,500+ prompts, 15 AI workflow systems.