Industrialising Prompts: How SMB Tactics Scale into Enterprise AI Architecture

A prompt used by one person is a tactic. A prompt library used by 200 people, version-controlled, access-governed, categorised by business function, and maintained as a living organisational asset -- that is a competitive moat. The difference between these two things is not the quality of the individual prompt. It is the architecture around it.

This is the bridge between what Expert AI Prompts does at SMB scale -- 1,500+ domain-specific prompts across 30 industries, 15 integrated AI workflow systems, near-zero daily operational intervention -- and what enterprise AI architecture looks like when the same principles are applied at 200, 2,000, or 20,000 users. The methodology is identical. The architecture is different. The three structural adaptations that make the difference are the subject of this article.

Section 1 - The Problem With Treating Prompts as Personal Tactics

Most enterprise AI programmes treat prompts as the personal property of whoever wrote them. An individual discovers that a well-constructed prompt dramatically improves their output quality. They use it. They refine it. They share it informally with one or two colleagues. The prompt improves again. Nobody documents it. Nobody versions it. Nobody knows who owns it. When that person leaves, the prompt goes with them.

This is the prompt as personal tactic -- and it represents the majority of enterprise AI activity in 2026. It is valuable at the individual level. It produces no compounding organisational advantage. The tenth use of a personal tactic delivers the same value as the first use. The tenth use of a properly industrialised, governed, domain-specific prompt library delivers more value than the first -- because each use, each refinement, and each domain adaptation makes the library more accurate, more comprehensive, and more specifically calibrated to the organisation's actual work.

What Deloitte 2026 Found -- and What It Means

Deloitte's 2026 State of AI research found that fewer than 60% of employees with approved AI tools use them regularly. The headline interpretation is that change management has failed. The more accurate interpretation is that the AI tools on offer are not useful enough to compete with existing work habits.

A generic AI tool given to a legal team produces generic AI outputs. A domain-specific prompt library calibrated to the legal team's actual document types, jurisdiction-specific language, client communication patterns, and internal precedent creates outputs that are immediately useful and demonstrably better than what a generic tool produces. The difference in adoption is not a training problem. It is a relevance problem. Industrialising prompts is how you solve the relevance problem.

The Enterprise AI Transformation Playbook

The full five-phase framework -- including Phase 4 where prompt library industrialisation becomes an enterprise-wide capability -- is covered in The Enterprise AI Transformation Playbook.

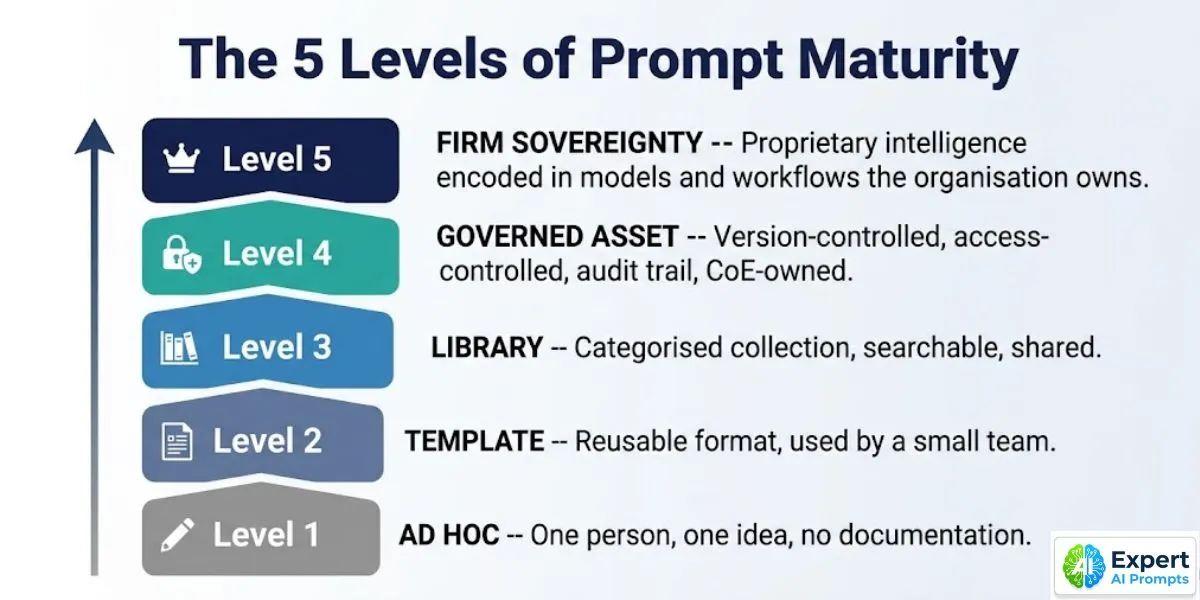

Section 2 - The Five Levels of Prompt Maturity

Enterprise prompt engineering does not begin at Level 5. It begins at Level 1 and compounds deliberately toward Firm Sovereignty. Understanding where your organisation currently sits determines which investment to make next.

Level 1: Ad Hoc -- The Tactic

What it looks like: Individual employees discover and use AI prompts independently. Prompts live in personal notes, browser bookmarks, or private chat histories. Output quality varies entirely by individual AI literacy. No sharing, no documentation, no version history.

The ceiling: Value is individual and non-transferable. When the person leaves, the prompt goes with them. The organisation has AI activity but no AI asset.

Level 2: Template -- The Reusable Format

What it looks like: Teams develop shared prompt templates -- structured formats with variable placeholders that multiple people adapt for their specific use cases. Templates live in shared drives or wikis. Informally maintained. No version control. No access control.

The ceiling: Templates drift over time as individuals modify them without coordination. By Month 6, the 'shared template' exists in 12 different versions across the team's personal storage. The organisation has shared AI activity but no shared AI standard.
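A Level 2 template, and the drift it invites, can be sketched in a few lines of Python. This is a minimal illustration rather than a recommended tool: the template wording, the placeholder names (`document_type`, `audience`, `max_words`, `body`) and the `fingerprint` helper are all assumptions made for the example.

```python
import hashlib
from string import Template

# Hypothetical shared template; the wording and placeholders are illustrative.
SHARED_TEMPLATE = Template(
    "Summarise the following $document_type for a $audience audience "
    "in no more than $max_words words:\n\n$body"
)


def render(template: Template, **values: str) -> str:
    """Fill every placeholder; raises KeyError if one is missing."""
    return template.substitute(**values)


def fingerprint(template: Template) -> str:
    """Short hash of the template text, used to spot copies that have drifted."""
    return hashlib.sha256(template.template.encode("utf-8")).hexdigest()[:12]


# A colleague's locally edited copy with one word changed.
drifted_copy = Template(SHARED_TEMPLATE.template.replace("Summarise", "Rewrite"))

prompt = render(
    SHARED_TEMPLATE,
    document_type="supplier contract",
    audience="non-legal",
    max_words="150",
    body="(contract text goes here)",
)
has_drifted = fingerprint(drifted_copy) != fingerprint(SHARED_TEMPLATE)
```

Comparing fingerprints makes drift visible, but it does not prevent it. Prevention needs the version control and ownership that arrive at Level 4.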

Level 3: Library -- The Searchable Collection

What it looks like: A structured, searchable collection of prompts categorised by function, use case, or domain. Curated by someone. Accessible to a defined group. Most enterprise AI programmes that describe themselves as having a prompt library are operating at Level 3.

The ceiling: Without version control, access governance, and a defined owner, the library decays. New prompts are added without quality review. Old prompts are never retired. The library grows but its average quality declines. It becomes the place where prompts go to exist, not the place where the best AI capability in the organisation lives.

Level 4: Governed Asset -- The Controlled Resource

What it looks like: The prompt library is version-controlled (each change is tracked), access-controlled (role-based permissions determine who can view, use, modify, or approve prompts), quality-reviewed (new prompts are tested against defined accuracy and safety standards before publication), and owned by the AI Centre of Excellence. The CoE is the custodian; business functions are the contributors and users.

The outcome: Every user accesses the best available version of each prompt. Improvements are captured and propagated. The library improves with use rather than decaying with time. The organisation has its first true AI knowledge asset.
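The access-control part of Level 4 can be illustrated with a small permission table. The sketch below assumes three hypothetical roles (`user`, `contributor`, `coe_reviewer`) and maps each to the actions named above: view, use, modify, approve. A real deployment would take roles from the organisation's identity system rather than a hard-coded dictionary.

```python
from enum import Enum, auto
from typing import Dict, FrozenSet


class Action(Enum):
    VIEW = auto()
    USE = auto()
    MODIFY = auto()
    APPROVE = auto()


# Hypothetical roles and permission sets, chosen only for illustration.
ROLE_PERMISSIONS: Dict[str, FrozenSet[Action]] = {
    "user": frozenset({Action.VIEW, Action.USE}),
    "contributor": frozenset({Action.VIEW, Action.USE, Action.MODIFY}),
    "coe_reviewer": frozenset(Action),  # every action, including APPROVE
}


def is_allowed(role: str, action: Action) -> bool:
    """Unknown roles get no permissions (deny by default)."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())
```

Denying by default means a new or misspelled role cannot accidentally gain access to a prompt.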

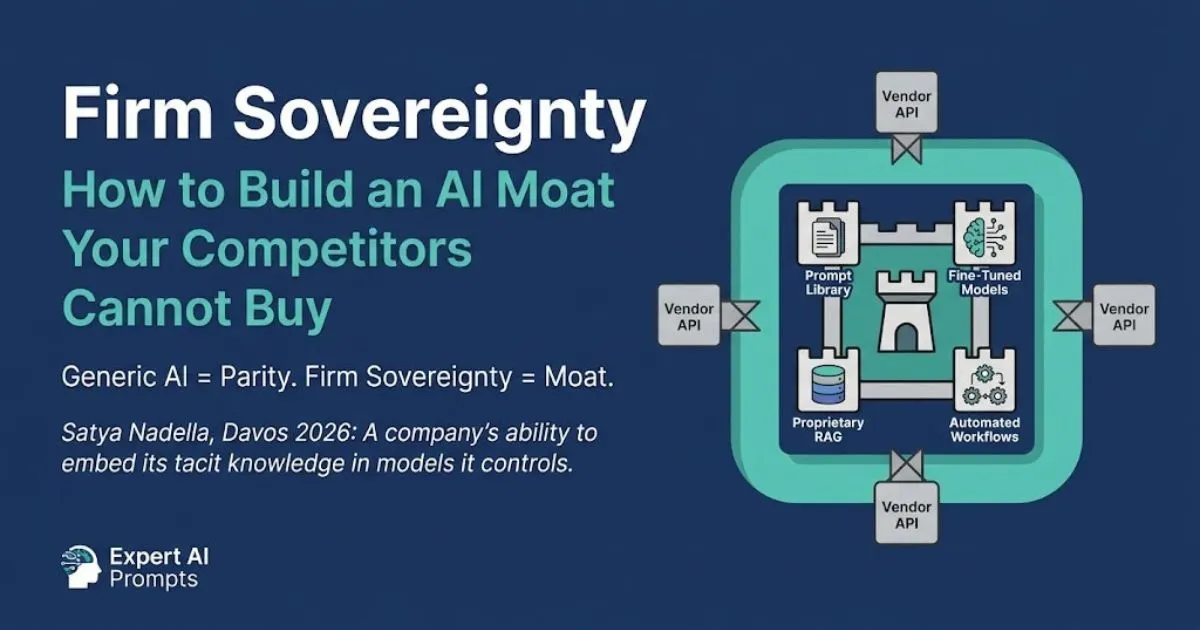

Level 5: Firm Sovereignty -- The Competitive Moat

What it looks like: The prompt library is not just a collection of prompts -- it is the encoded institutional knowledge of the organisation. Domain-specific language trained on the organisation's own precedents. Workflow prompts that encode 20 years of accumulated process knowledge. Fine-tuned outputs calibrated to the organisation's quality standards, communication style, and client relationships. This cannot be replicated by a competitor buying the same AI platform. It is built, not purchased.

Satya Nadella, Davos 2026: 'A company's ability to embed its tacit knowledge in models it controls.' Level 5 is Firm Sovereignty. Competitors can buy the same technology stack. They cannot buy your organisation's embedded institutional intelligence.

Section 3 - Scaling Adaptation 1: Industrialise the Prompt Library

The Expert AI Prompts methodology was built on a cross-niche multiplier principle: identify the structural logic of a highly effective prompt, then systematically adapt it across different domains, contexts, and use cases. Across 30 industries and 1,500+ prompts, this produced a library where each prompt is documented, tested, domain-specific, and reusable -- not as an informal collection, but as a structured, productised asset. The same principle, applied at enterprise scale, produces the governed prompt library.

What 'Industrialised' Actually Means for a Prompt

Industrialisation converts a prompt from a personal tactic into a documented, tested, governed, version-controlled organisational asset. An industrialised prompt has six attributes that a personal tactic lacks:

1. Documented intent and scope -- what this prompt is designed to produce, what inputs it requires, what use cases it is tested for, and what use cases it is not designed for. Users know what they are selecting before they use it.

2. Production test results -- accuracy and quality measured against real production data, not sandbox conditions. The prompt's output quality is validated under the same conditions users will encounter. False positive rate, output consistency, and edge case behaviour are documented.

3. Version history -- every change to the prompt is tracked with a date, the author of the change, the reason for the change, and the test results before and after. Any user can see the prompt's evolution and the evidence supporting the current version.

4. Role-based access -- some prompts are organisation-wide resources; others contain sensitive commercial, legal, or proprietary context that should only be accessible to defined roles. Access governance prevents prompts containing IP from being used outside their intended context.

5. Domain categorisation -- prompts are categorised by business function (Legal / Finance / HR / Operations / Marketing / Customer Service), by use case type (drafting / analysis / summarisation / translation / decision support), and by risk tier (routine / sensitive / regulated). Users find what they need; governance ensures appropriate use.

6. Retirement protocol -- a governed library has a defined process for retiring outdated prompts: when a model update changes behaviour, when a regulatory change makes a prompt non-compliant, or when a better prompt supersedes the existing one. Retired prompts are archived, not deleted -- they remain available for audit but are removed from active use.
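The six attributes above map naturally onto a record structure. The following is a minimal sketch, assuming hypothetical class and field names (`PromptRecord`, `PromptVersion`, `not_designed_for`, and so on); it is not the schema of any particular product, only one way the attributes could be held together.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional, Set


@dataclass(frozen=True)
class PromptVersion:
    """One tracked change (attribute 3) with its test evidence (attribute 2)."""
    number: int
    text: str
    changed_on: date
    author: str
    reason: str
    test_summary: str


@dataclass
class PromptRecord:
    prompt_id: str
    intent: str                    # attribute 1: what the prompt should produce
    not_designed_for: List[str]    # attribute 1: explicit out-of-scope uses
    allowed_roles: Set[str]        # attribute 4: role-based access
    business_function: str         # attribute 5: e.g. "Legal"
    use_case_type: str             # attribute 5: e.g. "summarisation"
    risk_tier: str                 # attribute 5: routine, sensitive or regulated
    versions: List[PromptVersion] = field(default_factory=list)
    retired_reason: Optional[str] = None   # attribute 6: set when archived

    @property
    def is_active(self) -> bool:
        return self.retired_reason is None and bool(self.versions)

    def publish(self, text: str, author: str, reason: str,
                test_summary: str, changed_on: date) -> PromptVersion:
        if self.retired_reason is not None:
            raise ValueError("retired prompts cannot receive new versions")
        version = PromptVersion(len(self.versions) + 1, text, changed_on,
                                author, reason, test_summary)
        self.versions.append(version)
        return version

    def retire(self, reason: str) -> None:
        """Archive rather than delete: the history stays available for audit."""
        self.retired_reason = reason


# Illustrative usage with made-up values.
record = PromptRecord(
    prompt_id="legal-nda-summary",
    intent="Summarise a non-disclosure agreement for a client email",
    not_designed_for=["litigation advice"],
    allowed_roles={"legal"},
    business_function="Legal",
    use_case_type="summarisation",
    risk_tier="sensitive",
)
record.publish("Summarise this NDA.", "author-a", "initial version",
               "baseline test run", date(2026, 1, 15))
record.publish("Summarise this NDA in plain English.", "author-b",
               "clearer output", "re-run against baseline", date(2026, 3, 2))
record.retire("superseded by a newer prompt")
```

Because `retire` only sets a reason and never removes versions, the full change history survives retirement.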

AI Centre of Excellence

The AI Centre of Excellence is the governance owner of the enterprise prompt library. The structure that makes Level 4 and Level 5 operationally sustainable is covered in the AI Centre of Excellence guide.

A well-constructed prompt library is a Gate 2 requirement -- monitoring tooling for the AI system requires documented, version-controlled prompts to track drift against a known baseline.

Section 4 - Scaling Adaptation 2: Federate the Workflow Architecture

A prompt in isolation produces a single output. A workflow system connects prompts in sequence to produce a compounding result -- where the output of one prompt becomes the structured input to the next, and the combination produces something that neither prompt could produce alone. Expert AI Prompts runs 15 integrated AI workflow systems that operate this way: each system is a sequence of domain-specific prompts connected through a defined workflow architecture, producing outputs that a single prompt cannot generate.

At enterprise scale, the 15 workflow systems become the template library that business functions adapt within the CoE's architecture standards. The CoE defines the workflow structure, the integration standards, the data handling requirements, and the governance controls. Business functions own the domain-specific prompt content within those structures, adapted to their specific context, client base, and process requirements. The result is a federated workflow architecture: centrally governed standards and infrastructure, locally owned and contextualised implementations.
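The split between central standards and local content can be sketched as follows. The example assumes a hypothetical three-step contract review workflow: the step names stand in for the CoE-defined structure, `LEGAL_PROMPTS` stands in for content owned by a business function, and `echo_model` is a stub used in place of a real model call so that the chaining is visible.

```python
from typing import Callable, Dict, List

# Centrally defined in this sketch: the ordered steps that every local
# implementation of the workflow must provide.
CONTRACT_REVIEW_STEPS: List[str] = ["extract_clauses", "assess_risk", "summarise"]


def validate(local_prompts: Dict[str, str]) -> None:
    """Reject a local implementation that omits or adds steps."""
    missing = set(CONTRACT_REVIEW_STEPS) - set(local_prompts)
    extra = set(local_prompts) - set(CONTRACT_REVIEW_STEPS)
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")


def run_workflow(local_prompts: Dict[str, str],
                 model: Callable[[str], str],
                 initial_input: str) -> List[str]:
    """Run the steps in order; each output becomes the next step's input."""
    validate(local_prompts)
    outputs: List[str] = []
    current = initial_input
    for step in CONTRACT_REVIEW_STEPS:
        prompt = local_prompts[step].format(input=current)
        current = model(prompt)
        outputs.append(current)
    return outputs


# Locally owned content: one team's wording for each step (illustrative).
LEGAL_PROMPTS = {
    "extract_clauses": "List the liability clauses in: {input}",
    "assess_risk": "Rate the risk of each clause: {input}",
    "summarise": "Summarise for the client partner: {input}",
}


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call; returns a tagged copy of the prompt."""
    return f"[model output for: {prompt}]"


results = run_workflow(LEGAL_PROMPTS, echo_model, "contract text")
```

A second business function could supply its own dictionary of prompts and reuse `run_workflow` unchanged, which is the point of keeping structure and content separate.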

From 15 Workflows to Enterprise Workflow Operating System

The Expert AI Prompts automation architecture -- content generation, outreach, and sales pipeline systems operating in concert -- demonstrates the compounding advantage of connected workflow systems at SMB scale. Each system reinforces the others: content generated by System 1 feeds the outreach sequences in System 2; the conversion data from System 3 informs the content strategy of System 1. The systems are not independent; they are interconnected and compounding.

At enterprise scale, this architecture becomes the AI Operating System -- a set of interconnected workflow systems that handle the repeatable, high-volume cognitive work of the organisation: contract review, proposal generation, data analysis, regulatory monitoring, customer communication, research synthesis. Each workflow system is documented, governed, and connected to shared data pipelines. Each produces outputs that feed downstream systems. The enterprise AI Operating System does not just automate individual tasks -- it compounds the value of every AI deployment by connecting them into a coherent operational architecture.

The reason workflow systems reach production more reliably than isolated use cases is directly related to the Success Contract and use case selection principles covered in Escaping Pilot Purgatory.

Section 5 - Scaling Adaptation 3: Formalise the Transformation Methodology

The Expert AI Prompts customer journey -- from overwhelmed operator to confident AI strategist -- is the individual-level proof of concept for the enterprise transformation journey. The methodology that takes one person from generic AI use to domain-specific strategic AI deployment is the same methodology that takes an entire workforce from AI awareness to AI proficiency. The difference is not the methodology. It is the delivery infrastructure.

At enterprise scale, the three-tier AI skills programme delivers the methodology at workforce scale. Tier 1 (Literacy) establishes AI awareness and the ability to use approved tools for basic tasks -- the equivalent of a new Expert AI Prompts customer engaging with their first prompt pack. Tier 2 (Proficiency) develops the ability to construct, evaluate, and adapt prompts for domain-specific use cases -- the equivalent of an intermediate user building their own prompt workflows. Tier 3 (Mastery) produces employees who can design and govern prompt libraries for their function, train others, and identify new automation opportunities -- the equivalent of a power user building a complete AI workflow system.

The AI Champion Network is the delivery mechanism. Champions are not hired -- they are developed from the existing workforce, typically employees who have already progressed to Tier 2 or 3 independently. One Champion per major business function serves as the peer-level demonstration of what AI mastery looks like in practice. Peer demonstration is more effective than any corporate training mandate, because Champions show colleagues the specific, domain-relevant benefit of AI proficiency in their shared work context -- not the generic benefits that a corporate communications programme describes.

Section 6 - Prompt Governance: The Architecture That Makes the Library Stick

A prompt library without governance is a documentation project. It produces an initial collection, receives contributions for the first quarter, loses active maintenance by Month 6, and becomes the place where outdated prompts live while users go back to personal tactics. The governance architecture is what separates a library that decays from a library that compounds.

The Four Governance Requirements for an Enterprise Prompt Library

1. Designated CoE ownership -- the AI Centre of Excellence is the custodian of the prompt library. One named role (the Governance and Compliance Lead, or a delegated Prompt Library Manager) is personally accountable for library quality, version currency, and retirement protocol. Without a named owner, the library has shared responsibility, which means no responsibility in practice.

2. Contribution workflow with quality gate -- a defined process for contributing new prompts to the library: submission, review, testing against production data, quality approval, and publication. The review process is documented. The quality standards are specific. Generic prompts that produce generic outputs are not published -- the library standard is domain-specific, production-tested accuracy.

3. Quarterly library audit -- a scheduled review that asks the same questions of every prompt in the library. Is it still accurate? Is it still relevant? Is it still compliant with current regulatory requirements? Has a model update changed its behaviour? Has a better prompt superseded it? The quarterly audit is a CoE responsibility, not an individual one.

4. Incident trigger for immediate review -- any production incident involving an AI output that used a library prompt triggers an immediate review of that prompt. If the prompt produced a harmful, incorrect, or non-compliant output, it is suspended from the library pending investigation and remediation. The incident response process treats a library prompt failure with the same urgency as any other AI system failure.
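Requirements 2 to 4 describe a lifecycle, which can be sketched as a small state machine. The state names, the allowed transitions and the 91-day audit interval below are assumptions made for illustration; the article itself specifies only the stages (submission, review, testing, approval, publication), the quarterly audit and the suspension on incident.

```python
from datetime import date, timedelta
from enum import Enum


class Status(Enum):
    SUBMITTED = "submitted"
    IN_REVIEW = "in_review"
    TESTED = "tested"
    PUBLISHED = "published"
    SUSPENDED = "suspended"
    REJECTED = "rejected"
    RETIRED = "retired"


# Allowed moves between states. The exact set is an assumption for this sketch.
TRANSITIONS = {
    Status.SUBMITTED: {Status.IN_REVIEW},
    Status.IN_REVIEW: {Status.TESTED, Status.REJECTED},
    Status.TESTED: {Status.PUBLISHED, Status.REJECTED},   # quality gate
    Status.PUBLISHED: {Status.SUSPENDED, Status.RETIRED},
    Status.SUSPENDED: {Status.PUBLISHED, Status.RETIRED},
    Status.REJECTED: set(),
    Status.RETIRED: set(),
}

AUDIT_INTERVAL = timedelta(days=91)  # roughly one quarter; an assumed value


def advance(current: Status, target: Status) -> Status:
    """Move to the target state, or raise if the quality gate is bypassed."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


def on_incident(current: Status) -> Status:
    """Requirement 4: an incident suspends a published prompt immediately."""
    if current is Status.PUBLISHED:
        return advance(current, Status.SUSPENDED)
    return current


def audit_due(last_audit: date, today: date) -> bool:
    """Requirement 3: flag prompts whose last audit is a quarter or more old."""
    return today - last_audit >= AUDIT_INTERVAL
```

Encoding the transitions as data means a prompt cannot jump from submission to publication without passing through review and testing.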

Enterprise AI Governance Framework

The complete governance architecture -- including the AI Centre of Excellence structure and incident response protocol -- is covered in the Enterprise AI Governance Framework.

Section 7 - The Firm Sovereignty Outcome: Why Competitors Cannot Buy What You Build

Every AI platform vendor sells the same foundation model capabilities to every customer on identical terms. Microsoft Copilot, Google Gemini Enterprise, Anthropic Claude for Enterprise -- your competitors have access to all of them, at the same price, with the same feature set. The commodity layer of enterprise AI creates parity, not advantage.

The competitive advantage is in the proprietary layer built on top: the domain-specific prompt library calibrated to your organisation's actual documents, processes, and client relationships; the workflow systems that encode your accumulated institutional knowledge; the fine-tuned outputs that reflect your quality standards, communication style, and domain expertise. This layer cannot be purchased. It is built through disciplined prompt engineering, version-controlled improvement, and the structured deployment of institutional knowledge into AI systems that the organisation controls.

This is Firm Sovereignty. And it compounds in a way that commodity AI access cannot. Every refined prompt makes the library more accurate. Every new workflow system makes the AI Operating System more capable. Every Champion who reaches Tier 3 mastery makes the organisation's AI advantage harder to replicate. The organisation that builds this layer in 2026 and 2027 will be operating from a fundamentally different competitive position in 2029 than the organisation that deployed the same AI platform access without building the proprietary layer on top of it.

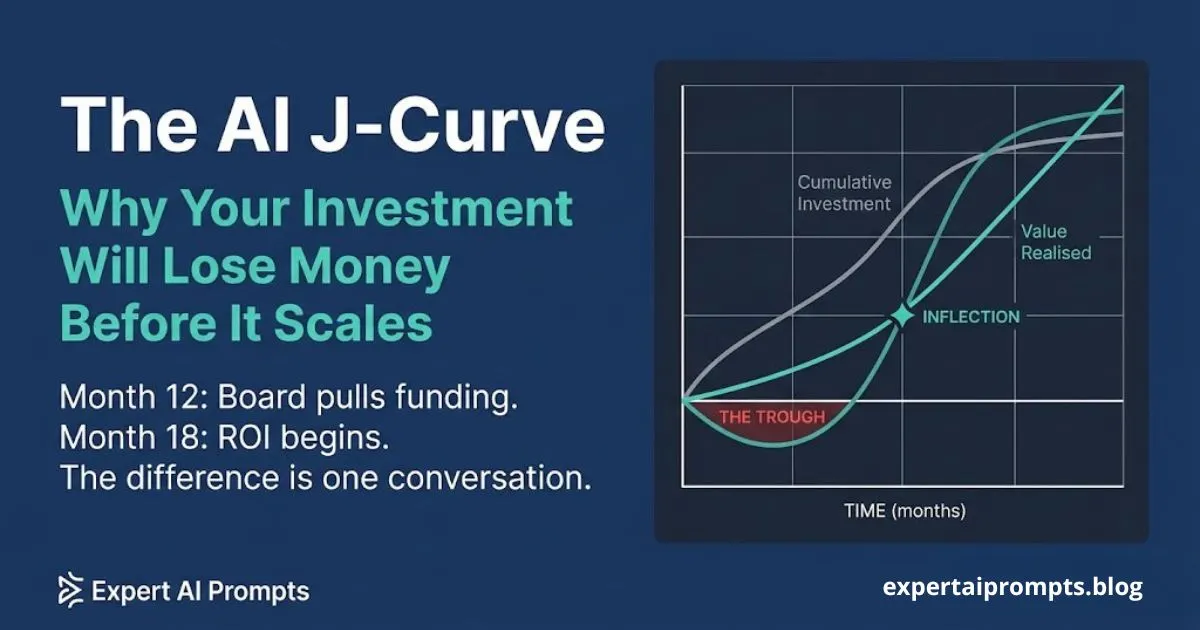

The Firm Sovereignty outcome is why, in The AI J-Curve, the productivity acceleration across Phases 3-5 exceeds the initial investment by 3x by Year 3: the proprietary intelligence layer is the compounding mechanism.

Closing - The Bridge From Tactic to Asset

The Expert AI Prompts methodology was designed as a live demonstration of this bridge. 1,500+ domain-specific prompts across 30 industries, maintained as governed, versioned, productised assets. 15 integrated workflow systems operating as a compound AI Operating System. Near-zero daily operational intervention within 60 days -- proof that the '4x speed with quality' benchmark is not aspirational when the prompt architecture is right.

The enterprise scaling challenge is not to create a new methodology -- it is to take what already works at individual and SMB scale and build the governance, access control, version management, and delivery infrastructure that makes it work at 200, 2,000, or 20,000 users. The three adaptations in this article are that infrastructure.

Your next steps:

Enterprise AI Readiness Checklist

The 25-question assessment includes the AI capability dimension that maps directly to prompt maturity level. Free PDF, no email required.

AI Centre of Excellence

The CoE structure that owns, governs, and evolves the enterprise prompt library.

The Enterprise AI Transformation Playbook

Phases 4-5 of the transformation framework cover the Firm Sovereignty build in full.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. He is a former CTO, a former Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and was a University Lecturer at CQUniversity for 8+ years. The prompt industrialisation methodology in this article is not theoretical -- it is the operating architecture of the Expert AI Prompts live system: 1,500+ domain-specific prompts across 30 industries, 15 integrated AI workflow systems, near-zero daily operational intervention.