Own vs Orchestrate: The 2026 Enterprise Guide to Avoiding AI Vendor Lock-In

In 2025, Builder.ai entered administration. Enterprise clients running production AI systems on its infrastructure lost access to those systems. Governance documentation was unavailable for regulatory audit. Source code was inaccessible. The rebuild cost -- where rebuilding was even possible -- ran to 18 months or more. The clients had not been reckless. They had evaluated the vendor, integrated carefully, and built genuine production capability. The problem was that the capability existed inside the vendor's infrastructure, not inside theirs.

Builder.ai is the extreme case. Vendor insolvency is not the most common form of AI vendor lock-in -- it is just the most visible one. The more common form is slower and less dramatic: a pricing change at Year 2 that the organisation cannot respond to because switching cost has accumulated to an unacceptable level. A model update that changes output behaviour in production systems the organisation cannot migrate. An acquisition that changes the vendor's data handling policies in ways the organisation's contracts do not protect against.

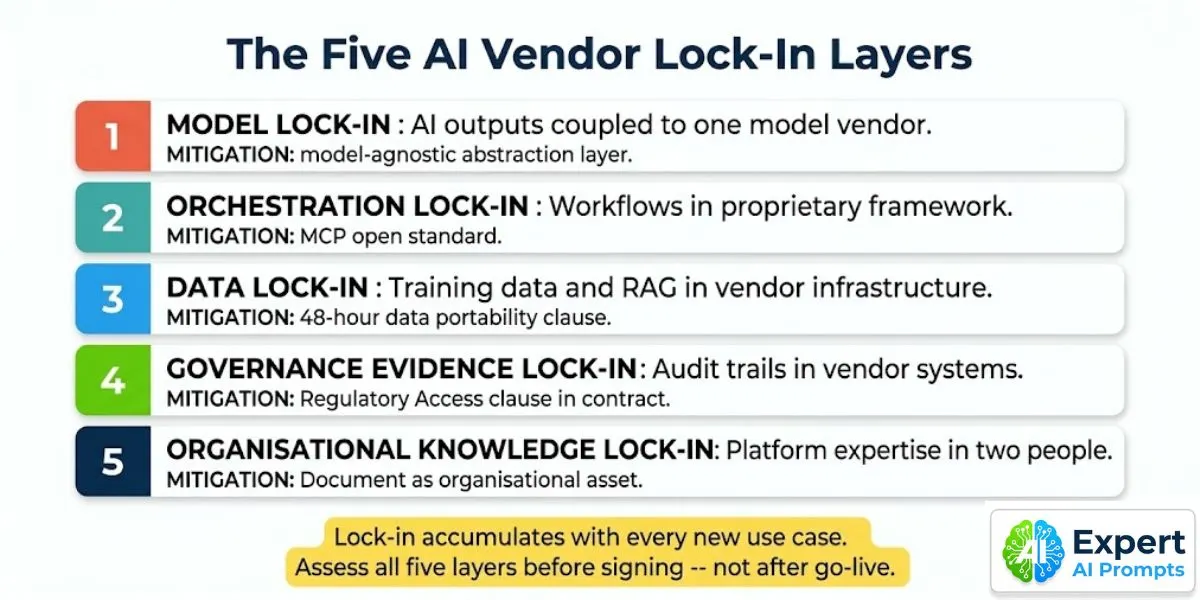

AI vendor lock-in is not a single risk. It operates across five simultaneous layers of the stack, each of which accumulates independently. An organisation that has actively managed lock-in at the model layer may still be completely trapped at the data and governance evidence layers. This article maps all five, explains the accumulation mechanism, and provides the ten pre-contract questions and three mandatory contract clauses that prevent it.

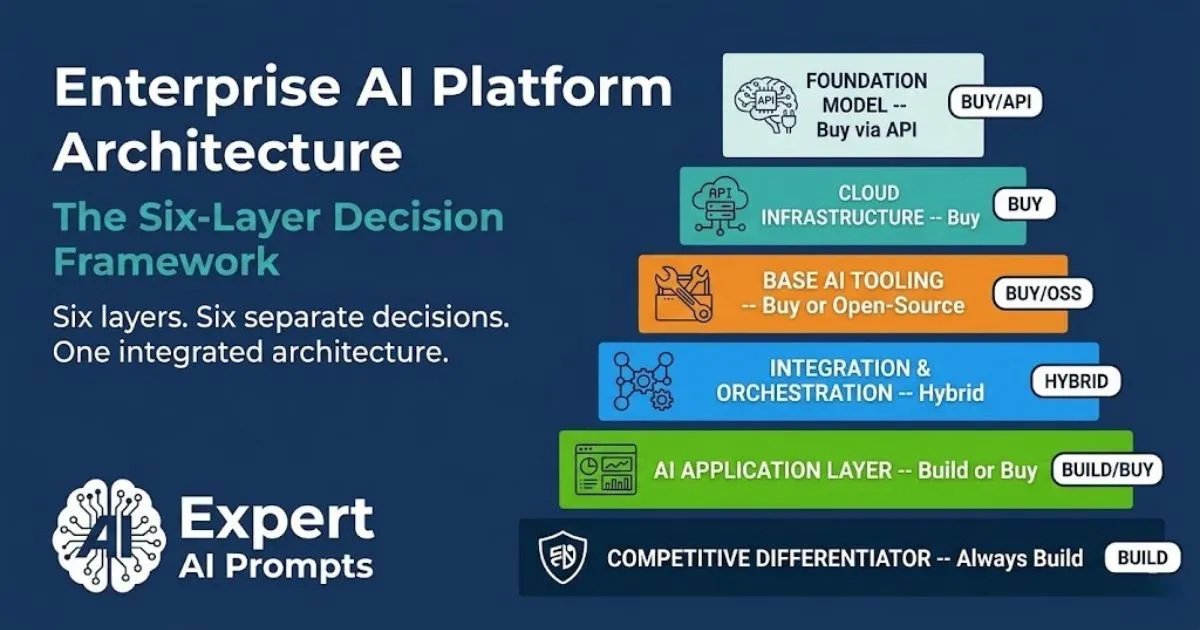

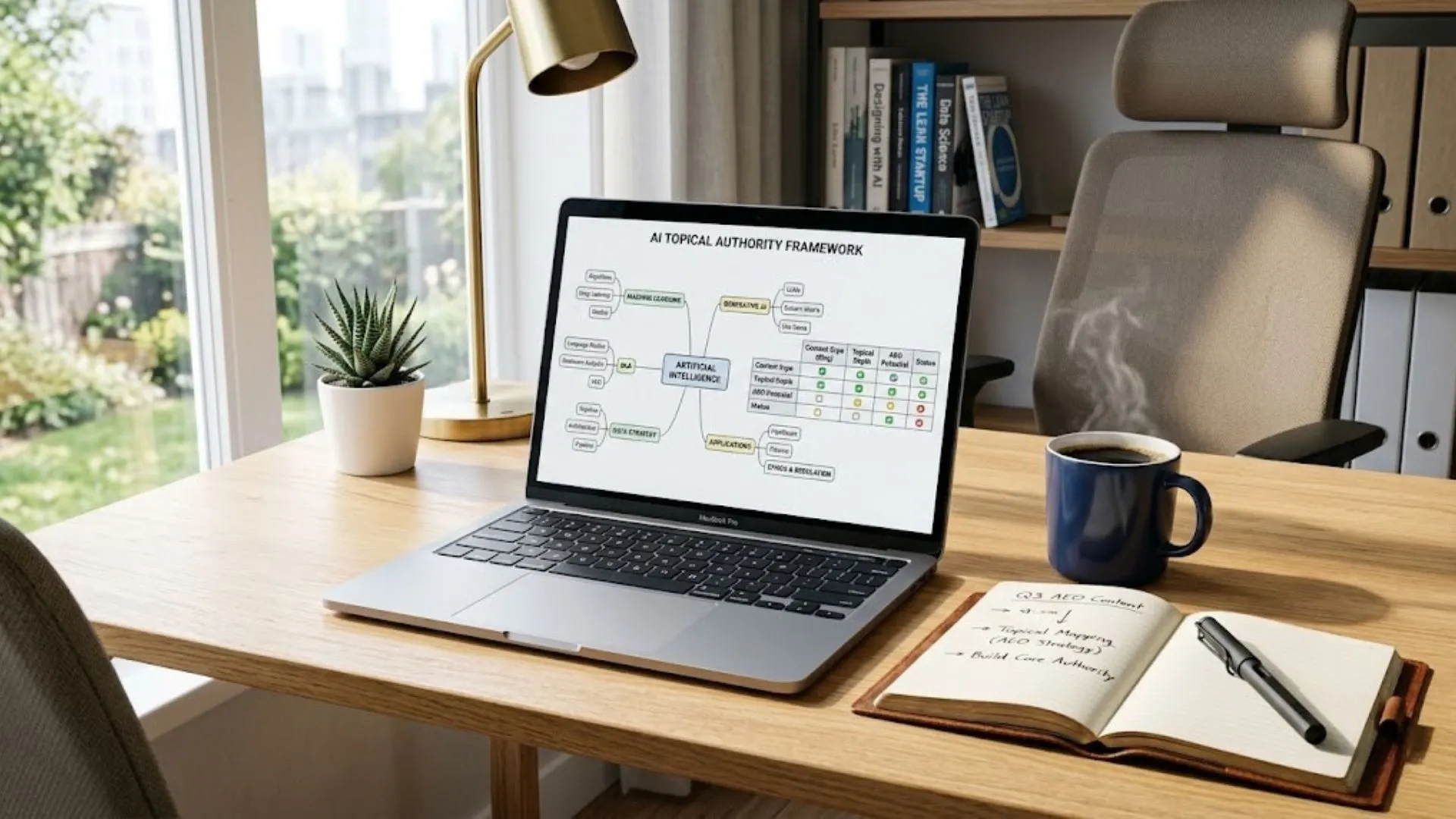

AI Buy vs Build Decision Framework

Vendor lock-in risk is Factor 6 in the seven-factor AI investment decision matrix -- scored before any contract is signed. The full framework is in the AI Buy vs Build Decision Framework.

Section 1 - Why AI Vendor Lock-In Is Different -- and More Dangerous -- Than Traditional SaaS Lock-In

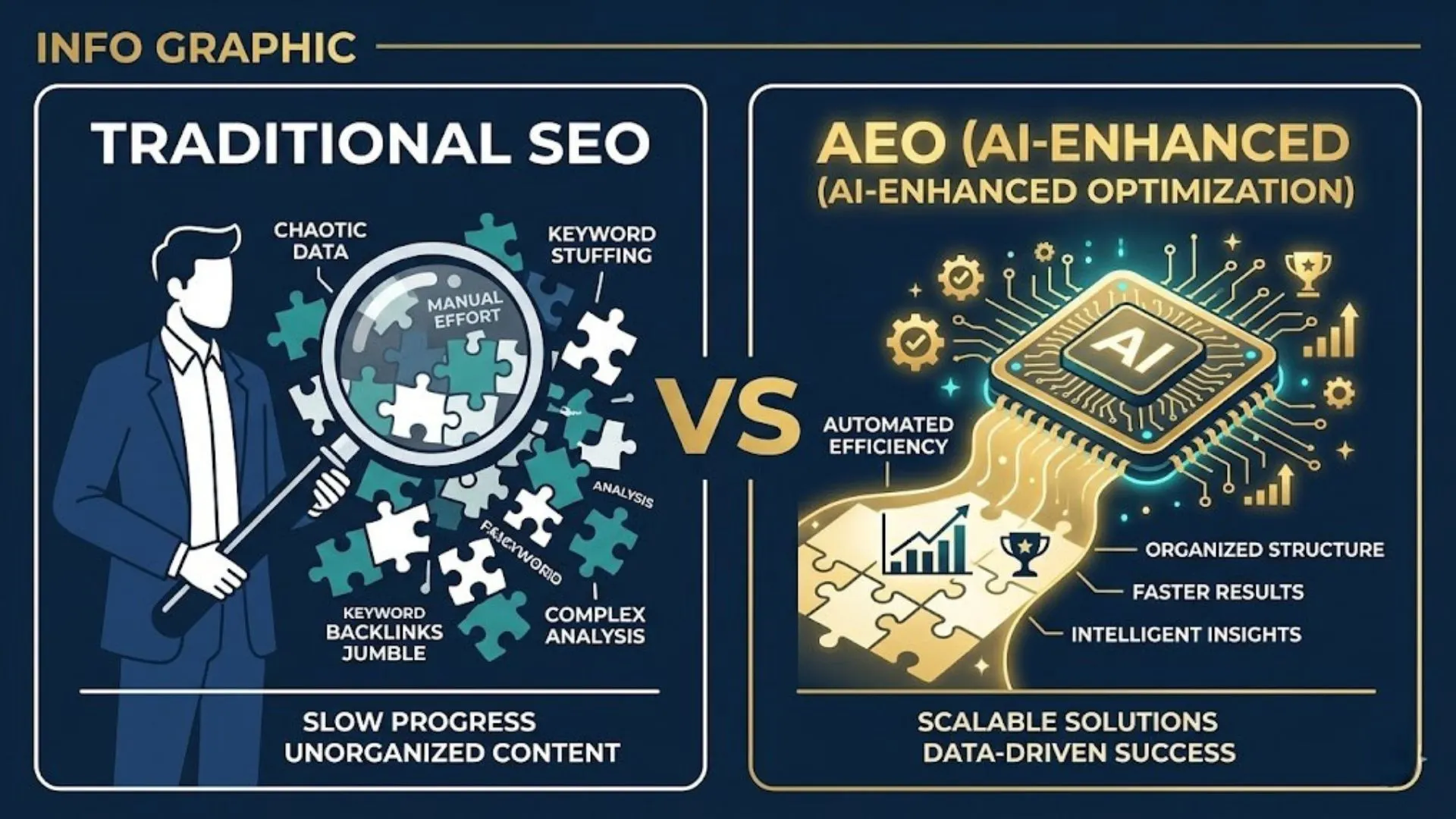

Traditional enterprise SaaS lock-in is a data portability problem: your data is in the vendor's database, and migrating it requires schema mapping, data transformation, and downtime. It is solvable -- expensive and painful, but technically well-understood and contractually manageable with standard data export clauses.

AI vendor lock-in is qualitatively different because it is not a single dependency problem. It is a compound dependency problem that operates at every layer of the AI stack simultaneously. Your model behaviour, your workflow automation, your training data, your compliance evidence, and your operational expertise can all be entangled with a single vendor at the same time -- each creating an independent switching cost that compounds with the others.

The Depth Problem: AI Lock-In Operates at Five Layers Simultaneously

'The choice of foundation model vendor and the choice of agent framework are not independent decisions. If agents run on a vendor's proprietary orchestration layer, lock-in compounds at every layer of the stack.' -- Kai Waehner, April 2026

The compound nature of AI lock-in means that switching cost does not simply add up or average out across layers: it is closer to multiplicative. An organisation that is 60% locked in at the model layer, 70% at the orchestration layer, and 80% at the data layer does not face a combined switching cost of 70% (the average). A migration has to clear all three dependencies at once, so the effective cost reflects how they intersect rather than the state of any single layer. In practice, organisations that have deployed AI at scale without actively managing all five lock-in layers often find that the effective switching cost makes migration practically impossible within any reasonable commercial timeframe.
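One way to make the compounding concrete is a small illustrative model. It is an assumption introduced here, not a formula from the article or from any standard: treat each layer's percentage as the share of that layer that cannot move without rework, and assume the layers are independent, so the share of the whole system that moves untouched is the product of the portable shares.

```python
from math import prod


def effective_lock_in(layer_lock_in: list[float]) -> float:
    """Illustrative model only (an assumption, not an industry formula).

    Each value is the fraction of one layer that cannot be moved without
    rework. Treating the layers as independent, the fraction of the system
    that moves untouched is the product of the portable fractions.
    """
    if not all(0.0 <= x <= 1.0 for x in layer_lock_in):
        raise ValueError("lock-in fractions must be between 0 and 1")
    portable = prod(1.0 - x for x in layer_lock_in)
    return 1.0 - portable


layers = [0.60, 0.70, 0.80]  # model, orchestration, data (figures from the text)
average = sum(layers) / len(layers)
compound = effective_lock_in(layers)
print(f"average: {average:.1%}, compound: {compound:.1%}")
```

Under these assumptions the three layers leave only 0.4 x 0.3 x 0.2 = 2.4% of the system portable without rework, which is a very different picture from the 70% average.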

Section 2 - The Five AI Vendor Lock-In Layers

Assess all five before signing any AI vendor contract. The layers accumulate independently -- managing one does not protect against the others.

Section 3 - Layer 1: Model Lock-In -- The Most Visible Layer

What gets trapped: AI outputs tightly coupled to a single model vendor's specific behaviour, tokenisation, and capability profile. System prompts, prompt engineering libraries, and output post-processing code all calibrated to a specific model's response patterns. Fine-tuned model weights hosted exclusively on the vendor's infrastructure.

Why it accumulates: Every prompt library entry, every fine-tuning dataset, and every integration built to consume a specific model's output format is an investment that becomes harder to migrate as it grows. A team that has spent six months optimising a production AI system for GPT-4o's output format has accumulated switching cost that does not appear in any vendor ROI calculation.

How Model Lock-In Accumulates

Model lock-in accelerates through three specific mechanisms. The first is prompt optimisation drift: prompt engineering effort accumulates toward the specific strengths and weaknesses of the current model. As the prompt library grows, each entry is increasingly calibrated to how the current model responds -- making migration to a different model increasingly expensive because all prompt calibration must be redone.

The second is fine-tuning dependency: fine-tuned model weights are typically stored in the vendor's infrastructure, and the fine-tuning process itself is optimised for the vendor's framework. Fine-tuning another model provider's base model requires a complete rebuild of the fine-tuning pipeline, not just a data export.

The third is output format dependency: downstream systems that consume AI outputs are typically built around the specific response format of the current model. When the model changes -- and foundation models are updated frequently -- downstream systems break. The deeper the integration, the more expensive the remediation.

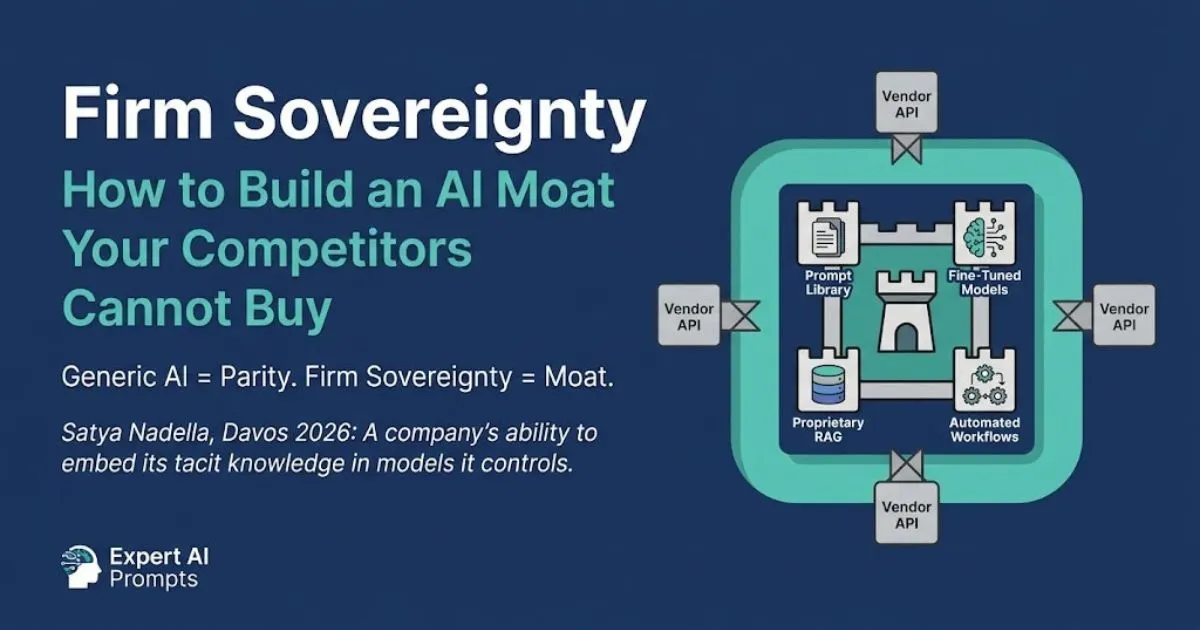

Mitigation: Model-agnostic abstraction layer. The proprietary intelligence layer (prompt library, fine-tuning, RAG) is designed to be portable across model providers. The abstraction layer handles model-specific API calls, output format normalisation, and failover logic -- so the intelligence layer can be served by multiple providers without rebuilding it from scratch.
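The shape of such an abstraction layer can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference design: `Completion`, `Provider`, and `ModelGateway` are hypothetical names, and the two adapter functions are stand-ins for code that would wrap each vendor's real client library.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Completion:
    """Provider-independent response shape consumed by downstream code."""
    text: str
    provider: str
    model_version: str


@dataclass(frozen=True)
class Provider:
    """Adapter for one vendor. `call` would wrap that vendor's SDK (not shown)."""
    name: str
    model_version: str  # pinned version, so behaviour changes are deliberate
    call: Callable[[str], str]


class ModelGateway:
    """Tries providers in order and normalises whatever they return."""

    def __init__(self, providers: list[Provider]):
        if not providers:
            raise ValueError("at least one provider is required")
        self._providers = providers

    def complete(self, prompt: str) -> Completion:
        errors: list[str] = []
        for p in self._providers:
            try:
                raw = p.call(prompt)
            except Exception as exc:  # fail over on any provider error
                errors.append(f"{p.name}: {exc}")
                continue
            # Output normalisation lives here, not in downstream systems.
            return Completion(raw.strip(), p.name, p.model_version)
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-in adapters for demonstration.
def primary_down(prompt: str) -> str:
    raise TimeoutError("primary unavailable")


def secondary_ok(prompt: str) -> str:
    return f"  summary of: {prompt}\n"


gateway = ModelGateway([
    Provider("vendor_a", "a-2026-01", primary_down),
    Provider("vendor_b", "b-v3", secondary_ok),
])
result = gateway.complete("Q3 contract review")
print(result.provider, "->", result.text)
```

Because downstream code only ever sees `Completion`, swapping or adding a provider means writing one new adapter rather than touching every consumer of model output.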

Firm Sovereignty: Building an AI Moat

The model-agnostic architecture is a prerequisite for Firm Sovereignty: the proprietary intelligence layer must be portable across model providers to maintain negotiating leverage.

Section 4 - Layer 2: Orchestration Lock-In -- and Why MCP Matters

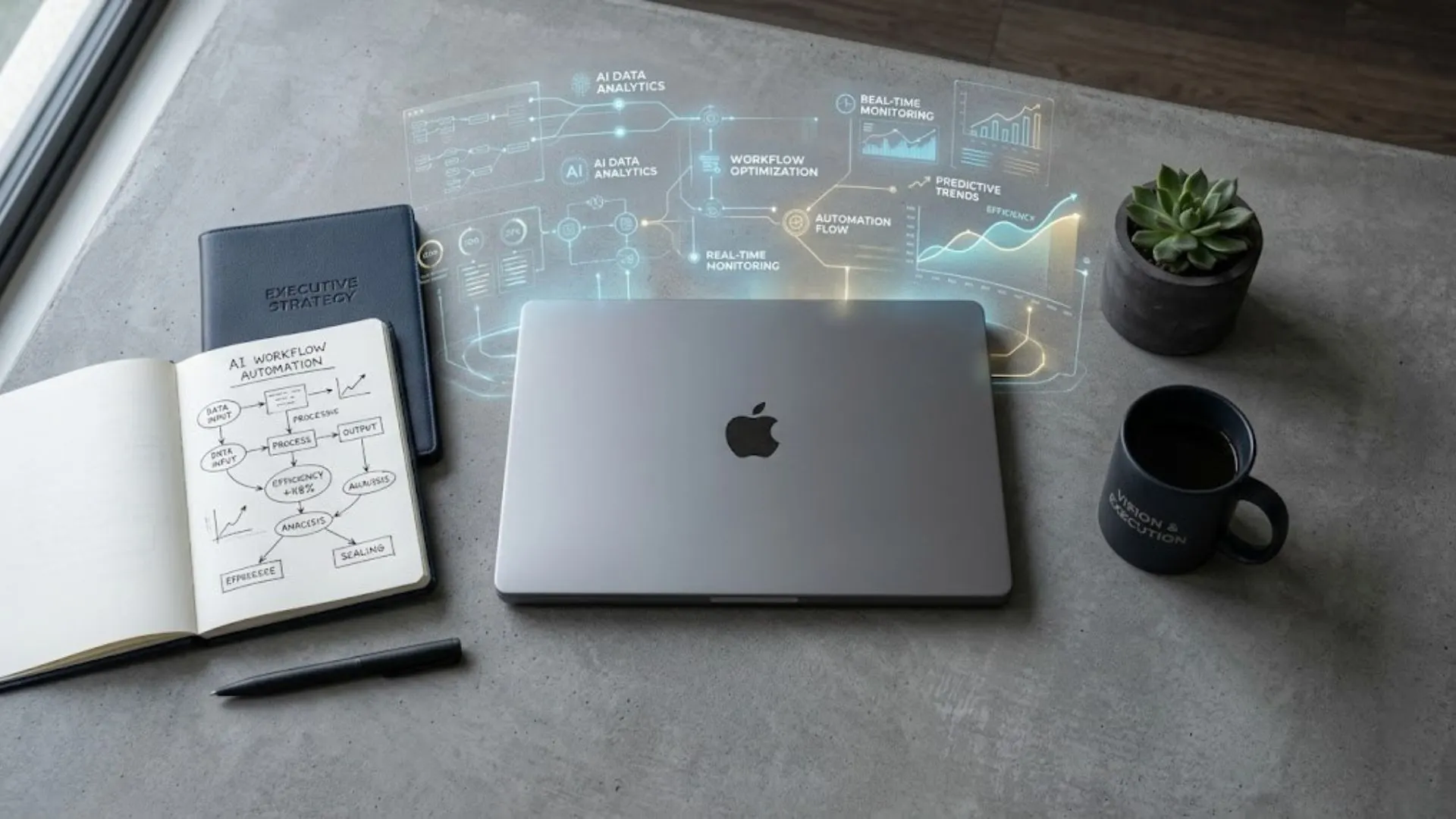

What gets trapped: AI workflow automation, agent architectures, tool integrations, and memory systems built on a vendor's proprietary orchestration framework -- with workflow formats and API structures that cannot be exported or replicated on a different platform.

Orchestration lock-in is the fastest-growing category of AI dependency risk in 2026. As organisations move from isolated AI use cases to connected agentic workflows -- agents that use tools, access external systems, maintain memory, and pass outputs to other agents -- the orchestration layer becomes the architectural core of the AI programme. An organisation whose agent architecture is built on a proprietary orchestration platform has made a structural commitment to that vendor that is far harder to exit than a simple model API subscription.

What MCP (Model Context Protocol) Actually Solves

Model Context Protocol (MCP) is the open standard, now under Linux Foundation stewardship, that creates a vendor-agnostic interface for AI agents to connect to tools, data sources, and external systems. An AI agent built on MCP-standard connectors can run on any model provider and any orchestration framework that supports the standard. An AI agent built on a vendor's proprietary tool-calling API is locked to that vendor's infrastructure.

MCP does not eliminate orchestration lock-in entirely -- it reduces it from a complete architecture rebuild to a migration of workflow logic. But the difference matters significantly: MCP-compatible workflow logic can be migrated to a different orchestration platform without rebuilding every tool integration from scratch. Proprietary orchestration workflows cannot.

Mitigation: Build on open-standard orchestration frameworks where MCP-compatible tooling exists. For proprietary orchestration that cannot be replaced, document all workflow logic in a format that is independent of the vendor's API syntax. Maintain all workflow definitions in version control the organisation owns.
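A vendor-independent workflow record might look like the sketch below. The `ToolSpec`, `Step`, and `Workflow` classes are hypothetical and are not part of MCP or any vendor SDK; the tool fields loosely mirror the way MCP describes a tool (a name, a description, and a JSON Schema for its inputs). The point is that the definition is plain data the organisation can validate and keep in its own version control.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolSpec:
    name: str
    description: str
    input_schema: dict  # JSON Schema for the tool's arguments


@dataclass
class Step:
    id: str
    tool: str  # refers to ToolSpec.name
    depends_on: list[str] = field(default_factory=list)


@dataclass
class Workflow:
    name: str
    tools: list[ToolSpec]
    steps: list[Step]


def validate(wf: Workflow) -> list[str]:
    """Return a list of problems; an empty list means the definition is consistent."""
    problems = []
    tool_names = {t.name for t in wf.tools}
    step_ids = {s.id for s in wf.steps}
    for s in wf.steps:
        if s.tool not in tool_names:
            problems.append(f"step {s.id}: unknown tool {s.tool}")
        for dep in s.depends_on:
            if dep not in step_ids:
                problems.append(f"step {s.id}: unknown dependency {dep}")
    return problems


def to_json(wf: Workflow) -> str:
    """Stable serialisation so diffs in version control stay readable."""
    return json.dumps(asdict(wf), indent=2, sort_keys=True)


# Hypothetical example workflow.
invoice_flow = Workflow(
    name="invoice_triage",
    tools=[ToolSpec(
        name="lookup_supplier",
        description="Fetch a supplier record by ID",
        input_schema={"type": "object",
                      "properties": {"supplier_id": {"type": "string"}},
                      "required": ["supplier_id"]},
    )],
    steps=[Step(id="fetch", tool="lookup_supplier")],
)
print(validate(invoice_flow))
```

A file produced by `to_json` can be committed alongside the prompt library, so the workflow logic survives even if the platform that executes it does not.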

Section 5 - Layer 3: Data Lock-In -- The Layer With the Highest Exit Cost

What gets trapped: Training data, fine-tuning datasets, RAG knowledge bases, vector embeddings, conversation history, evaluation datasets, and any proprietary data used to train or calibrate AI systems -- all stored in vendor-controlled infrastructure with no guaranteed export mechanism.

Data lock-in is often the most expensive form of AI lock-in to exit, because proprietary data is frequently the largest single investment in an AI programme. An organisation that has spent 12 months curating, cleaning, labelling, and structuring a proprietary training dataset has a data asset worth far more than the model it trains. If that dataset is stored in vendor infrastructure without contractual data portability rights, the organisation does not fully own its most valuable AI asset.

What Proper Data Portability Looks Like

Data portability is not satisfied by 'data can be exported on request.' It requires three specific commitments: a defined export format (open standard, not proprietary), a defined timeline (48 hours from written request, not 'within a reasonable period'), and a survival clause (the portability right survives contract termination, including termination for cause by the vendor).

Test the export process before you commit to the vendor. Request a sample export of a production-representative dataset during the evaluation phase. If the vendor cannot or will not demonstrate a clean export to a portable format during pre-contract evaluation, treat this as a reliable indicator of what the exit experience will look like post-contract.

Mitigation: Contractual data portability clause with specific format, timeline, and survival provisions. Regular local backups of all proprietary data assets held in vendor infrastructure. Tested export process before contract signature. Data residency clause if regulatory requirements restrict cross-border data processing.
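A tested export process can be as simple as a script that is run against the vendor's sample export during evaluation. The sketch below is one possible check under stated assumptions: the list of acceptable formats in `OPEN_SUFFIXES` is a placeholder for whatever the contract names, the function `verify_jsonl_export` is hypothetical, and it only parses JSON Lines.

```python
import hashlib
import json
import tempfile
from pathlib import Path

# Assumed list; the real one should match the formats named in the contract.
OPEN_SUFFIXES = {".jsonl", ".csv", ".parquet"}


def verify_jsonl_export(path: Path, expected_records: int) -> dict:
    """Check a sample export: open format, parseable, complete, fingerprinted."""
    suffix = path.suffix.lower()
    if suffix not in OPEN_SUFFIXES:
        raise ValueError(f"{suffix} is not on the agreed open-format list")
    if suffix != ".jsonl":
        raise NotImplementedError("this sketch only parses JSON Lines")

    digest = hashlib.sha256()
    records = 0
    with path.open("rb") as fh:
        for line in fh:
            digest.update(line)
            if line.strip():
                json.loads(line)  # raises if a record is not valid JSON
                records += 1
    return {
        "records": records,
        "complete": records == expected_records,
        "sha256": digest.hexdigest(),  # store with the local backup
    }


# Demonstration with a throwaway file standing in for a vendor export.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample_export.jsonl"
    sample.write_text('{"id": 1}\n{"id": 2}\n{"id": 3}\n', encoding="utf-8")
    report = verify_jsonl_export(sample, expected_records=3)
print(report["records"], report["complete"])
```

Running the same check on a schedule against the regular local backups gives early warning if an export silently stops being complete.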

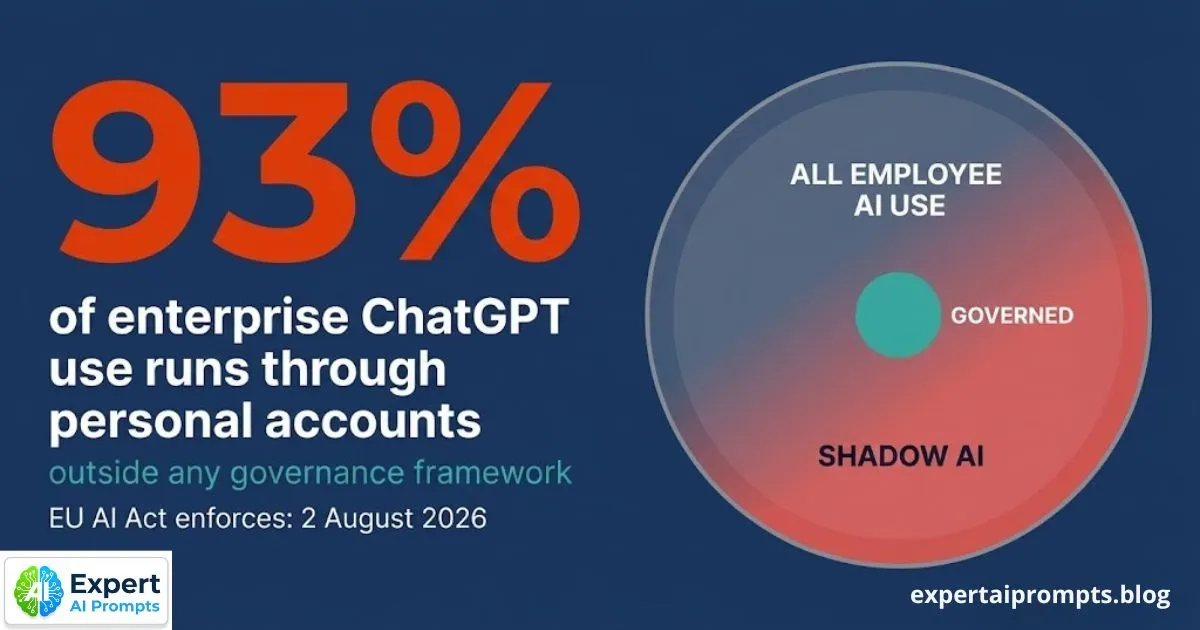

Section 6 - Layer 4: Governance Evidence Lock-In -- The EU AI Act Exposure

What gets trapped: Audit trails, model documentation, EU AI Act Annex IV technical documentation, SOC 2 compliance evidence, incident response records, and governance workflow history -- all held in vendor-managed systems that may not be accessible after contract termination.

This is the least-discussed lock-in layer and, from a regulatory compliance perspective, one of the most consequential. The EU AI Act requires Annex IV technical documentation for high-risk AI systems. The obligation sits with the provider, a role an organisation takes on when it puts a system into service under its own name or substantially modifies it. The documentation covers training data provenance, system architecture, performance metrics, and human oversight design, and organisations typically keep incident response records alongside it. This documentation must be available to regulators on request.

If the documentation is stored in the vendor's compliance tooling -- as it is in most standard vendor deployments -- and the organisation cannot export it, the organisation faces a regulatory compliance gap that exists independently of anything else it has done correctly. The governance evidence lock-in is silent during normal operations and catastrophic during a regulatory audit or vendor exit.

Builder.ai: enterprise clients who needed to demonstrate EU AI Act compliance for production systems built on the platform found that the governance documentation was in Builder.ai's systems. When the company entered administration, access to that documentation was unavailable. The regulatory exposure continued beyond the vendor relationship.

Mitigation: EU AI Act Annex IV documentation maintained in organisation-controlled systems -- not exclusively in vendor compliance tooling. Regulatory Access clause in every vendor contract: the organisation retains full access to all system logs, model documentation, and audit trails for EU AI Act and SOC 2 compliance, at no cost, surviving contract termination for not less than seven years.
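Whether the organisation really holds its own copies can be checked mechanically. The following is a minimal sketch, assuming a hypothetical inventory of evidence categories drawn from the list earlier in this section; the 90-day staleness threshold, the storage locations, and all names are illustrative assumptions, not regulatory requirements.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

REQUIRED_EVIDENCE = [
    "audit_trails",
    "model_documentation",
    "annex_iv_technical_documentation",
    "soc2_evidence",
    "incident_response_records",
]


@dataclass
class EvidenceCopy:
    category: str
    org_location: Optional[str]  # where the organisation's own copy lives
    last_synced: Optional[date]


def evidence_gaps(copies: list[EvidenceCopy], today: date,
                  max_age_days: int = 90) -> dict[str, str]:
    """Map each required category to the reason it is not safely held in-house."""
    by_category = {c.category: c for c in copies}
    gaps: dict[str, str] = {}
    for category in REQUIRED_EVIDENCE:
        copy = by_category.get(category)
        if copy is None or not copy.org_location:
            gaps[category] = "no organisation-controlled copy"
        elif copy.last_synced is None:
            gaps[category] = "copy has never been synced"
        elif (today - copy.last_synced).days > max_age_days:
            gaps[category] = "copy is stale"
    return gaps


# Hypothetical inventory for demonstration.
inventory = [
    EvidenceCopy("audit_trails", "s3://org-governance/audit", date(2026, 5, 20)),
    EvidenceCopy("model_documentation", "s3://org-governance/models", date(2025, 12, 1)),
    EvidenceCopy("annex_iv_technical_documentation", None, None),
    EvidenceCopy("soc2_evidence", "s3://org-governance/soc2", date(2026, 4, 15)),
]
gaps = evidence_gaps(inventory, today=date(2026, 6, 1))
for category, reason in gaps.items():
    print(f"{category}: {reason}")
```

Any non-empty result is a gap that exists today, during normal operations, rather than one discovered during an audit or a vendor exit.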

Enterprise AI Governance Framework

The full governance architecture -- including Annex IV documentation standards and the Regulatory Access clause template -- is in the Enterprise AI Governance Framework.

AI Governance Framework Template

The AI Governance Framework Template contains the Regulatory Access clause wording and the Annex IV documentation checklist for download.

Section 7 - Layer 5: Organisational Knowledge Lock-In -- The Invisible Layer

What gets trapped: Configuration knowledge, optimisation techniques, undocumented behavioural patterns, integration quirks, and system management expertise about the vendor's specific platform -- all concentrated in a small team of two to four people who built and maintain the AI deployment.

Organisational knowledge lock-in is the invisible layer because it does not appear on any vendor contract and cannot be resolved by any contract clause. It is created by the natural concentration of expertise that occurs in any specialist system deployment: the team that built the AI programme on Platform X knows Platform X deeply. They know where its edge cases are, how to work around its limitations, and which undocumented behaviours to avoid. This knowledge is not documented anywhere -- it exists in the heads of the team.

When the vendor relationship ends -- whether through exit, repricing, or acquisition -- this knowledge does not transfer to the new platform. It must be rebuilt from scratch. The rebuild cost is not just technical: it is the accumulated learning that took 12-24 months to develop, now needing to be developed again for a different platform, under time pressure, with a team that may no longer be intact.

Mitigation: Systematic documentation programme: all configuration decisions, optimisation discoveries, and integration workarounds are documented as structured organisational knowledge -- not held in individual memory. This documentation is reviewed and updated quarterly. It is stored in the organisation's systems, not the vendor's. When team members who hold platform expertise leave, a formal knowledge transfer process captures their operational knowledge before departure.

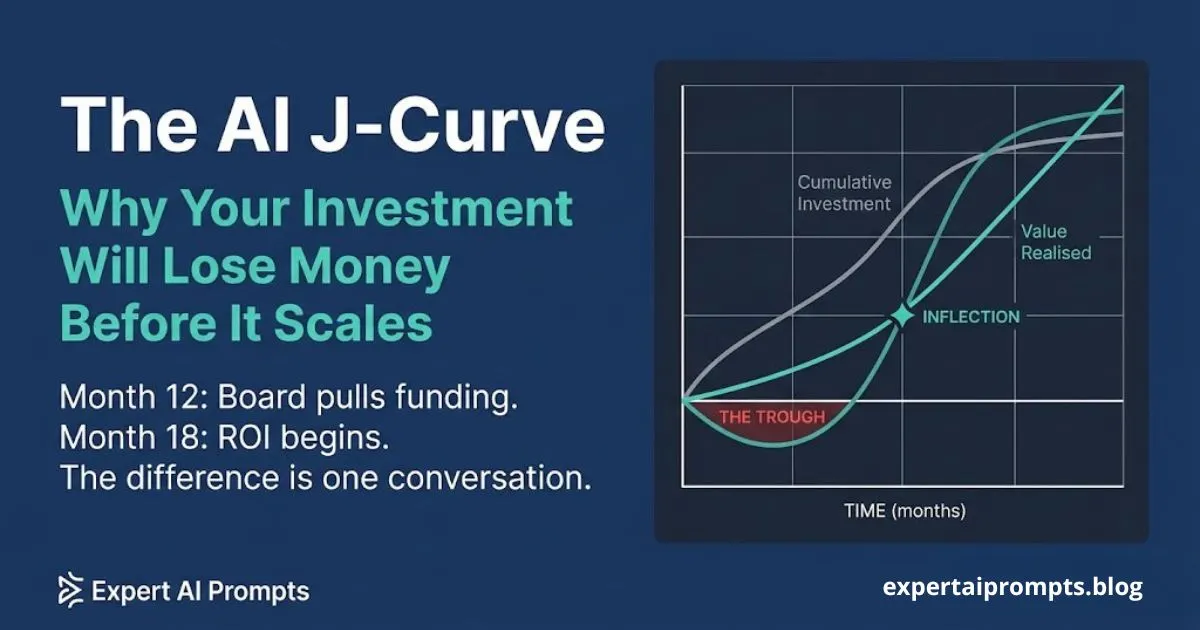

Section 8 - The Accumulation Mechanism: Why Every New Use Case Increases the Risk

The most important structural characteristic of AI vendor lock-in is its accumulation mechanism: every new use case deployed on the same vendor platform increases the switching cost at all five layers simultaneously.

Use Case 1 deployment creates modest lock-in: a prompt library calibrated to one model, one orchestration workflow, one data pipeline, limited compliance documentation, one team with basic platform expertise. The switching cost is manageable. Use Case 5 deployment, all on the same platform, creates compound lock-in: five interconnected prompt libraries, five workflow automations with shared orchestration infrastructure, five data pipelines feeding shared vector databases, Annex IV documentation for five separate systems, and a team that has now spent two years developing deep expertise in one vendor's tooling. The switching cost is no longer manageable, and the incumbent has become the de facto vendor for all future deployments.

The vendor knows this. Aggressive pricing at low-volume deployment stages is rational vendor strategy when the trajectory leads to compound lock-in at scale. By the time the vendor adjusts pricing to reflect the true market rate, the organisation has no credible migration alternative.

The accumulation mechanism makes vendor lock-in the operational counterpart of the AI Commodity Trap: both become progressively harder to exit the longer the dependency grows.

Section 9 - The 10-Question Pre-Contract Vendor Assessment

Walk every AI vendor through these ten questions before contract signature. The inability or unwillingness to answer any of them clearly is itself the finding -- it tells you what the exit experience will look like.

1. Can we export all data -- training data, fine-tuning datasets, RAG indices, conversation history -- in a portable open format within 48 hours of written request, including on contract termination? Describe the export format and process.

2. Who owns the fine-tuned model weights produced by training on our proprietary data on your infrastructure? Provide the specific IP ownership clause from your standard contract.

3. What happens to our data, model weights, workflow configurations, and system documentation if your company is acquired, enters administration, or discontinues this product?

4. Is your orchestration architecture MCP-compatible or proprietary? If proprietary, what does migration to an open-standard equivalent require, and what is the estimated migration timeline?

5. What are your pricing terms at 5x, 10x, and 50x our current usage volume? What contractual price protection exists beyond the initial term?

6. What is your model update policy? Can we pin production workloads to a specific model version for stability? What advance notice is provided before a model behaviour change?

7. What access do we retain to system logs, model documentation, audit trails, and EU AI Act Annex IV technical documentation after contract termination? Specify the format, timeline, and duration of that access.

8. Does your current SOC 2 Type II certification explicitly cover AI model inference workloads, or only the underlying infrastructure? What are your contractual notification obligations if your SOC 2 certification lapses?

9. What is your data residency policy? Can you contractually commit to restricting processing and storage of our data to specific geographic regions for regulatory compliance?

10. Provide two reference clients who have migrated away from your platform. Describe the exit timeline, process, and challenges they encountered.
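Recording the answers in a structured form makes it harder for a vague response to slip through. The sketch below is one possible way to do that; the `Answer` categories, the shortened question labels, and the `findings` function are assumptions made for illustration, not part of the assessment itself.

```python
from enum import Enum
from typing import Optional


class Answer(Enum):
    CLEAR = "clear"
    VAGUE = "vague"
    REFUSED = "refused"


# Shortened labels for the ten questions above.
QUESTIONS = {
    1: "Data export within 48 hours in an open format",
    2: "Ownership of fine-tuned model weights",
    3: "Acquisition, administration, or product discontinuation",
    4: "MCP-compatible or proprietary orchestration",
    5: "Pricing at 5x, 10x, and 50x volume",
    6: "Model update policy and version pinning",
    7: "Post-termination access to logs and Annex IV documentation",
    8: "SOC 2 Type II coverage of inference workloads",
    9: "Data residency commitments",
    10: "Reference clients who have migrated away",
}


def findings(answers: dict[int, Answer]) -> list[str]:
    """Report every question that was not asked, or not answered clearly."""
    out = []
    for number, topic in QUESTIONS.items():
        status: Optional[Answer] = answers.get(number)
        if status is not Answer.CLEAR:
            label = status.value if status else "not asked"
            out.append(f"Q{number} ({topic}): {label}")
    return out


# Hypothetical vendor: clear on most points, silent on pricing, evasive on exits.
vendor_answers = {n: Answer.CLEAR for n in QUESTIONS}
del vendor_answers[5]
vendor_answers[10] = Answer.REFUSED
for line in findings(vendor_answers):
    print(line)
```

The output is deliberately not a score: a single unanswered question is reported as a finding in its own right, consistent with the rule stated at the start of this section.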

Section 10 - Three Mandatory Contract Clauses

These three clauses are non-negotiable for every AI vendor contract. Any vendor that refuses to include them has disclosed its lock-in intention. Read each vendor's standard MSA before the pitch meeting -- not after the commercial terms are agreed. A vendor whose standard terms conflict with all three clauses is a vendor whose pricing at scale will reflect its lock-in advantage.

Clause 1: Data Portability

Required language: All data transferred to or created using the vendor's infrastructure -- including but not limited to training data, fine-tuning datasets, vector embeddings, RAG knowledge bases, conversation histories, system logs, and evaluation outputs -- is exportable in a portable, open, non-proprietary format within 48 hours of written request. This right is perpetual and survives contract termination, including termination for cause by the vendor. The vendor retains no licensing rights over the organisation's data following the export.

Why this exact language matters: 'Data exportable on request' is not data portability. 'Within 48 hours' is not 'within a reasonable period.' 'Portable open format' is not 'a format we provide.' 'Surviving contract termination' is not implied by the standard SaaS data handling clause. Each of these distinctions becomes critical during an adversarial exit.

Clause 2: IP Ownership of Fine-Tuned Models

Required language: All model weights, fine-tuned models, custom evaluation datasets, and model adaptations produced through the processing or training of the organisation's proprietary data on the vendor's infrastructure are the organisation's intellectual property. The vendor acquires no licensing rights, no training rights for other customers' use, and no commercial rights over these outputs. This IP ownership clause survives contract termination.

The risk without this clause: Several major AI vendors' standard terms include provisions that allow them to use customer data and custom model outputs for platform improvement and training of future models. Without explicit IP ownership language, the proprietary fine-tuned models the organisation funds may be improving the vendor's commercial product rather than remaining exclusively in the organisation's AI portfolio.

Clause 3: Regulatory Access

Required language: The organisation retains full, unrestricted access to all system logs, model documentation, Annex IV technical documentation, audit trails, SOC 2 evidence, and governance records required for regulatory compliance under the EU AI Act, the Australian Privacy Act 1988, and any applicable data protection legislation. This access is provided in a portable format within 48 hours of request, at no additional cost, and survives contract termination for a period of not less than seven years from the date of contract termination.

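Why seven years: Seven years is a contractual floor, not a figure taken from the statute. Article 18 of the EU AI Act requires providers of high-risk AI systems to keep technical documentation at the disposal of national competent authorities for ten years after the system is placed on the market or put into service, so organisations that carry provider obligations should negotiate an access period that covers the remainder of that window. Regulatory access that expires at contract termination creates a compliance gap that cannot be remediated after the vendor relationship ends.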

Closing - Architecting for Portability From Day One

The organisations that successfully manage AI vendor lock-in are not the ones that negotiate better exit terms after they have committed to a platform -- they are the ones that design portability into the architecture from the first deployment decision.

The three decisions that create portability by design: a model-agnostic abstraction layer that separates the proprietary intelligence from the serving infrastructure; open-standard orchestration that separates workflow logic from execution platform; and contractual data portability, IP ownership, and regulatory access that protect the organisation's data and compliance position regardless of what happens to the vendor relationship.

None of these decisions significantly increases the cost of the initial deployment. All of them significantly reduce the cost of every future deployment, every contract renegotiation, and every vendor relationship management decision for the life of the AI programme.

Your next steps:

AI Buy vs Build Decision Framework

Vendor lock-in risk is Factor 6 in the seven-factor decision matrix. The full framework scores all four investment paths against vendor dependency risk.

Enterprise AI Governance Framework

The governance architecture that protects against governance evidence lock-in, including the Regulatory Access clause standard.

AI Transformation Roadmap 2026

Portability-by-design is embedded in the Phase 2 governance deliverables -- established before the first production deployment.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. Former CTO, Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and 8+ year University Lecturer at CQUniversity. The lock-in framework in this article is derived from the vendor assessment process applied in every Expert AI Prompts enterprise deployment -- 30 industries, 1,500+ prompts, 15 AI workflow systems.