AI Governance in 2026: Why Compliance Is Your New Competitive Advantage

The conventional wisdom about AI governance is wrong. Governance is not the overhead that slows AI programmes down. It is the infrastructure that allows the best-run AI programmes to move faster, close more enterprise deals, and attract better talent than competitors who are deploying without it. The evidence from 2026 enterprise AI programmes is consistent, and it contradicts the governance-as-friction narrative at every level.

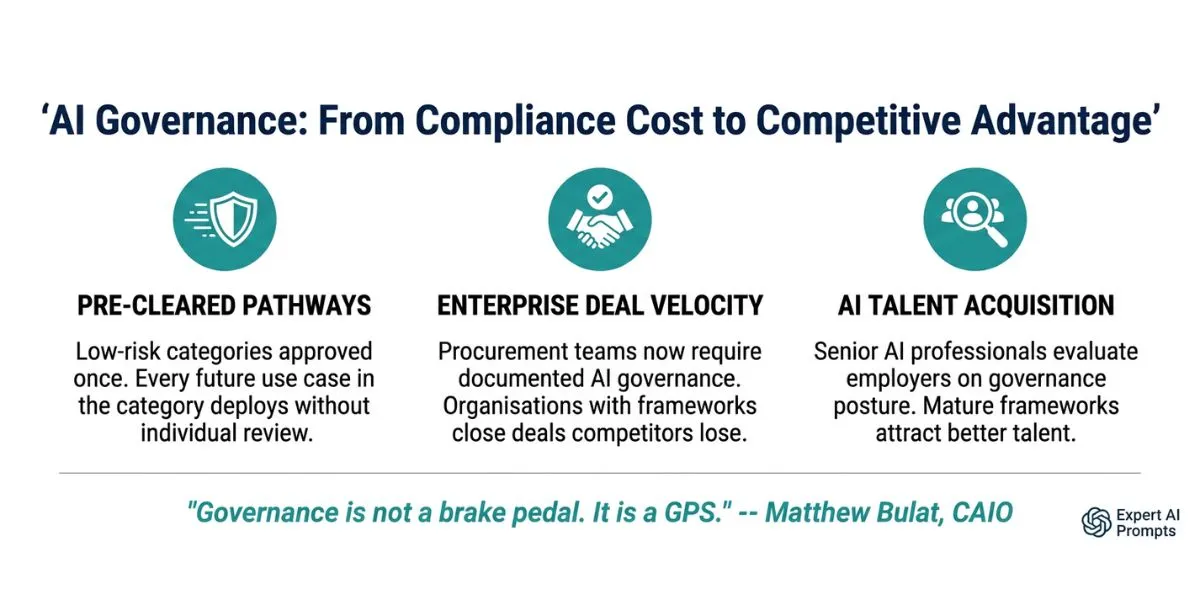

The organisations winning enterprise AI deals, attracting top AI talent, and deploying use cases at the highest velocity in 2026 are not those with the lightest governance footprint. They are those with the most mature governance architecture. This is not a coincidence. It reflects three specific commercial mechanisms that mature governance creates, and that governance-avoidant organisations are systematically failing to access.

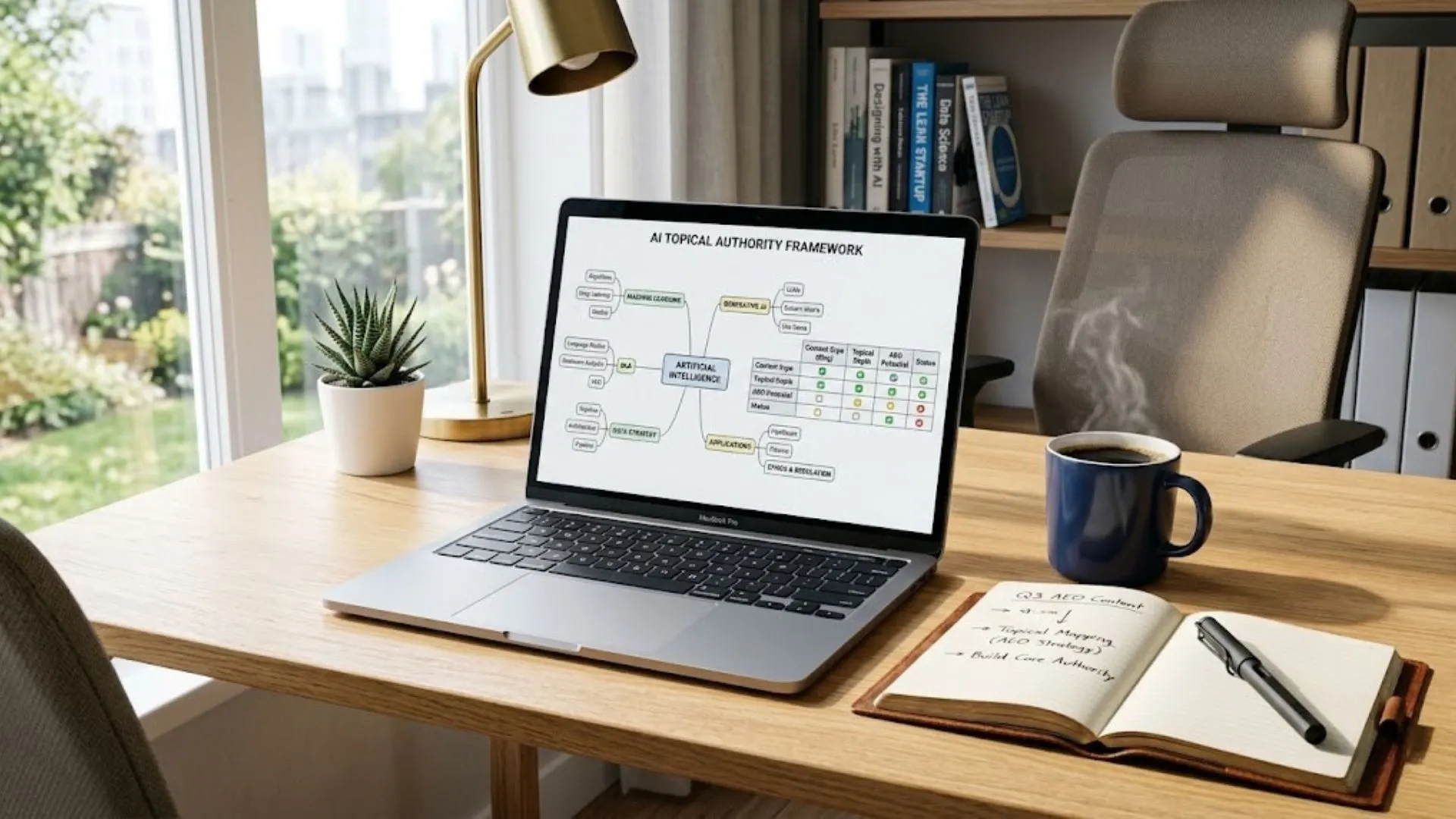

Enterprise AI Governance Framework

The full enterprise AI governance architecture is in the Enterprise AI Governance Framework.

Section 1 - The Counterintuitive Evidence: Governance Leaders Deploy AI Faster

The argument against AI governance investment is usually framed in terms of speed: 'We cannot afford to slow down for governance when our competitors are deploying AI right now.' This argument has a factual error at its centre. Organisations with mature AI governance frameworks do not deploy AI slower than those without governance. They deploy AI faster.

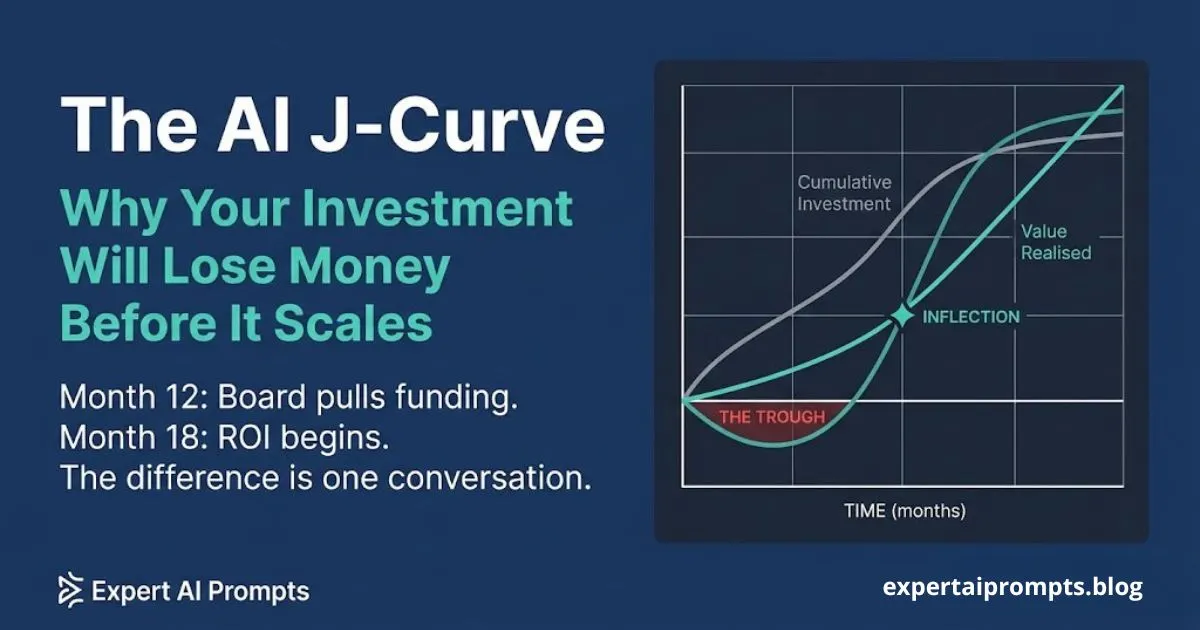

IBM's 2026 AI research points the same way: centralised or hub-and-spoke AI operating models, which require governance infrastructure to function, yield 36% higher ROI than decentralised approaches. The ROI advantage comes partly from lower marginal deployment costs on each new use case (shared infrastructure, reusable pipelines, governance pre-clearance) and partly from the revenue mechanisms that governance creates. The governance-avoidant approach does not produce faster deployment. It produces bespoke deployment: each use case built from scratch, each vendor relationship managed independently, each governance question answered anew for every project.

Why the 'Governance Slows Us Down' Argument Is Empirically Wrong

The confusion between governance as friction and governance as infrastructure comes from comparing two different things. An organisation in Phase 1 of AI transformation -- with no governance framework, no shared infrastructure, and no pre-clearance decisions -- can deploy a single AI use case quickly because there are no processes to navigate. An organisation in Phase 4 -- with a mature governance framework, shared pipelines, and a pre-cleared category register -- deploys the same use case faster because it inherits pre-existing approvals, infrastructure, and documentation.

The governance-avoidant organisation produces one fast deployment followed by nine slow ones, as each subsequent use case rebuilds the same infrastructure. The governance-mature organisation produces a first deployment at normal pace followed by nine progressively faster ones, as shared foundations compound. The comparison point that matters is not Day 1. It is Year 3.
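A toy model makes the Year 3 point concrete. The sketch below compares cumulative deployment time across ten use cases for the two patterns described in the previous paragraph. Every number in it is an invented assumption chosen only to reproduce that shape: a 4-week first deployment followed by 10 weeks each for the governance-avoidant organisation, and a 10-week first deployment that shrinks by 20% per deployment down to a 2-week floor for the governance-mature one. None of it is measured data, and the function names are illustrative.

```python
from __future__ import annotations

# Toy model only: all durations and rates are illustrative assumptions, not measured data.


def avoidant_durations(n: int, first_weeks: float = 4.0, later_weeks: float = 10.0) -> list[float]:
    """One fast first deployment, then each later use case rebuilds the same foundations."""
    return [first_weeks] + [later_weeks] * (n - 1)


def mature_durations(n: int, first_weeks: float = 10.0, decay: float = 0.8,
                     floor_weeks: float = 2.0) -> list[float]:
    """First deployment at normal pace; later ones reuse shared foundations, so each
    takes a fixed fraction of the previous one until a practical floor is reached."""
    return [max(floor_weeks, first_weeks * decay ** i) for i in range(n)]


def cumulative(durations: list[float]) -> list[float]:
    """Running total of weeks spent after each deployment."""
    totals, running = [], 0.0
    for weeks in durations:
        running += weeks
        totals.append(running)
    return totals


def crossover_index(slow_start: list[float], fast_start: list[float]) -> int | None:
    """Index of the first deployment after which `slow_start` has used less total time
    than `fast_start`, or None if it never catches up."""
    for i, (a, b) in enumerate(zip(cumulative(slow_start), cumulative(fast_start))):
        if a < b:
            return i
    return None


if __name__ == "__main__":
    avoidant, mature = avoidant_durations(10), mature_durations(10)
    print(f"Governance-avoidant, 10 use cases: {sum(avoidant):.1f} weeks")
    print(f"Governance-mature, 10 use cases:   {sum(mature):.1f} weeks")
    print(f"Mature programme is ahead from deployment #{crossover_index(mature, avoidant) + 1}")
```

Under these assumptions the governance-avoidant pattern is ahead for the first three deployments and behind from the fourth onward. Different assumptions move the crossover point, which is exactly why the comparison belongs at Year 3 rather than Day 1.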

Section 2 - Governance Is Not a Brake Pedal -- It Is a GPS

I have seen organisations use governance as a brake pedal. My approach uses it as a GPS -- it tells you the fastest safe route, not whether you can move at all. -- Matthew Bulat, CAIO

The GPS metaphor is more than rhetoric. A GPS does four things that a brake pedal does not: it shows your current position, calculates the fastest route to the destination, identifies hazards that would slow or stop progress, and reroutes dynamically when conditions change. A well-designed AI governance framework does the same four things. It tells you what AI you have running (current position), the fastest path to production for each use case (pre-clearance and gate criteria), the compliance hazards that would trigger costly remediation or enforcement (EU AI Act, AU Privacy Act), and how to adapt when the landscape changes (quarterly re-audit, regulatory horizon scanning).

What the GPS Metaphor Actually Means in Practice

An AI governance framework functioning as a GPS produces three observable outcomes: use cases in pre-cleared categories deploy without individual review cycles, compliance hazards are identified at Gate 1 before engineering investment is committed, and the programme navigates regulatory changes without emergency remediation. All three outcomes are faster and cheaper than the alternative -- which is no map at all.
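The Gate 1 hazard check can be pictured as a screen run on a use-case intake form before any engineering budget is committed. The sketch below is a minimal illustration under stated assumptions: the intake fields, the hazard rules, and the function names are all hypothetical, and the rules are a simplified list of review triggers, not a legal mapping of the EU AI Act or the Australian Privacy Act.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class UseCaseIntake:
    """Hypothetical Gate 1 intake form for a proposed AI use case."""
    name: str
    eu_facing: bool                       # deployed in, or affecting people in, the EU
    uses_personal_information: bool
    informs_decisions_about_people: bool
    human_review_planned: bool


def gate1_hazards(intake: UseCaseIntake) -> list[str]:
    """List the compliance hazards to resolve before engineering work starts.
    Simplified, illustrative triggers only."""
    hazards = []
    if intake.eu_facing:
        hazards.append("EU AI Act: confirm risk classification")
    if intake.uses_personal_information:
        hazards.append("AU Privacy Act: review handling of personal information")
        if intake.informs_decisions_about_people:
            hazards.append("AU Privacy Act APP 1.7: disclose automated decision-making")
    if intake.informs_decisions_about_people and not intake.human_review_planned:
        hazards.append("Human oversight: define a review protocol")
    return hazards


def passes_gate1(intake: UseCaseIntake, resolved: set[str]) -> bool:
    """Gate 1 passes only once every flagged hazard has been resolved."""
    return all(hazard in resolved for hazard in gate1_hazards(intake))


if __name__ == "__main__":
    screener = UseCaseIntake("loan pre-screening assistant", eu_facing=False,
                             uses_personal_information=True,
                             informs_decisions_about_people=True,
                             human_review_planned=False)
    for hazard in gate1_hazards(screener):
        print(hazard)
    print("Passes Gate 1:", passes_gate1(screener, resolved=set()))
```

The point of the design is timing: the hazards are surfaced from a form that takes minutes to fill in, before any pipeline is built, rather than discovered during a pre-launch compliance review.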

Section 3 - Commercial Mechanism 1: Pre-Cleared Deployment Pathways

The most direct way that governance creates deployment speed is through pre-clearance. When the AI Governance Committee classifies a category of use cases as low-risk and pre-approved -- content generation tools for internal use, document summarisation within approved data boundaries, standard customer service chatbots with disclosure in place -- any new use case within that category deploys without an individual review cycle.
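A pre-clearance register can be as simple as a lookup from category to the conditions it was cleared under. The sketch below assumes that structure. The three categories and their conditions paraphrase the examples in the paragraph above; the register layout, the condition names, and the `route` function are hypothetical.

```python
from __future__ import annotations

# Hypothetical pre-clearance register: low-risk categories approved by the
# AI Governance Committee, each mapped to the conditions it was cleared under.
PRE_CLEARED: dict[str, set[str]] = {
    "internal_content_generation": {"internal_use_only"},
    "document_summarisation": {"within_approved_data_boundary"},
    "customer_service_chatbot": {"ai_disclosure_in_place"},
}


def route(category: str, conditions_met: set[str]) -> str:
    """Route a new use case: a pre-cleared category whose clearance conditions are
    all met goes to the light deployment check; anything else gets a full review."""
    required = PRE_CLEARED.get(category)
    if required is not None and required <= conditions_met:
        return "deployment_check"
    return "full_review"


if __name__ == "__main__":
    print(route("document_summarisation", {"within_approved_data_boundary"}))  # deployment_check
    print(route("document_summarisation", set()))                             # full_review
    print(route("credit_decisioning", {"internal_use_only"}))                 # full_review
```

Storing the clearance conditions alongside each category, rather than clearing categories unconditionally, is a deliberate choice in this sketch: a use case that drifts outside its approved boundary falls back to full review automatically.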

Pre-clearance is more than an operational convenience. For an organisation running 20 or more use cases per year across multiple business functions, pre-clearance converts an 8-week review cycle into a 3-day deployment check. The arithmetic is straightforward: if 60% of a 20-use-case annual pipeline falls within pre-cleared categories, pre-clearance eliminates 12 eight-week review cycles a year. That is 96 weeks of cumulative review overhead (slightly less once the twelve 3-day checks are counted) converted into production deployment velocity.
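The arithmetic can be checked in a few lines. The sketch below uses the paragraph's own figures (20 use cases a year, 60% pre-cleared, an 8-week review, a 3-day check). The only added assumption, flagged in the code, is that a week means five working days when the 3-day checks are netted off; the function name is illustrative.

```python
from __future__ import annotations

WORKING_DAYS_PER_WEEK = 5  # assumption: review weeks and check days are working time


def review_time_freed(use_cases_per_year: int = 20, pre_cleared_pct: int = 60,
                      review_weeks: int = 8, check_days: int = 3) -> dict[str, float]:
    """Review effort removed by pre-clearance in one year, using the figures from the text."""
    pre_cleared = use_cases_per_year * pre_cleared_pct // 100        # 12 use cases skip review
    gross_weeks = pre_cleared * review_weeks                          # 12 x 8 = 96 weeks
    check_weeks = pre_cleared * check_days / WORKING_DAYS_PER_WEEK    # 36 days = 7.2 weeks
    return {
        "pre_cleared_use_cases": pre_cleared,
        "gross_weeks_freed": gross_weeks,
        "net_weeks_freed": gross_weeks - check_weeks,
    }


if __name__ == "__main__":
    for key, value in review_time_freed().items():
        print(f"{key}: {value:g}")
```

The result is review effort summed across use cases that may run in parallel, not elapsed calendar time: 96 weeks gross, or about 88.8 weeks once the checks are deducted under the five-day-week assumption.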

How Pre-Clearance Compounds Over Time

The compounding logic of pre-clearance explains why the governance-mature organisation's deployment velocity increases over time while the governance-avoidant organisation's velocity stays constant or declines. Every category classification decision made in Year 1 pre-clears every future use case in that category. The Year 1 investment in classifying the content generation, data analysis, and customer communication categories produces deployment benefits in Years 2, 3, and 4 without the classification work having to be repeated for each new use case.

The governance-avoidant organisation, by contrast, faces the same review overhead for the twentieth use case as it did for the first. There is no accumulated pre-clearance, no shared governance infrastructure, no reusable documentation. Every use case is a governance event. The organisation gets no deployment speed benefit from its history of deployments because that history is not formalised into reusable governance decisions.
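The difference between a formalised register and an unformalised deployment history can be shown with a toy count of individual review cycles. Everything in the sketch is hypothetical: the yearly pipelines are invented, and it assumes the first use case in a low-risk category is reviewed once, after which the category is classified and added to the register, while all other categories are reviewed every time.

```python
from __future__ import annotations

# Hypothetical: categories the committee is willing to pre-clear once reviewed.
LOW_RISK = {"content_generation", "data_analysis", "customer_communication"}

# Hypothetical pipeline: use-case categories deployed each year.
PIPELINE = {
    1: ["content_generation", "data_analysis", "customer_communication", "forecasting"],
    2: ["content_generation", "data_analysis", "customer_communication", "pricing"],
    3: ["data_analysis", "customer_communication", "content_generation", "forecasting"],
}


def review_cycles_per_year(pipeline: dict[int, list[str]], keep_register: bool) -> dict[int, int]:
    """Count individual review cycles per year, with or without a persistent register."""
    register: set[str] = set()
    cycles: dict[int, int] = {}
    for year in sorted(pipeline):
        count = 0
        for category in pipeline[year]:
            if keep_register and category in register:
                continue  # pre-cleared by an earlier classification decision
            count += 1  # individual review cycle
            if keep_register and category in LOW_RISK:
                register.add(category)  # decision is formalised and reused from now on
        cycles[year] = count
    return cycles


if __name__ == "__main__":
    print("With register:   ", review_cycles_per_year(PIPELINE, keep_register=True))
    print("Without register:", review_cycles_per_year(PIPELINE, keep_register=False))
```

With the register, review cycles fall after Year 1 and only genuinely new or higher-risk categories are reviewed; without it, the count never falls, however many times the organisation has deployed in the same category.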

The AI Centre of Excellence owns the pre-clearance register and the category classification decisions that make compound deployment speed possible.

Pre-clearance is also the primary mechanism that makes the official AI programme faster and more accessible than shadow AI alternatives, as covered in The Shadow AI Problem.

Section 4 - Commercial Mechanism 2: Enterprise Deal Velocity

The second commercial mechanism of mature AI governance is one that most organisations have not yet priced into their governance investment calculation: enterprise deal closure. In 2026, AI governance has become a standard component of enterprise procurement qualification. Organisations selling into enterprise markets are being asked to demonstrate documented, audited AI governance as a condition of advancing in procurement processes.

This is not a niche requirement. It applies across financial services, healthcare, professional services, government contracting, and any enterprise market where the purchasing organisation has its own AI governance obligations to satisfy. A financial services firm purchasing AI-powered analytics from a vendor cannot demonstrate its own AI vendor risk management to its regulators if the vendor has no documented AI governance framework. Such firms do not buy from vendors who cannot demonstrate governance. They buy from vendors who can.

What Enterprise Procurement Is Actually Asking in 2026

Procurement processes for enterprise AI products and services now routinely include a vendor AI governance qualification stage. The questions are specific, they require documented evidence, and they are evaluated before commercial terms are discussed. Organisations without documented governance frameworks fail this stage and are removed from the evaluation process -- often without being told why.

The Seven AI Governance Questions Now in Enterprise RFPs

1. What is your AI governance policy, and how recently was it reviewed and updated?

2. How do you classify AI risk? Show us your risk classification framework.

3. What is your data handling standard for AI workloads, including retention, processing boundaries, and access controls?

4. How do you handle EU AI Act compliance for AI systems deployed in EU-facing operations?

5. What are your human oversight protocols for AI-generated outputs used in decisions?

6. Have you achieved or are you pursuing ISO 42001 certification? If not, what is your position on this standard?

7. Who is the named accountable officer for your AI governance programme, and what is their seniority and authority?
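One way to prepare is to keep an evidence register keyed to the seven questions, so that gaps surface before an RFP arrives rather than during one. The sketch below is an assumption about how such a tracker might look: the topics paraphrase the seven questions listed above, while the evidence file names and the `readiness_gaps` helper are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RfpQuestion:
    topic: str
    evidence: str | None = None  # hypothetical pointer to documented, audited evidence


# Topics follow the seven RFP questions; evidence entries are invented examples.
QUESTIONS = [
    RfpQuestion("AI governance policy and date of last review", "policy/ai-governance-policy.pdf"),
    RfpQuestion("AI risk classification framework", "policy/risk-classification.pdf"),
    RfpQuestion("Data handling standard for AI workloads"),
    RfpQuestion("EU AI Act compliance for EU-facing systems"),
    RfpQuestion("Human oversight protocols for AI outputs used in decisions", "policy/human-oversight.pdf"),
    RfpQuestion("ISO 42001 certification status or position"),
    RfpQuestion("Named accountable officer, seniority and authority", "charter/ai-governance-charter.pdf"),
]


def readiness_gaps(questions: list[RfpQuestion]) -> list[str]:
    """Topics that would currently be answered without documented evidence."""
    return [q.topic for q in questions if not q.evidence]


if __name__ == "__main__":
    gaps = readiness_gaps(QUESTIONS)
    print(f"{len(QUESTIONS) - len(gaps)} of {len(QUESTIONS)} questions evidenced")
    for topic in gaps:
        print("Gap:", topic)
```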

Organisations with documented, audited answers to all seven questions advance in the procurement process. Organisations without them are typically removed -- not because they have bad AI, but because their procurement risk profile cannot be resolved. The AI governance framework is the document that allows a CISO or procurement officer at the purchasing organisation to close their vendor risk file.

Section 5 - Commercial Mechanism 3: AI Talent Acquisition

The third commercial mechanism is the least quantifiable and possibly the most strategically significant over a 36-month horizon: AI talent acquisition. Senior AI professionals at the CAIO, AI Architect, AI Governance Lead, and AI Product Manager levels now evaluate prospective employers on the seriousness of their AI ethics and governance posture. This evaluation happens before compensation negotiation, and it leads candidates to withdraw from processes in which the employer would otherwise have been competitive on compensation.

A CAIO or AI Governance Lead who has spent years building responsible, regulated AI programmes at the enterprise level will not accept a position at an organisation that treats governance as a compliance checkbox. The work they would be required to undo, or to paper over, or to explain away to the regulators who will eventually ask -- that work is professionally incompatible with the reputation they have built. They will take the position that matches their professional standards, not the one that offers the highest base salary.

Why Senior AI Professionals Evaluate Governance Posture Before Compensation

The AI talent market at the senior level has three characteristics that make governance posture a first-order evaluation criterion, not a secondary consideration.

First, reputational risk. Senior AI professionals are personally associated with the governance decisions of the programmes they run. A governance failure at a previous employer -- regulatory breach, data incident, EU AI Act violation -- becomes part of their professional narrative. They evaluate prospective employers on the governance risk the role carries as a personal reputation issue, not just an employment concern.

Second, technical incompatibility. An AI Architect who designs model-agnostic, compliance-aware production architectures cannot produce equivalent work in an organisation that has no governance framework: the work is technically different, and the output is less distinguished. Governance-mature environments let senior AI professionals do the quality of work they want to be professionally known for.

Third, career velocity. The AI governance and strategy talent market is growing faster than the supply of experienced practitioners. Senior AI professionals who choose their employers wisely -- joining programmes that are building genuine governance capability rather than theatrical compliance -- develop more rapidly, build stronger credentials, and command higher compensation in subsequent roles. The employer's governance posture is therefore a career investment decision for the candidate.

Section 6 - The Regulatory Timing Advantage: 12 to 24 Months of Competitive Head Start

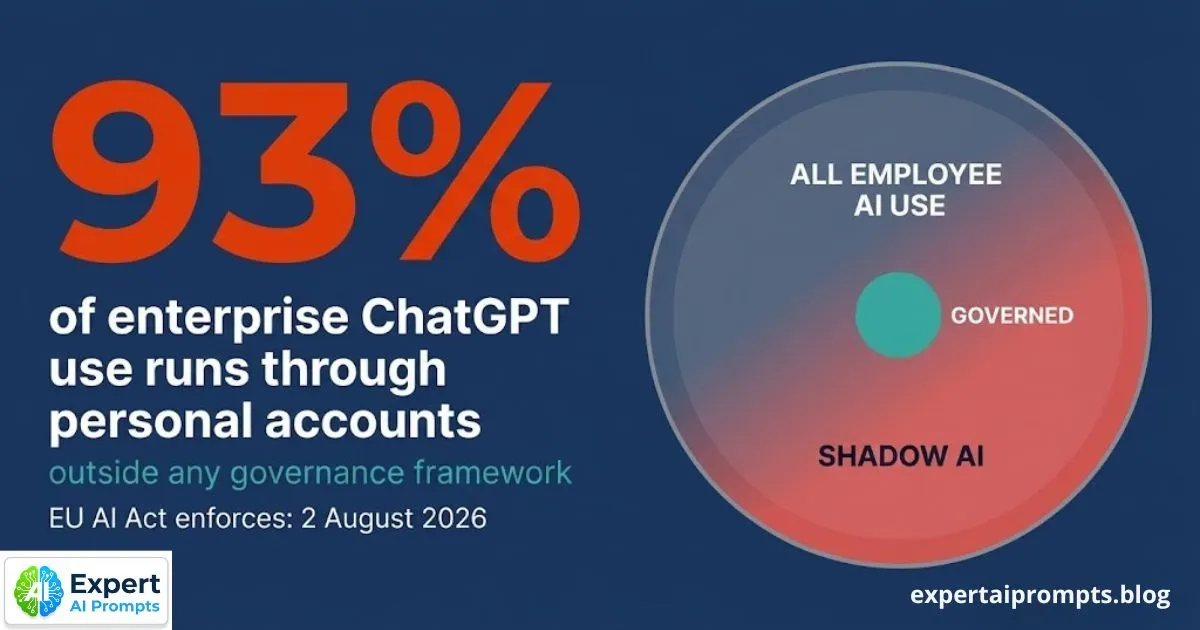

The EU AI Act becomes generally applicable on 2 August 2026, with a subset of high-risk obligations (for AI embedded in products covered by EU product safety legislation) following in August 2027. The Australian Privacy Act APP 1.7 obligations commence in December 2026. Organisations that complete their governance infrastructure before these deadlines do not merely avoid compliance penalties. They gain 12 to 24 months of competitive operating advantage over competitors who are still building governance in response to enforcement.

During those 12 to 24 months, the governance-complete organisation is deploying AI through pre-cleared pathways while competitors are navigating first-time compliance reviews. It is closing enterprise deals with documented governance frameworks while competitors are being removed from procurement shortlists. It is attracting senior AI talent who want to join a programme with genuine governance capability while competitors are losing candidates to governance-mature employers.

The August 2026 Window

The organisations that will be best positioned in January 2027, six months after the EU AI Act's general application date, are not the ones that start their governance build in August 2026 in response to enforcement. They are the ones that started in 2025 and completed their governance build by mid-2026. Because a functional AI governance framework (charter, risk classification, acceptable use policy, CoE structure, four-layer governance model, compliance mapping) takes around 12 months to build, the competitive window is now.
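The latest viable start date follows directly from the August 2026 date and the 12-month lead time. A minimal sketch using only Python's standard library; the helper names are illustrative, and the 12-month figure is the article's own estimate rather than a fixed rule.

```python
from __future__ import annotations

import calendar
from datetime import date

EU_AI_ACT_GENERAL_APPLICATION = date(2026, 8, 2)
BUILD_LEAD_TIME_MONTHS = 12  # the article's estimate for a functional governance framework


def months_before(d: date, months: int) -> date:
    """Same day of the month `months` earlier, clamped to that month's last day."""
    total = d.year * 12 + (d.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def latest_start(deadline: date = EU_AI_ACT_GENERAL_APPLICATION,
                 lead_months: int = BUILD_LEAD_TIME_MONTHS) -> date:
    """Latest date a governance build can begin and still finish by the deadline."""
    return months_before(deadline, lead_months)


def on_track(start: date) -> bool:
    """True if a build starting on `start` can finish by the deadline."""
    return start <= latest_start()


if __name__ == "__main__":
    print("Latest start:", latest_start())
    print("Started January 2026, on track?", on_track(date(2026, 1, 5)))
```

Under the 12-month assumption the latest start is 2 August 2025, which is why the article places the well-positioned organisations' start in 2025.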

AI Transformation Roadmap 2026

The 90-day onboarding framework for building the governance infrastructure before the August 2026 deadline is in the AI Transformation Roadmap 2026.

Section 7 - The ISO 42001 Procurement Signal

ISO/IEC 42001:2023 is the international standard for AI management systems. It is currently voluntary. It is fast becoming a commercial expectation in enterprise procurement, particularly in EU-facing contracts, financial services, and healthcare vendor qualification processes.

The trajectory of ISO 42001 mirrors that of ISO 27001 over the previous decade. In 2015, ISO 27001 certification was a differentiator that some enterprise vendors held. By 2020, it was a standard procurement qualification threshold in most enterprise cybersecurity vendor evaluations. By 2025, organisations without ISO 27001 were routinely eliminated from enterprise cybersecurity procurement shortlists before commercial terms were discussed. ISO 42001 is on the same trajectory, accelerated by the EU AI Act, which creates a regulatory foundation that the standard aligns with.

An organisation that designs its AI governance architecture to EU AI Act standards (the highest-common-denominator approach) gains significant ISO 42001 alignment as a side effect. The Annex IV technical documentation, human oversight design, risk classification, and quality management requirements of the EU AI Act substantially overlap with ISO 42001's AI management system requirements. The organisation that builds to EU AI Act standards is simultaneously building toward ISO 42001 certification readiness. The first mover in the ISO 42001 certification race in each sector gains the same commercial advantage that early ISO 27001 adopters gained in cybersecurity.

Section 8 - Governance as the Infrastructure of Firm Sovereignty

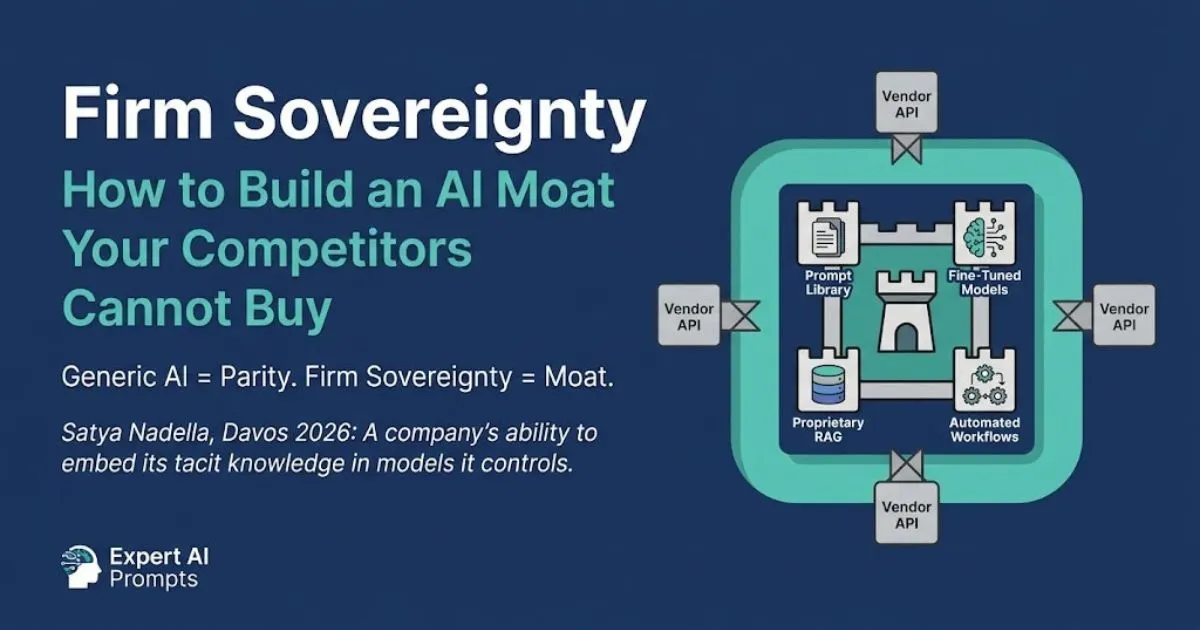

The three commercial mechanisms in this article -- pre-cleared pathways, deal velocity, talent acquisition -- are the near-term competitive advantages of mature AI governance. But there is a fourth mechanism that operates over a longer horizon and produces the most durable competitive advantage of all: Firm Sovereignty.

Satya Nadella at Davos 2026: 'A company's ability to embed its tacit knowledge in models it controls.' Firm Sovereignty is the AI outcome that cannot be purchased from a vendor. It is built through the governed accumulation of proprietary training data, domain-specific prompt libraries, fine-tuned models, and automated workflows that encode the organisation's unique institutional intelligence. It compounds over years, not quarters. And it requires governance infrastructure to build safely.

An organisation without AI governance cannot build Firm Sovereignty AI assets. It cannot control the data that trains its models, because there is no data governance standard. It cannot maintain proprietary prompt libraries, because there is no version control or access management. It cannot fine-tune models on proprietary data, because there is no framework for determining which data is safe to train on and which carries regulatory or IP risk. The governance infrastructure is not just a compliance requirement. It is the technical prerequisite for building the kind of AI that competitors cannot replicate.
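The data framework this paragraph describes amounts to a gate that every candidate training set must pass. A minimal sketch, assuming each data asset carries three governance attributes; the field names and the two rules are hypothetical simplifications, not a statement of what any particular law requires.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DataAsset:
    """Hypothetical governance metadata attached to a candidate training dataset."""
    name: str
    contains_personal_information: bool
    training_use_permitted: bool  # collection notice/consent covers model training
    ip_cleared: bool              # owned, or licensed for training


def training_decision(asset: DataAsset) -> tuple[bool, list[str]]:
    """Return (eligible, reasons). Any reason quarantines the asset for review."""
    reasons = []
    if asset.contains_personal_information and not asset.training_use_permitted:
        reasons.append("regulatory risk: personal information not cleared for training")
    if not asset.ip_cleared:
        reasons.append("IP risk: training rights not confirmed")
    return (not reasons, reasons)


if __name__ == "__main__":
    assets = [
        DataAsset("internal process manuals", False, True, True),
        DataAsset("scraped competitor reports", False, True, False),
        DataAsset("customer support transcripts", True, False, True),
    ]
    for asset in assets:
        eligible, reasons = training_decision(asset)
        print(f"{asset.name}: {'eligible' if eligible else 'quarantined'} {reasons}")
```

Returning the reasons alongside the decision keeps the gate auditable: a quarantined asset carries the specific risk that has to be resolved before it can enter a fine-tuning run.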

Firm Sovereignty -- Building an AI Moat

The full argument for Firm Sovereignty as the long-term governance dividend is in Firm Sovereignty -- Building an AI Moat.

Closing - The Governance Dividend

The organisations that are best positioned for enterprise AI in 2028 are building their governance infrastructure today. Not because they fear enforcement, though enforcement is real. Not because they want to signal values, though signalling matters. But because mature AI governance is the foundation of four compounding competitive advantages: deployment velocity through pre-clearance, revenue through enterprise deal qualification, talent through the governance posture signal, and Firm Sovereignty through the proprietary intelligence that only governed AI programmes can build.

Governance is not a cost that responsible organisations pay. It is an investment that high-performance AI programmes make -- and compound over the years that follow.

Your next steps:

Enterprise AI Governance Framework

The full governance architecture: four-layer model, compliance mapping, shadow AI, board reporting.

AI Governance Framework Template

Download the template that satisfies all seven enterprise procurement qualification questions.

Enterprise AI Transformation Playbook

The five-phase framework that produces the governance dividend.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a technology and AI strategy executive with more than 20 years' experience. He is a former CTO and a former Federal Government Technical Operations Manager responsible for national infrastructure across 20 cities and 4,000 users, and he has more than eight years' experience as a University Lecturer at CQUniversity. The GPS vs brake pedal governance framework in this article is the same architecture applied in the Expert AI Prompts live platform: 30 industries, 1,500+ prompts, 15 AI workflow systems, and near-zero daily operational intervention.