4 Gates to Production: How to Move AI From Pilot to Scale Without Failing

The reason most enterprise AI pilots never reach production is not that the technology failed. It is that nobody defined what production readiness actually looked like before the pilot began. The gates were never built -- so there was no way to know when a use case was ready to advance, and no permission to kill one that was not.

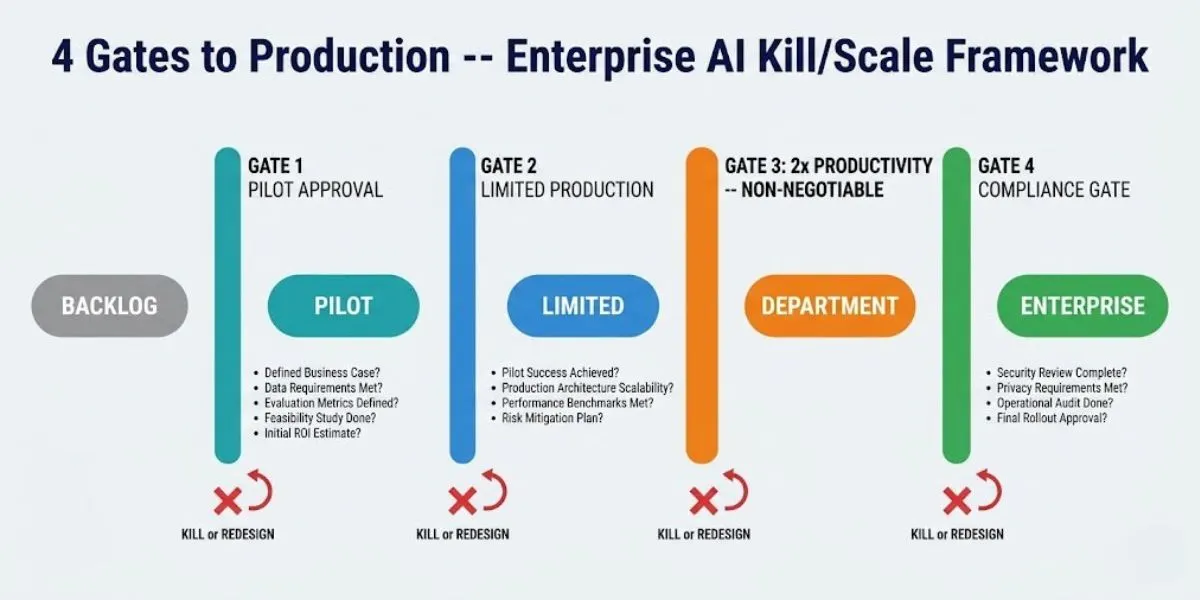

The four-gate production framework solves this. It creates four sequential checkpoints that every AI use case must pass before advancing to the next deployment stage. Each gate has specific, binary criteria -- met or not met. There is no 'mostly met.' There are no exceptions at Gate 3. And the kill decision made at any gate is a governance win, not a failure.
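The sequential, met-or-not-met logic described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `Gate` class, the `furthest_gate_passed` function, and the sample criteria names are all hypothetical, chosen only to show that a single unmet criterion blocks advancement.

```python
from dataclasses import dataclass


@dataclass
class Gate:
    name: str
    criteria: dict[str, bool]  # criterion -> met (True) or not met (False)

    def unmet(self) -> list[str]:
        # Binary check: there is no "mostly met" state to represent.
        return [c for c, met in self.criteria.items() if not met]


def furthest_gate_passed(gates: list[Gate]) -> tuple[int, list[str]]:
    """Walk the gates in order and stop at the first one with an unmet criterion.

    Returns (number of gates passed, unmet criteria at the blocking gate).
    """
    for passed, gate in enumerate(gates):
        blockers = gate.unmet()
        if blockers:
            return passed, blockers
    return len(gates), []


# Illustrative data only: a use case that clears Gate 1 and stalls at Gate 2.
gates = [
    Gate("Gate 1", {"business impact quantified": True, "Success Contract signed": True}),
    Gate("Gate 2", {"monitoring tooling operational": False, "integration tested": True}),
    Gate("Gate 3", {"2x productivity demonstrated": True}),
]
print(furthest_gate_passed(gates))
```

Because later gates are never evaluated once an earlier gate blocks, a strong result further down the list cannot be used to argue past a gap higher up.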

Section 1 - Why Most Organisations Deploy Without Gates -- and Pay for It

Without explicit deployment gates, enterprise AI programmes follow a predictable default process: a pilot is launched, it produces promising results, stakeholder enthusiasm builds, and the use case advances to broader deployment on the strength of the demo quality and the executive champion's confidence. No pre-defined success threshold is tested. No production integration is confirmed. No named owner has accepted operational accountability.

The pattern that follows is equally predictable. The broader deployment encounters legacy system integration issues that were not scoped during the pilot. Accuracy degrades at production volume with real-world edge cases. There is no monitoring tooling to detect the degradation. The business function that was supposed to operate the system discovers it was not involved in the design. The use case that looked like a success in the demo is quietly shelved within six months of deployment.

The four-gate framework does not make deployment harder. It makes deployment faster by removing the surprises that kill deployment momentum. Every criterion that is verified at a gate is a production failure that was prevented rather than discovered.

Escaping Pilot Purgatory

The root causes of pilot purgatory -- and why they are all preventable -- are covered in Escaping Pilot Purgatory.

The Enterprise AI Transformation Playbook

The full five-phase framework, including Phase 3 deployment architecture, is covered in The Enterprise AI Transformation Playbook.

Section 2 - Gate 1: Pilot Approval -- The Backlog to Pilot Gate

Stage: Backlog to Pilot | Gate owner: CAIO / CoE Director | Decision: Approve pilot or return to backlog with documented conditions

Gate 1 is the investment decision gate. It determines whether a use case has sufficient evidence to justify the engineering resource of a pilot. A use case that passes Gate 1 has a business case, a data foundation, a technical feasibility assessment, a named owner, and a signed Success Contract. A use case that fails Gate 1 is not declined outright: it is returned to the backlog with a documented list of the specific conditions required for resubmission.

The Five Gate 1 Criteria

• Business impact quantified in dollars -- not 'improved efficiency' but a specific dollar figure representing the value the use case will deliver if the Success Contract threshold is met. This is the number the board will evaluate. It must be specific, realistic, and signed off by the business owner before the pilot begins.

• Data readiness score of 3 or above -- assessed across availability, lineage, integration, accuracy, and governance. A score below 3 means the data is not ready for production and the pilot will either fail or produce sandbox results that do not replicate in production. Data readiness gaps must be documented and a remediation plan committed before Gate 1 is passed.

• Technical feasibility assessed including production integration requirements -- not just 'can the model do this?' but 'can the model do this integrated with our production systems, at our production data volume, in our production environment?' Legacy system integration requirements must be scoped at Gate 1, not discovered at Gate 3.

• Named business owner confirmed in writing -- one person, by name and role, who will operate the system in production and whose performance will be measured against its outcomes. Not the AI team. Not the steering committee. The person who is accountable when the system is live.

• Success Contract signed by all stakeholders with veto authority -- and kill date set. See Section 3.
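One way to make the "return to backlog with documented conditions" outcome concrete is to have the Gate 1 check produce the list of conditions directly. The sketch below is an assumption-laden illustration: the `Gate1Submission` fields, the `resubmission_conditions` function, the dollar figure, and the idea of a single overall data readiness score are hypothetical. The article states the threshold of 3 but not the scoring scale or how the five dimensions are combined.

```python
from dataclasses import dataclass
from typing import Optional

MIN_DATA_READINESS = 3  # threshold stated in the Gate 1 criteria


@dataclass
class Gate1Submission:
    business_impact_dollars: Optional[float]  # None if not yet quantified
    data_readiness_score: float               # overall score; the scale is an assumption
    integration_scoped: bool                  # production integration requirements scoped
    business_owner: Optional[str]             # one named person, confirmed in writing
    success_contract_signed: bool             # signed, with the kill date set


def resubmission_conditions(s: Gate1Submission) -> list[str]:
    """Empty list: approve the pilot. Otherwise: return to backlog with these conditions."""
    conditions = []
    if not s.business_impact_dollars or s.business_impact_dollars <= 0:
        conditions.append("Quantify business impact in dollars")
    if s.data_readiness_score < MIN_DATA_READINESS:
        conditions.append("Raise data readiness score to 3 or above")
    if not s.integration_scoped:
        conditions.append("Scope production integration requirements")
    if not s.business_owner:
        conditions.append("Confirm a named business owner in writing")
    if not s.success_contract_signed:
        conditions.append("Sign the Success Contract and set the kill date")
    return conditions


# Illustrative submission: strong business case, weak data, no named owner yet.
submission = Gate1Submission(
    business_impact_dollars=1_200_000,  # hypothetical figure
    data_readiness_score=2.5,
    integration_scoped=True,
    business_owner=None,
    success_contract_signed=True,
)
for condition in resubmission_conditions(submission):
    print("-", condition)
```

The output doubles as the documented list the use case carries back to the backlog, so a failed Gate 1 always comes with a specific path to resubmission.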

Enterprise AI Readiness Checklist

The data readiness dimension of Gate 1 maps directly to the Enterprise AI Readiness Checklist -- 25 questions including the full data readiness assessment.

Section 3 - The Success Contract: What Makes Gate 1 Non-Negotiable

The Success Contract is signed before any pilot engineering begins. It is the single document that most reliably separates organisations that make it to production from those that stay in pilot purgatory. Without it, every stakeholder can redefine success at Month 6 to match whatever the pilot actually achieved. With it, success criteria are specific, measurable, and non-negotiable before the first line of code is written.

The Success Contract converts Gate 3 -- the 2x Productivity Gate -- from a subjective judgement into a mechanical decision. The baseline was measured before the pilot began. The threshold was agreed before the results were known. The kill date was set before the first sprint. None of these elements can be renegotiated after the fact.

The Five Elements Every Success Contract Must Contain

1. Success criteria defined in production terms -- not sandbox accuracy. Not 'improve efficiency' but 'reduce manual contract review time from 4 hours to under 90 minutes, with an error rate below 2%, across the full production document volume.' The criteria must be testable with real users and realistic production data, not curated pilot data.

2. The go/no-go threshold -- the exact numeric improvement required to authorise production deployment. Defined and agreed before results are known. Not negotiated after results are in. A threshold that moves to match the outcome is not a threshold -- it is retrospective rationalisation.

3. The named deployment authority -- one person, by name. The business owner who will operate the system in production and whose performance will be measured against its outcomes. They sign the Success Contract. They make the Gate 3 decision.

4. What happens if the threshold is missed -- agreed before the pilot begins. Options: kill the initiative and return to backlog; return to redesign with a specific revised hypothesis and new threshold; extend by a defined period with a specific new threshold. The decision is mechanical, not discretionary. Sunk cost does not override the kill clause.

5. The kill date -- a specific calendar date by which the go/no-go decision is made and acted upon. A pilot without a kill date does not end -- it drifts into purgatory. The kill date converts the decision from an ongoing discussion into a scheduled event.
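The five elements map naturally onto a small immutable record, which makes the "cannot be renegotiated after the fact" property explicit. The following is a sketch under stated assumptions: the `SuccessContract` and `MissAction` names, the field layout, the placeholder owner, and the kill date are hypothetical. The thresholds reuse the contract review example from element 1.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class MissAction(Enum):
    KILL = "kill and return to backlog"
    REDESIGN = "redesign with a revised hypothesis and new threshold"
    EXTEND = "extend by a defined period with a new threshold"


@dataclass(frozen=True)  # frozen: the signed terms cannot be edited in place
class SuccessContract:
    success_criteria: str        # element 1: stated in production terms
    max_review_minutes: float    # element 2: go/no-go threshold (time)
    max_error_rate: float        # element 2: go/no-go threshold (quality)
    deployment_authority: str    # element 3: one named person
    on_miss: MissAction          # element 4: agreed before the pilot begins
    kill_date: date              # element 5: decision deadline


def decide(contract: SuccessContract, review_minutes: float, error_rate: float) -> str:
    """Mechanical decision: compare measured results with the pre-agreed thresholds."""
    met = (review_minutes < contract.max_review_minutes
           and error_rate < contract.max_error_rate)
    return "go" if met else contract.on_miss.value


contract = SuccessContract(
    success_criteria="Manual contract review under 90 minutes, error rate below 2%",
    max_review_minutes=90,
    max_error_rate=0.02,
    deployment_authority="Named business owner (placeholder)",
    on_miss=MissAction.KILL,
    kill_date=date(2026, 6, 30),  # hypothetical date
)
print(decide(contract, review_minutes=85, error_rate=0.015))
print(decide(contract, review_minutes=110, error_rate=0.015))
```

Note that `decide` takes no argument for sunk cost, stakeholder enthusiasm, or demo quality. Anything not written into the contract has no way to influence the outcome.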

Section 4 - Gate 2: Pilot to Limited Production

Stage: Pilot to limited deployment with real users | Gate owner: AI Product Manager | Decision: Advance to limited production, extend the pilot by a maximum of 30 days with specific revised criteria, or invoke the kill clause

Gate 2 is the evidence gate. It verifies that the use case performs as the Success Contract requires -- not in curated pilot conditions, but with real users generating real data on real production systems. This gate is passed when the pilot has produced the evidence needed to justify the change management and operational investment of a live deployment.

The Four Gate 2 Criteria

• All Success Contract metrics met with real users operating on realistic production data -- not curated pilot data. If the pilot was run on a cleaned, curated data subset, Gate 2 requires a production data validation run before the gate can be passed. Accuracy achieved on curated data is not evidence of production performance.

• At least one production system integration tested end-to-end -- the integration with the legacy system or operational platform that production users will actually use. Not designed. Not confirmed in principle. Tested and working. Legacy system integration is the number one root cause of AI scaling failure when it is not validated before Gate 2.

• Monitoring tooling deployed and operational -- drift detection, accuracy monitoring, alerting, and incident response procedure. An AI system without monitoring is not a production system; it is a sandbox with real users. Monitoring is a Gate 2 requirement, not a post-deployment aspiration.

• Change management plan active with first training cohort complete -- the users who will operate the system in limited production have been trained and have demonstrated they can use it. ADKAR Awareness and Desire stages are complete before any user sees a live system.

If Gate 2 criteria are not met by the Success Contract kill date, the options are: extend the pilot by a maximum of 30 days with specific revised criteria documented in writing; or invoke the kill clause. No informal extensions. No moving of the goalposts. If 30 days does not produce the evidence needed to pass Gate 2, the use case is not ready for production and extending further costs more in engineering and stakeholder attention than the potential value justifies.
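The extension rule above can be written as a small decision function. This sketch assumes a single extension only, which is one reading of "extending further" not being justified, and the names `gate2_options` and `extended_kill_date` are hypothetical.

```python
from datetime import date, timedelta

MAX_EXTENSION_DAYS = 30  # maximum extension stated for Gate 2


def extended_kill_date(kill_date: date, days: int) -> date:
    """Return the new deadline, refusing any extension longer than the cap."""
    if not 1 <= days <= MAX_EXTENSION_DAYS:
        raise ValueError(f"extension must be 1 to {MAX_EXTENSION_DAYS} days, got {days}")
    return kill_date + timedelta(days=days)


def gate2_options(criteria: dict[str, bool], today: date, deadline: date,
                  extension_used: bool) -> list[str]:
    """List the permitted decisions given the evidence and the current deadline."""
    if all(criteria.values()):
        return ["advance to limited production"]
    if today < deadline:
        return ["continue pilot"]
    if extension_used:  # assumption: one extension only
        return ["invoke kill clause"]
    return ["extend up to 30 days with revised criteria in writing",
            "invoke kill clause"]


criteria = {
    "Success Contract metrics met on production data": True,
    "production integration tested end-to-end": False,
    "monitoring deployed and operational": True,
    "first training cohort complete": True,
}
kill_date = date(2026, 6, 30)  # hypothetical date
print(gate2_options(criteria, today=kill_date, deadline=kill_date, extension_used=False))
print(extended_kill_date(kill_date, 30))
```

Once the extension has been used, the function returns a single option. That is the code-level equivalent of "no informal extensions".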

Section 5 - Gate 3: The 2x Productivity Gate -- Why There Are No Exceptions

Stage: Limited deployment to department-wide | Gate owner: Named deployment authority from Success Contract | Decision: Advance to department-wide or KILL -- no other options

Gate 3 is the hard gate. It requires a 2x productivity improvement over the pre-pilot baseline, demonstrated with real production data across the full user population of the limited deployment -- and there are no exceptions. The use case either demonstrates 2x improvement or it is killed. There is no partial credit. There is no advancement on 1.7x with a plan to reach 2x at scale.

Why 2x Is the Threshold, Not 1.5x or 1.8x

The 2x threshold is not arbitrary. It is the minimum productivity improvement that makes the business case for enterprise-scale deployment credible -- to both the workforce absorbing the disruption and the board funding the investment.

Below 2x productivity improvement, the disruption cost of enterprise deployment -- change management, retraining, workflow redesign, support overhead during transition -- exceeds the value delivered. The organisation spends significant capital to achieve a marginal outcome. The workforce absorbs disruption for a productivity gain that is barely perceptible. The board funds a change management programme that the ROI does not justify.

Above 2x, the case is clear: the value delivered outweighs the disruption cost, the workforce sees demonstrable benefit in their daily work, and the board has the evidence to fund the next wave. The 4x speed with quality benchmark -- the Phase 5 production target -- is not achievable without first demonstrating 2x. Any use case that cannot reach 2x in limited production will regress toward the baseline as edge cases and exception handling accumulate at enterprise scale.

The Gate 3 criteria beyond the 2x threshold:

• Operational runbook complete and documented -- every edge case, exception, and failure mode that appeared in limited production is documented with a defined response procedure. The business function can operate the system without the AI team present.

• Escalation paths for AI errors and exceptions documented and tested -- the procedure for what happens when the AI system produces an incorrect, harmful, or unexpected output. Not designed. Tested.

• Named business owner has accepted full operational ownership from the AI Product Manager -- in writing. The transition from AI team ownership to business function ownership is a Gate 3 requirement, not a Phase 4 aspiration.
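As a worked example of the Gate 3 arithmetic, the sketch below measures productivity as baseline time per task divided by current time per task. That definition is an assumption, since the article does not prescribe a formula, as is treating exactly 2.0 as a pass. The function names are hypothetical.

```python
REQUIRED_MULTIPLE = 2.0  # the 2x threshold; ">=" is an assumption


def productivity_multiple(baseline_minutes: float, current_minutes: float) -> float:
    """Productivity gain as a time ratio: 240 min down to 120 min is 2.0x."""
    if baseline_minutes <= 0 or current_minutes <= 0:
        raise ValueError("task times must be positive")
    return baseline_minutes / current_minutes


def gate3_decision(multiple: float, runbook_complete: bool,
                   escalation_tested: bool, ownership_accepted: bool) -> str:
    """Two outcomes only: advance to department-wide deployment, or kill."""
    passed = (multiple >= REQUIRED_MULTIPLE
              and runbook_complete
              and escalation_tested
              and ownership_accepted)
    return "advance" if passed else "kill"


# Contract review example: 4 hours (240 min) reduced to 90 minutes.
strong = productivity_multiple(240, 90)
print(round(strong, 2), gate3_decision(strong, True, True, True))

# 240 minutes reduced to 141 minutes is roughly 1.7x, which does not pass.
weak = productivity_multiple(240, 141)
print(round(weak, 2), gate3_decision(weak, True, True, True))
```

The second case is a 41% reduction in task time, which would look impressive in a demo. Under the Gate 3 rule it is still a kill.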

Section 6 - Gate 4: Enterprise-Wide Compliance

Stage: Department-wide to enterprise-wide | Gate owner: Governance and Compliance Lead | Decision: Advance to enterprise-wide or pause expansion until compliance requirements are fully satisfied

Gate 4 is the compliance gate. It determines whether a use case can be deployed across the entire organisation, including all jurisdictions in which the organisation operates. Gate 4 applies the highest-common-denominator compliance standard: EU AI Act as the primary benchmark, with Australian Privacy Act and SOC 2 requirements verified in parallel.

The most common mistake at Gate 4 is treating compliance as a post-deployment checklist. It is a deployment gate. A use case that has not satisfied Gate 4 criteria cannot be deployed enterprise-wide, regardless of its performance at the department level. Compliance is not the last step -- it is a parallel workstream that must be completed before enterprise deployment is authorised.

The Four Gate 4 Criteria

• EU AI Act risk classification complete -- the use case has been classified against the four EU AI Act risk tiers, and for any HIGH RISK system, Annex IV technical documentation has been produced: system description, design decisions, data governance documentation, human oversight design, and the EU AI database registration (where required). Full enforcement deadline for Annex III high-risk systems: 2 August 2026.

• Australian Privacy Act APP 1.7 disclosures published where applicable -- any AI use case that uses personal information of Australian individuals to make decisions that materially affect them requires a plain-language privacy policy disclosure and the right to request explanation and human review. Published before enterprise-wide deployment.

• SOC 2 controls operational and evidenced -- the five Trust Services Criteria (Security, Availability, Processing Integrity, Confidentiality, Privacy) are verified against the AI workloads in the enterprise deployment. Not designed. Operational and evidenced.

• Board governance reporting template populated and reviewed -- the quarterly AI Governance Dashboard has a row for this use case: production count, value realised, compliance status, and named owner. The board can exercise AI governance oversight of this system before enterprise-wide deployment begins.
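Two of the four criteria are conditional ("for any HIGH RISK system", "where applicable"), which is easy to lose in a flat checklist. The sketch below shows one way to encode that. The `Gate4Evidence` fields and the `gate4_outstanding` function are hypothetical names, and the code is an illustration of the gate logic, not legal guidance.

```python
from dataclasses import dataclass


@dataclass
class Gate4Evidence:
    eu_risk_tier_classified: bool
    is_high_risk: bool                    # result of the EU AI Act classification
    annex_iv_documentation_complete: bool
    affects_australian_individuals: bool  # uses their personal information for material decisions
    app_disclosure_published: bool
    soc2_controls_evidenced: bool
    board_dashboard_row_reviewed: bool


def gate4_outstanding(e: Gate4Evidence) -> list[str]:
    """Empty list: advance enterprise-wide. Otherwise: pause expansion until resolved."""
    outstanding = []
    if not e.eu_risk_tier_classified:
        outstanding.append("Complete EU AI Act risk classification")
    elif e.is_high_risk and not e.annex_iv_documentation_complete:
        outstanding.append("Produce Annex IV technical documentation")
    if e.affects_australian_individuals and not e.app_disclosure_published:
        outstanding.append("Publish Australian Privacy Act disclosure")
    if not e.soc2_controls_evidenced:
        outstanding.append("Evidence SOC 2 controls as operational")
    if not e.board_dashboard_row_reviewed:
        outstanding.append("Populate and review board dashboard row")
    return outstanding


evidence = Gate4Evidence(
    eu_risk_tier_classified=True,
    is_high_risk=True,
    annex_iv_documentation_complete=False,
    affects_australian_individuals=False,
    app_disclosure_published=False,
    soc2_controls_evidenced=True,
    board_dashboard_row_reviewed=True,
)
print(gate4_outstanding(evidence) or "advance enterprise-wide")
```

In this example the missing Australian disclosure is not flagged because the use case does not affect Australian individuals, while the missing Annex IV documentation pauses expansion on its own.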

Enterprise AI Governance Framework

The complete Gate 4 compliance architecture -- EU AI Act, AU Privacy Act, SOC 2 -- is covered in the Enterprise AI Governance Framework.

AI Governance Framework Template

Download the AI Governance Framework Template for the complete Gate 4 compliance documentation package, including the Annex IV technical documentation template.

Section 7 - The Kill Decision: Why Failing a Gate Is a Governance Win

A gate that a use case fails is functioning correctly. The entire purpose of the gate framework is to identify use cases that are not ready to advance before they consume the enterprise-wide deployment investment of change management, retraining, and stakeholder time at scale.

The kill decision made with discipline -- at Gate 1 before the pilot begins, at Gate 2 before the limited production investment, or at Gate 3 before department-wide deployment -- demonstrates more AI governance maturity than advancing a use case that has not met the criteria. Every kill decision frees engineering capacity for the next use case in the prioritised pipeline. Every kill decision builds the board's confidence that the AI programme is governed by evidence rather than momentum.

The three conditions that make a kill decision possible:

• Pre-defined criteria -- without them, there is no principled basis for a kill. The Success Contract provides the criteria before the results are known.

• A named decision authority with the mandate to enforce it -- the named deployment authority from the Success Contract. Their signature on the Success Contract is the pre-commitment to act on the kill clause.

• A funded pipeline of next use cases -- without a queue of scored, prioritised alternatives ready to begin, killing one pilot feels like a strategic setback. With a pipeline, it feels like an acceleration.

Closing - What the Gates Actually Build

The four-gate framework is not a checklist. It is an organisational capability. Every use case that passes all four gates leaves behind: a production integration that the next use case can inherit, a governance documentation template that reduces Gate 4 overhead by 60%, a monitoring infrastructure that the next use case deploys at zero marginal cost, and a business function that understands how to operate AI in production.

The gates build the compounding infrastructure that makes the tenth use case deploy in 21 days when the first took six months. They build the board's confidence that AI investment is governed by evidence. They build the organisation's ability to kill a failing programme quickly and redirect the investment rather than stranding it.

Continue building your deployment framework:

The Enterprise AI Transformation Playbook

The full five-phase framework with Phase 3 deployment architecture in detail.

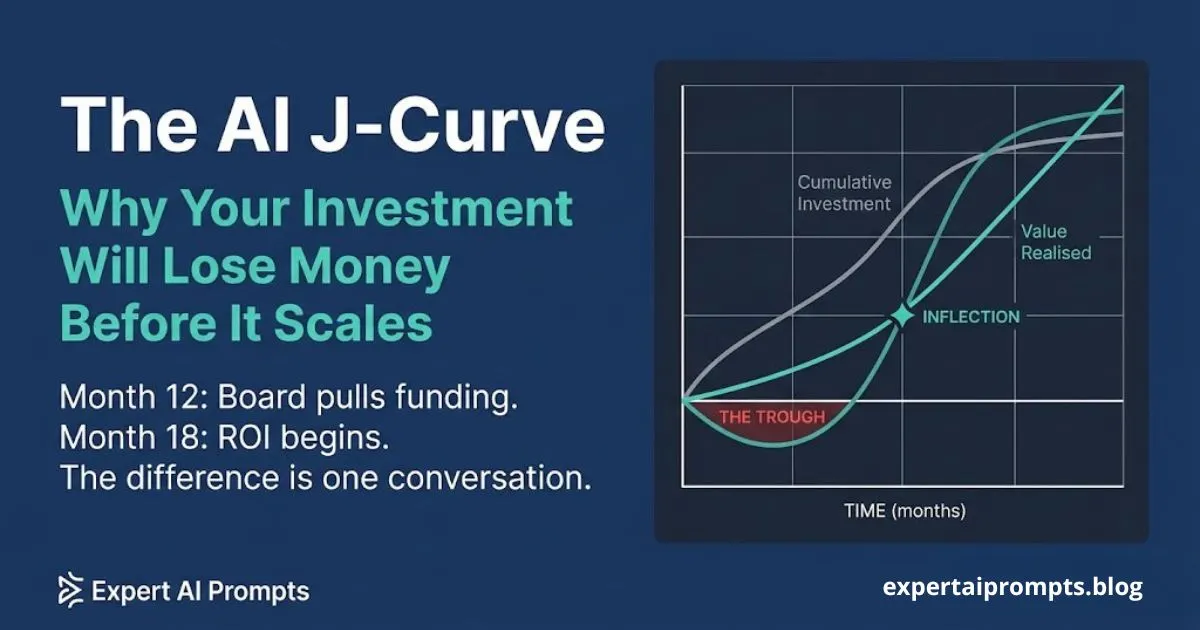

The AI J-Curve: Why Your Investment Will Lose Money Before It Scales

How to set board expectations for the investment pattern that the gates are protecting.

Escaping Pilot Purgatory

The root causes of pilot failure that the gate framework is designed to prevent.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. He is a former CTO and Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and an 8+ year University Lecturer at CQUniversity. The four-gate framework in this article is the Kill/Scale production gate system applied in the Expert AI Prompts methodology -- 30 industries, 1,500+ prompts, 15 AI workflow systems.