Top AI Consulting Firms in 2026: How to Choose the Right Enterprise AI Partner

The enterprise AI consulting market in 2026 has a discovery problem. Not a quality problem -- most of the major firms are technically competent. Not a commitment problem -- AI is now the largest single growth practice at McKinsey, BCG, Accenture, Deloitte, and IBM Consulting simultaneously. The problem is that every procurement team searching for 'top AI consulting firms' receives a list of the same ten names, evaluated against the same generic criteria, and selects the firm that presented best in the pitch -- without ever asking the question that actually determines whether the engagement succeeds.

The question is not: which AI consulting firm is best? The right question is: for our specific maturity stage, our specific challenge, and our specific internal capability, which type of firm is the right partner -- and should we be engaging a consulting firm at all?

This article is an evaluation from the practitioner side. I have worked with, evaluated, and in several cases built the methodology that consulting firms sell. The four categories, six selection criteria, and five questions in this article will tell you more about your AI consulting decision than any ranked list of firm names.

AI Buy vs Build Decision Framework

If your immediate decision is whether to engage a consulting partner or build internal AI strategy capability, the AI Buy vs Build Decision Framework provides the structured seven-factor process for making that decision.

Section 1 - The Four Categories of AI Consulting Firms

The first structuring insight for any enterprise AI consulting decision is that there is no single 'AI consulting market.' There are four distinct categories of firm, each with a different primary value proposition, a different engagement model, and a different set of use cases where it genuinely outperforms the alternatives.

Evaluating all four against the same criteria is the procurement mistake that produces the most expensive decisions. A strategy-led firm evaluated on implementation speed will look slow. An implementation-led firm evaluated on strategic independence will look constrained. The categories are not better or worse -- they are different responses to different enterprise needs.

Enterprise AI Transformation Playbook

Your current stage in the Enterprise AI Transformation Playbook determines which category of consulting firm is most likely to move your programme forward.

Section 2 - Category 1: Strategy-Led Advisory Firms

Strategy-led advisory firms -- including McKinsey & Company, Boston Consulting Group, Bain & Company, and their equivalents -- enter enterprise AI engagements at the portfolio and investment strategy level. They are not primarily technology implementers. Their value proposition is the application of strategic frameworks to the AI investment decision: which use cases to prioritise, how to structure the AI investment portfolio, how to sequence transformation phases, and how to make the board case for AI investment.

These firms have published extensively on enterprise AI strategy. BCG's 10-20-70 change management principle, McKinsey's use case prioritisation research, and Bain's AI ROI measurement frameworks are all substantive methodological contributions to the field. Their research is reliable, their frameworks are rigorous, and their engagement quality at the senior level is consistently high.

What Strategy-Led Firms Do Well

• Defining the AI investment thesis and portfolio strategy at board level -- the 'where to play and how to win' decisions that set the direction for everything that follows

• Executive alignment work: building consensus among a leadership team that has competing AI priorities and limited budget

• Market benchmarking: comparative data on how competitors and sector peers are deploying AI, which de-risks the internal investment case

• Operating model design: how the AI organisation should be structured, how the CoE should be governed, how the transformation should be phased

• Board communication: building and presenting the AI business case in the language of financial returns, risk management, and competitive positioning

Where Strategy-Led Firms Have Limits

The primary limitation of strategy-led advisory firms for most enterprise AI engagements is the gap between strategic recommendation and operational reality. A McKinsey or BCG engagement produces an excellent AI strategy document and a transformation roadmap. It does not produce a running AI system. The implementation -- the data pipelines, the model deployment, the governance infrastructure, the change management, the Champion Network -- requires a different kind of engagement.

The second limitation is cost-per-insight at the implementation level. Senior partner time at a strategy-led firm is priced for strategic work, not for governance documentation or change management delivery. Using a strategy-led firm to build your AI Acceptable Use Policy or run your Champion Network programme is paying executive advisory rates for operational programme management.

Section 3 - Category 2: Implementation-Led Global Firms

Implementation-led global firms -- Accenture, Deloitte Consulting, IBM Consulting, Capgemini, Cognizant, and Infosys among the largest -- are primarily delivery organisations. Their value proposition is not strategic definition but production deployment: the capacity to move from a defined AI strategy to running production systems at enterprise scale, with the workforce, methodology, and technology practice to do it.

These firms have made very large investments in AI delivery capability. Accenture's AI practice employs tens of thousands of AI-credentialled practitioners. Deloitte's State of AI in the Enterprise research is published annually and cited across the industry. IBM Consulting's AI delivery methodology is mature, built on decades of enterprise technology delivery experience. At the right engagement stage, these firms offer significant delivery value.

What Implementation-Led Firms Do Well

• Large-scale production deployment across multiple business functions simultaneously -- the 'industrial rollout' that requires coordinated programme management at a scale most internal teams cannot staff

• Integration work: connecting AI systems to existing enterprise technology infrastructure, ERP systems, CRM platforms, and data lakes

• Change management at workforce scale: running structured adoption programmes across thousands of employees in parallel

• Regulatory compliance documentation: EU AI Act Annex IV technical documentation, SOC 2 controls, and GDPR mapping as delivery outputs

• Vendor relationships: negotiating enterprise AI platform contracts with Microsoft, Google, Anthropic, and AWS at volumes that produce significantly better commercial terms than individual enterprises can achieve independently

Where Implementation-Led Firms Have Limits

The primary structural tension for implementation-led firms is the relationship between their AI consulting practice and their platform vendor partnerships. Most large implementation firms have significant commercial relationships with one or more major AI platform vendors -- Microsoft Solutions Partner designations, Google Cloud Premier Partner status, AWS Premier Tier Services Partner status. These relationships are commercially valuable to the consulting firm. They also create a structural incentive to recommend the platforms where the firm has the deepest practice, regardless of whether that platform is the optimal choice for the client's specific architecture.

This is not a criticism of any specific firm -- it is a structural reality of the consulting industry that procurement teams should price into their selection process. The recommendation is to ask every implementation-led firm directly: what are your current platform vendor commercial relationships, and how do those relationships influence your architecture recommendations? A firm that cannot answer this question clearly is not a firm that should be designing your AI architecture.

Section 4 - Category 3: Technology-Aligned Advisory Practices

Technology-aligned advisory practices are a distinct and growing category: typically smaller firms or boutique practices with deep expertise in a specific AI technology domain or industry vertical. They are not full-service consulting firms -- they bring specialist capability that neither strategy-led nor implementation-led firms can match in their specific domain.

Examples include specialist AI governance practices, domain-specific AI labs (legal AI, healthcare AI, financial AI), model evaluation and safety firms, and independent AI architects who work alongside internal teams without the overhead of a large firm engagement. The Expert AI Prompts methodology practice -- providing validated AI transformation frameworks for enterprise leaders building internal AI capability -- occupies this category.

When Technology-Aligned Firms Are the Right Choice

• Deep domain expertise is required that neither strategy-led nor implementation-led generalists have -- a financial services firm building proprietary credit scoring AI needs a different level of domain specificity than a generalist can provide

• The engagement purpose is capability transfer rather than delivery -- building internal team capability rather than delivering a system

• The required methodology is more specific than what a large firm's standardised delivery framework provides

• Budget constraints make large-firm overhead costs prohibitive, but specialist expertise is still required

• Independence from vendor relationships is specifically required for architecture decision work

Section 5 - Category 4: Governance and Compliance Specialists

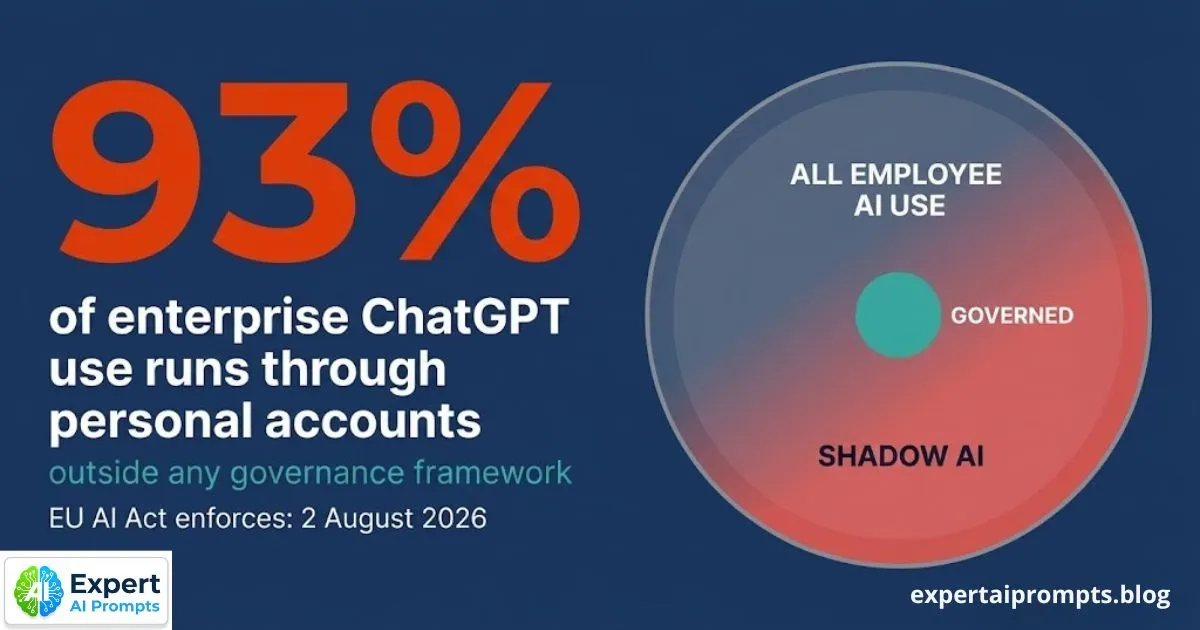

The EU AI Act's general application date of 2 August 2026, when most of its obligations begin to apply, has created a category of AI consulting demand that none of the preceding three categories addresses as a primary capability. Organisations need EU AI Act compliance documentation, risk classification frameworks, and governance architecture built before that date, delivered by practitioners who understand the regulatory requirements at the technical and operational level.

Governance and compliance specialists are typically boutique practices or individual practitioners with deep regulatory expertise in AI governance, data protection law, and technology risk. They do not replace strategy-led or implementation-led firms for their respective primary functions. They provide the specific governance deliverables -- Annex IV technical documentation, risk classification matrix, AI Acceptable Use Policy, incident response framework -- that neither strategy consultants nor implementation firms have historically treated as a primary delivery capability.

The practical test: if you are a Chief AI Officer (CAIO) or governance lead at a mid-market enterprise with a 2 August 2026 EU AI Act compliance requirement and a modest engagement budget, a governance specialist who delivers the specific documentation you need in eight weeks is almost certainly a better fit than a large-firm engagement that takes six months to mobilise and deliver, and that produces the compliance documentation as a secondary workstream.

Enterprise AI Governance Framework

The Enterprise AI Governance Framework covers the full governance architecture that governance specialist engagements typically deliver.

AI Governance Framework Template

The AI Governance Framework Template is the downloadable governance documentation baseline that many organisations use before engaging a governance specialist -- to reduce engagement cost and scope.

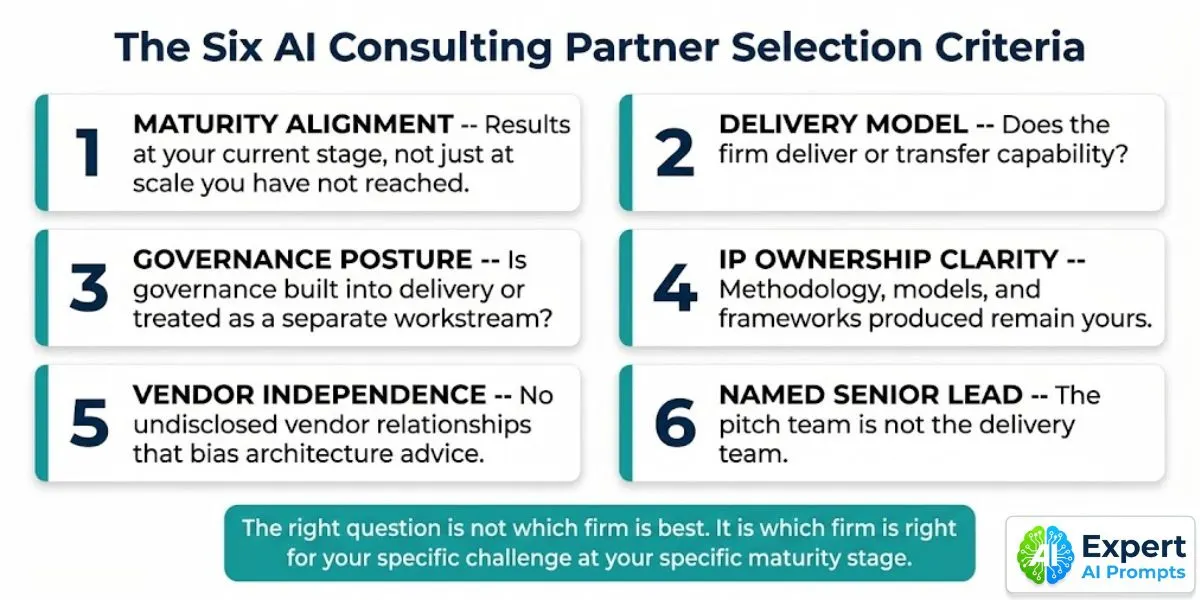

Section 6 - The Six Selection Criteria for an Enterprise AI Consulting Partner

Apply these criteria after you have identified the right firm category for your specific challenge. The criteria are sequence-dependent: Criterion 1 determines whether you are evaluating the right category. Criteria 2-6 determine which firm within the right category is the right partner.

1. Maturity Alignment

The test: Can the firm demonstrate results at your current maturity stage -- not only at a stage you have not yet reached? A firm that has delivered ten Phase 5 Firm Sovereignty AI programmes has limited direct value for an organisation at Phase 1 that needs to build foundational infrastructure and select its first use cases. The methodologies are different, the governance requirements are different, and the change management challenges are different.

Ask: Show me two engagements you have delivered for organisations at our current maturity stage -- not at the maturity stage you are trying to move us toward. What were the specific deliverables at that stage, and what were the measurable outcomes six months after the engagement concluded?

2. Delivery Model -- Deploy or Transfer?

The test: At the end of this engagement, is the AI capability inside the organisation -- in the skills of your people, the documented methodology of your programme, and the governance infrastructure your team can maintain -- or is it inside the consulting firm, and therefore gone when the engagement ends?

Ask: What is your explicit capability transfer methodology? At the end of this engagement, which specific capabilities will our team be able to perform independently that they cannot perform today -- and how will you measure that transfer?

3. Governance Posture

The test: Is AI governance built into this firm's delivery methodology from the start, or is it a separate workstream that gets compressed or dropped when timelines tighten? The firms that treat governance as a parallel track -- something to add before launch -- consistently produce governance documentation that does not reflect the production system, does not satisfy EU AI Act requirements, and is not maintained after the engagement concludes.

Ask: Walk me through how governance requirements -- EU AI Act risk classification, data handling documentation, audit trail design -- are integrated into your standard delivery phases. At what phase do you produce the Annex IV technical documentation, and who owns it after the engagement?

4. IP Ownership Clarity

The test: Every methodology framework, process document, governance template, and technical design produced during the engagement: who owns it? Some consulting firms have standard contract terms that retain IP ownership of frameworks produced during delivery. Read the contract carefully before signature.

Ask: All work product, frameworks, governance documentation, and technical designs produced during this engagement: confirm in writing that these are the property of our organisation, not licensed from your firm's IP library. What standard IP terms appear in your master services agreement, and what amendments are you willing to make?

5. Vendor Independence

The test: Are the firm's AI architecture recommendations driven by your organisation's specific requirements, or by the firm's commercial relationships with AI platform vendors? Vendor relationships are not disqualifying -- they are ubiquitous in the consulting industry -- but they must be disclosed, and their influence on recommendations must be explicitly managed.

Ask: List every AI platform vendor with which your firm has a commercial partnership, preferred partner status, or revenue-sharing arrangement. For each, confirm whether your engagement team's compensation or performance metrics are linked to deployment of that vendor's platform.

6. Named Senior Engagement Lead

The test: Will the practitioner who leads the pitch presentation -- the Partner or Managing Director who presents the methodology and the firm's track record -- also lead the engagement delivery? In large consulting firms, the pitch team and the delivery team are routinely different people: the senior partner closes the deal, and the delivery team is staffed with practitioners two to three levels junior to the pitch lead.

Ask: Who specifically will be leading day-to-day delivery on this engagement? Provide their CV. What is the specific commitment -- in hours per week -- of the partner who has presented today, for the duration of the engagement? This commitment should be written into the contract.
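The sequence described in this section, where Criterion 1 acts as a gate and Criteria 2-6 rank the remaining firms, can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed scoring method: the 0-5 scale, the equal default weights, and every name in the code (FirmAssessment, shortlist, the criterion keys, the firm names) are assumptions introduced here for the example.

```python
from dataclasses import dataclass

# Criteria 2-6 from this section. Criterion 1 (maturity alignment) is a gate,
# not a score. The key names are hypothetical labels for illustration.
WITHIN_CATEGORY_CRITERIA = (
    "delivery_model",
    "governance_posture",
    "ip_ownership",
    "vendor_independence",
    "named_senior_lead",
)


@dataclass
class FirmAssessment:
    name: str
    maturity_aligned: bool   # Criterion 1: evidence at your current stage
    scores: dict             # Criteria 2-6 on an assumed 0-5 scale


def shortlist(assessments, weights=None):
    """Drop firms that fail the maturity gate, then rank by weighted score."""
    # Equal weights are an assumption; adjust them to your own priorities.
    weights = weights or {c: 1.0 for c in WITHIN_CATEGORY_CRITERIA}
    ranked = []
    for a in assessments:
        if not a.maturity_aligned:
            continue  # fails Criterion 1, so Criteria 2-6 are not considered
        missing = set(WITHIN_CATEGORY_CRITERIA) - set(a.scores)
        if missing:
            raise ValueError(f"{a.name}: missing scores for {sorted(missing)}")
        total = sum(weights[c] * a.scores[c] for c in WITHIN_CATEGORY_CRITERIA)
        ranked.append((a.name, total))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates: Firm B scores highest but fails the maturity gate.
candidates = [
    FirmAssessment("Firm A", True, {c: 3 for c in WITHIN_CATEGORY_CRITERIA}),
    FirmAssessment("Firm B", False, {c: 5 for c in WITHIN_CATEGORY_CRITERIA}),
    FirmAssessment("Firm C", True, {c: 4 for c in WITHIN_CATEGORY_CRITERIA}),
]
print(shortlist(candidates))
```

The design point is the gate: a firm with strong scores on Criteria 2-6 is still excluded if it cannot show results at your current maturity stage.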

Section 7 - The Question Every Procurement Team Gets Wrong

Most enterprise AI consulting procurement processes begin with the wrong question: 'Which AI consulting firm is best?' The question assumes a ranking that does not exist. McKinsey is better than Accenture at board-level AI strategy facilitation. Accenture is better than McKinsey at large-scale production deployment. Neither is better or worse in absolute terms -- they are different tools for different jobs.

The question that produces good consulting engagement decisions is: 'What specific outcome do we need in the next 90 days -- and which type of firm has the deepest demonstrated track record of producing that specific outcome for organisations at our current maturity stage?' This question has a specific, answerable form. It requires you to define the outcome before you evaluate the firm. Most procurement processes invert this sequence: they evaluate firms first, then let the selected firm define the outcome.

The firm that wins your procurement process may not be the firm that is right for your challenge. The best pitch is not the same as the best delivery. Evaluate firms against the specific outcome you need to produce -- not against the generic quality of their AI practice.

The same selection principle applies to AI use cases: defining the outcome before selecting the approach is the discipline that separates programmes that reach production from those that enter Pilot Purgatory.

Section 8 - When Internal Capability Is the Right Answer

The consulting engagement question has a fifth answer, beyond the four firm categories, that is missing from most procurement processes: do not engage a consulting firm. For a specific set of enterprise AI challenges, building internal AI strategy capability -- using validated methodology rather than external consulting engagement -- is the right answer, and the organisations that make this choice consistently outperform those that outsource their AI strategy.

The conditions under which internal capability beats consulting engagement are:

• The challenge is primarily strategic and governance-focused, not delivery-scale -- the organisation needs AI strategy and governance architecture, not large-scale production deployment

• Internal technical capacity exists for the implementation work, but the strategic and governance framework is absent

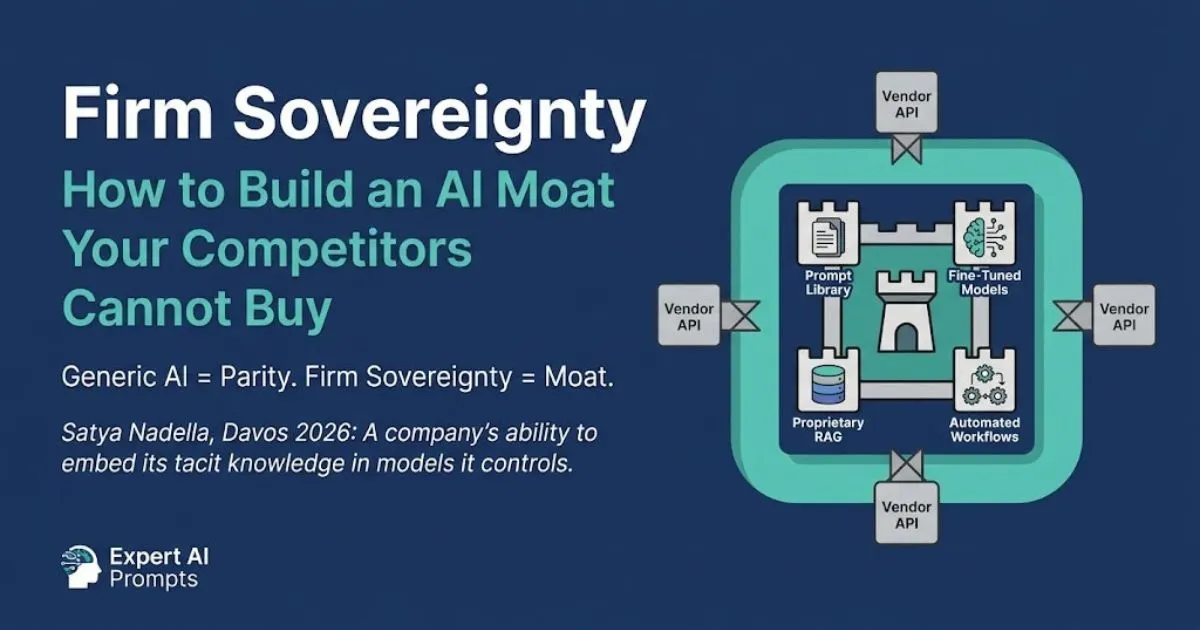

• The priority is building a proprietary internal AI capability that compounds over time -- a Firm Sovereignty outcome that a consulting engagement cannot produce because the IP and knowledge do not remain in the organisation

• The engagement budget is insufficient for a credible large-firm engagement -- a modest consulting budget used for a large firm produces a junior delivery team, which is worse than no engagement

• Speed is critical -- a 90-day governance build using internal resources and a validated framework is faster than a large consulting firm mobilisation that takes 60-90 days before the first deliverable

The specific combination that works: an internal team with technical delivery capacity, working against a validated AI transformation methodology and governance framework, supported by specialist advice for specific domain-expert questions. This is what the Expert AI Prompts enterprise methodology is designed to enable -- the frameworks, templates, and structured processes that allow an internal team to build what a consulting engagement would otherwise deliver.
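The five conditions listed above can be turned into a simple checklist. The sketch below is illustrative only: this article does not say how many conditions must hold before the internal route wins, so the threshold of three is an assumption, as are all the names in the code (InternalBuildConditions, conditions_met, suggest_route).

```python
from dataclasses import dataclass, fields


@dataclass
class InternalBuildConditions:
    # One flag per condition from the list above (field names are hypothetical).
    strategy_and_governance_focused: bool
    internal_technical_capacity: bool
    proprietary_capability_priority: bool
    budget_too_small_for_large_firm: bool
    speed_critical: bool


def conditions_met(c):
    """Return the names of the conditions that are true, in declaration order."""
    return [f.name for f in fields(c) if getattr(c, f.name)]


def suggest_route(c, threshold=3):
    """Suggest a route; the threshold is an assumed cut-off, not a rule."""
    met = conditions_met(c)
    if len(met) >= threshold:
        return "internal build", met
    return "consulting engagement", met


# Hypothetical organisation: governance-focused, has engineers, needs speed.
example = InternalBuildConditions(
    strategy_and_governance_focused=True,
    internal_technical_capacity=True,
    proprietary_capability_priority=False,
    budget_too_small_for_large_firm=False,
    speed_critical=True,
)
route, met = suggest_route(example)
print(route, met)
```

Treat the output as a prompt for discussion rather than a decision. The list of conditions that were met is returned alongside the suggestion so the reasoning stays visible.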

Building internal AI strategy capability begins with the AI Centre of Excellence structure -- the governance infrastructure that allows the internal team to operate at consulting-quality standards.

Firm Sovereignty: Building an AI Moat

The internal capability route is the path to Firm Sovereignty -- proprietary AI assets that compound into competitive advantage over time, which no consulting engagement can produce.

Section 9 - The Five Questions to Ask Before Signing Any AI Consulting Engagement

These five questions are not negotiating tactics. They are evaluation criteria that reveal whether the engagement is structured to produce a real outcome or to produce an impressive deliverable that does not outlast the consulting team.

1. What specific, measurable outcome will this engagement produce in the first 90 days -- not as a deliverable document, but as a changed capability or operating state in our organisation? Define the 90-day outcome before the engagement begins, and structure the contract around its achievement.

2. Who specifically leads this engagement -- named individuals, not roles -- and what is their contractual commitment in hours per week? The pitch team should be the delivery team. This commitment should appear in the contract as a named-resource obligation, not as a best-efforts clause.

3. At the conclusion of this engagement, what can our team do independently that they cannot do today? Which specific capabilities transfer to our people, and how will you measure whether the transfer was successful?

4. List every AI platform vendor with which your firm has a commercial relationship. For the architecture we are building together, which vendor relationships could influence your recommendations, and how will you disclose that influence?

5. Who owns every work product, methodology document, framework, template, and technical design produced during this engagement? Provide the specific IP ownership clause from your standard MSA. If the standard clause is not acceptable, what amendments will you make?

Closing - Making the Right Partnership Decision

The top AI consulting firms in 2026 are all credible organisations with genuine AI practice capability. McKinsey, BCG, and Bain bring rigorous strategic methodology to AI investment decisions. Accenture, Deloitte, IBM Consulting, and Capgemini bring delivery scale to production deployment. Governance specialists bring the regulatory depth that the EU AI Act enforcement deadline has made urgently relevant. Technology-aligned advisory practices bring domain specificity and independence that generalist firms cannot match.

The decision is not which of these is best. It is which type of firm, at which engagement model, for which specific outcome, is right for your organisation at its current maturity stage. That question has a structured answer. The framework for reaching it is the same framework that applies to every AI investment decision: define the outcome first, then evaluate the partner against their demonstrated ability to produce that specific outcome.

Your next steps:

AI Buy vs Build Decision Framework

The seven-factor framework for deciding whether to engage a consulting partner or build internal AI strategy capability.

Enterprise AI Transformation Playbook

Identify your current maturity stage before selecting the right category of consulting firm.

Enterprise AI Governance Framework

If EU AI Act compliance is your immediate priority, the governance framework covers what a governance specialist engagement delivers.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a technology and AI strategy executive with 20+ years of experience. He has served as CTO, as Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and for 8+ years as a University Lecturer at CQUniversity. The evaluation framework in this article is derived from direct experience working with, evaluating, and building the methodology that enterprise AI consulting firms deploy.