The 3-Year TCO Reality: What Enterprise AI Actually Costs When You Build vs Buy

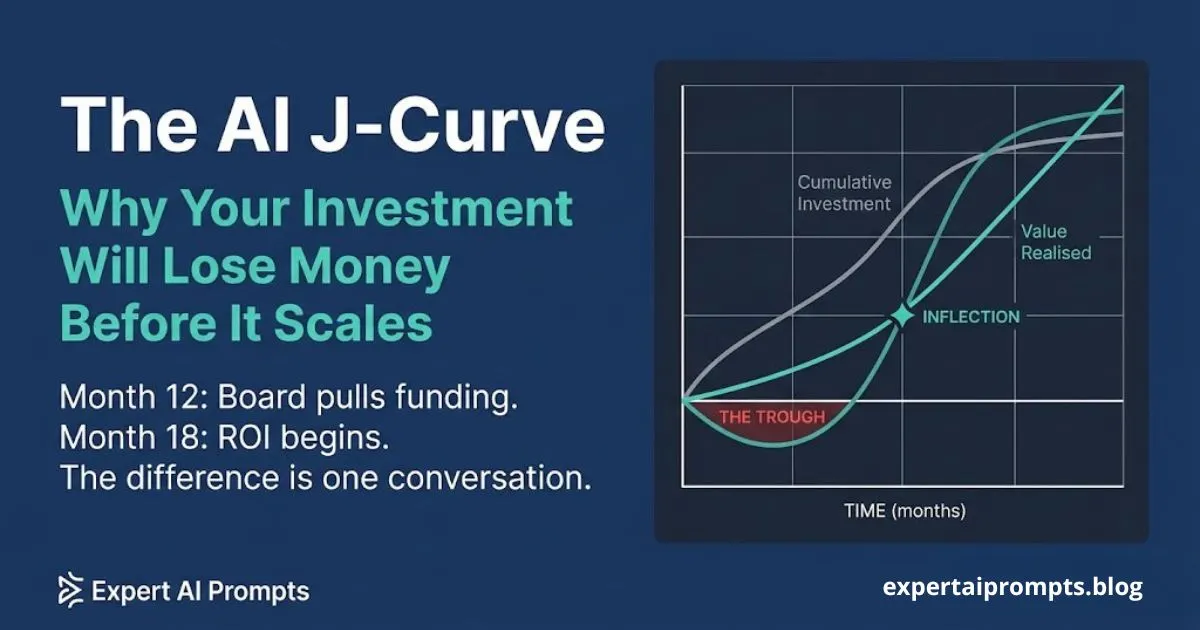

The enterprise AI business case has a structural problem that the enterprise AI industry has a structural incentive to ignore. Vendor ROI slides show Year 1 returns. Engineering teams pitch Build options based on Year 1 engineering availability. Finance approves based on Year 1 numbers. And by Year 2 or Year 3, when the true cost profile is fully visible, the decision has already been made and the budget has already been committed.

Most enterprise AI TCO analyses share the same error: they compare Year 1 costs, not three-year costs. Build looks cheaper in Year 1 because the two largest build costs -- engineering time and opportunity cost -- are invisible in most business cases. Buy looks expensive in Year 1 because the vendor licence is the only number on the page. By Year 3, both patterns have usually reversed -- but the decision was made at Year 1 with incomplete information, and the organisation is now living with the consequences.

This article is a practitioner's guide to enterprise AI TCO: what each path actually costs across all three years, the five cost categories that are absent from most business cases, and how to build a cost model that will survive contact with Year 3 reality before the decision is made at Year 1.

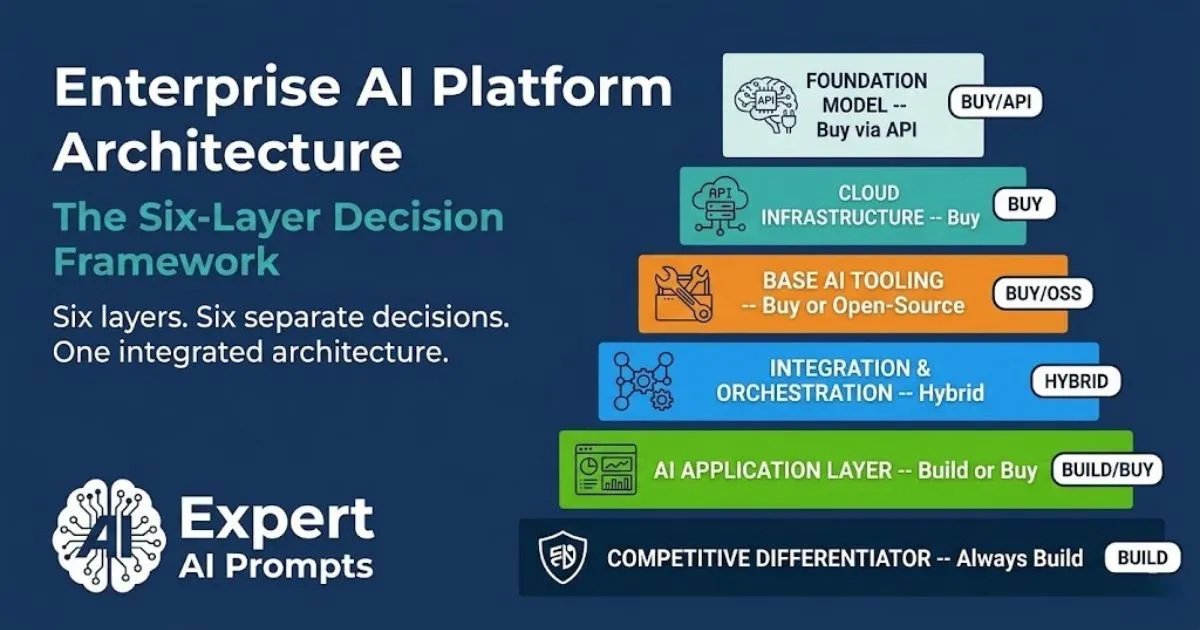

AI Buy vs Build Decision Framework

Total cost of ownership is Factor 5 in the seven-factor AI investment decision matrix. The full framework -- including a TCO comparison template -- is in the AI Buy vs Build Decision Framework.

Section 1 - The Year 1 Anchoring Problem: Why Every AI TCO Starts Wrong

The Year 1 anchoring problem is the most predictable and most consequential financial error in enterprise AI investment. It works as follows: the team responsible for the AI investment decision frames the cost comparison as a Year 1 snapshot -- what does it cost to get this built or bought in the next twelve months? They compare the options on that basis and select the path that looks most cost-efficient at that snapshot.

This is the wrong comparison. Year 1 is not representative of the three-year cost profile for either path. Year 1 Build costs are systematically understated. Year 1 Buy costs are front-loaded, so they systematically overstate what the path costs once it is running. The decision made at the Year 1 snapshot consistently produces regret by Year 3.

The Two Invisible Build Costs That Make Year 1 Look Cheap

Two specific cost categories make the Build path look artificially cheap in Year 1:

Engineering time is salaried, not attributed. The 3-4 senior engineers required to build a production AI system are already on payroll. Their time does not appear as a new cost in the Year 1 budget because it is absorbed into existing salary overhead. The $600K-$1.6M in fully-loaded annual engineering cost (3-4 senior AI engineers at $200K-$400K+ each per year) is invisible in the AI investment analysis, even though it is a real cost, incurred now and charged against other priorities.

Opportunity cost is never modelled. The engineering team building the AI system is not building something else. The core product features, the technical debt remediation, the infrastructure improvements that were displaced by the AI build decision -- these represent real value foregone that is never included in the Build business case. An organisation that spends three senior engineer-years building an AI system has implicitly chosen not to spend three senior engineer-years on its core product. That choice has a financial consequence that the Build TCO almost universally ignores.
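To make the two invisible costs concrete, here is a minimal Python sketch that adds salaried engineering time and opportunity cost back onto the visible Year 1 spend. All inputs are illustrative assumptions, and `value_per_engineer_year` is a hypothetical figure for the value the displaced roadmap work would have delivered; the article does not prescribe a method for estimating it.

```python
from dataclasses import dataclass


@dataclass
class Year1BuildCase:
    visible_spend: float            # new spend: API, infrastructure, governance docs, change management
    engineers: int                  # senior engineers already on payroll
    loaded_salary: float            # fully-loaded annual cost per engineer
    value_per_engineer_year: float  # hypothetical: value of the displaced roadmap work

    def engineering_cost(self) -> float:
        # Salaried time is a real cost even though it is not new spend.
        return self.engineers * self.loaded_salary

    def opportunity_cost(self) -> float:
        # Value foregone on the work these engineers would otherwise have shipped.
        return self.engineers * self.value_per_engineer_year

    def true_cost(self) -> float:
        return self.visible_spend + self.engineering_cost() + self.opportunity_cost()


# Illustrative inputs only; substitute your own figures.
case = Year1BuildCase(visible_spend=250_000, engineers=3,
                      loaded_salary=300_000, value_per_engineer_year=400_000)
print(f"Visible Year 1 spend: ${case.visible_spend:,.0f}")
print(f"True Year 1 cost:     ${case.true_cost():,.0f}")
```

With three engineers at $300K each, the salaried time alone is $900K, more than three times the visible spend in this example, before any opportunity cost is counted.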

Section 2 - The Full Build Path Cost Model: Year 1, Year 2, Year 3

Year 1: The Costs That Are Visible -- and the Ones That Are Not

The Year 1 Build cost model includes: engineering design, build, test, and deployment (typically the largest single cost, and consistently underestimated by 30-50% on first-time AI builds); foundation model API costs at pilot volume (low in Year 1, but scaling rapidly in Years 2 and 3 as usage reaches production volume); infrastructure establishment (compute, vector databases, serving layer, monitoring tooling); initial governance documentation (EU AI Act risk classification, SOC 2 processing integrity controls); and change management for the initial deployment cohort.

What Year 1 Build business cases exclude: ongoing engineering maintenance budget (typically not estimated because the system has not yet been deployed and the team believes it will require minimal maintenance); model drift remediation costs (unknown because the system has not yet been in production long enough to observe drift); talent retention premium for the two or three engineers whose departure would compromise the system; and all of the opportunity cost described above.

Year 2: When the Real Build Costs Emerge

Year 2 is when the Build path's true ongoing cost profile becomes visible. Engineering maintenance for a production AI system is not zero -- it is typically 15-25% of the initial build cost per year, rising as the system accumulates technical debt and as the data it encounters in production drifts away from the data it was built and trained on.

Model drift emerges. AI models that perform well at deployment degrade over time as the underlying data they process evolves. In natural language processing systems, language patterns shift. In predictive systems, the factors being predicted change. In decision support systems, the business context for decisions evolves. Each shift requires prompt recalibration, fine-tuning refreshes, or model retraining -- all of which have real engineering costs that the Year 1 business case did not include.

Talent retention becomes an active cost. The engineers who built the system now have AI production experience -- one of the most sought-after skill profiles in the labour market. Retaining them requires salary progression that was not modelled in Year 1. Losing them requires knowledge transfer, documentation, and rebuilding institutional knowledge -- costs that compound with the organisational knowledge lock-in problem described in the vendor lock-in analysis.

Year 3: The Full Build Cost Load

By Year 3, the fully-loaded Build cost typically includes: ongoing engineering maintenance at 15-25% of original build cost; foundation model API costs at full production volume (often 5-10x the pilot volume that Year 1 modelled); governance maintenance (EU AI Act annual conformity review, SOC 2 annual audit, privacy impact assessment updates); model retraining or major version updates; and the talent retention premium now reflecting two years of market appreciation in AI engineering salaries.

Build path rule of thumb: budget 15-25% of the initial build cost per year for ongoing maintenance. Add 100-200% to infrastructure costs for scaling as usage reaches production volume. Add a talent retention premium of 15-20% per year for the core engineering team. The Year 3 cost of a Year 1 Build decision is almost never in the Year 1 business case.
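The rule of thumb can be turned into a rough three-year Build projection. This Python sketch is one reading of it, under stated assumptions: maintenance starts in Year 2, infrastructure ramps linearly to the full 100-200% uplift by Year 3, and the retention premium compounds on the core team's Year 1 loaded cost. The function name and all inputs are hypothetical.

```python
def build_three_year_cost(initial_build: float, year1_infra: float, core_team: float,
                          maintenance_rate: float, infra_uplift: float,
                          retention_premium: float) -> list[float]:
    """Return [Year 1, Year 2, Year 3] Build costs under rule-of-thumb assumptions."""
    costs = []
    for year in (1, 2, 3):
        # Year 1 carries the build itself; afterwards, maintenance at a % of build cost.
        engineering = initial_build if year == 1 else initial_build * maintenance_rate
        # Assumption: infrastructure ramps linearly to (1 + uplift) x Year 1 by Year 3.
        infra = year1_infra * (1 + infra_uplift * (year - 1) / 2)
        # Assumption: retention premium compounds yearly on the core team's Year 1 cost.
        retention = core_team * ((1 + retention_premium) ** (year - 1) - 1)
        costs.append(engineering + infra + retention)
    return costs


scenarios = {
    "low": dict(maintenance_rate=0.15, infra_uplift=1.0, retention_premium=0.15),
    "high": dict(maintenance_rate=0.25, infra_uplift=2.0, retention_premium=0.20),
}
for label, rates in scenarios.items():
    years = build_three_year_cost(500_000, 100_000, 900_000, **rates)
    print(label, [f"${c:,.0f}" for c in years], f"total ${sum(years):,.0f}")
```

The sketch deliberately leaves out API volume, governance, and opportunity cost; in a real model each would be another term in the yearly sum.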

Section 3 - The Full Buy Path Cost Model: Year 1, Year 2, Year 3

Year 1: Front-Loaded Visibility, Compressed Ongoing Costs

Year 1 Buy costs are front-loaded and highly visible: vendor licence fees (the only cost most Buy business cases model); implementation and professional services (typically 1-3x the first-year software cost for complex enterprise deployments, and frequently excluded from the licence-focused comparison); integration engineering (connecting the vendor product to existing data systems, security controls, and enterprise workflows); and change management ($500-$2,000 per user for meaningful adoption programmes; at enterprise scale, this is a significant budget item that frequently surfaces as a surprise in Year 1 actuals).
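A quick way to see how far the licence line understates Year 1 Buy cost is to total all four categories. The Python sketch below uses the ranges quoted above; the specific licence, integration, and user figures are illustrative assumptions, not benchmarks.

```python
def buy_year1_cost(licence: float, services_multiple: float, integration: float,
                   users: int, change_per_user: float) -> dict[str, float]:
    """Break Year 1 Buy cost into the four categories and total them."""
    costs = {
        "licence": licence,
        # Implementation/professional services: typically 1-3x first-year software cost.
        "implementation": licence * services_multiple,
        # Connecting to data systems, security controls, and workflows.
        "integration": integration,
        # Adoption programmes: roughly $500-$2,000 per user.
        "change_management": users * change_per_user,
    }
    costs["total"] = sum(costs.values())
    return costs


year1 = buy_year1_cost(licence=400_000, services_multiple=2.0, integration=150_000,
                       users=2_000, change_per_user=1_000)
share = year1["licence"] / year1["total"]
print(f"Total Year 1 Buy cost: ${year1['total']:,.0f} (licence is {share:.0%} of it)")
```

In this hypothetical deployment the licence is about 12% of the Year 1 total, which is why a licence-only comparison misleads in both directions.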

The Year 1 Buy cost looks expensive in isolation. Against the Year 1 Build cost that excludes engineering time and opportunity cost, it looks even more expensive. This is the Year 1 anchoring problem operating at its most misleading.

Year 2: The Renewal Risk and Scale-Up Exposure

Year 2 brings the first renewal negotiation. If the vendor's pricing model is consumption-based -- per API call, per user, per processed token -- Year 2 cost at production volume is frequently 2-5x Year 1 cost at pilot volume. The Year 1 business case modelled pilot pricing. The Year 2 invoice reflects production volume.

Year 2 also brings the first signal of the vendor pricing dynamic described in the scale-up risk section below: organisations that have integrated the vendor product deeply into production workflows have reduced switching leverage. The vendor's Year 2 renewal offer will reflect this.

Year 3: The Point Where Buy Either Wins or Traps

By Year 3, the Buy path either demonstrates its advantage or reveals its trap. The advantage case: the vendor has continued to invest in the platform, the product is genuinely better than it was at Year 1, the pricing has been managed through contractual protections, and the total three-year cost is below the equivalent Build path because the organisation has not borne the engineering maintenance, drift remediation, or infrastructure scaling costs internally.

The trap case: the vendor has raised pricing at renewal to reflect the organisation's deep integration dependency; the product has not kept pace with the organisation's evolving requirements; and the exit cost has accumulated to the point where migration is commercially impractical. The Year 3 Buy cost is the Year 3 vendor invoice plus the implicit cost of having no credible alternative -- which is often denominated in the vendor's pricing power, not in any invoice line item.

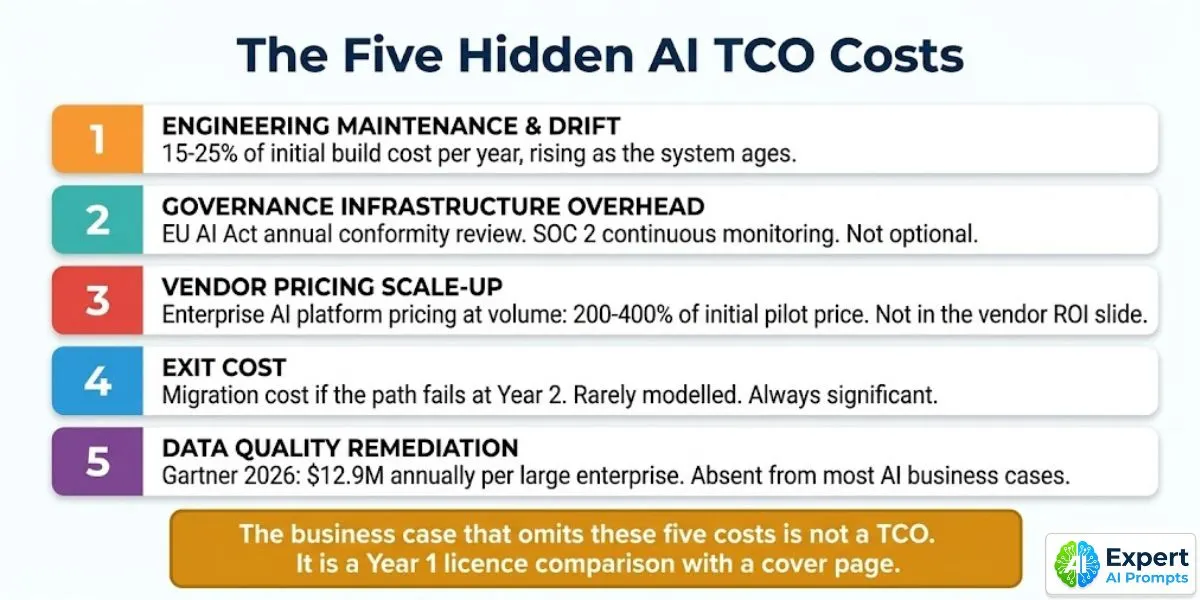

Section 4 - The Five Hidden Cost Categories

These five cost categories are absent from most enterprise AI TCO analyses. Omitting any one of them can produce a business case that makes the wrong recommendation.

1. Engineering Maintenance and Drift Remediation

Standard omission: The build business case budgets for engineering to build and deploy the system. It does not budget for engineering to maintain it in production over three years.

What it actually costs: 15-25% of initial build cost per year for ongoing maintenance and drift remediation, rising over time as the system accumulates technical debt and data distribution shifts become more significant. A system that cost $500K to build will require $75K-$125K per year in engineering maintenance, compounding as complexity grows.
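The worked example can be checked with a few lines of Python. The optional `annual_growth` parameter is a hypothetical knob for the "rising over time" effect; the article gives no growth rate, so it defaults to zero.

```python
def maintenance_schedule(build_cost: float, rate: float, annual_growth: float = 0.0,
                         years: int = 3) -> list[float]:
    """Annual maintenance cost as a share of initial build cost, optionally rising each year."""
    return [build_cost * rate * (1 + annual_growth) ** year for year in range(years)]


low = maintenance_schedule(500_000, 0.15)
high = maintenance_schedule(500_000, 0.25)
print(f"First year of maintenance: ${low[0]:,.0f}-${high[0]:,.0f}")
# Hypothetical 10% yearly increase as complexity grows:
print([f"${c:,.0f}" for c in maintenance_schedule(500_000, 0.25, annual_growth=0.10)])
```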

2. Governance Infrastructure Overhead

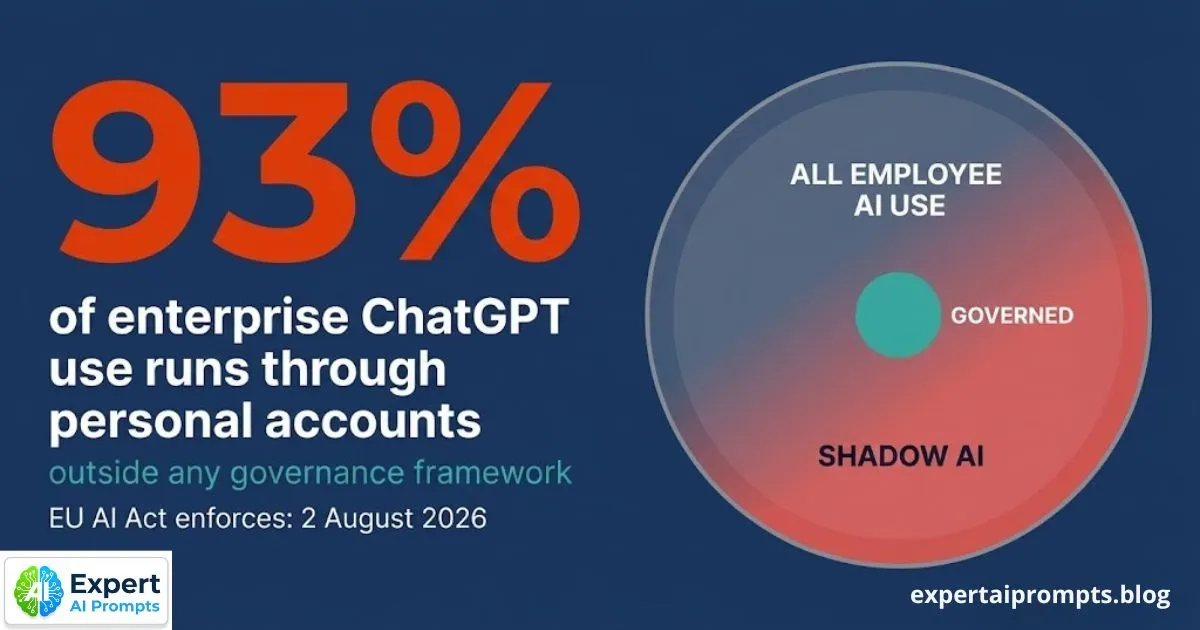

Standard omission: Governance is budgeted as a one-time compliance exercise during deployment. It is not treated as an ongoing operational cost.

What it actually costs: Every production AI system classified as high-risk under EU AI Act Annex III carries ongoing conformity obligations (post-market monitoring, and a new conformity assessment whenever the system is substantially modified), which in practice becomes a recurring, often annual, conformity review: a real engineering and legal cost. SOC 2 processing integrity controls require continuous monitoring infrastructure and annual audit evidence collection. Privacy impact assessments must be updated when system scope or data handling changes. These costs are real, recurring, and frequently excluded from both Build and Buy TCO models because they are shared infrastructure costs that no single project wants to fully own.

Enterprise AI Governance Framework

The full governance infrastructure cost model -- including EU AI Act annual conformity review requirements -- is in the Enterprise AI Governance Framework.

3. Vendor Pricing Scale-Up

Standard omission: The Buy business case is modelled at pilot pricing. The Year 2 and Year 3 cost at production volume is not modelled.

What it actually costs: Enterprise AI platform per-unit pricing at production volume is typically 200-400% of the per-unit pilot price. Under consumption-based pricing, that means the invoice at 10x usage volume is not 10x the pilot invoice -- it is often 20-40x (10x the volume at 2-4x the per-unit price), because the per-unit cost increases at higher volume tiers rather than decreasing. Run the pricing model at 5x, 10x, and 50x current volume before Year 1 commitment.
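The 20-40x figure is simply the volume multiple times the per-unit price multiple. A minimal Python sketch, assuming the 200-400% figure refers to the per-unit price and applies at every volume level shown (in practice the per-unit multiple would itself vary with the tier reached):

```python
def invoice_multiple(volume_multiple: float, unit_price_multiple: float) -> float:
    """How many times larger the production invoice is than the pilot invoice."""
    return volume_multiple * unit_price_multiple


for volume in (5, 10, 50):
    low = invoice_multiple(volume, 2.0)   # per-unit price at 200% of pilot
    high = invoice_multiple(volume, 4.0)  # per-unit price at 400% of pilot
    print(f"{volume:>2}x volume -> invoice {low:.0f}x-{high:.0f}x the pilot invoice")
```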

4. Exit Cost

Standard omission: The initial TCO models the cost of going into the chosen path. It does not model the cost of leaving it if the path fails.

What it actually costs: For Build paths: if the system fails to reach the 2x productivity gate or fails in production at Year 2, the exit cost is the write-off of the engineering investment plus the cost of deploying an alternative. For Buy paths: the exit cost is data migration, retraining users on an alternative, and rebuilding integrations -- plus the business disruption cost of the transition period. A realistic exit cost scenario should be included in every TCO model.
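An exit scenario can be kept as a separate line in the model rather than folded into the base case. This Python sketch itemises the components named above; the dataclasses, their field names, and the per-month disruption estimate are hypothetical choices for illustration, and every figure is made up.

```python
from dataclasses import dataclass


@dataclass
class BuildExit:
    engineering_written_off: float  # engineering investment that is lost
    alternative_deployment: float   # cost of standing up a replacement

    def total(self) -> float:
        return self.engineering_written_off + self.alternative_deployment


@dataclass
class BuyExit:
    data_migration: float
    user_retraining: float
    integration_rebuild: float
    disruption_per_month: float  # hypothetical estimate of business disruption
    transition_months: int

    def total(self) -> float:
        disruption = self.disruption_per_month * self.transition_months
        return self.data_migration + self.user_retraining + self.integration_rebuild + disruption


build_exit = BuildExit(engineering_written_off=1_500_000, alternative_deployment=600_000)
buy_exit = BuyExit(data_migration=250_000, user_retraining=300_000,
                   integration_rebuild=400_000, disruption_per_month=50_000, transition_months=6)
print(f"Build exit: ${build_exit.total():,.0f}  Buy exit: ${buy_exit.total():,.0f}")
```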

5. Data Quality Remediation

Standard omission: The AI business case assumes the data required for the AI system is available, clean, and AI-ready. It is almost never all three.

What it actually costs: Gartner estimates that poor data quality costs organisations an average of $12.9M a year. Data governance and quality maintenance for AI workloads is not a build cost -- it is an ongoing operational cost that exists regardless of whether the AI system is built or bought. The cost is invisible in most AI business cases because it is carried by the data team's budget, not the AI project budget. Gartner separately predicts that through 2026, organisations will abandon 60% of AI projects unsupported by AI-ready data. The real exposure is the cost of not having AI-ready data, which is almost always higher than the cost of remediating the data before the AI project begins.

Section 5 - The Vendor Pricing Scale-Up Risk

The 200-400% vendor pricing scale-up figure deserves a section of its own because it is the most consistently surprising cost in enterprise AI deployments -- despite being entirely predictable at the time the initial contract is signed.

Most enterprise AI vendor contracts are structured around one of three pricing models: per-user subscription (fixed cost per seat, scales linearly with user count), consumption-based pricing (per API call, per processed token, per inference run -- scales with usage, not linearly), or tiered licensing (fixed cost up to a usage threshold, then a step-change to the next tier price).

Consumption-based and tiered pricing produce the scale-up problem. A pilot deployment uses a small fraction of production volume. The per-unit cost at pilot volume may be set aggressively to win the contract. At production volume -- 10x, 50x, or 100x the pilot -- the per-unit cost changes, the tier jumps, and the annual invoice is dramatically different from the pilot economics.
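The three pricing structures behave very differently as volume grows. The Python sketch below implements each one; the band boundaries and prices are hypothetical, chosen to illustrate the rising per-unit case described here, and the consumption model uses graduated pricing (each band of units billed at its own rate), which is only one of several ways vendors structure tiers.

```python
import math


def per_user_cost(users: int, price_per_seat: float) -> float:
    # Scales linearly with seat count.
    return users * price_per_seat


def consumption_cost(units: float, bands: list[tuple[float, float]]) -> float:
    """Graduated pricing: bands are (upper_bound, unit_price) in ascending order."""
    cost, lower = 0.0, 0.0
    for upper, unit_price in bands:
        if units <= lower:
            break
        cost += (min(units, upper) - lower) * unit_price
        lower = upper
    return cost


def tiered_licence_cost(units: float, tiers: list[tuple[float, float]]) -> float:
    """Fixed fee for the first tier whose usage threshold covers `units`."""
    for threshold, fee in tiers:
        if units <= threshold:
            return fee
    raise ValueError("usage exceeds the highest published tier")


# Hypothetical price book: per-unit price rises at higher bands.
BANDS = [(1_000_000, 0.002), (10_000_000, 0.004), (math.inf, 0.008)]
TIERS = [(1_000_000, 20_000), (10_000_000, 150_000), (100_000_000, 900_000)]

pilot_units = 500_000
pilot_bill = consumption_cost(pilot_units, BANDS)
for multiple in (1, 10, 50):
    units = pilot_units * multiple
    ratio = consumption_cost(units, BANDS) / pilot_bill
    print(f"{multiple:>2}x volume: consumption {ratio:.0f}x pilot, "
          f"tiered licence ${tiered_licence_cost(units, TIERS):,.0f}")
```

In this hypothetical price book, 10x the pilot volume produces an 18x consumption bill, while the tiered licence moves in step-changes rather than smoothly.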

The vendor ROI slide shows the productivity gains at production volume. It does not show the per-unit pricing at production volume. Request both on the same slide. If the vendor declines to provide production-volume pricing before contract signature, treat this as the finding.

The practical protection: require written pricing schedules at 5x, 10x, and 50x current usage volume as a condition of contract execution. Request a pricing cap or price protection provision for the first 36 months. Model the Year 3 cost at 150% of the Year 1 per-unit price as a stress-test scenario. If the Year 3 stress-test cost exceeds the equivalent Build path cost, the decision needs to be revisited.
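Both protections can be checked mechanically before signature. This Python sketch flags any of the 5x, 10x, and 50x volume points missing from a vendor's written schedule and runs the 150% per-unit stress test against a Build comparison; the function names, the example schedule, and all prices are hypothetical.

```python
REQUIRED_MULTIPLES = (5, 10, 50)


def missing_schedule_points(schedule: dict[int, float]) -> list[int]:
    """Volume multiples the vendor's written price schedule does not yet cover."""
    return [m for m in REQUIRED_MULTIPLES if m not in schedule]


def year3_stress_test(year1_unit_price: float, year3_units: float,
                      build_year3_cost: float, price_shock: float = 1.5) -> tuple[float, bool]:
    """Year 3 Buy cost at 150% of the Year 1 per-unit price, and whether it
    exceeds the equivalent Build cost (a signal to revisit the decision)."""
    stressed_cost = year1_unit_price * price_shock * year3_units
    return stressed_cost, stressed_cost > build_year3_cost


vendor_schedule = {5: 0.009, 10: 0.011}  # per-unit prices quoted so far (hypothetical)
print("Missing schedule points:", missing_schedule_points(vendor_schedule))
cost, revisit = year3_stress_test(0.01, 20_000_000, build_year3_cost=250_000)
print(f"Stressed Year 3 Buy cost ${cost:,.0f}; revisit decision: {revisit}")
```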

The mechanism by which scale-up pricing becomes unavoidable when vendor lock-in has accumulated is covered in full in Own vs Orchestrate: The 2026 Enterprise Guide to Avoiding AI Vendor Lock-In.

Section 6 - The Year 3 Reversal: Why the Decision Looks Different in Retrospect

The Year 3 reversal is the consistent pattern that emerges from honest enterprise AI TCO modelling: the Build path, which appeared cheaper in Year 1, has accumulated maintenance costs, model retraining costs, governance overhead, talent retention premiums, and opportunity cost that make its Year 3 fully-loaded cost higher than the Year 1 business case projected. The Buy path, which appeared more expensive in Year 1, has delivered compounding vendor improvements, eliminated internal maintenance overhead, and -- if the contract protections were in place -- maintained a cost profile that is lower than the equivalent Build path by Year 3.
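The reversal is a statement about cumulative cost curves crossing. A minimal Python sketch with hypothetical annual figures, shaped to match the pattern described (Build cheaper up front, Buy front-loaded), finds the first year in which cumulative Build cost overtakes cumulative Buy cost:

```python
from itertools import accumulate
from typing import Optional


def crossover_year(build: list[float], buy: list[float]) -> Optional[int]:
    """First year in which cumulative Build cost exceeds cumulative Buy cost, if any."""
    cumulative = zip(accumulate(build), accumulate(buy))
    for year, (build_total, buy_total) in enumerate(cumulative, start=1):
        if build_total > buy_total:
            return year
    return None


# Hypothetical annual costs (Years 1-3), not benchmarks.
build_costs = [600_000, 700_000, 900_000]
buy_costs = [1_100_000, 500_000, 550_000]
print("Cumulative Build:", list(accumulate(build_costs)))
print("Cumulative Buy:  ", list(accumulate(buy_costs)))
print("Build overtakes Buy in Year", crossover_year(build_costs, buy_costs))
```

A result of `None` means the curves do not cross within the three-year horizon.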

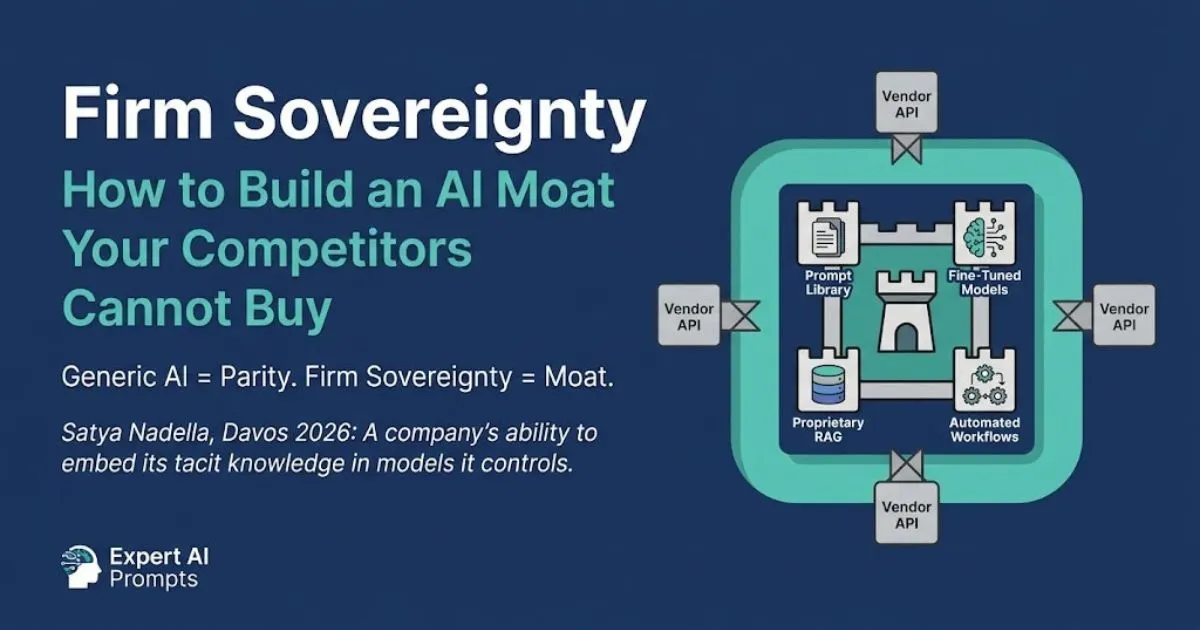

This is not a universal finding. It has exceptions. The Build path is genuinely superior by Year 3 when: the use case requires proprietary competitive differentiation that a vendor cannot provide; the scale of usage is high enough that the per-inference cost of an internal system is materially lower than the vendor's production-volume pricing; or the data sovereignty requirements make a vendor deployment technically impossible. The seven-factor framework is the tool for identifying which case applies.

The Year 3 reversal for Buy paths only holds when the vendor has not trapped the organisation into the pricing power position described in The AI Commodity Trap.

Section 7 - How to Build an Honest AI TCO in Six Steps

1. Run all four paths, not two. Build, Buy, Partner, and Hybrid should all appear in the TCO comparison. Comparing only Build and Buy excludes the Hybrid path that most enterprise AI programmes should be on, and the Partner path that is correct for capability gaps that cannot be filled within the required timeline.

2. Use three years, not one. Year 1 is the anchoring problem. Year 2 is when the real cost profile of each path begins to emerge. Year 3 is where the cost curves fully separate. A Year 1 snapshot is not a TCO. Label it accordingly in any board presentation that includes it.

3. Include all cost categories, not just the visible ones. Engineering time (fully-loaded at market rate), infrastructure, governance overhead, maintenance, talent retention, change management, data quality remediation, exit cost, and scale-up pricing all belong in the model. Any category excluded should be explicitly noted as excluded, with a rationale.

4. Model the scale-up scenario for Buy options. Project the Year 2 and Year 3 cost at 5x current usage volume. Then model a vendor pricing change scenario at 150% of the Year 1 per-unit price. If the combination produces a Year 3 Buy cost that exceeds the equivalent Build path, the decision needs to be revisited or the contract needs stronger pricing protections.

5. Include an exit cost scenario for every path. What does it cost if this path fails at Year 2? For Build: the write-off of engineering investment plus the cost of deploying an alternative. For Buy: data migration, user retraining, integration rebuild, and transition business disruption. The exit scenario does not need to be the base case -- it needs to be in the model.

6. Stress-test the model against the governance overhead. What is the cost of EU AI Act annual conformity review for this system? What is the cost of SOC 2 continuous monitoring and annual audit evidence for this use case? These costs apply to all paths. They should appear in all paths.
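The six steps translate naturally into a validation check on the model itself. This Python sketch is one hypothetical way to structure it (the class, category keys, and check messages are illustrative, not part of the framework): each path holds three-year costs per category, any uncosted category must carry an explicit exclusion rationale, governance must be costed on every path, Buy must carry a scale-up scenario, and the exit scenario sits alongside rather than inside the base case.

```python
from dataclasses import dataclass, field

REQUIRED_PATHS = {"Build", "Buy", "Partner", "Hybrid"}  # Step 1
CATEGORIES = ("engineering_time", "infrastructure", "governance", "maintenance",
              "talent_retention", "change_management", "data_quality",
              "scale_up_pricing", "vendor_licence")     # Step 3


@dataclass
class PathModel:
    name: str
    costs: dict[str, tuple[float, float, float]]              # category -> (Y1, Y2, Y3)
    exit_scenario: float                                       # Step 5: kept out of base case
    exclusions: dict[str, str] = field(default_factory=dict)  # category -> rationale

    def three_year_total(self) -> float:
        # Step 2: sum all three years, not a Year 1 snapshot.
        return sum(sum(years) for years in self.costs.values())

    def unaccounted(self) -> list[str]:
        # Step 3: every category is either costed or explicitly excluded.
        return [c for c in CATEGORIES if c not in self.costs and c not in self.exclusions]


def check_model(paths: list[PathModel]) -> list[str]:
    problems = []
    missing = REQUIRED_PATHS - {p.name for p in paths}
    if missing:
        problems.append(f"missing paths: {sorted(missing)}")
    for p in paths:
        problems += [f"{p.name}: '{c}' neither costed nor excluded" for c in p.unaccounted()]
        if "governance" not in p.costs:  # Step 6
            problems.append(f"{p.name}: governance must be costed on every path")
        if p.name == "Buy" and "scale_up_pricing" not in p.costs:  # Step 4
            problems.append(f"{p.name}: no scale-up pricing scenario")
    return problems


build = PathModel(
    name="Build",
    costs={"engineering_time": (900e3, 300e3, 320e3),
           "infrastructure": (100e3, 150e3, 200e3),
           "governance": (60e3, 40e3, 40e3)},
    exit_scenario=2_100e3,
    exclusions={"vendor_licence": "no vendor licence on this path",
                "scale_up_pricing": "no vendor pricing exposure"},
)
print(f"Build three-year total: ${build.three_year_total():,.0f}")
for problem in check_model([build]):
    print(problem)
```

Run against a single incomplete path, the check reports the three missing paths and every category that has been silently dropped, which is the point: gaps become visible before the board sees the numbers.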

Section 8 - The Hybrid Path TCO Advantage

The Hybrid path -- buy commodity AI infrastructure, build the proprietary competitive intelligence layer -- has a TCO advantage that neither a pure Build nor a pure Buy comparison reveals, because it is deliberately designed to combine the cost efficiency of purchased infrastructure with the proprietary value that a purely purchased solution cannot provide.

On the infrastructure side, the Hybrid path benefits from vendor-maintained foundation model capability, infrastructure scaling, and ongoing model improvements -- the same benefits that make the Buy path cost-efficient over three years. On the intelligence layer side, the organisation builds and owns the prompt library, the fine-tuning, and the workflow automation that creates the competitive differentiation -- without paying the full engineering cost of building the underlying infrastructure from scratch.

The Hybrid path's TCO advantage is most pronounced when: the use case requires a mix of commodity capability and proprietary differentiation; the time to value from a pure Build path is too long to meet business requirements; and the organisation has sufficient internal capability to build the intelligence layer but not the full infrastructure. For most enterprise AI programmes in 2026, this describes the majority of production use cases.

Firm Sovereignty: Building an AI Moat

The Hybrid path is the build path to Firm Sovereignty: the intelligence layer that compounds into competitive advantage over time while the commodity infrastructure is maintained by the vendor.

Closing - Running the Model Before the Decision

The enterprise AI investment decision that is made with a Year 1 snapshot and an incomplete cost model is not a decision -- it is an assumption. The assumption may be directionally correct. It may not be. By Year 3, the organisation will know which it was. By Year 3, the budget has been committed and the dependency has been built. A Year 3 regret that an honest Year 1 TCO model would have prevented cannot be remediated.

The six-step process above takes roughly the same time as a standard business case; it simply spends that time differently, on cost categories that matter over three years rather than categories that look impressive at Year 1. The business case that omits the five hidden costs is not a TCO. It is a Year 1 licence comparison with a cover page.

Your next steps:

AI Buy vs Build Decision Framework

The full seven-factor decision framework including the TCO comparison template across all four investment paths.

The vendor pricing scale-up mechanism and the contract clauses that prevent it from becoming the dominant Year 3 cost.

AI Transformation Roadmap 2026

TCO modelling is a Phase 2 governance deliverable in the 90-day onboarding framework.

About the Author

Matthew Bulat is the Founder of Expert AI Prompts and a 20+ year technology and AI strategy executive. He is a former CTO, former Federal Government Technical Operations Manager for national infrastructure across 20 cities and 4,000 users, and an 8+ year University Lecturer at CQUniversity. The TCO framework in this article is applied in the AI Buy vs Build Decision Framework and validated against the Expert AI Prompts live platform architecture.