Fear of the Robot: Ensuring Your AI Content Still Sounds Human

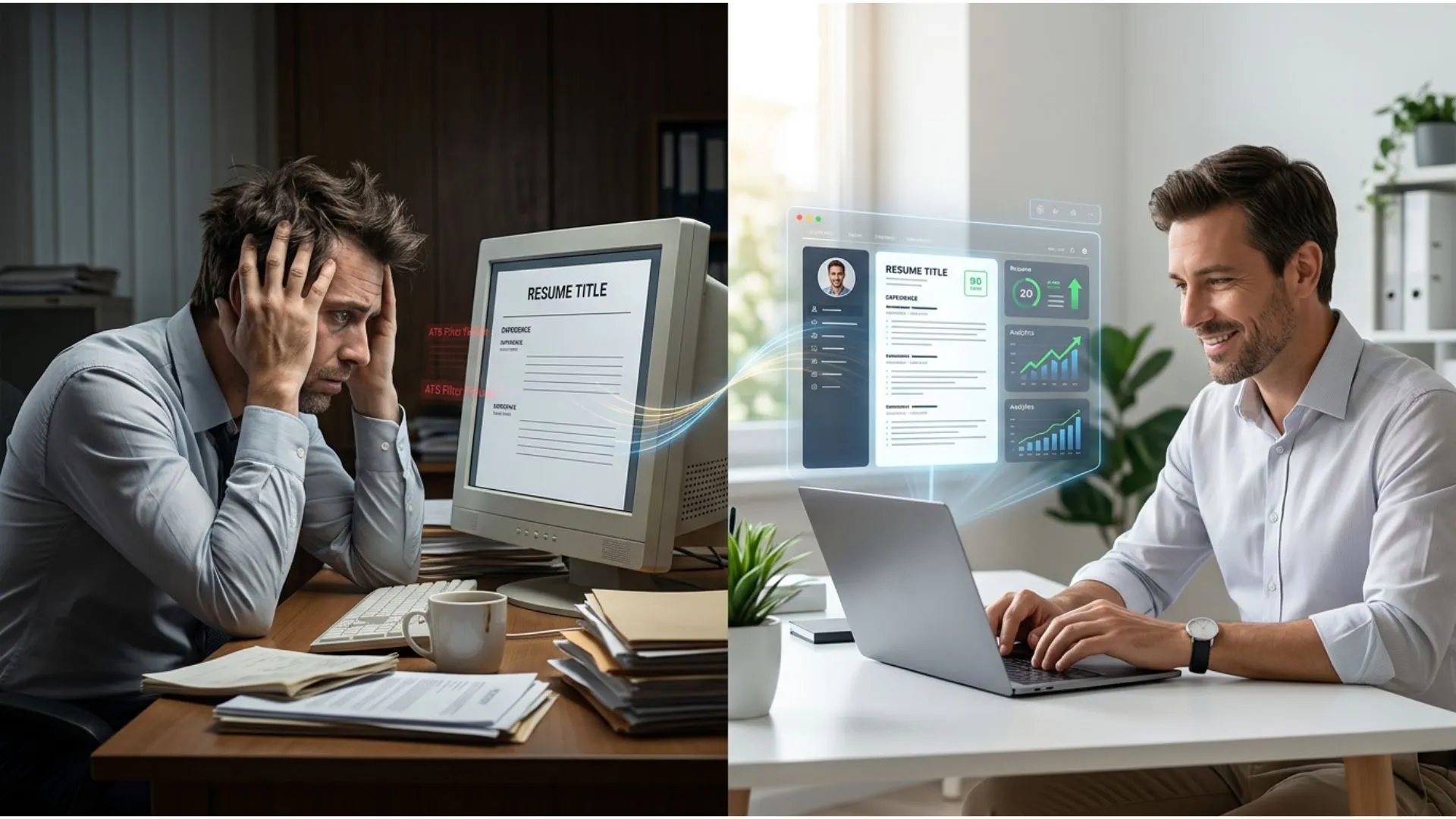

You've experimented with AI content generation. The speed is impressive—what used to take hours now takes minutes. But something feels off. The content reads like it was assembled by committee rather than crafted by someone who understands your business. Your customers might not put their finger on it immediately, but they sense it: the absence of authentic human connection.

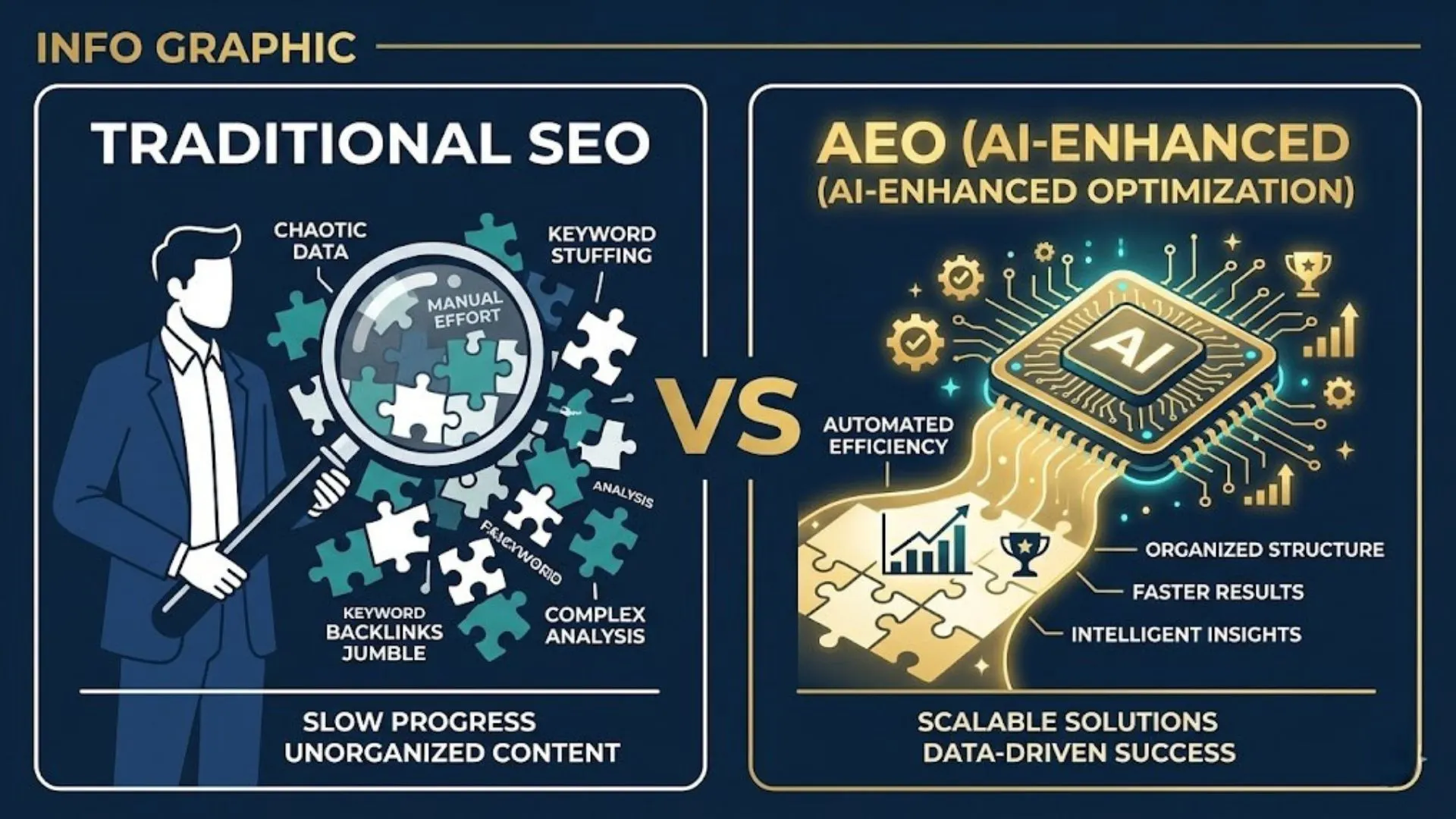

This fear isn't irrational. Generic AI outputs can erode the credibility you've spent years building. When your content sounds like every other AI-generated article flooding the internet, you lose the competitive edge that differentiates your brand. The question isn't whether to use AI—that ship has sailed—but how to maintain quality control that preserves your authentic voice while leveraging AI's efficiency.

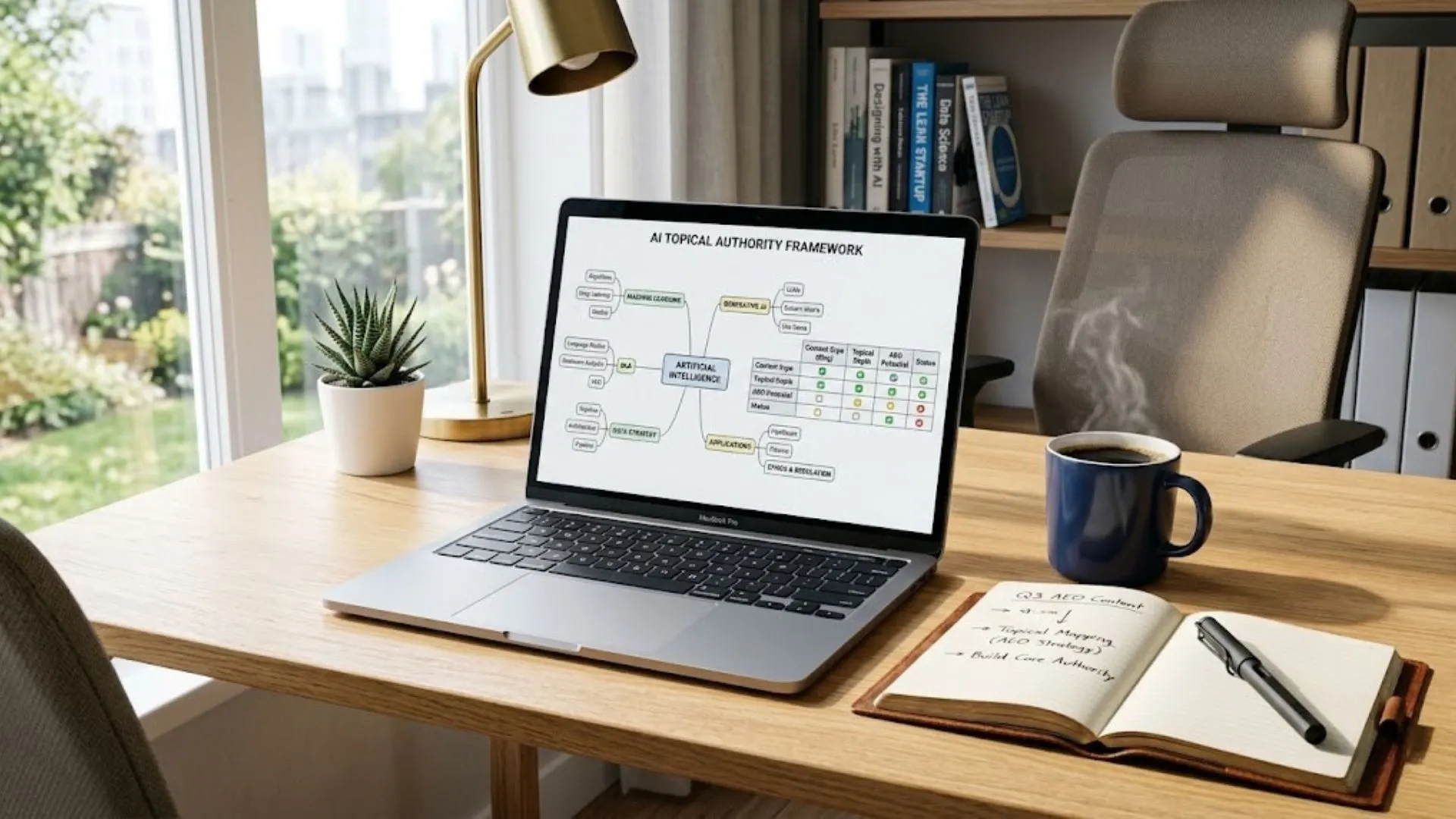

The Entrepreneur's Playbook for Prompt Engineering is the strategic foundation for building AI content systems that consistently sound human.

The businesses winning with AI aren't using it as a replacement for human expertise. They're using strategic quality control processes that ensure every piece of content—AI-assisted or not—reflects their brand's unique perspective, tone, and credibility. This guide shows you exactly how to implement that control.

The Real Cost of Robotic Content

How Audiences Detect AI-Generated Content

Your customers have developed surprisingly sophisticated radar for identifying AI-generated content. They notice the telltale signs: overly formal language in casual contexts, repetitive sentence structures, generic transitions like "in today's digital landscape," and conclusions that feel manufactured rather than earned. These patterns trigger skepticism.

The detection happens subconsciously. Readers don't think "this was written by AI"—they simply feel disconnected. The content lacks the strategic insights, specific examples, and nuanced perspectives that come from genuine expertise. When your content fails to demonstrate deep understanding of industry challenges, customers question whether you truly grasp their needs.

More sophisticated audiences actively look for AI fingerprints. They scan for hedging language, the absence of strong opinions, and the characteristic AI tendency to present all sides equally without taking strategic positions. In competitive markets, this neutrality reads as inexperience or lack of confidence. This is the hallmark of what experts call generic voice syndrome; learn how to avoid generic voice syndrome with the 2026 advisory built specifically for small business owners.

Brand Credibility Damage from Generic Outputs

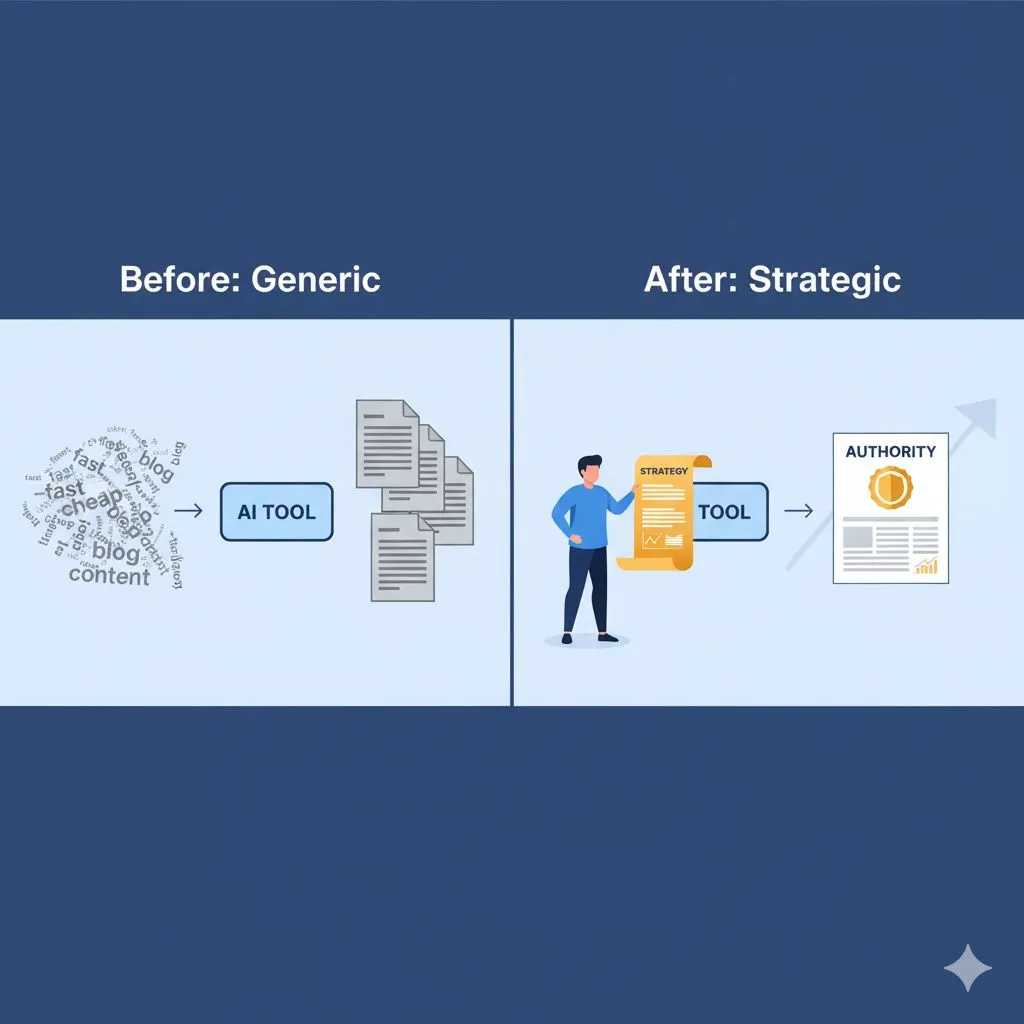

Generic AI content doesn't just fail to build trust—it actively destroys it. The performance gap is stark — see the data-driven comparison of generic vs strategic AI prompts and why one kills brand credibility while the other compounds it. When potential clients read content that could have been written for any company in your industry, they assume you're another commodity player competing solely on price. The polished-but-empty content suggests you lack the expertise to provide unique insights.

This credibility damage compounds over time. Each piece of robotic content reinforces the perception that your business is following trends rather than leading them. Customers seeking expert guidance quickly move on to competitors whose content demonstrates genuine thought leadership. The efficiency gains from rushed AI content become losses in client acquisition and retention.

The financial impact extends beyond lost opportunities. Recovering from damaged brand perception requires significantly more resources than preventing it through proper quality control. You're not just competing against other businesses. You're competing against a flood of mediocre AI content that is teaching customers to scrutinize everything they read and to expect more from the brands they trust.

The Trust Gap Between Automation and Authenticity

The tension between automation efficiency and authentic connection represents the central challenge for businesses adopting AI. Customers want the benefits of modern technology—fast responses, comprehensive information, 24/7 availability—but they also want to feel they're engaging with real expertise and genuine care.

This trust gap widens when businesses treat AI as a complete replacement rather than a strategic tool. The most effective approach bridges automation and authenticity by using AI for leverage while maintaining human judgment, strategic oversight, and quality control. Your AI outputs should amplify your expertise, not replace it.

Building trust in an AI-assisted world requires transparency about your process combined with unwavering commitment to quality. Customers don't necessarily object to AI-generated content—they object to low-quality content that wastes their time. When your quality control ensures every output delivers genuine value, the trust gap closes.

Strategic AI Quality Control Framework

Quality control isn't a final editing step—it's a comprehensive framework spanning the entire content creation process. Effective AI quality control happens in three distinct phases: pre-generation setup, mid-generation checkpoints, and post-generation refinement. Each phase serves specific functions that collectively ensure human-sounding outputs.

Pre-Generation Setup: Training AI with Brand Voice

Quality control begins before you generate a single word. The foundation is a comprehensive brand voice document that codifies your communication style, key phrases, strategic positioning, and content boundaries. This document becomes your AI training manual.

Effective brand voice documentation goes beyond describing tone as "professional" or "friendly." It includes specific examples of acceptable and unacceptable phrasing, industry terminology preferences, sentence length guidelines, and strategic messaging priorities. The more specific your guidelines, the more consistent your AI outputs.

Feed these guidelines into your AI prompts systematically. Rather than hoping the AI intuitively understands your brand, explicitly instruct it with phrases like "avoid generic business jargon" or "use direct language that respects the reader's technical competence." Strategic prompt engineering eliminates 70% of quality issues before they appear.
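One way to feed guidelines in systematically is to store them as structured data and render them into the same instruction block every time. The sketch below is a minimal illustration, not a prescribed format: the `BrandVoice` class, its field names, and the sample values are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class BrandVoice:
    """Hypothetical container for the parts of a brand voice document."""
    tone: str
    preferred_terms: list[str] = field(default_factory=list)
    banned_phrases: list[str] = field(default_factory=list)
    max_sentence_words: int = 25  # assumed guideline, adjust to your own
    example_sentences: list[str] = field(default_factory=list)


def voice_instructions(voice: BrandVoice) -> str:
    """Render the guidelines as explicit instructions to prepend to a prompt."""
    lines = [f"Write in this tone: {voice.tone}."]
    if voice.preferred_terms:
        lines.append("Prefer these terms: " + ", ".join(voice.preferred_terms) + ".")
    if voice.banned_phrases:
        quoted = ", ".join(f'"{p}"' for p in voice.banned_phrases)
        lines.append(f"Never use these phrases: {quoted}.")
    lines.append(f"Keep most sentences under {voice.max_sentence_words} words.")
    for example in voice.example_sentences:
        lines.append(f'Example of the desired voice: "{example}"')
    return "\n".join(lines)


# Sample values are illustrative only.
voice = BrandVoice(
    tone="direct language that respects the reader's technical competence",
    banned_phrases=["in today's digital landscape", "innovative solutions"],
    example_sentences=["Most onboarding emails fail because they bury the next step."],
)
print(voice_instructions(voice))
```

Keeping the guidelines in one place means a refinement to a banned phrase or tone note reaches every prompt that uses them.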

Read the Expert AI Prompts guide on how to train AI to write in your brand voice before building your prompt library — it covers the exact documentation structure that eliminates generic outputs.

Test your brand voice prompts with multiple AI generations to identify gaps. When outputs consistently miss the mark on specific elements, refine your prompt instructions rather than repeatedly fixing the same issues during editing. This upfront investment creates a reusable quality control foundation.

Mid-Generation Checkpoints: Structure and Tone Validation

Don't wait until you have a complete draft to assess quality. Strategic checkpoints during generation help you course-correct before investing time in content that requires extensive revision. For longer pieces, review after each major section to ensure you're maintaining voice consistency.

Structure validation focuses on logical flow, strategic progression, and adherence to your intended hierarchy. Check whether each section delivers on its promised topic, whether transitions feel natural, and whether the content builds toward a clear conclusion. Structural problems compound as content develops—catching them early saves significant time.

Tone validation requires reading sections aloud to detect rhythm issues, unnatural phrasing, and emotional disconnect. Your ears catch problems your eyes miss. Listen for the specific voice patterns you've documented in your brand guidelines. If a section doesn't sound like something you'd say in a client conversation, flag it immediately.

Use these checkpoints to adjust your prompts for subsequent sections. If the AI is drifting toward generic language or losing your strategic focus, refine your instructions before generating more content. This iterative approach prevents the need for wholesale rewrites.

Post-Generation Refinement: Human Polish Strategies

Even with excellent pre-generation setup and mid-generation checkpoints, human refinement remains essential. This isn't about rewriting AI content—it's about strategic polish that elevates good outputs to excellent. Focus your human effort where it delivers maximum impact.

Start with strategic review: Does the content advance your business positioning? Does it provide insights competitors can't easily replicate? Does it demonstrate expertise that builds client confidence? If the content feels like something any company could publish, identify what specific elements need strengthening.

Layer in personality and perspective. AI tends toward neutral presentation; humans inject strategic opinions, specific examples from experience, and nuanced positions on industry debates. These additions transform generic information into thought leadership that differentiates your brand.

Final refinement targets sentence-level issues: varying rhythm, eliminating hedge words, sharpening transitions, and ensuring every paragraph earns its place. This polish phase should require 20 to 30 minutes for a 1,500-word piece, not hours of rewriting. If you're investing more time, your pre-generation and mid-generation processes need refinement.

Five Tactical Quality Control Techniques

Implementing quality control requires specific techniques beyond general principles. These five tactical approaches address the most common AI content quality issues that damage brand perception. Master these techniques to systematically eliminate robotic characteristics from your outputs.

1. Voice Consistency Testing

Create a voice consistency checklist drawn from your brand voice documentation. After generating content, review it against specific criteria: sentence length distribution, terminology choices, tone markers, and strategic messaging alignment. Score each element to identify patterns in where AI outputs diverge from your brand.

This systematic approach prevents subjective editing where different reviewers apply inconsistent standards. Your checklist becomes a shared quality benchmark that maintains consistency across all content, regardless of who's managing the AI process. Track scores over time to measure improvement as you refine your prompts.
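A checklist like this can be as simple as a handful of scored criteria per piece. The sketch below assumes a 1-to-5 scale and four criterion names taken from the list above; both the scale and the names are assumptions for illustration, so substitute whatever your own checklist uses.

```python
from statistics import mean

# Hypothetical criteria, named after the checklist items described above.
CRITERIA = ("sentence_length", "terminology", "tone_markers", "messaging_alignment")


def score_review(scores: dict[str, int]) -> float:
    """Average the 1-5 scores for one piece; every criterion must be scored."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    return mean(scores[c] for c in CRITERIA)


def weakest_criterion(reviews: list[dict[str, int]]) -> str:
    """Return the criterion with the lowest average across all reviews."""
    averages = {c: mean(r[c] for r in reviews) for c in CRITERIA}
    return min(averages, key=averages.get)


# Made-up scores for two reviewed drafts.
reviews = [
    {"sentence_length": 4, "terminology": 2, "tone_markers": 3, "messaging_alignment": 4},
    {"sentence_length": 5, "terminology": 3, "tone_markers": 4, "messaging_alignment": 4},
]
print([score_review(r) for r in reviews])
print(weakest_criterion(reviews))
```

The weakest criterion across several reviews is usually the best candidate for the next prompt refinement.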

To accelerate this process, download the free Generic-to-Expert AI Content Audit Checklist — a ready-made scoring tool you can apply to any AI output immediately.

Pay particular attention to openings and conclusions—areas where AI often defaults to generic phrasing. Your first paragraph should immediately establish your brand's perspective and tone. Your conclusion should reflect strategic positioning, not summarize obvious points. These high-visibility sections disproportionately impact reader perception.

2. Emotional Resonance Verification

Robotic content lacks emotional connection because AI doesn't experience the frustrations, victories, and anxieties your customers face. During quality review, identify moments where genuine empathy should appear but feels manufactured. These gaps are opportunities to inject authentic understanding.

Test emotional resonance by asking: Would this content reassure a frustrated customer? Would it motivate someone facing the challenges you're addressing? Would it build confidence in your expertise? If the emotional impact feels flat, you need specific examples, stronger positioning, or more direct acknowledgment of customer pain points.

Avoid the AI tendency toward excessive positivity that dismisses real challenges. Customers trust expertise that acknowledges difficulties while providing strategic paths forward. Balance encouragement with realism, demonstrating you understand both the obstacles and the solutions.

3. Industry-Specific Language Validation

Generic AI outputs use broad business terminology that applies to any industry. Quality content incorporates specific language that signals insider expertise. Review your content for the ratio of generic to specific terms. If "digital landscape" and "innovative solutions" appear more frequently than your industry's actual terminology, you have a quality problem.
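A rough version of this check can be automated with two phrase lists. The sketch below is only an illustration: both lists are assumptions (the industry terms imagine a real estate business), and a plain phrase count will miss synonyms and context.

```python
import re

# Both lists are illustrative assumptions; replace them with your own.
GENERIC_PHRASES = ["digital landscape", "innovative solutions", "cutting-edge", "game-changer"]
INDUSTRY_TERMS = ["escrow", "title search", "closing costs", "earnest money"]


def count_phrases(text: str, phrases: list[str]) -> int:
    """Count case-insensitive, whole-phrase occurrences of each phrase."""
    total = 0
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        total += len(re.findall(pattern, text, flags=re.IGNORECASE))
    return total


def generic_share(text: str) -> float:
    """Fraction of flagged phrases that are generic (0.0 when none are found)."""
    generic = count_phrases(text, GENERIC_PHRASES)
    specific = count_phrases(text, INDUSTRY_TERMS)
    flagged = generic + specific
    return generic / flagged if flagged else 0.0


draft = (
    "In today's digital landscape, buyers need innovative solutions. "
    "We explain closing costs before you sign."
)
print(generic_share(draft))
```

A share above one half means generic phrases outnumber industry terms, which is the warning sign described above.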

Industry-specific language isn't jargon for jargon's sake—it's efficient communication that demonstrates competence. Your target audience already understands these terms; using them appropriately builds credibility. However, balance specificity with clarity, avoiding unnecessarily obscure terminology that serves only to impress.

Test whether someone outside your industry could replicate your content by simply swapping industry names. If yes, you haven't provided the specific insights that differentiate expertise from surface knowledge. Add concrete examples, specific frameworks, and nuanced perspectives unique to your experience.

4. Sentence Rhythm and Variation Analysis

AI tends toward predictable sentence structures: medium-length sentences with consistent complexity that create a numbing rhythm. Human writers naturally vary length, alternate between simple and complex constructions, and create intentional rhythm that maintains reader engagement.

Analyze your sentence structure by counting words per sentence across paragraphs. Look for patterns: clusters of similar-length sentences, excessive use of compound structures, or monotonous subject-verb-object patterns. Break the pattern deliberately with short, direct statements followed by more complex exploration.
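The word count can be scripted so you only read closely where the numbers look flat. The sketch below is a minimal illustration under stated assumptions: it splits sentences naively on end punctuation (so abbreviations such as "e.g." will be miscounted), and the `tolerance` and `min_run` thresholds are arbitrary starting points, not recommendations from any standard.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? followed by whitespace and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def monotone_runs(lengths: list[int], tolerance: int = 3, min_run: int = 3) -> list[tuple[int, int]]:
    """Find clusters of consecutive sentences with similar lengths.

    A run continues while each sentence is within `tolerance` words of the
    previous one. Returns (start_index, run_length) for runs of at least
    `min_run` sentences.
    """
    runs = []
    start = 0
    for i in range(1, len(lengths) + 1):
        run_ends = i == len(lengths) or abs(lengths[i] - lengths[i - 1]) > tolerance
        if run_ends:
            if i - start >= min_run:
                runs.append((start, i - start))
            start = i
    return runs


draft = (
    "Our team reviews every draft before it goes out. "
    "Each review follows the same checklist every time. "
    "The checklist covers tone, terms, and structure. "
    "It works. "
    "When a draft fails, we fix the prompt instead of patching the copy by hand."
)
lengths = sentence_lengths(draft)
print(lengths, mean(lengths), pstdev(lengths))
print(monotone_runs(lengths))
```

Each reported run marks a stretch worth breaking up with a short, direct statement.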

Read your content aloud at a natural speaking pace. Note where you stumble, where breath feels unnatural, and where rhythm disrupts rather than supports comprehension. These friction points reveal structural issues your eyes miss. Rhythm isn't decorative. It's a functional element that determines whether readers can maintain focus through your content.

5. Strategic Prompt Engineering for Natural Outputs

The most effective quality control happens before generation through strategic prompt design. Rather than generic "write an article about X," engineer prompts that preemptively prevent quality issues. Include specific instructions about what to avoid, examples of desired voice, and strategic parameters that guide output quality.

Effective prompts specify structural preferences: "Start with a specific scenario, not a generic statement" or "Include at least three concrete examples from the industry." They define voice parameters: "Use direct language that respects technical competence" or "Avoid hedging phrases like 'may help' or 'could improve'—state benefits directly."

Most importantly, strategic prompts include your unique perspective on the topic. Feed the AI your strategic position, specific insights, and differentiated approach before asking it to generate content. This input ensures outputs reflect your expertise rather than generic information anyone could research.

Refine prompts iteratively. When outputs consistently miss specific quality markers, adjust your prompt instructions rather than repeatedly editing the same issues. Build a library of proven prompts for different content types, creating reusable quality control infrastructure that compounds efficiency over time.
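A prompt library can start as a small set of templates keyed by content type, each with a slot for your own perspective. The sketch below is one possible shape, not a required one: the `PROMPT_LIBRARY` name, the two content types, and the template wording are assumptions that reuse the example instructions quoted above.

```python
from string import Template

# Hypothetical prompt library keyed by content type.
PROMPT_LIBRARY = {
    "blog_post": Template(
        "Our position on $topic: $perspective\n"
        "Write a blog post about $topic that argues this position.\n"
        "Start with a specific scenario, not a generic statement.\n"
        "Include at least three concrete examples from the industry.\n"
        "Avoid hedging phrases like 'may help' or 'could improve'."
    ),
    "email": Template(
        "Our position on $topic: $perspective\n"
        "Write a short email about $topic in direct language.\n"
        "State one benefit plainly and end with a single next step."
    ),
}


def build_prompt(content_type: str, topic: str, perspective: str) -> str:
    """Fill a library template, refusing to run without a stated perspective."""
    if not perspective.strip():
        raise ValueError("add your own perspective before generating content")
    template = PROMPT_LIBRARY[content_type]
    return template.substitute(topic=topic, perspective=perspective.strip())


print(build_prompt(
    "blog_post",
    "client onboarding",
    "Most onboarding fails in week one because nobody owns the first call.",
))
```

Rejecting an empty perspective is a deliberate choice here: it forces the step that keeps outputs from sounding like generic research.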

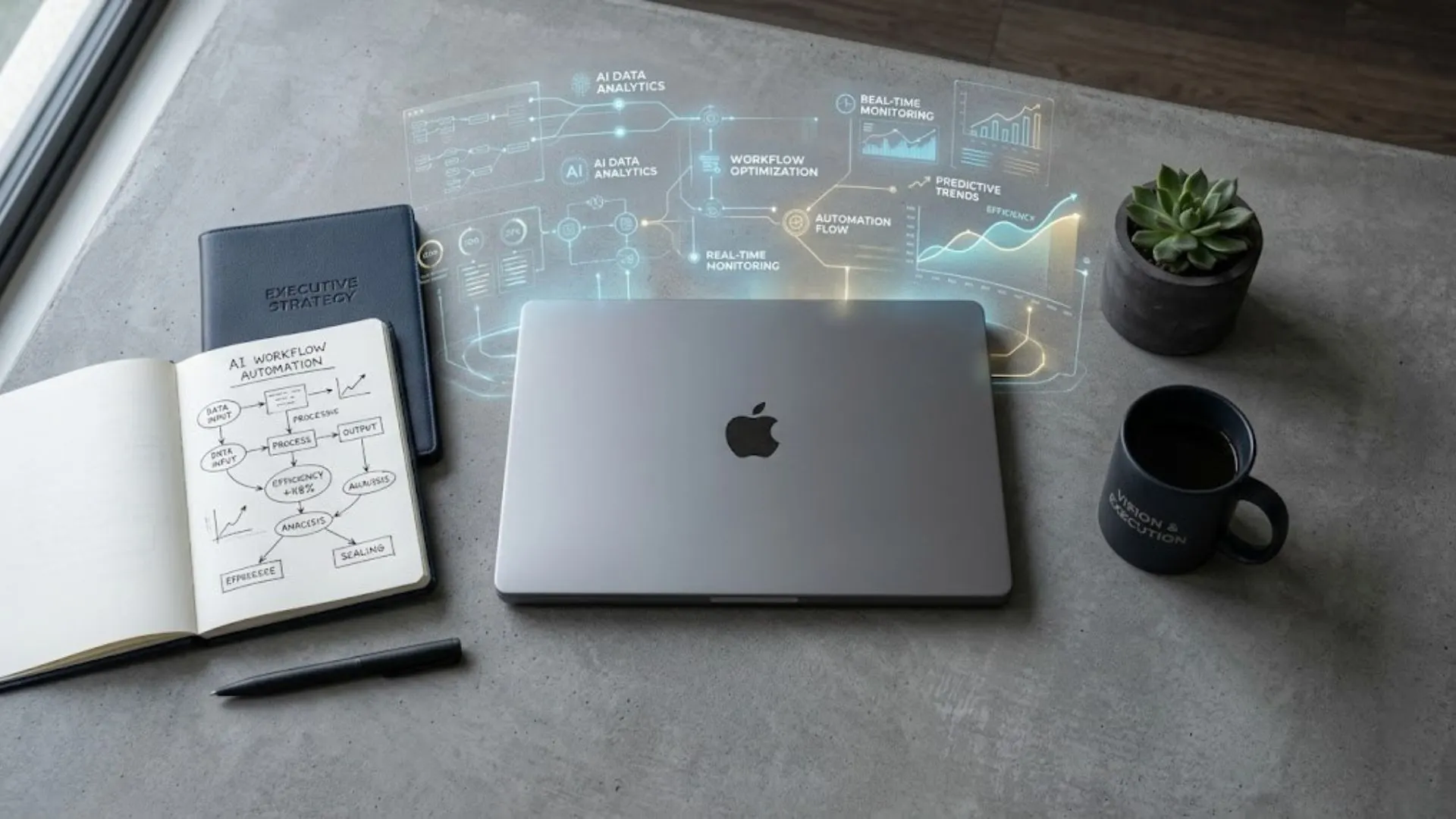

Building Your AI Quality Control System

Individual quality control techniques solve immediate problems, but sustainable success requires systematic infrastructure. Building a comprehensive quality control system transforms AI content generation from ad hoc experimentation into a reliable, scalable business capability that maintains brand integrity while delivering efficiency gains.

Creating Reusable Brand Voice Guidelines

Comprehensive brand voice documentation is your most valuable AI quality control asset. Go beyond basic tone descriptors to create a detailed playbook that covers industry-specific terminology, strategic messaging priorities, acceptable and unacceptable phrasing patterns, sentence structure preferences, and examples of on-brand vs. off-brand content.

Structure these guidelines for practical application, not as a theoretical document. Include quick-reference sections that address common AI quality issues: generic openings to avoid, transition phrases that align with your voice, conclusion frameworks that reinforce positioning, and industry-specific language to incorporate. Make this playbook your first reference during content creation.

The Prompt-Driven Branding Playbook for Brand Managers delivers a ready-built brand voice framework with 50 prompts engineered for consistent, on-brand AI outputs — eliminating the need to build your guidelines from scratch.

Update guidelines based on quality control experience. When you identify new patterns of AI outputs that miss your brand voice, document the issue and solution. This living document becomes increasingly valuable as it accumulates specific insights from your quality control practice.

Establishing Review Workflows

Ad hoc quality review creates inconsistent results and inefficient resource use. Establish clear workflows that define who reviews what, when checkpoints occur, and what standards guide decisions. This systematization ensures consistent quality regardless of time pressure or team member availability.

Define review stages: initial AI output assessment, strategic content validation, voice consistency check, and final polish. Assign appropriate expertise to each stage—strategic validation requires different skills than copy editing. Not every piece needs identical scrutiny; establish criteria for fast-track versus comprehensive review based on content importance and audience.
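Writing the fast-track criteria down as a rule keeps the decision from being remade for every piece. The sketch below is an assumption-heavy illustration: the `ContentPiece` fields, the "prospects" audience label, and the choice of which stages a fast-track review keeps are all hypothetical and should be replaced with your own criteria.

```python
from dataclasses import dataclass

# The four stages named above, in order.
REVIEW_STAGES = [
    "initial AI output assessment",
    "strategic content validation",
    "voice consistency check",
    "final polish",
]
# Assumption: a fast-track review keeps only these two stages.
FAST_TRACK_STAGES = ["initial AI output assessment", "voice consistency check"]


@dataclass
class ContentPiece:
    title: str
    audience: str      # hypothetical labels, e.g. "prospects" or "internal"
    high_stakes: bool  # e.g. a flagship article or sales page


def review_stages(piece: ContentPiece) -> list[str]:
    """Use comprehensive review for high-stakes or prospect-facing content."""
    if piece.high_stakes or piece.audience == "prospects":
        return list(REVIEW_STAGES)
    return list(FAST_TRACK_STAGES)


print(review_stages(ContentPiece("Pricing page", "prospects", False)))
print(review_stages(ContentPiece("Team update", "internal", False)))
```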

Document decision frameworks for common quality trade-offs. When do you accept "good enough" to maintain publishing velocity versus investing additional polish time? What quality issues are non-negotiable versus nice-to-have improvements? Clear frameworks eliminate decision fatigue and ensure team alignment on quality standards.

Training Your Team on AI Quality Standards

If multiple team members generate AI content, inconsistent quality control standards create brand inconsistency. Invest in training that aligns everyone on quality expectations, common AI pitfalls, brand voice application, and effective prompt engineering techniques. This upfront investment prevents costly quality problems.

Use real examples from your content creation process—both successful outputs and problematic ones that required significant revision. Discuss what specific elements work, why certain approaches fail, and how to recognize quality issues during generation rather than after completion. Practical pattern recognition is more valuable than theoretical principles.

Create shared resources: prompt templates, quality checklists, brand voice references, and decision frameworks. Make these easily accessible during content creation workflow. The easier you make it to do quality control correctly, the more consistently your team will maintain standards even under time pressure.

Measuring Quality Improvements Over Time

You can't improve what you don't measure. Establish metrics that track AI content quality over time: percentage of outputs requiring extensive revision, average editing time per piece, voice consistency scores from your quality checklist, and customer engagement metrics that indicate content resonance.
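The first three metrics can be computed from a simple log of review records. The sketch below assumes a hypothetical `ReviewRecord` with one entry per published piece and uses made-up sample data; customer engagement metrics are left out because they come from your analytics tool rather than the review process.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReviewRecord:
    """Hypothetical log entry for one reviewed piece."""
    title: str
    editing_minutes: float
    voice_score: float        # from your quality checklist, on your own scale
    extensive_revision: bool  # True if the draft needed a major rewrite


def summarize(records: list[ReviewRecord]) -> dict[str, float]:
    """Compute the quality metrics for a batch of review records."""
    if not records:
        raise ValueError("no review records to summarize")
    revised = sum(r.extensive_revision for r in records)
    return {
        "pct_extensive_revision": 100 * revised / len(records),
        "avg_editing_minutes": mean(r.editing_minutes for r in records),
        "avg_voice_score": mean(r.voice_score for r in records),
    }


# Made-up sample data for illustration.
records = [
    ReviewRecord("Onboarding guide", 25, 4.0, False),
    ReviewRecord("Pricing explainer", 60, 2.5, True),
    ReviewRecord("Case study", 35, 3.5, False),
    ReviewRecord("Newsletter", 40, 4.0, False),
]
print(summarize(records))
```

Running the same summary each month, or per team member or content type, gives you the trend lines to compare.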

Track these metrics at individual, team, and organizational levels. Identify patterns: Are certain content types consistently problematic? Do specific team members need additional training? Are particular prompt templates producing better quality outputs? Data-driven insights guide targeted improvements rather than general quality complaints.

Celebrate progress. As your prompt engineering improves and your team develops expertise, you should see measurable reductions in revision time and increases in content quality scores. Document these wins to build organizational confidence in AI adoption and justify continued investment in quality control infrastructure.

Conclusion: Quality Control as Competitive Advantage

The businesses that win with AI aren't the ones generating the most content—they're the ones generating the highest quality content at scale. Strategic quality control transforms AI from a commodity tool anyone can access into a competitive advantage that differentiates your brand from competitors publishing generic outputs.

Your quality control investment pays dividends beyond individual content pieces. As you refine prompts, establish systems, and build team expertise, the efficiency gap between you and competitors widens. You're producing expert-level content that builds credibility while competitors are still wrestling with robotic outputs that damage trust.

The path from AI skepticism to confident adoption runs through quality control. When you know your systems consistently produce authentic, strategic content that reflects your brand voice, fear transforms into competitive advantage. You're not hoping AI will work—you're systematically ensuring it does.

Not sure where to start? Explore all free AI resources — prompts, templates, and checklists that get you producing human-quality AI content today, at no cost.

When you're ready to go further, browse the full Expert AI Prompts pricing catalog — 30 industry-specific prompt packs built to eliminate generic outputs and deliver expert-level results from day one.

Ready to build quality control directly into your AI content process? Our Competitor-Proof Brand Voice Kit provides the frameworks, templates, and strategic guidance to ensure every AI-generated piece sounds authentically yours. Download it now:

https://expertaiprompts.com/lead-magnet-competitor-proof-brand-voice-kit

Further Reading

• 5 Signs Your AI Content Writer Is Hurting Your Brand Authority

• How to Train AI to Write in Your Brand Voice